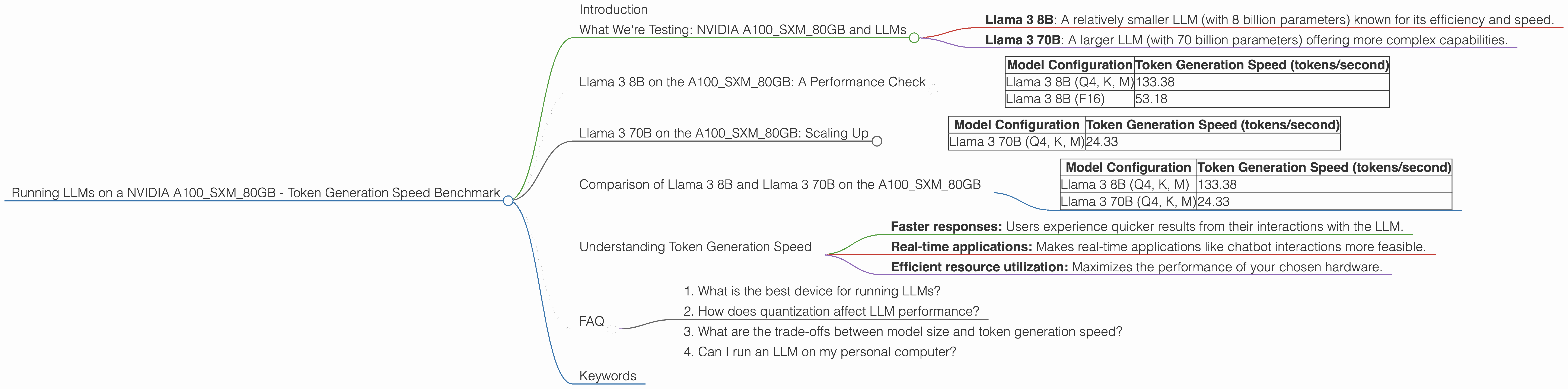

Running LLMs on an NVIDIA A100 SXM 80GB: Token Generation Speed Benchmark

Introduction

In the bustling world of large language models (LLMs), speed is king. These powerful AI models can generate text, translate languages, summarize information, and more, but their real-world applications hinge on how quickly they can process information. This is where the hardware powering those models comes into play.

This article dives into the performance of one of the most powerful GPUs available, the NVIDIA A100 SXM 80GB, when running a selection of popular LLMs. We'll test token generation speeds, which directly impact the responsiveness of your LLM application. If you're a developer or just curious about the computational prowess behind LLMs, buckle up!

What We're Testing: NVIDIA A100 SXM 80GB and LLMs

For our benchmark, we're focusing on the NVIDIA A100 SXM 80GB, a powerhouse GPU designed for demanding AI workloads. This GPU offers 80 GB of high-bandwidth memory, massively parallel processing, and Tensor Cores that accelerate matrix math, making it a strong choice for running large-scale LLMs.

We'll test it with two popular LLMs:

- Llama 3 8B: A relatively small LLM (with 8 billion parameters) known for its efficiency and speed.

- Llama 3 70B: A larger LLM (with 70 billion parameters) offering more complex capabilities.

Let's delve into the performance of each model on the A100 SXM 80GB.

Llama 3 8B on the A100 SXM 80GB: A Performance Check

Llama 3 8B is a great starting point for testing token generation speed. This LLM is relatively lightweight, making it ideal for experimentation and development.

Here are the results for Llama 3 8B on the A100 SXM 80GB:

| Model Configuration | Token Generation Speed (tokens/second) |
|---|---|
| Llama 3 8B (Q4_K_M) | 133.38 |
| Llama 3 8B (F16) | 53.18 |

Let's break down what these numbers mean:

- Q4_K_M: This configuration uses quantization. Quantization is like a "diet" for LLMs: it reduces the precision of the model's weights, making the model smaller and faster at some cost in accuracy. Q4 stands for 4-bit quantization, a commonly used setting for LLMs. In llama.cpp's naming scheme, "K" marks the k-quant family of formats and "M" marks the medium-size variant within that family.

- F16: The model runs with half-precision (16-bit) floating-point numbers. This is a common setting that leaves the weights unquantized, so it preserves accuracy but needs more memory and generates tokens more slowly than the 4-bit configuration.
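To make the "diet" idea concrete, here is a minimal sketch of symmetric 4-bit quantization applied to one small block of weights. It is a simplified illustration under stated assumptions, not the actual scheme behind the 4-bit configuration benchmarked here; the function names and the sample weights are made up for the example.

```python
# Toy symmetric 4-bit quantization of one block of weights.
# Assumption: a simplified illustration, not the benchmarked quantization format.

def quantize_4bit(weights):
    """Map floats to integer codes in [-8, 7] plus one shared scale factor."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    # Round to the nearest code and clamp to the signed 4-bit range.
    codes = [max(-8, min(7, round(w / scale))) for w in weights]
    return codes, scale


def dequantize(codes, scale):
    """Recover approximate floats from the 4-bit codes."""
    return [c * scale for c in codes]


weights = [0.12, -0.53, 0.98, -0.05, 0.44]  # made-up example values
codes, scale = quantize_4bit(weights)
restored = dequantize(codes, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("codes:", codes)
print(f"largest round-trip error: {max_error:.4f}")
```

Each weight now needs only 4 bits plus a share of the block's scale factor, and the round-trip error is bounded by half the scale. That bounded error is the accuracy cost that quantization trades for size and speed.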

Observations:

- The Q4_K_M configuration is the clear winner: at 133.38 tokens/second it generates tokens about 2.5 times as fast as the F16 configuration. This is a typical outcome, as quantization can substantially boost LLM generation speed.

- The F16 configuration, while slower, still delivers 53.18 tokens/second.

Think of it this way: The Q4_K_M configuration is like a speeding race car, while the F16 version is a more comfortable and reliable sedan. Both get you to your destination, but with different levels of speed and smoothness.

Llama 3 70B on the A100 SXM 80GB: Scaling Up

Let's jump to a larger LLM: Llama 3 70B. This is a heavy-hitter with a vast model size and more advanced linguistic capabilities. Let's see how it performs on the A100 SXM 80GB:

| Model Configuration | Token Generation Speed (tokens/second) |
|---|---|
| Llama 3 70B (Q4_K_M) | 24.33 |

Key Takeaways:

- With its larger size, Llama 3 70B achieves a considerably lower token generation speed than Llama 3 8B, even with the optimized Q4_K_M configuration. This is expected: with roughly nine times as many parameters, every generated token requires reading and computing over far more weights.

Think of it like this: Imagine you're trying to drive a compact car (Llama 3 8B) and then a large SUV (Llama 3 70B) on the same road. The SUV will be slower because it's much heavier and requires more power to move.
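A rough back-of-the-envelope calculation shows how much heavier the larger model is in memory terms. The sketch below assumes rounded parameter counts (8 billion and 70 billion), counts weights only, and treats 4-bit quantization as exactly 4 bits per weight. Real 4-bit formats also store scale metadata and so use somewhat more, and a running model additionally needs memory for its cache and activations.

```python
# Approximate weight storage for each model at two precisions.
# Assumptions: rounded parameter counts, weights only, nominal bit widths.

def weight_memory_gb(num_params, bits_per_weight):
    """Approximate weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


GPU_MEMORY_GB = 80  # memory capacity of the A100 SXM 80GB

for name, params in [("Llama 3 8B", 8e9), ("Llama 3 70B", 70e9)]:
    for label, bits in [("16-bit", 16), ("nominal 4-bit", 4)]:
        size = weight_memory_gb(params, bits)
        verdict = "fits" if size < GPU_MEMORY_GB else "does not fit"
        print(f"{name} at {label}: {size:.0f} GB of weights, "
              f"{verdict} in {GPU_MEMORY_GB} GB")
```

Under these assumptions the 70B model's weights come to about 140 GB at 16 bits, more than a single 80 GB card holds, and about 35 GB at a nominal 4 bits.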

Comparison of Llama 3 8B and Llama 3 70B on the A100 SXM 80GB

To see the difference in a more direct way, let's compare the token generation speeds of the two LLMs side-by-side:

| Model Configuration | Token Generation Speed (tokens/second) |
|---|---|
| Llama 3 8B (Q4_K_M) | 133.38 |
| Llama 3 70B (Q4_K_M) | 24.33 |

Observations:

- Llama 3 8B generates tokens about 5.5 times as fast as Llama 3 70B (133.38 versus 24.33 tokens/second). This significant difference highlights the impact of model size on performance.

Remember: While the A100 SXM 80GB is a powerhouse, it still faces limitations when dealing with massive LLMs. The larger the model, the more resources it needs, leading to slower generation speeds.
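The ratios behind these comparisons can be checked directly from the measured speeds. The snippet below is a small sketch that uses only the numbers reported in the tables; the dictionary keys and the `speedup` helper are illustrative names, not part of any benchmark tool.

```python
# Measured token generation speeds from the tables (tokens/second).
results = {
    "8B 4-bit": 133.38,
    "8B F16": 53.18,
    "70B 4-bit": 24.33,
}


def speedup(faster, slower):
    """Return how many times faster one configuration is than another."""
    return results[faster] / results[slower]


print(f"8B 4-bit vs 8B F16:    {speedup('8B 4-bit', '8B F16'):.2f}x")     # 2.51x
print(f"8B 4-bit vs 70B 4-bit: {speedup('8B 4-bit', '70B 4-bit'):.2f}x")  # 5.48x
```

Note that the model is about nine times larger while the slowdown is about 5.5 times, so on these figures generation speed does not fall in exact proportion to parameter count.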

Understanding Token Generation Speed

Token generation speed measures how many output tokens an LLM produces per second. It's a crucial factor in determining the responsiveness and user experience of your LLM applications.

Think of tokens as the building blocks of text: a token can be a whole word, a fragment of a word, a punctuation mark, or whitespace. The more tokens a model can generate per second, the faster it can write text, translate languages, or perform other tasks.
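As a rough sketch of how such a measurement could be taken, the function below counts the tokens coming out of a stream and divides by the elapsed wall-clock time. It is not the harness used for the numbers in this article; `fake_model` is a hypothetical stand-in for a real streaming LLM, and the `clock` parameter exists only so the timing source can be swapped out.

```python
import time
from typing import Callable, Iterable, Iterator


def tokens_per_second(token_stream: Iterable[str],
                      clock: Callable[[], float] = time.perf_counter) -> float:
    """Consume a token stream and return tokens generated per second."""
    start = clock()
    count = sum(1 for _ in token_stream)  # consuming the stream drives generation
    elapsed = clock() - start
    if elapsed <= 0:
        raise ValueError("elapsed time must be positive")
    return count / elapsed


def fake_model(n_tokens: int, delay_s: float = 0.001) -> Iterator[str]:
    """Hypothetical stand-in for a streaming LLM: one token per delay."""
    for i in range(n_tokens):
        time.sleep(delay_s)
        yield f"tok{i}"


rate = tokens_per_second(fake_model(50))
print(f"{rate:.1f} tokens/second")
```

With a real model you would pass its streaming output in place of `fake_model(50)` and, ideally, average over several runs.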

High token generation speed benefits:

- Faster responses: Users experience quicker results from their interactions with the LLM.

- Real-time applications: Makes real-time applications like chatbot interactions more feasible.

- Efficient resource utilization: Maximizes the performance of your chosen hardware.
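To see what these speeds mean for a user waiting on a reply, here is a small sketch that converts tokens per second into generation time. The 500-token reply length is an assumption chosen for illustration, and the estimate covers token generation only, not the time spent processing the prompt.

```python
def generation_time_s(num_tokens, tokens_per_second):
    """Estimate seconds needed to generate num_tokens at a steady speed."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return num_tokens / tokens_per_second


REPLY_TOKENS = 500  # assumed reply length, chosen for illustration

# Speeds are the measured values reported in this article (tokens/second).
measured = [
    ("Llama 3 8B, 4-bit", 133.38),
    ("Llama 3 8B, F16", 53.18),
    ("Llama 3 70B, 4-bit", 24.33),
]

for label, speed in measured:
    seconds = generation_time_s(REPLY_TOKENS, speed)
    print(f"{label}: {seconds:.1f} s for {REPLY_TOKENS} tokens")
```

At the measured speeds, a reply of this length takes under 4 seconds on the quantized 8B model and more than 20 seconds on the quantized 70B model.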

FAQ

1. What is the best device for running LLMs?

The "best" device depends on your specific needs. For smaller LLMs like Llama 3 8B, even a powerful CPU might suffice. However, for larger models, a GPU like the A100 SXM 80GB is often necessary for optimal performance.

2. How does quantization affect LLM performance?

Quantization reduces the size of the LLM's weights, making them easier to store and faster to process. This leads to improved token generation speed but can slightly impact accuracy.

3. What are the trade-offs between model size and token generation speed?

Larger LLMs offer more capabilities but come with the cost of slower token generation speed. Smaller LLMs, while less powerful, often deliver faster performance.

4. Can I run an LLM on my personal computer?

Running LLMs locally on your computer is possible, especially for smaller LLMs. However, high-performance GPUs and sufficient RAM are essential for larger models.

Keywords

NVIDIA A100 SXM 80GB, LLM, Llama 3, 8B, 70B, token generation speed, GPU, performance, benchmark, quantization, F16, Q4_K_M, speed, resources, model size, trade-offs.