Running LLMs on an NVIDIA A100 PCIe 80GB: Token Generation Speed Benchmark

Introduction

The world of large language models (LLMs) is exploding, with incredible new models being released at a breakneck pace. These LLMs are capable of generating text, translating languages, writing different kinds of creative content, and answering your questions in an informative way. But running LLMs on your local machine can be a real challenge, especially for models with billions of parameters.

That's where high-performance GPUs come in. The NVIDIA A100 is a powerhouse of a GPU, known for its incredible processing power. In this article, we'll dive into the performance of running LLMs on an NVIDIA A100 PCIe 80GB and see just how fast we can generate tokens, the building blocks that make up language.

We'll be focusing on the Llama family of LLMs, which are a popular choice for local deployment. Specifically, we'll be looking at the Llama 3 8B and 70B models in quantized (Q4_K_M) and half-precision (F16) formats. Buckle up, it's about to get geeky!

A100 PCIe 80GB: A GPU Powerhouse

The NVIDIA A100 PCIe 80GB is a high-performance GPU designed for demanding workloads like AI and deep learning. It boasts a whopping 80GB of HBM2e memory, leaving ample room for large models and their billions of parameters. Think of it as super-sized RAM for your GPU.

The A100's architecture is designed to handle matrix multiplications, which are the backbone of deep learning, with dazzling speed. If you're a gamer, imagine this GPU as a super-powered graphics card, capable of rendering worlds so detailed and realistic that they'd make your jaw drop. But instead of pixels, it's processing language models, creating a whole new level of linguistic magic.

Token Generation Speed: The Race is On!

Let's get to the heart of the matter – how fast can this GPU crank out tokens? Think of tokens as the building blocks of language, like words or parts of words. The faster the GPU can generate tokens, the snappier and more responsive your LLM interaction will be.

Llama 3 8B: A Balanced Performer

We'll start with the Llama 3 8B model, a good starting point for many users due to its balance between size and performance. The results below cover two formats: Q4_K_M and F16.

- Q4_K_M quantization stores each weight in roughly 4 bits, so the model takes far less memory and tokens can be generated faster, at the cost of a little accuracy. Think of it like compressing a photo to save space: you might lose a bit of detail but you get a lighter file.

- F16 (half-precision floating point) stores each weight in 16 bits. It's the more traditional approach, offering higher accuracy but a larger memory footprint and typically slower token generation.

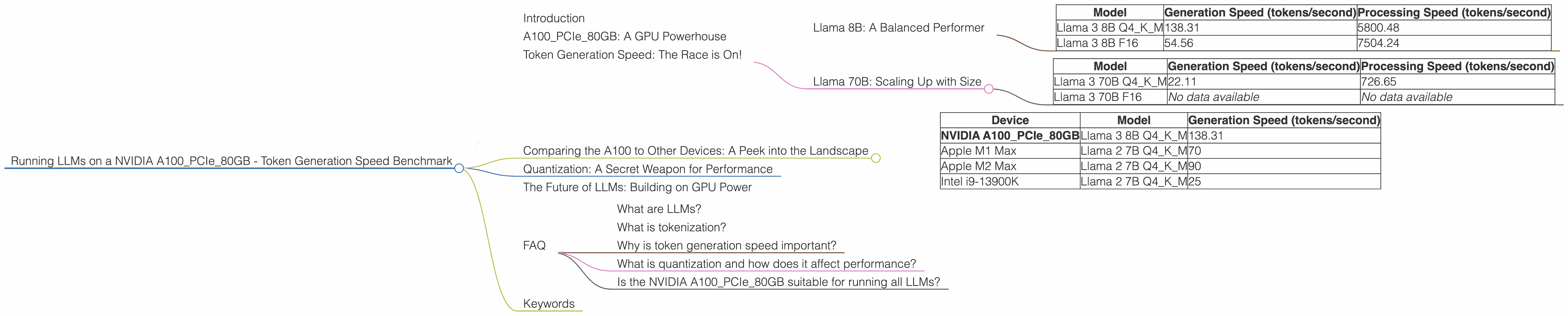

| Model | Generation Speed (tokens/second) | Processing Speed (tokens/second) |
|---|---|---|
| Llama 3 8B Q4_K_M | 138.31 | 5800.48 |
| Llama 3 8B F16 | 54.56 | 7504.24 |

As you can see, the Llama 3 8B Q4_K_M model shines when it comes to token generation speed, cranking out over 138 tokens every second. That's about 2.5 times faster than the F16 version, which comes in at roughly 55 tokens per second. However, the F16 version shows its strength during the processing stage (ingesting the prompt), achieving 7504.24 tokens/second compared to 5800.48 for Q4_K_M.

This split is likely due to how each stage stresses the hardware. Token generation tends to be limited by memory bandwidth, so the much smaller Q4_K_M weights can be read faster for every token. Prompt processing is more compute-bound, and there the quantized weights likely pay a dequantization cost that F16 avoids. On top of that, Q4_K_M gives up a little accuracy in exchange for its smaller size.
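To put numbers on the gap between the two formats, here's a minimal sketch that computes the ratios straight from the table above. The dictionary layout and the `ratio` helper are just illustrative names, not part of any benchmark tool.

```python
# Figures copied from the Llama 3 8B table above (tokens/second).
results = {
    "Q4_K_M": {"generation": 138.31, "processing": 5800.48},
    "F16": {"generation": 54.56, "processing": 7504.24},
}


def ratio(faster: float, slower: float) -> float:
    """Return how many times larger `faster` is than `slower`."""
    return faster / slower


# Generation: the quantized model is the faster one.
gen_speedup = ratio(results["Q4_K_M"]["generation"], results["F16"]["generation"])
# Processing: here F16 is the faster one.
proc_speedup = ratio(results["F16"]["processing"], results["Q4_K_M"]["processing"])

print(f"Generation: Q4_K_M is {gen_speedup:.2f}x faster than F16")
print(f"Processing: F16 is {proc_speedup:.2f}x faster than Q4_K_M")
```

The generation gap (about 2.5x) is much larger than the processing gap (about 1.3x), which is why quantization usually feels faster in interactive use.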

Llama 3 70B: Scaling Up with Size

Now, let's ramp things up with the Llama 3 70B model. This behemoth of a model truly pushes the limits of what's possible with LLMs. It's larger and more complex than the 8B model, which means it requires more processing power and memory.

| Model | Generation Speed (tokens/second) | Processing Speed (tokens/second) |
|---|---|---|
| Llama 3 70B Q4_K_M | 22.11 | 726.65 |
| Llama 3 70B F16 | No data available | No data available |

The performance numbers for the Llama 3 70B model reflect the challenges of scaling up to larger models. While still delivering respectable performance, it's clear that the larger size and complexity take a toll on token generation speed. The Q4_K_M model generates around 22 tokens per second, roughly six times slower than the 8B Q4_K_M model at 138.31.

Unfortunately, we don't have data on the F16 version of the Llama 3 70B model on the NVIDIA A100 PCIe 80GB. This is likely because of memory: at 2 bytes per parameter, the F16 weights alone come to roughly 140GB, well beyond the 80GB available on this GPU.
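The memory argument can be checked with some back-of-the-envelope arithmetic. The sketch below estimates the size of the weights alone, ignoring the KV cache and runtime overhead. The parameter counts are nominal (8 and 70 billion), and the 4.8 bits per weight used for Q4_K_M is an assumed average for illustration, not a measured figure.

```python
GPU_MEMORY_GB = 80  # A100 PCIe 80GB


def weight_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Approximate memory for the weights alone, in GB (10^9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


# Nominal parameter counts; real checkpoints differ slightly.
models = {"Llama 3 8B": 8e9, "Llama 3 70B": 70e9}

# F16 is exactly 16 bits per weight.
# ASSUMPTION: ~4.8 bits per weight on average for Q4_K_M.
formats = {"F16": 16.0, "Q4_K_M": 4.8}

for model, params in models.items():
    for fmt, bits in formats.items():
        gb = weight_memory_gb(params, bits)
        verdict = "fits" if gb < GPU_MEMORY_GB else "does not fit"
        print(f"{model} {fmt}: ~{gb:.0f} GB of weights, {verdict} in {GPU_MEMORY_GB} GB")
```

Under these assumptions, only the 70B F16 combination fails to fit, which matches the one gap in the benchmark table.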

Comparing the A100 to Other Devices: A Peek into the Landscape

While our focus is on the A100 PCIe 80GB, it's worth briefly comparing its token generation speed to other popular devices to get a broader perspective.

| Device | Model | Generation Speed (tokens/second) |
|---|---|---|
| NVIDIA A100 PCIe 80GB | Llama 3 8B Q4_K_M | 138.31 |
| Apple M1 Max | Llama 2 7B Q4_K_M | 70 |
| Apple M2 Max | Llama 2 7B Q4_K_M | 90 |
| Intel i9-13900K | Llama 2 7B Q4_K_M | 25 |

As you can see, the A100 PCIe 80GB running Llama 3 8B generates tokens significantly faster than the Apple M1 Max, M2 Max, and Intel i9-13900K running the slightly smaller Llama 2 7B. This demonstrates the sheer horsepower of the A100, especially when it comes to demanding AI tasks like LLM inference.

However, it's important to note that the Llama 2 models used for comparison are not the same as the Llama 3 models. Different model architectures can lead to varying performance on different hardware, so a direct comparison isn't always apples to apples.

Quantization: A Secret Weapon for Performance

We talked about quantization earlier, and it's worth diving a little deeper into what makes it so useful. Essentially, quantization is a technique that shrinks a model by storing its parameters at lower precision, while maintaining a reasonable level of accuracy.

Imagine you're trying to fit a bunch of toys into a small box. Quantization makes it possible to shrink down the size of each toy so you can fit more in the box. Of course, you might end up with slightly "smaller" toys, but they can still perform most of their functions.

The A100 PCIe 80GB is a powerhouse in its own right, but quantization helps unlock even more performance. The Llama 3 8B Q4_K_M model we saw earlier generates tokens about 2.5 times faster than its F16 counterpart, even though F16 keeps an edge in prompt processing. Quantization is also what lets the 70B model fit on this GPU at all.
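To make the idea concrete, here's a toy sketch of block-wise 4-bit quantization in plain Python. It is deliberately simplified and is not the actual Q4_K_M scheme: it assumes one shared scale per block of 32 weights and symmetric rounding, and the weights themselves are random made-up values.

```python
import random


def quantize_block(values, bits=4):
    """Symmetric quantization of one block: a float scale plus small integers."""
    qmax = 2 ** (bits - 1) - 1  # 7 for signed 4-bit values
    max_abs = max(abs(v) for v in values)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Round each weight to the nearest step and clamp to the 4-bit range.
    quants = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return scale, quants


def dequantize_block(scale, quants):
    """Recover approximate weights from the scale and the integers."""
    return [q * scale for q in quants]


random.seed(0)
# One hypothetical block of 32 weights.
weights = [random.gauss(0.0, 0.02) for _ in range(32)]

scale, quants = quantize_block(weights)
restored = dequantize_block(scale, quants)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

original_bits = 16 * len(weights)       # every weight stored as F16
quantized_bits = 4 * len(weights) + 16  # 4-bit integers plus one F16 scale

print(f"max round-trip error: {max_error:.5f} (scale = {scale:.5f})")
print(f"storage: {original_bits} bits -> {quantized_bits} bits")
```

Each restored weight lands within half a quantization step of the original, while the block shrinks from 512 bits to 144. That's the "slightly smaller toys" trade-off in numbers.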

The Future of LLMs: Building on GPU Power

The A100 PCIe 80GB is a formidable tool for running LLMs locally, but it's just a glimpse into the future of AI and GPUs. As LLMs continue to grow in size and complexity, even more powerful hardware will be needed to push the boundaries of what's possible.

And it isn't just about raw power. Techniques like quantization, along with advancements in software and algorithms, will continue to play a crucial role. These innovations will work in harmony with hardware to make LLMs accessible to a wider range of users, empowering them to explore the exciting possibilities of this transformative technology.

FAQ

What are LLMs?

LLMs are large language models, a type of artificial intelligence that has been trained on massive amounts of text data. They are capable of understanding and generating human-like text, making them incredibly versatile for tasks like writing, translating, and summarizing.

What is tokenization?

Tokenization is the process of breaking down text into smaller units called tokens. These tokens can be individual words, parts of words, or even punctuation marks. Think of it as chopping up a sentence into smaller, digestible chunks.
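Here's a toy illustration of the idea. Real LLM tokenizers, including Llama's, use learned subword vocabularies rather than a simple rule like this, so treat the regular expression below as a stand-in that only shows how a sentence falls apart into more pieces than it has words.

```python
import re


def toy_tokenize(text: str) -> list[str]:
    """Split text into runs of word characters and single punctuation marks."""
    return re.findall(r"\w+|[^\w\s]", text)


tokens = toy_tokenize("Tokens aren't always whole words!")
print(tokens)
print(len(tokens), "tokens")
```

The five-word sentence becomes eight tokens here, because the apostrophe and exclamation mark count on their own and "aren't" is split into three pieces.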

Why is token generation speed important?

Token generation speed is crucial for the performance of LLMs. The faster a GPU can generate tokens, the faster the LLM can process text and respond to your prompts. Imagine you're having a conversation with a friend. The faster they respond, the smoother the conversation flows.
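To see what these speeds mean in practice, the sketch below estimates how long a single reply would take using the figures from the benchmark tables. The prompt and reply lengths are hypothetical, and the estimate assumes both speeds stay constant regardless of context length, which is a simplification.

```python
def response_time_seconds(prompt_tokens, output_tokens, processing_tps, generation_tps):
    """Time to read the prompt plus time to generate the reply, in seconds."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


# (processing tokens/second, generation tokens/second) from the tables above.
speeds = {
    "Llama 3 8B Q4_K_M": (5800.48, 138.31),
    "Llama 3 8B F16": (7504.24, 54.56),
    "Llama 3 70B Q4_K_M": (726.65, 22.11),
}

# Hypothetical workload: a 1000-token prompt and a 500-token reply.
PROMPT_TOKENS = 1000
OUTPUT_TOKENS = 500

estimates = {
    name: response_time_seconds(PROMPT_TOKENS, OUTPUT_TOKENS, proc, gen)
    for name, (proc, gen) in speeds.items()
}

for name, seconds in estimates.items():
    print(f"{name}: ~{seconds:.1f} s")
```

Generation dominates the total in every case, so generation speed, not processing speed, is what decides how long you wait for a reply of this shape.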

What is quantization and how does it affect performance?

Quantization is a technique that reduces the size of LLM models by using lower precision numbers to represent parameters. While this can lead to a slight loss of accuracy, it often results in significant speed improvements, especially on GPUs.

Is the NVIDIA A100 PCIe 80GB suitable for running all LLMs?

The A100 PCIe 80GB is a powerful GPU, but even it has its limits. The 70B variant of Llama 3 ran here in Q4_K_M form at about 22 tokens per second, but we have no F16 results for it, most likely because the unquantized weights need more than 80GB. It's important to consider the size and memory requirements of your chosen LLM, in the format you plan to run it, before deciding on a GPU.

Keywords

LLMs, large language models, NVIDIA A100 PCIe 80GB, token generation speed, benchmark, Llama 3, Llama 8B, Llama 70B, Q4_K_M, F16, quantization, performance, GPU, deep learning, AI, natural language processing, NLP, GPU benchmarks, speed comparisons.