Running LLMs on 2x NVIDIA 4090 24GB: Token Generation Speed Benchmark

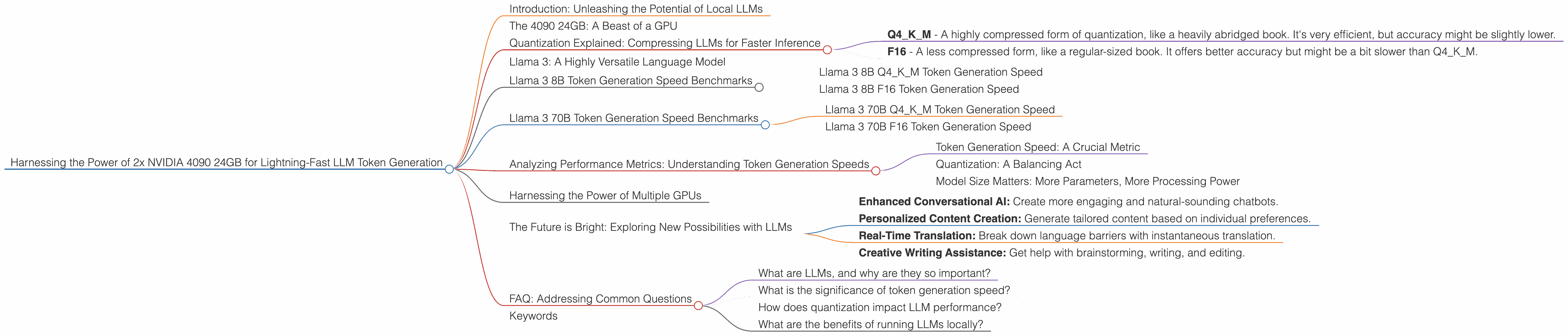

Introduction: Unleashing the Potential of Local LLMs

The world of large language models (LLMs) has exploded, captivating minds with their ability to generate human-like text, translate languages, and even write creative content. But these powerful LLMs often require access to massive computing resources, traditionally tied to cloud platforms. This limits accessibility and scalability, especially for projects with specific data privacy or latency requirements.

Enter the world of local LLMs. Running these models on your own hardware, like a powerful NVIDIA 4090, empowers you to unleash the potential of LLMs without relying on the cloud. This opens up exciting possibilities for developers, researchers, and enthusiasts to explore new applications and push the boundaries of AI.

In this article, we'll look at LLMs running on two NVIDIA 4090 24GB GPUs and compare token generation speeds across Llama 3 models. We'll unpack the impact of different quantization levels, highlight key performance metrics, and interpret those numbers in a way that's clear for everyone.

The 4090 24GB: A Beast of a GPU

The NVIDIA 4090 24GB is a titan in the world of GPUs, a powerhouse designed for demanding tasks like gaming, video editing, and of course, running LLMs locally. Each card carries 24GB of GDDR6X memory, so the two-card setup in this benchmark offers 48GB in total. That memory is what lets these models fit on the GPU at all, and the card's raw performance is what makes fast token generation possible.

Quantization Explained: Compressing LLMs for Faster Inference

Quantization is a technique used to shrink the size of LLMs while preserving most of their output quality. Imagine it as a tool that allows us to compress a massive library of knowledge (the LLM) into a smaller, more manageable book (the quantized model). Because each weight is stored in fewer bits, the model takes up less memory and the GPU has less data to read for every token, which makes inference faster. It comes with a trade-off: sometimes a bit of accuracy is lost in the process.

There are different levels of quantization, and we'll be focusing on two popular options:

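- Q4KM (written Q4_K_M in llama.cpp) - A 4-bit quantization format, like a heavily abridged book. It's very memory-efficient, but accuracy might be slightly lower.

- F16 - 16-bit floating-point weights, like the unabridged book. Strictly speaking, this is the half-precision baseline rather than a quantization. It preserves accuracy best, but it needs several times the memory of Q4KM and, in this benchmark, generates tokens at less than half the speed.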
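To make the idea of trading precision for size concrete, here is a minimal sketch of 4-bit quantization on a handful of weights. It is a toy symmetric scheme written for illustration only: the function names and sample weights are made up, and the real Q4KM format uses a more elaborate block layout than this.

```python
def quantize_block(values, bits=4):
    """Toy symmetric quantization: map floats to small signed integers.

    Illustrative only; this is NOT the actual Q4KM algorithm.
    """
    qmax = 2 ** (bits - 1) - 1              # 7 for 4-bit codes
    peak = max(abs(v) for v in values)
    scale = peak / qmax if peak else 1.0    # one shared scale per block
    codes = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return scale, codes


def dequantize_block(scale, codes):
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]


# Hypothetical sample weights, chosen only for the demonstration.
weights = [0.12, -0.53, 0.98, -0.07, 0.33, -0.81, 0.44, 0.02]
scale, codes = quantize_block(weights)
restored = dequantize_block(scale, codes)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("integer codes:", codes)
print(f"largest rounding error: {max_error:.3f}")
```

Each weight now needs only 4 bits plus a share of the block's scale, instead of 16 bits. The rounding error printed at the end is the accuracy cost described above.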

Llama 3: A Highly Versatile Language Model

Llama 3 is a family of LLMs known for its remarkable versatility and impressive performance. We'll be exploring the Llama 3 8B and 70B models in this benchmark, giving you a glimpse of their token generation speeds on the 2x 4090 24GB setup.

Llama 3 8B Token Generation Speed Benchmarks

This section focuses on the Llama 3 8B model, exploring its speed with various quantization methods.

Llama 3 8B Q4KM Token Generation Speed

This is where things get interesting! With the highly compressed Q4KM quantization, the 2x 4090 24GB setup generated 122.56 tokens per second.

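Think of it like this: a token is a chunk of text, often a short word or a piece of a longer one, so tokens and words are not quite the same thing. Even so, 122.56 tokens per second works out to roughly 7,354 tokens per minute, or more than 441,000 tokens per hour. That's far faster than anyone can read.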
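The per-minute and per-hour figures follow directly from the measured rate. The short sketch below reproduces that arithmetic and adds a rough word estimate. The `WORDS_PER_TOKEN` value is an assumption (a common rule of thumb for English text), not something measured in this benchmark, and the function name is only illustrative.

```python
WORDS_PER_TOKEN = 0.75  # assumption: rough rule of thumb for English text


def throughput_summary(tokens_per_second):
    """Scale a tokens-per-second rate to per-minute and per-hour figures."""
    per_minute = tokens_per_second * 60
    per_hour = tokens_per_second * 3600
    return {
        "tokens_per_minute": per_minute,
        "tokens_per_hour": per_hour,
        "approx_words_per_minute": per_minute * WORDS_PER_TOKEN,
    }


summary = throughput_summary(122.56)  # Llama 3 8B Q4KM result
for name, value in summary.items():
    print(f"{name}: {value:,.0f}")
```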

Llama 3 8B F16 Token Generation Speed

Here, the 2x 4090 24GB setup clocked in at 53.27 tokens per second. While slower than the Q4KM model, this still works out to about 3,200 tokens per minute, or roughly 192,000 tokens per hour. That's on the order of a novel's worth of text in a single hour!

Llama 3 70B Token Generation Speed Benchmarks

Now, let's see how the more expansive Llama 3 70B model performs!

Llama 3 70B Q4KM Token Generation Speed

Here, the 2x 4090 24GB setup generated 19.06 tokens per second. While significantly slower than the 8B model, it's still impressive, considering the larger model size.

Llama 3 70B F16 Token Generation Speed

Unfortunately, we lack data for the Llama 3 70B model at F16 precision, so we can't compare its performance with the Q4KM version.
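The article does not say why the F16 figure is missing, but a back-of-the-envelope memory estimate suggests one plausible reason. The sketch below counts only the model weights and ignores the KV cache and other runtime overhead. The 4.8 bits per weight used for Q4KM is an approximation assumed here, not a number from the benchmark.

```python
TOTAL_VRAM_GB = 2 * 24  # two 24GB cards


def weight_memory_gb(n_params_billion, bits_per_weight):
    """Approximate memory for the weights alone, in GB (10^9 bytes)."""
    total_bytes = n_params_billion * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


# Bits per weight: 16 for F16; ~4.8 for Q4KM is an assumed approximation.
for label, bits in [("70B F16", 16), ("70B Q4KM", 4.8)]:
    needed = weight_memory_gb(70, bits)
    verdict = "fits" if needed <= TOTAL_VRAM_GB else "does not fit"
    print(f"{label}: ~{needed:.0f} GB of weights, {verdict} in {TOTAL_VRAM_GB} GB")
```

Under these assumptions, the F16 weights alone need about 140 GB, well beyond the 48 GB available on two cards, while the Q4KM weights come in at roughly 42 GB.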

Analyzing Performance Metrics: Understanding Token Generation Speeds

Token Generation Speed: A Crucial Metric

Token generation speed, measured in tokens per second, is a critical metric when evaluating LLM performance. A higher token generation speed means the model produces its output faster, leading to quicker response times and a better user experience.
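As a rough illustration of how such a figure is obtained, the sketch below times a generator and divides the number of tokens produced by the elapsed time. `fake_generate` is a hypothetical stand-in for a real model, so the printed rate says nothing about actual hardware. It is not the tool used for the benchmark numbers in this article.

```python
import time


def measure_tokens_per_second(generate, n_tokens):
    """Time a token generator and return tokens produced per second."""
    start = time.perf_counter()
    produced = sum(1 for _ in generate(n_tokens))
    elapsed = time.perf_counter() - start
    return produced / elapsed


def fake_generate(n_tokens, delay=0.001):
    """Hypothetical stand-in for a model: yields one dummy token per step."""
    for i in range(n_tokens):
        time.sleep(delay)  # pretend each token takes about 1 ms to compute
        yield f"tok{i}"


rate = measure_tokens_per_second(fake_generate, 50)
print(f"{rate:.1f} tokens/s")
```

With a real model, `generate` would wrap the inference call, and the measurement would usually be repeated several times and averaged.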

Quantization: A Balancing Act

The 8B results show that quantization plays a significant role in token generation speed: Q4KM reached 122.56 tokens per second against 53.27 for F16, about 2.3 times faster. That speed might come at the cost of slightly reduced accuracy, while F16 keeps the model's full half-precision weights at a lower speed. Ultimately, the choice between the two depends on your specific project requirements and the desired balance between performance and accuracy.

Model Size Matters: More Parameters, More Processing Power

The 70B model, with its significantly larger size, understandably exhibits slower token generation than the 8B model: 19.06 tokens per second versus 122.56 with Q4KM, roughly 6.4 times slower. This highlights the crucial role of model size in influencing performance. Larger models generally require more resources and computing power to run.
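The relative differences can be checked with a few lines of arithmetic on the three measured results. The dictionary layout and variable names are just one convenient way to organize the numbers reported above.

```python
# Measured token generation speeds (tokens per second) from this benchmark.
results = {
    ("8B", "Q4KM"): 122.56,
    ("8B", "F16"): 53.27,
    ("70B", "Q4KM"): 19.06,
}

# Effect of quantization at a fixed model size (8B).
quant_speedup = results[("8B", "Q4KM")] / results[("8B", "F16")]

# Effect of model size at a fixed quantization (Q4KM).
size_slowdown = results[("8B", "Q4KM")] / results[("70B", "Q4KM")]

print(f"8B Q4KM vs 8B F16:   {quant_speedup:.2f}x faster")
print(f"8B Q4KM vs 70B Q4KM: {size_slowdown:.2f}x faster")
```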

Harnessing the Power of Multiple GPUs

The 2x 4090 24GB setup shows what multiple GPUs working together can offer.

Imagine two incredibly fast typists sharing the work of writing a book. The same principle applies to GPUs: two GPUs can work in tandem, splitting the model and its workload between them. Together the two cards also provide 48GB of memory, which matters for large models such as Llama 3 70B. This benchmark includes no single-GPU baseline, so it can't tell us exactly how much faster two cards are than one.
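One common way to share a model between GPUs is to give each card a contiguous slice of the model's layers. The sketch below shows that bookkeeping for an 80-layer model such as Llama 3 70B. `split_layers` is an illustrative helper, and the article does not state which splitting strategy the benchmark actually used.

```python
def split_layers(n_layers, n_gpus):
    """Assign contiguous layer ranges to GPUs as evenly as possible."""
    base, extra = divmod(n_layers, n_gpus)
    assignment = []
    start = 0
    for gpu in range(n_gpus):
        # The first `extra` GPUs take one additional layer each.
        count = base + (1 if gpu < extra else 0)
        assignment.append(range(start, start + count))
        start += count
    return assignment


plan = split_layers(80, 2)  # Llama 3 70B has 80 transformer layers
for gpu, layers in enumerate(plan):
    print(f"GPU {gpu}: layers {layers[0]}-{layers[-1]} ({len(layers)} layers)")
```

With a split like this, each token still passes through the layers in order, so the main gain is memory capacity rather than a doubling of speed for a single stream of text.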

The Future is Bright: Exploring New Possibilities with LLMs

These impressive results highlight the exciting potential of running LLMs locally on powerful hardware like the NVIDIA 4090 24GB.

As technology advances, we can expect even faster and more efficient LLMs, paving the way for innovative applications in various fields, such as:

- Enhanced Conversational AI: Create more engaging and natural-sounding chatbots.

- Personalized Content Creation: Generate tailored content based on individual preferences.

- Real-Time Translation: Break down language barriers with instantaneous translation.

- Creative Writing Assistance: Get help with brainstorming, writing, and editing.

FAQ: Addressing Common Questions

What are LLMs, and why are they so important?

LLMs are sophisticated artificial intelligence models trained on massive datasets of text and code. They can understand and generate human-like text, making them invaluable for various tasks, including language translation, content creation, and code generation.

What is the significance of token generation speed?

Token generation speed measures how quickly an LLM can process and generate text. Faster speeds lead to quicker responses and enhanced user experiences, particularly in real-time applications.

How does quantization impact LLM performance?

Quantization is a technique used to reduce the size of LLMs, making them faster and more efficient. It involves compressing the model’s data, leading to faster token generation speeds but potentially slightly lower accuracy.

What are the benefits of running LLMs locally?

Running LLMs locally offers advantages like greater control over data privacy, reduced latency, and the ability to customize models for specific needs.

Keywords

LLMs, NVIDIA 4090 24GB, Token Generation Speed, Llama 3, Quantization, Q4KM, F16, GPU, Local LLMs, AI, Model Size, Parallel Processing, Conversational AI, Content Creation, Translation, Creative Writing Assistance, AI Applications, Machine Learning, Deep Learning, Natural Language Processing, NLP.