Running LLMs on an NVIDIA 4080 16GB: Token Generation Speed Benchmark

Introduction: Unleashing the Power of LLMs on a Beastly GPU

The world of Large Language Models (LLMs) is booming! From generating poems, code, scripts, emails, and letters to answering your questions in an informative way, LLMs are changing the way we interact with technology. But running these models locally can be a challenge, especially with large ones like the 70B parameter Llama 3. This is where the NVIDIA 4080 16GB GPU comes in.

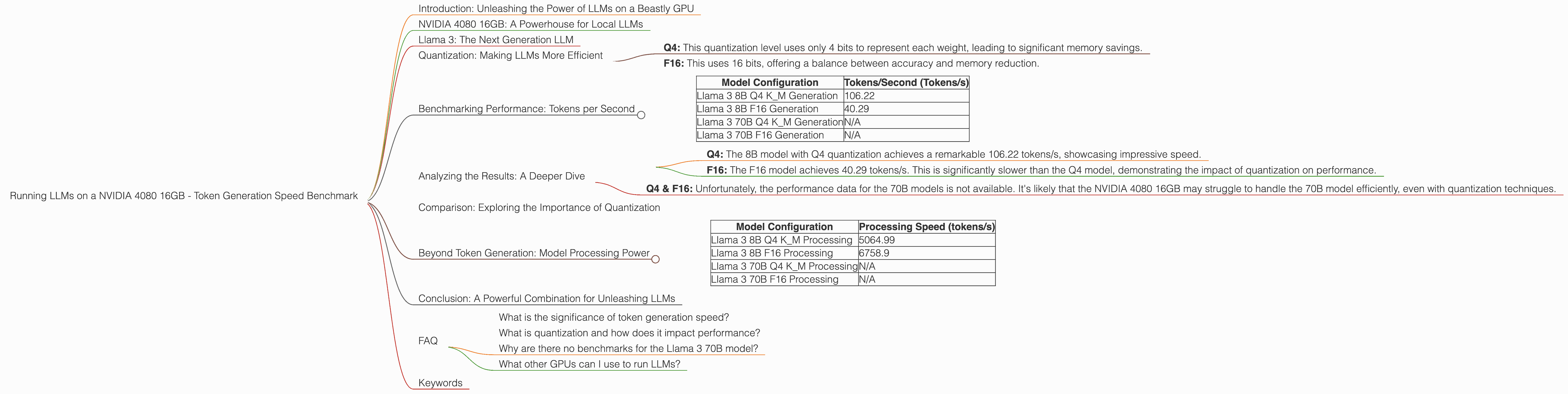

This article looks at how fast the NVIDIA 4080 16GB GPU generates tokens for the Llama 3 family of LLMs. We'll compare two weight formats (Q4 and F16) and report token generation speed for the 8B and 70B parameter models where data is available.

So, buckle up, dear reader, and get ready to dive into the world of LLMs, GPUs, and the amazing possibilities that lie at the intersection of these two!

NVIDIA 4080 16GB: A Powerhouse for Local LLMs

The NVIDIA 4080 16GB is a high-end GPU, making it a strong contender for demanding tasks like LLM inference. Its 16 GB of video memory is enough to hold smaller models such as Llama 3 8B entirely on the GPU, although much larger models are a different story.

Llama 3: The Next Generation LLM

Llama 3, developed by Meta, is a powerful open-source LLM known for its impressive capabilities. It comes in different sizes, with the 8B and 70B models being particularly popular. Let's explore how the NVIDIA 4080 16GB handles these models.

Quantization: Making LLMs More Efficient

Quantization is a technique used to reduce the size of LLMs without significantly sacrificing accuracy. It stores the model's weights in a lower-precision format, for example about 4 bits per weight instead of 16-bit or 32-bit floating point. The result is a smaller model that needs less memory, and less memory traffic, to run. This benchmark covers two formats:

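- Q4: This quantization level uses roughly 4 bits to represent each weight, leading to significant memory savings. The K_M suffix in the tables below denotes one specific 4-bit variant.

- F16: This uses 16 bits per weight (half-precision floating point). It is the higher-precision configuration in this benchmark and needs about four times the memory of Q4.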
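To make the idea of 4-bit weights concrete, here is a minimal sketch of symmetric block quantization in Python. It is a simplified illustration under stated assumptions, not the actual Q4 scheme used to produce the benchmarked models; the function names and the sample weights are made up for the example.

```python
def quantize_block(weights, bits=4):
    """Map a block of floats to signed integer codes plus one shared scale."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit signed codes
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Round each weight to the nearest code and clamp to the 4-bit range.
    codes = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return scale, codes


def dequantize_block(scale, codes):
    """Recover approximate float weights from the integer codes."""
    return [c * scale for c in codes]


weights = [0.7, -0.3, 0.12, 0.0]  # hypothetical weights, not from a real model
scale, codes = quantize_block(weights)
restored = dequantize_block(scale, codes)
max_error = max(abs(w - r) for w, r in zip(weights, restored))
print("codes:", codes)
print("largest round-trip error:", round(max_error, 3))
```

Each weight is now a small integer plus a share of one scale factor, which is where the memory savings come from. The round-trip error is the accuracy cost of the lower precision.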

Benchmarking Performance: Tokens per Second

The following table summarizes the token generation speed in tokens per second (tokens/s) for different Llama 3 models on the NVIDIA 4080 16GB:

| Model Configuration | Generation Speed (tokens/s) |
|---|---|
| Llama 3 8B Q4_K_M Generation | 106.22 |
| Llama 3 8B F16 Generation | 40.29 |
| Llama 3 70B Q4_K_M Generation | N/A |
| Llama 3 70B F16 Generation | N/A |

Note: The performance data for the 70B models is not available.
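If you want to collect a comparable number on your own hardware, the metric is simply tokens produced divided by elapsed time. The sketch below shows one way to time it in Python. It is not the method used to produce the table above, and `fake_generate` is a hypothetical stand-in that you would replace with your own model's token stream.

```python
import time


def measure_tokens_per_second(generate, n_tokens):
    """Time a token generator and return its throughput in tokens/s."""
    start = time.perf_counter()
    produced = sum(1 for _ in generate(n_tokens))
    elapsed = time.perf_counter() - start
    return produced / elapsed


def fake_generate(n_tokens):
    """Hypothetical stand-in for a model: yields one dummy token per millisecond."""
    for i in range(n_tokens):
        time.sleep(0.001)
        yield i


rate = measure_tokens_per_second(fake_generate, 50)
print(f"{rate:.1f} tokens/s")
```

In a real measurement you would also want a warm-up run and several repetitions, since the first request often includes one-time loading costs.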

Analyzing the Results: A Deeper Dive

Llama 3 8B Performance:

- Q4: The 8B model with Q4 quantization reaches 106.22 tokens/s, the fastest generation result in this benchmark.

- F16: The F16 model achieves 40.29 tokens/s, significantly slower than the Q4 model. Generating each token requires reading the model's weights from memory, so the larger F16 weights take longer to stream than the compact Q4 ones.

Llama 3 70B Performance:

- Q4 & F16: Unfortunately, the performance data for the 70B models is not available. The most likely reason is memory: 70 billion weights need roughly 140 GB at 16 bits each and about 35 GB at 4 bits each, so neither version fits in the card's 16 GB of video memory.
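The memory argument can be checked with a quick back-of-the-envelope calculation. The sketch below counts weights only, using nominal parameter counts and nominal bits per weight, and it treats the card's 16 GB as 16 GiB. All of these are simplifying assumptions; real model files are somewhat larger, and inference needs extra memory beyond the weights.

```python
VRAM_GIB = 16  # assumption: the card's "16GB" is treated as 16 GiB


def weights_gib(n_params, bits_per_weight):
    """Approximate size of the weights alone, in GiB."""
    return n_params * bits_per_weight / 8 / 2**30


models = {"Llama 3 8B": 8e9, "Llama 3 70B": 70e9}  # nominal parameter counts
formats = {"Q4": 4, "F16": 16}                     # nominal bits per weight

fits = {}
for model, n_params in models.items():
    for fmt, bits in formats.items():
        size = weights_gib(n_params, bits)
        fits[(model, fmt)] = size <= VRAM_GIB
        print(f"{model} {fmt}: {size:6.1f} GiB, fits: {fits[(model, fmt)]}")
```

Under these assumptions both 8B configurations fit (F16 only barely), while both 70B configurations are far over the limit, which matches the pattern of available and missing results.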

Comparison: Exploring the Importance of Quantization

The data shows how much the weight format affects token generation speed. The Llama 3 8B model with Q4 quantization generates tokens about 2.6 times faster than the F16 model (106.22 vs. 40.29 tokens/s).

Think of it this way: If you want to generate a 1000-token text, the Q4 model would take approximately 9.4 seconds, while the F16 model would take about 24.8 seconds. That's a huge difference for anyone who wants fast results from their LLM!
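The arithmetic behind these figures is just token count divided by speed. Here is a small Python sketch using the generation speeds from the table; the function name is made up for the example, and the calculation ignores prompt processing and any startup overhead.

```python
GENERATION_TPS = {"Q4": 106.22, "F16": 40.29}  # tokens/s, from the table above


def seconds_to_generate(n_tokens, tokens_per_second):
    """Time to generate n_tokens at a steady tokens/s rate."""
    return n_tokens / tokens_per_second


for name, tps in GENERATION_TPS.items():
    print(f"{name}: {seconds_to_generate(1000, tps):.2f} s for 1000 tokens")

speedup = GENERATION_TPS["Q4"] / GENERATION_TPS["F16"]
print(f"Q4 is {speedup:.2f}x faster than F16")
```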

Beyond Token Generation: Model Processing Power

Generation is only part of the picture. The benchmark also reports a processing speed, which typically measures how quickly the model ingests the input prompt before it starts generating. Here are the processing speeds:

| Model Configuration | Processing Speed (tokens/s) |
|---|---|
| Llama 3 8B Q4_K_M Processing | 5064.99 |
| Llama 3 8B F16 Processing | 6758.9 |
| Llama 3 70B Q4_K_M Processing | N/A |
| Llama 3 70B F16 Processing | N/A |

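As you can see, the NVIDIA 4080 16GB processes thousands of tokens per second in both configurations, far faster than it generates them. Note that the ranking flips here: F16 (6758.9 tokens/s) is about a third faster than Q4 (5064.99 tokens/s), so the speed advantage of quantization applies to generation, not to processing.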
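To see how the two numbers combine, here is a rough Python estimate of the total time for one request. It assumes that "processing" means reading the prompt, that the two phases simply add up, and that there is no other overhead. The request size (2000 prompt tokens, 500 output tokens) is a hypothetical example, not part of the benchmark.

```python
SPEEDS = {  # tokens/s for Llama 3 8B, from the two tables above
    "Q4": {"processing": 5064.99, "generation": 106.22},
    "F16": {"processing": 6758.9, "generation": 40.29},
}


def request_seconds(fmt, prompt_tokens, output_tokens):
    """Estimated time to process the prompt and then generate the output."""
    s = SPEEDS[fmt]
    return prompt_tokens / s["processing"] + output_tokens / s["generation"]


q4_total = request_seconds("Q4", prompt_tokens=2000, output_tokens=500)
f16_total = request_seconds("F16", prompt_tokens=2000, output_tokens=500)
print(f"Q4:  {q4_total:.2f} s")
print(f"F16: {f16_total:.2f} s")
```

For a request like this, generation dominates the total, so Q4 still finishes well ahead even though F16 processes the prompt faster.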

Conclusion: A Powerful Combination for Unleashing LLMs

The NVIDIA 4080 16GB, coupled with the right quantization techniques, proves to be a powerful combination for running LLMs locally. While the 70B model might be a challenge, the NVIDIA 4080 16GB excels in handling the 8B model, particularly with Q4 quantization, making it a great option for developers and anyone looking to explore the exciting world of LLMs.

FAQ

What is the significance of token generation speed?

Token generation speed determines how fast an LLM can generate text. A higher token generation speed means the model can produce text faster, making it more responsive and efficient.

What is quantization and how does it impact performance?

Quantization is a technique that reduces the size of LLMs by converting their weights to lower-precision formats. In this benchmark it leads to faster token generation and lower memory usage, though it can slightly reduce accuracy. Processing speed was the exception: F16 was faster than Q4 there.

Why are there no benchmarks for the Llama 3 70B model?

The most likely reason is memory. Even at 4 bits per weight, 70 billion parameters need about 35 GB for the weights alone, more than twice the card's 16 GB. Alternatively, the benchmarks might simply not have been run for this configuration.

What other GPUs can I use to run LLMs?

There are many other GPUs available, such as the NVIDIA RTX 4090, RTX 3090, and AMD Radeon RX 7900 XTX. The specific choice depends on your budget and performance requirements.

Keywords

LLMs, Large Language Models, NVIDIA 4080 16GB, GPU, token generation speed, Llama 3, 8B, 70B, quantization, Q4, F16, performance benchmark, local LLM, text generation, AI, machine learning, deep learning