Running LLMs on an NVIDIA 4070 Ti 12GB: Token Generation Speed Benchmark

Introduction

The world of Large Language Models (LLMs) is exploding, and with it comes the demand for powerful hardware to run these intricate models. LLMs are like the brainiacs of the AI world, capable of generating realistic text, translating languages, writing different kinds of creative content, and even answering your questions in an informative way. But these brainiacs are thirsty for processing power!

If you're a developer, researcher, or just a tech enthusiast exploring the possibilities of running LLMs on your own machine, you're probably wondering: how do different GPUs stack up against each other?

This article dives into the performance of a popular GPU, the NVIDIA 4070 Ti 12GB, specifically examining how quickly it generates tokens when running LLMs. We'll be looking at the token generation speed of the Llama 3 8B and 70B models in two configurations: 4-bit quantized (Q4) and 16-bit half precision (F16).

This information is crucial for anyone wanting to understand the limitations and capabilities of their hardware when working with LLMs. So, let's dive in!

NVIDIA 4070 Ti 12GB: A Worthy Contender

The NVIDIA 4070 Ti 12GB sits toward the upper end of mid-range consumer GPUs. With 12GB of memory and strong processing capabilities, this card is a popular choice for gamers, content creators, and increasingly, for AI enthusiasts.

But how does it fare when tasked with the demanding computations required for running LLMs? Let's explore the token generation numbers and see how this card measures up.

Benchmarking Results: Token Generation Speed

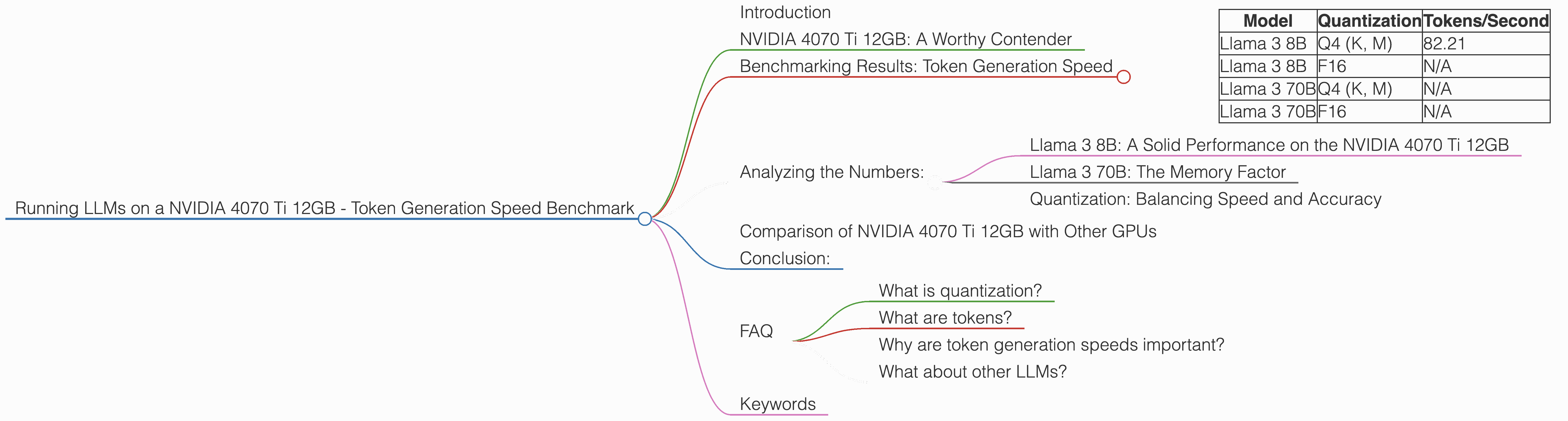

The following table presents the token generation speed of Llama 3 models running on the NVIDIA 4070 Ti 12GB in the Q4 and F16 configurations. It's important to note that only the Llama 3 8B model at Q4 produced a result: we were not able to get data for Llama 3 8B at F16, or for Llama 3 70B in either configuration, on this specific GPU. We'll discuss the implications of this later.

| Model | Quantization | Tokens/Second |
|---|---|---|
| Llama 3 8B | Q4 (K, M) | 82.21 |
| Llama 3 8B | F16 | N/A |
| Llama 3 70B | Q4 (K, M) | N/A |
| Llama 3 70B | F16 | N/A |

Analyzing the Numbers:

Llama 3 8B: A Solid Performance on the NVIDIA 4070 Ti 12GB

When it comes to the smaller Llama 3 8B model, the NVIDIA 4070 Ti 12GB demonstrates a respectable token generation speed of 82.21 tokens per second with Q4 quantization. This is a solid figure for a 12GB card. Q4 works in the card's favor here: the quantized weights are roughly a quarter to a third the size of F16 weights, so they fit comfortably in memory, and less data typically has to be read from memory for each generated token.

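Imagine this for a moment: if you were to compare this to someone typing, the NVIDIA 4070 Ti 12GB is producing about 82 tokens every second. A token is often shorter than a full word, so this works out to somewhat fewer than 82 words per second, but that is still far faster than anyone can type or read!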
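To make that comparison concrete, here is a small sketch of the arithmetic. The ratio of 0.75 words per token is a common rule of thumb for English text and an assumption here, not something measured in this benchmark; the function names are purely illustrative.

```python
# Rough conversion from token throughput to words and response time.
# WORDS_PER_TOKEN is an assumed rule of thumb for English text,
# not a value measured in this benchmark.
WORDS_PER_TOKEN = 0.75


def words_per_second(tokens_per_second: float,
                     words_per_token: float = WORDS_PER_TOKEN) -> float:
    """Approximate words generated per second."""
    return tokens_per_second * words_per_token


def seconds_to_generate(num_tokens: int, tokens_per_second: float) -> float:
    """Time needed to generate num_tokens at a steady rate."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return num_tokens / tokens_per_second


if __name__ == "__main__":
    speed = 82.21  # Llama 3 8B, Q4 (K, M), from the results table
    print(f"~{words_per_second(speed):.1f} words per second")
    print(f"500-token reply in ~{seconds_to_generate(500, speed):.1f} s")
```

Under that assumption, a 500-token answer would arrive in a handful of seconds, which is what makes this speed feel interactive.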

Llama 3 70B: The Memory Factor

The lack of data for the Llama 3 70B model on the NVIDIA 4070 Ti 12GB with both Q4 and F16 quantization highlights a crucial point: memory limitations. The Llama 3 70B is a much larger model, demanding a significant amount of memory.

Think of it like this: The 70B model is like a massive library, packed with millions of books. Our NVIDIA 4070 Ti 12GB, although a powerful card, might struggle to carry the weight of such a huge library.
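The size of that library can be estimated with simple arithmetic. The sketch below only counts model weights and ignores the KV cache, activations, and runtime overhead, so real usage will be higher. The bits-per-weight figure for Q4 is an approximate average and an assumption on our part, and the helper names are hypothetical.

```python
# Back-of-the-envelope estimate of the memory needed for model weights alone.
# The bits-per-weight values are approximations (assumptions); the estimate
# ignores the KV cache, activations, and runtime overhead.
BITS_PER_WEIGHT = {
    "F16": 16.0,
    "Q4_K_M": 4.85,  # approximate average; actual files vary by model
}


def weight_memory_gb(params_billion: float, fmt: str) -> float:
    """Approximate weight size in GB (10^9 bytes)."""
    return params_billion * BITS_PER_WEIGHT[fmt] / 8


def fits_in_vram(params_billion: float, fmt: str, vram_gb: float = 12.0) -> bool:
    """True if the weights alone are smaller than the available VRAM."""
    return weight_memory_gb(params_billion, fmt) < vram_gb


if __name__ == "__main__":
    for params in (8, 70):
        for fmt in ("Q4_K_M", "F16"):
            size = weight_memory_gb(params, fmt)
            verdict = "fits" if fits_in_vram(params, fmt) else "does not fit"
            print(f"Llama 3 {params}B {fmt}: ~{size:.1f} GB, {verdict} in 12 GB")
```

With these assumptions, only the 8B model at Q4 fits in 12GB, which matches the single result in the table.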

Quantization: Balancing Speed and Accuracy

Quantization is a technique used to reduce the size of LLMs while preserving as much accuracy as possible, by storing the model's weights at lower numerical precision. It's like compressing a file without losing too much information. Even so, Q4 is not enough to fit the Llama 3 70B model on the NVIDIA 4070 Ti 12GB: at roughly 4 to 5 bits per weight, 70 billion parameters still need around 40GB.

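On the other hand, the F16 configuration stores every weight in 16 bits. It avoids the accuracy loss that quantization introduces, but it needs roughly three to four times the memory of Q4 and is typically slower at generating tokens, because more data has to be read for each one. For Llama 3 8B that means about 16GB of weights, which likely explains the missing F16 result on a 12GB card.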
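To give a feel for what quantization does to the numbers inside a model, here is a deliberately simplified sketch of block-wise 4-bit quantization using absolute-maximum scaling. It illustrates the general idea only and is not the Q4 (K, M) scheme used in the benchmark, which is more elaborate; the sample weights are made up.

```python
# Simplified block-wise 4-bit quantization with absmax scaling.
# Illustration of the general idea only: this is NOT the Q4 (K, M) scheme
# from the benchmark, and the sample weights below are made up.

def quantize_block(values):
    """Map floats to integer codes in [-7, 7] plus one shared scale."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    codes = [round(v / scale) for v in values]
    return codes, scale


def dequantize_block(codes, scale):
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]


if __name__ == "__main__":
    weights = [0.12, -0.53, 0.98, -0.07, 0.31, -0.88, 0.45, 0.02]
    codes, scale = quantize_block(weights)
    restored = dequantize_block(codes, scale)
    max_err = max(abs(w - r) for w, r in zip(weights, restored))
    print("codes:", codes)
    print(f"scale: {scale:.4f}, largest rounding error: {max_err:.4f}")
```

Each weight is stored as a small integer that fits in 4 bits, plus one scale per block. The rounding error is the accuracy cost that quantization trades for the smaller footprint.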

Comparison of NVIDIA 4070 Ti 12GB with Other GPUs

While we're focusing on the NVIDIA 4070 Ti 12GB for this article, it's worth mentioning that other GPUs like the NVIDIA A100 and H100 are capable of handling larger models and achieving even higher token generation speeds. However, these GPUs are typically more expensive and are often found in data centers or research labs.

Conclusion:

The NVIDIA 4070 Ti 12GB is a capable GPU for running smaller LLMs like the Llama 3 8B, particularly with Q4 quantization. However, its 12GB memory might be limiting for larger models like the Llama 3 70B. It’s clear that when choosing your GPU, the size of the LLM you want to run plays a crucial role.

If you need to run larger models, look at GPUs with more memory, such as the data-center NVIDIA A100 or H100, or consider more aggressive quantization to reduce the memory footprint of these models.

FAQ

What is quantization?

Quantization is a technique used to reduce the size of LLMs while preserving as much accuracy as possible. Think of it like compressing a file without losing too much information.

What are tokens?

Tokens are the building blocks of text in the world of LLMs. They are the small chunks a model reads and writes: a token can be a whole word, part of a word, or a punctuation mark.

Why are token generation speeds important?

Token generation speeds are crucial for the performance of LLMs, as they determine how quickly the model can generate new text or complete tasks. Faster token generation means a smoother and more efficient experience.
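If you want to measure this yourself, the idea is simply to count tokens and divide by elapsed time. The sketch below shows one way to do that around any token stream. The `fake_generate` function is a hypothetical stand-in for a real model's streaming output, not part of any library, and this is not necessarily how the numbers in this article were collected.

```python
import time
from typing import Callable, Iterable, Iterator


def measure_tokens_per_second(generate: Callable[[], Iterable[str]]) -> float:
    """Consume a token stream and return tokens generated per second."""
    start = time.perf_counter()
    count = sum(1 for _ in generate())
    elapsed = time.perf_counter() - start
    if count == 0 or elapsed <= 0:
        raise ValueError("no tokens were generated or no time elapsed")
    return count / elapsed


def fake_generate(num_tokens: int = 50, delay_s: float = 0.001) -> Iterator[str]:
    """Hypothetical stand-in for a model that streams tokens one by one."""
    for i in range(num_tokens):
        time.sleep(delay_s)  # simulates the time spent computing one token
        yield f"tok{i}"


if __name__ == "__main__":
    rate = measure_tokens_per_second(lambda: fake_generate(50, 0.001))
    print(f"{rate:.1f} tokens per second")
```

In a real measurement you would replace `fake_generate` with your model's streaming call and average over several runs.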

What about other LLMs?

This article focused on Llama 3 models. However, the principles and considerations discussed here apply to other LLMs as well!

Keywords

NVIDIA 4070 Ti 12GB, LLM, Large Language Model, Token Generation Speed, Llama 3, Quantization, Q4, F16, GPU, Memory, Benchmarking, Performance, AI, Deep Learning, NLP, Natural Language Processing, Llama 3 8B, Llama 3 70B, NVIDIA A100, NVIDIA H100, Tokenization