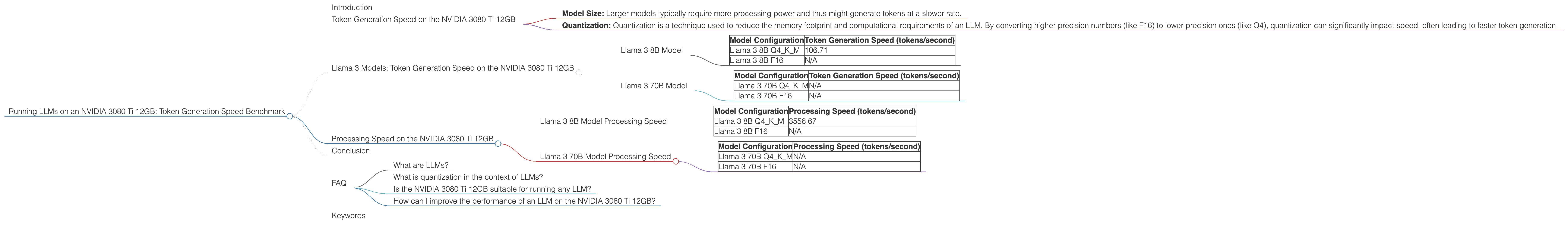

Running LLMs on an NVIDIA 3080 Ti 12GB: Token Generation Speed Benchmark

Introduction

The world of large language models (LLMs) is buzzing with excitement, and many developers and enthusiasts are eager to run these powerful models locally. One of the most important factors in choosing a device for running LLMs is its ability to handle the heavy computational load involved in processing text and generating responses. This is where graphics processing units (GPUs) come into play, offering considerable performance improvements over traditional CPUs.

This article looks at how LLMs run on the NVIDIA 3080 Ti 12GB, a popular and powerful consumer GPU. We focus on token generation speed as a key indicator of performance and examine the available results for Llama 3 models at different sizes and precisions.

Token Generation Speed on the NVIDIA 3080 Ti 12GB

Token generation speed is a vital metric for evaluating the performance of an LLM running on a specific device. It measures how many tokens the model can generate per second, which directly determines how quickly a response appears.
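To make the metric concrete, here is a minimal sketch of how tokens per second can be measured: count the tokens a generator yields and divide by the elapsed wall-clock time. The names `measure_tokens_per_second` and `fake_generate` are hypothetical, and `fake_generate` is only a stand-in for a real model's streaming output, so the number it prints says nothing about the 3080 Ti.

```python
import time
from typing import Callable, Iterable


def measure_tokens_per_second(generate: Callable[[], Iterable[str]]) -> float:
    """Consume a token stream and return generated tokens per second."""
    start = time.perf_counter()
    count = sum(1 for _ in generate())
    elapsed = time.perf_counter() - start
    return count / elapsed if elapsed > 0 else float("inf")


def fake_generate(n_tokens: int = 50, delay_s: float = 0.001):
    """Hypothetical stand-in for a model that streams tokens one by one."""
    for i in range(n_tokens):
        time.sleep(delay_s)  # pretend each token takes some time to produce
        yield f"tok{i}"


rate = measure_tokens_per_second(fake_generate)
print(f"{rate:.1f} tokens/s")
```

With a real model, you would pass a function that streams tokens from your inference library instead of `fake_generate`.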

Faster token generation translates to a smoother user experience, especially when generating long responses or working on complex tasks. We will be looking at two key factors influencing token generation speed:

- Model Size: Larger models typically require more processing power and thus might generate tokens at a slower rate.

- Quantization: Quantization is a technique used to reduce the memory footprint and computational requirements of an LLM. Converting higher-precision weights (like F16) to lower-precision ones (like Q4) shrinks the model, which often leads to faster token generation because less data has to be read from GPU memory for each token.
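Both factors come down to how many bytes the weights occupy. The sketch below estimates that footprint as parameters times bits per weight, and compares it with the card's 12 GB of VRAM. The parameter counts are nominal, the figure of 4.5 bits per weight is an assumed average for a 4-bit scheme that also stores per-block scales, and the helper name `weight_memory_gb` is hypothetical. The estimate covers weights only and ignores the KV cache and activations.

```python
def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in gigabytes (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


VRAM_GB = 12.0  # NVIDIA 3080 Ti 12GB

# Assumption: nominal parameter counts and ~4.5 bits/weight for 4-bit formats.
configs = {
    "Llama 3 8B F16": (8e9, 16.0),
    "Llama 3 8B 4-bit": (8e9, 4.5),
    "Llama 3 70B F16": (70e9, 16.0),
    "Llama 3 70B 4-bit": (70e9, 4.5),
}

for name, (params, bits) in configs.items():
    size = weight_memory_gb(params, bits)
    verdict = "fits in" if size < VRAM_GB else "exceeds"
    print(f"{name}: ~{size:.1f} GB of weights, {verdict} {VRAM_GB:.0f} GB VRAM")
```

Under these assumptions, only the 4-bit 8B configuration leaves headroom on a 12 GB card.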

Llama 3 Models: Token Generation Speed on the NVIDIA 3080 Ti 12GB

Llama 3 8B Model

Let's kick things off with the Llama 3 8B model, a popular choice for many developers. Here's a breakdown of its performance on the NVIDIA 3080 Ti 12GB:

| Model Configuration | Token Generation Speed (tokens/second) |
|---|---|
| Llama 3 8B Q4KM | 106.71 |
| Llama 3 8B F16 | N/A |

Observations:

- The Llama 3 8B model with Q4KM quantization generates 106.71 tokens per second on the NVIDIA 3080 Ti 12GB.

- We don't have data for the Llama 3 8B model at F16 precision, because no benchmark has been published or tested for this hardware-model combination. A likely reason is memory: 8 billion parameters at 16 bits each need roughly 16 GB for the weights alone, more than the card's 12 GB of VRAM.

Llama 3 70B Model

Now let's move on to a larger model, the Llama 3 70B. This model is significantly more demanding on both memory and computational resources.

| Model Configuration | Token Generation Speed (tokens/second) |
|---|---|
| Llama 3 70B Q4KM | N/A |
| Llama 3 70B F16 | N/A |

Observations:

- Unfortunately, we do not have any benchmark data for the Llama 3 70B model on the NVIDIA 3080 Ti 12GB. This could be due to several factors, like limited testing or specific configurations not being available at the time of data collection. Size is a likely contributor: 70 billion parameters take roughly 35 to 45 GB at 4 to 5 bits per weight and about 140 GB at F16, so neither configuration fits in 12 GB of VRAM without offloading part of the model to system memory or splitting it across several GPUs.

Processing Speed on the NVIDIA 3080 Ti 12GB

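While token generation speed is important, understanding processing speed is equally crucial. Processing speed refers to the rate at which the model ingests the tokens of the input prompt before it produces the first output token. It is measured separately from token generation and determines how long you wait for a response to start, especially with long prompts.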

Llama 3 8B Model Processing Speed

| Model Configuration | Processing Speed (tokens/second) |
|---|---|
| Llama 3 8B Q4KM | 3556.67 |
| Llama 3 8B F16 | N/A |

Observations:

- The Llama 3 8B model with Q4KM quantization achieves a processing speed of 3556.67 tokens per second on the NVIDIA 3080 Ti 12GB. Prompt tokens can be processed in parallel, which is why this figure is far higher than the generation speed of 106.71 tokens per second.
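The two measurements can be combined into a rough end-to-end estimate: the time to process the prompt plus the time to generate the reply. The sketch below uses the Llama 3 8B Q4KM figures from the tables in this article. The function name `estimate_latency_s` and the example token counts are hypothetical, and the result assumes both speeds stay constant, which they generally do not as the context grows.

```python
PROMPT_SPEED = 3556.67  # prompt processing, tokens/s (Llama 3 8B Q4KM)
GEN_SPEED = 106.71      # token generation, tokens/s (Llama 3 8B Q4KM)


def estimate_latency_s(prompt_tokens: int, output_tokens: int) -> float:
    """Rough response time: prompt processing time plus generation time."""
    return prompt_tokens / PROMPT_SPEED + output_tokens / GEN_SPEED


# Hypothetical request: a 1000-token prompt and a 250-token reply.
total = estimate_latency_s(1000, 250)
print(f"estimated response time: {total:.2f} s")
```

For this example the estimate comes to about 2.6 seconds, almost all of it spent on generation rather than on reading the prompt.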

Llama 3 70B Model Processing Speed

| Model Configuration | Processing Speed (tokens/second) |
|---|---|
| Llama 3 70B Q4KM | N/A |
| Llama 3 70B F16 | N/A |

Observations:

- Similar to token generation speed, we lack data for the Llama 3 70B model's processing speed on the NVIDIA 3080 Ti 12GB. More research and testing are needed to fully assess its performance on this specific hardware.

Conclusion

The NVIDIA 3080 Ti 12GB proves to be a capable GPU for running LLMs like the Llama 3 8B model. The ability to process tokens and prompts at a high rate, particularly in the case of the Q4KM quantized model, showcases the efficiency of this GPU for local LLM deployment.

However, more research is required to understand the performance of larger models like the Llama 3 70B on the NVIDIA 3080 Ti 12GB. Optimizations and configuration adjustments might be necessary to ensure smooth operation and maximize the GPU's potential for handling these more computationally demanding models.

FAQ

What are LLMs?

LLMs, or large language models, are a type of artificial intelligence that can understand and generate human-like text. They are trained on massive datasets of text and code, allowing them to perform a wide range of tasks, from writing different kinds of creative content to translating languages and answering questions.

What is quantization in the context of LLMs?

Quantization is a technique used to reduce the size of an LLM's model file and make it run faster. Imagine you have a very detailed map with lots of shading and colors. Quantization is like simplifying the map by reducing the number of colors and making the lines less detailed. This makes the map much smaller and faster to load, but it also loses some information. However, this loss of accuracy is often negligible in the context of LLMs, and the performance gains are significant.
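To see the trade-off in numbers, here is a toy round trip that squeezes a handful of weights into 4-bit integers with one shared scale and then restores them. This is a deliberately simplified absmax scheme for illustration only, not the actual Q4KM format, and the function names and sample weights are made up.

```python
def quantize_block(values, bits=4):
    """Map floats to signed integers that share one scale (absmax scheme)."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit signed values
    absmax = max(abs(v) for v in values)
    scale = absmax / qmax if absmax > 0 else 1.0
    return [round(v / scale) for v in values], scale


def dequantize_block(q_values, scale):
    """Recover approximate floats from the integers and the shared scale."""
    return [q * scale for q in q_values]


# Made-up sample weights, standing in for one small block of a model.
weights = [0.12, -0.53, 0.98, -0.07, 0.31, -0.88, 0.44, 0.02]
q, scale = quantize_block(weights)
restored = dequantize_block(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("quantized integers:", q)
print("largest round-trip error:", round(max_error, 4))
```

Each weight now needs only 4 bits plus a share of the scale, and the rounding error per weight is at most half the scale. That error is the "lost detail" in the map analogy.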

Is the NVIDIA 3080 Ti 12GB suitable for running any LLM?

While the NVIDIA 3080 Ti 12GB is a powerful GPU, it's important to note that larger LLMs might require specialized configurations or even multiple GPUs for smooth operation. The choice of device depends on the specific LLM you want to use and your desired performance levels.

How can I improve the performance of an LLM on the NVIDIA 3080 Ti 12GB?

You can try different quantization levels, explore alternative model architectures, or adjust the batch size during inference. Some LLMs also offer specific optimizations for specific hardware like NVIDIA GPUs, so it's always worth checking for updated software and tools.

Keywords

LLMs, Large Language Models, NVIDIA 3080 Ti 12GB, GPU, Token Generation Speed, Processing Speed, Performance, Quantization, Llama 3, Llama 3 8B, Llama 3 70B, Q4KM, F16, Inference, Local Deployment, AI, Machine Learning, Deep Learning