Running LLMs on an NVIDIA 3070 8GB: Token Generation Speed Benchmark

Introduction

The world of Large Language Models (LLMs) is getting more exciting by the day, but running these massive models can be a challenge, especially if you don't have a supercomputer in your basement (who does?). For those of us who are working with LLMs on more modest hardware, understanding the performance limitations is crucial.

This article dives deep into the performance of the NVIDIA GeForce RTX 3070 8GB, a popular and powerful graphics card, for running LLMs. We'll focus specifically on the speed at which this GPU can generate tokens, the building blocks of text, for Llama 3 models.

What are Tokens and Token Generation Speed?

Think of tokens as the vocabulary an LLM reads and writes. A token can be a whole word, a piece of a word, a punctuation mark, or a special character.

Token generation speed, measured in tokens per second (tokens/s), is a key metric for evaluating the performance of an LLM on a given device. A higher token generation speed means faster text generation and more efficient use of your hardware.
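
To make the metric concrete, here is a minimal sketch that converts a tokens/s figure into per-token latency and the time needed for a full reply. The 70.94 tokens/s value is the Q4KM result reported in this article; the 500-token reply length and the function names are assumptions chosen for illustration.

```python
def ms_per_token(tokens_per_second: float) -> float:
    """Average time spent on one token, in milliseconds."""
    return 1000.0 / tokens_per_second


def seconds_for_reply(n_tokens: int, tokens_per_second: float) -> float:
    """Time to generate n_tokens at a steady generation rate."""
    return n_tokens / tokens_per_second


if __name__ == "__main__":
    rate = 70.94  # tokens/s, the Q4KM generation speed from this article
    reply_length = 500  # assumed reply length, for illustration only
    print(f"{ms_per_token(rate):.1f} ms per token")
    print(f"{seconds_for_reply(reply_length, rate):.1f} s for {reply_length} tokens")
```

At this rate each token takes about 14 ms, so a 500-token reply arrives in roughly 7 seconds.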

Benchmarking the NVIDIA 3070 8GB: Token Generation Speed for LLMs

Let's get down to business and see how the NVIDIA 3070 8GB performs. We'll be looking at the Llama 3 family of LLMs, a popular and impressive set of open-source models. So far, the benchmark only has data for Llama 3 8B.

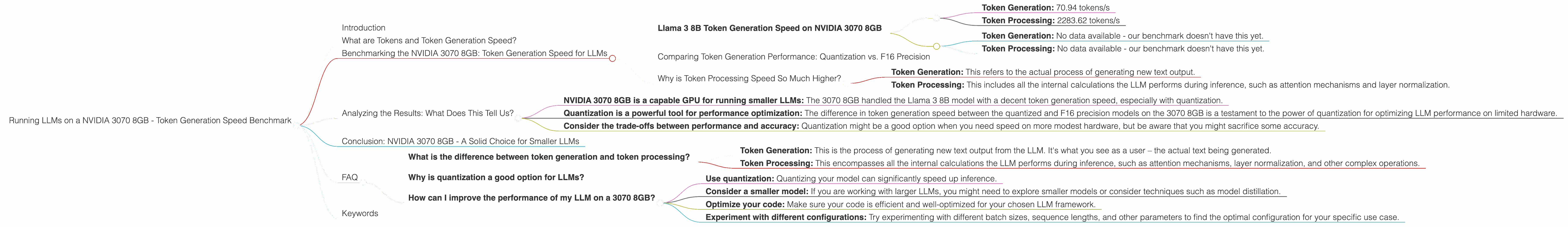

Llama 3 8B Token Generation Speed on NVIDIA 3070 8GB

The NVIDIA 3070 8GB handled the Llama 3 8B model remarkably well. Let's break down the results:

Llama 3 8B with Quantization (Q4KM):

- Token Generation: 70.94 tokens/s

- Token Processing: 2283.62 tokens/s

Llama 3 8B with F16 Precision:

- Token Generation: No data available - our benchmark doesn't have this yet.

- Token Processing: No data available - our benchmark doesn't have this yet.

Important Note: "Q4KM" (written Q4_K_M in llama.cpp) means the model weights have been quantized to roughly 4 bits each using llama.cpp's "K-quant" scheme, with the "M" denoting its medium-size variant. Quantization is a common technique to reduce memory footprint and speed up inference on less powerful hardware, like our 3070.
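
The memory savings can be estimated with simple arithmetic. The sketch below is a rough estimate of weight storage only, not a measurement: the nominal 8 billion parameter count and the 4.5 bits-per-weight average for a 4-bit format (which stores per-block scales on top of the 4-bit codes) are assumptions, and the function name is hypothetical.

```python
def weight_memory_gib(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights alone, in GiB."""
    total_bytes = n_params * bits_per_weight / 8
    return total_bytes / 2**30


N_PARAMS = 8e9   # nominal parameter count for an "8B" model (assumption)
F16_BITS = 16.0  # half precision: 2 bytes per weight
Q4_BITS = 4.5    # assumed average for a 4-bit format including block scales

if __name__ == "__main__":
    print(f"F16 weights:   {weight_memory_gib(N_PARAMS, F16_BITS):.1f} GiB")
    print(f"4-bit weights: {weight_memory_gib(N_PARAMS, Q4_BITS):.1f} GiB")
```

Under these assumptions the F16 weights come to about 14.9 GiB and the 4-bit weights to about 4.2 GiB. Only the latter fits in 8 GB of VRAM, and it leaves room for the KV cache and activations that this estimate ignores.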

Comparing Token Generation Performance: Quantization vs. F16 Precision

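Because the benchmark has no F16 results for this card yet, we can't measure the trade-off directly. In general, quantization reduces memory use and can significantly boost token generation speed, at the cost of some accuracy. Note also that the F16 weights of an 8-billion-parameter model take about 16 GB (2 bytes per parameter), which is more than the 3070's 8 GB of VRAM.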

Why is Token Processing Speed So Much Higher?

You might be curious about the significantly higher token processing speed compared to token generation speed. Here's the breakdown:

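- Token Generation: This is the rate at which the model produces new output tokens. Tokens are generated one at a time, each depending on the ones before it, so this step is inherently sequential.

- Token Processing: This is the rate at which the model reads the input prompt before it starts generating (llama.cpp-style benchmarks usually call this prompt processing). All prompt tokens are known up front, so the GPU can process them in parallel batches.

That parallelism is why token processing is so much faster: 2283.62 tokens/s versus 70.94 tokens/s, roughly 32 times higher. Even so, it's the token generation speed that most directly impacts a user's experience, as it determines how quickly new text is produced.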
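
To see how the two speeds combine in practice, the sketch below estimates the time for one request. It assumes that "token processing" is the rate at which prompt tokens are read, as in llama.cpp-style benchmarks, and that both rates stay constant. The prompt and reply lengths are made up for illustration, and the function name is hypothetical.

```python
PROMPT_SPEED = 2283.62  # tokens/s, token processing (Q4KM, from this article)
GEN_SPEED = 70.94       # tokens/s, token generation (Q4KM, from this article)


def response_time(prompt_tokens: int, output_tokens: int) -> tuple[float, float]:
    """Return (prompt_seconds, generation_seconds) for one request."""
    return prompt_tokens / PROMPT_SPEED, output_tokens / GEN_SPEED


if __name__ == "__main__":
    # Assumed request shape: a 1000-token prompt and a 200-token reply.
    prompt_s, gen_s = response_time(1000, 200)
    print(f"prompt: {prompt_s:.2f} s, generation: {gen_s:.2f} s")
```

Even with a prompt five times longer than the reply, generation dominates the total: about 2.8 s against about 0.4 s for reading the prompt.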

Analyzing the Results: What Does This Tell Us?

So, what can we conclude from these benchmarking results?

- NVIDIA 3070 8GB is a capable GPU for running smaller LLMs: The 3070 8GB handled the Llama 3 8B model with a decent token generation speed, especially with quantization.

- Quantization is a powerful tool for performance optimization: Although we have no F16 numbers for the 3070 8GB to compare against, the Q4KM model runs at 70.94 tokens/s on an 8 GB card, which shows how well quantization suits limited hardware.

- Consider the trade-offs between performance and accuracy: Quantization might be a good option when you need speed on more modest hardware, but be aware that you might sacrifice some accuracy.

Conclusion: NVIDIA 3070 8GB - A Solid Choice for Smaller LLMs

The NVIDIA 3070 8GB is a solid choice for running smaller LLMs like Llama 3 8B, especially if you're willing to use quantization to boost performance.

While the 3070 8GB may not be ideally suited for larger models with tens of billions of parameters, it's a great option for experimenting with smaller LLMs, developing your projects, and exploring the world of AI text generation.

FAQ

What is the difference between token generation and token processing?

- Token Generation: This is the process of generating new text output from the LLM. It's what you see as a user – the actual text being generated.

- Token Processing: This is the speed at which the LLM reads your input prompt before it starts generating. Because the whole prompt is available up front, it can be processed in parallel, which is why this figure is much higher than the generation speed.

Why is quantization a good option for LLMs?

Quantization is a technique used to reduce the size of an LLM's model weights, which in turn can lead to faster inference and lower memory requirements. It's especially helpful when working with limited hardware like a 3070 8GB, which might struggle with larger, full-precision models.
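
The basic idea can be sketched in a few lines. The example below is a deliberately simplified round-to-nearest scheme with one shared scale per block of weights. It is not the Q4KM format used in the benchmark, which is more elaborate, and the function names and sample values are hypothetical.

```python
def quantize_block(weights: list[float]) -> tuple[float, list[int]]:
    """Map floats to 4-bit signed integer codes in [-8, 7] sharing one scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    # Round to the nearest code and clamp to the 4-bit signed range.
    codes = [max(-8, min(7, round(w / scale))) for w in weights]
    return scale, codes


def dequantize_block(scale: float, codes: list[int]) -> list[float]:
    """Recover approximate floats from the codes and the shared scale."""
    return [c * scale for c in codes]


if __name__ == "__main__":
    block = [0.12, -0.40, 0.33, 0.04, -0.21, 0.70, -0.66, 0.01]  # made-up weights
    scale, codes = quantize_block(block)
    restored = dequantize_block(scale, codes)
    worst = max(abs(a - b) for a, b in zip(block, restored))
    print("codes:", codes)
    print(f"largest rounding error: {worst:.4f}")
```

Each weight is stored as a 4-bit code plus a share of the block's scale, instead of 16 bits. The rounding error per weight is at most half the scale, which is where the accuracy loss comes from.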

How can I improve the performance of my LLM on a 3070 8GB?

Here are some tips for improving LLM performance on a 3070 8GB:

- Use quantization: Quantizing your model can significantly speed up inference.

- Consider a smaller model: If you are working with larger LLMs, you might need to explore smaller models or consider techniques such as model distillation.

- Optimize your code: Make sure your code is efficient and well-optimized for your chosen LLM framework.

- Experiment with different configurations: Try experimenting with different batch sizes, sequence lengths, and other parameters to find the optimal configuration for your specific use case.
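
When experimenting with configurations, it helps to measure each one the same way. The following is a minimal timing harness, not the method used for the benchmark above. `fake_generate` is a hypothetical stand-in that sleeps instead of running a model; in practice you would replace it with a function that streams tokens from your chosen LLM framework.

```python
import time
from typing import Callable, Iterable


def measure_tokens_per_second(
    generate: Callable[[int], Iterable[object]], n_tokens: int
) -> float:
    """Time one run of generate(n_tokens) and return tokens per second."""
    start = time.perf_counter()
    produced = sum(1 for _ in generate(n_tokens))  # consume the token stream
    elapsed = time.perf_counter() - start
    return produced / elapsed


def fake_generate(n_tokens: int):
    """Hypothetical stand-in for a model: yields a dummy token about every 1 ms."""
    for i in range(n_tokens):
        time.sleep(0.001)
        yield i


if __name__ == "__main__":
    # Sweep a setting (here, the output length) and compare the measured rates.
    for n in (32, 64, 128):
        rate = measure_tokens_per_second(fake_generate, n)
        print(f"{n:>4} tokens: {rate:.1f} tokens/s")
```

Repeating each measurement several times and discarding the first run, which often includes one-time setup costs, gives more stable numbers.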

Keywords

LLM, Large Language Model, NVIDIA, GeForce RTX 3070 8GB, Token Generation Speed, Token Processing, Quantization, F16 Precision, Llama 3, Llama 3 8B, GPU, Graphics Card, Performance Benchmark, Inference, Text Generation, AI, Deep Learning, Natural Language Processing, NLP