Running LLMs on a MacBook: Apple M3 Pro Performance Analysis

Introduction

The world of Large Language Models (LLMs) is exploding, and with it, the demand for devices capable of running these powerful AI models. This is where the Apple M3 Pro shines. This powerful chip, built for performance and efficiency, is becoming a favorite among developers and AI enthusiasts for running LLMs locally.

In this deep dive, we'll explore the performance of the Apple M3 Pro when running Llama 2 7B models, looking at two numbers: how fast it processes a prompt and how fast it generates new tokens. We'll cover three weight formats (F16, Q8_0, and Q4_0) and what the results mean for your own LLM projects.

Apple M3 Pro: A Powerhouse for LLMs

The Apple M3 Pro, a cornerstone of the latest MacBook Pro models, blends raw processing power with energy efficiency. It comes with either a 14-core or an 18-core GPU, paired with 150 GB/s of memory bandwidth. Both configurations appear in the benchmarks below, and both are fast enough for developers to experiment with LLMs locally.

Quantization: Making LLMs More Efficient

For those new to the world of LLMs, quantization is a technique used to make models more compact and efficient. Imagine a model as a massive library of books, each representing a piece of knowledge. Quantization is like summarizing these books with shorter, digestible versions, making them easier to access and process. This leads to faster computation and reduced memory usage, making LLMs more accessible for devices with limited resources.
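
To make the idea concrete, here is a minimal sketch of quantizing one block of weights to signed 8-bit integers with a single shared scale, which is the general scheme behind formats such as Q8_0. It is a simplified illustration, not llama.cpp's actual implementation: the function names, the block size of 32, and the random weights are all assumptions made for this example.

```python
import random

BLOCK_SIZE = 32  # number of weights sharing one scale (assumption for this sketch)

def quantize_block(weights):
    """Map floats to signed 8-bit integers plus one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quants = [round(w / scale) for w in weights]
    return scale, quants

def dequantize_block(scale, quants):
    """Recover approximate floats from the integers and the scale."""
    return [q * scale for q in quants]

random.seed(0)  # fixed seed so the example is repeatable
block = [random.uniform(-1.0, 1.0) for _ in range(BLOCK_SIZE)]
scale, quants = quantize_block(block)
restored = dequantize_block(scale, quants)
max_error = max(abs(a - b) for a, b in zip(block, restored))
print(f"scale={scale:.5f}, max round-trip error={max_error:.5f}")
```

Each weight now needs one byte instead of two or four, and the round-trip error stays within half of the scale, which is the accuracy cost paid for the smaller size.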

Let's break down our performance analysis with specific quantization levels:

Apple M3 Pro: Llama 2 7B F16 Performance

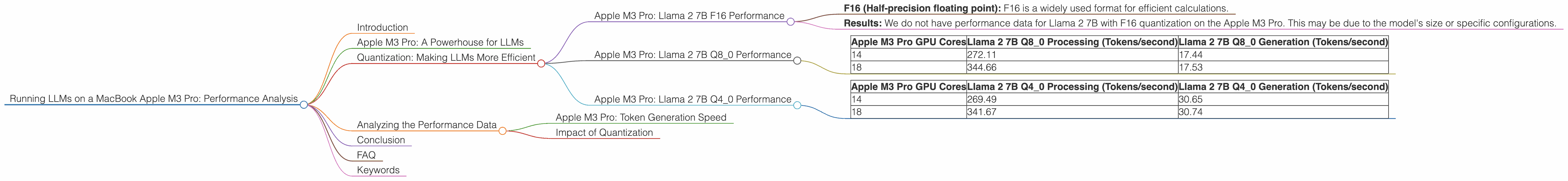

- F16 (Half-precision floating point): F16 stores each weight as a 16-bit floating-point number. It is the unquantized baseline that the formats below are compared against.

- Results: We do not have performance data for Llama 2 7B in F16 on the Apple M3 Pro. This may be due to the model's size or specific configurations.

Apple M3 Pro: Llama 2 7B Q8_0 Performance

- Q8_0 (Quantized 8-bit integers): This format stores weights as 8-bit integers, roughly halving the memory footprint compared to F16. A smaller model means less data to read from memory for each generated token.

- Results:

| Apple M3 Pro GPU Cores | Llama 2 7B Q8_0 Processing (Tokens/second) | Llama 2 7B Q8_0 Generation (Tokens/second) |
|---|---|---|
| 14 | 272.11 | 17.44 |
| 18 | 344.66 | 17.53 |

Apple M3 Pro: Llama 2 7B Q4_0 Performance

- Q4_0 (Quantized 4-bit integers): This format pushes efficiency further, sacrificing some accuracy for faster token generation. The Q4_0 representation roughly halves the memory usage again compared to Q8_0.

- Results:

| Apple M3 Pro GPU Cores | Llama 2 7B Q4_0 Processing (Tokens/second) | Llama 2 7B Q4_0 Generation (Tokens/second) |
|---|---|---|
| 14 | 269.49 | 30.65 |
| 18 | 341.67 | 30.74 |

Analyzing the Performance Data

Apple M3 Pro: Token Generation Speed

The benchmark numbers show that GPU core count affects the two phases very differently. Prompt processing scales with cores: moving from 14 to 18 cores raises it from about 270 to about 343 tokens per second, a gain of roughly 27% for both Q8_0 and Q4_0. Token generation barely moves: 17.44 versus 17.53 tokens per second for Q8_0, and 30.65 versus 30.74 for Q4_0. If generation speed is what matters to you, the extra GPU cores make almost no difference.
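
The effect of GPU core count can be checked directly from the tables above. The short script below copies the four rows into a dictionary and computes the percentage change between the 14-core and 18-core configurations; the `results` layout and the `percent_gain` helper are just names chosen for this example.

```python
# Tokens/second copied from the tables above: (format, GPU cores) -> rates
results = {
    ("Q8_0", 14): {"processing": 272.11, "generation": 17.44},
    ("Q8_0", 18): {"processing": 344.66, "generation": 17.53},
    ("Q4_0", 14): {"processing": 269.49, "generation": 30.65},
    ("Q4_0", 18): {"processing": 341.67, "generation": 30.74},
}

def percent_gain(quant, metric):
    """Percentage change when going from 14 to 18 GPU cores."""
    base = results[(quant, 14)][metric]
    more = results[(quant, 18)][metric]
    return (more - base) / base * 100

for quant in ("Q8_0", "Q4_0"):
    for metric in ("processing", "generation"):
        print(f"{quant} {metric}: {percent_gain(quant, metric):+.1f}%")
```

Prompt processing gains roughly 27% from the four extra cores in both formats, while generation changes by less than 1%.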

Impact of Quantization

Quantization plays a pivotal role in the performance seen on the Apple M3 Pro. Q8_0 and Q4_0 both shrink the model substantially while maintaining a reasonable level of accuracy. In these benchmarks, the choice of format mainly affects generation: Q4_0 generates about 75% faster than Q8_0 (roughly 30.7 versus 17.5 tokens per second), while prompt processing is nearly identical between the two. Q8_0 is a good balance between accuracy and speed, while Q4_0 prioritizes generation speed and memory savings but might have some accuracy compromises.
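
A back-of-the-envelope estimate helps explain why the smaller format generates faster. The sketch below rests on several assumptions that are not part of the benchmark data: a parameter count of about 6.74 billion for Llama 2 7B, quantized formats that store one 16-bit scale per block of 32 weights (giving 8.5 and 4.5 bits per weight), and the simplification that every generated token requires reading all weights from memory once. The 150 GB/s bandwidth figure is the one quoted earlier in this article.

```python
PARAMS = 6.74e9        # approximate Llama 2 7B parameter count (assumption)
BANDWIDTH_GB_S = 150   # M3 Pro memory bandwidth quoted earlier

# Bits per weight; the quantized figures assume 32-weight blocks
# that each store one 16-bit scale alongside the integers.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}

def model_size_gb(fmt):
    """Approximate weight storage in gigabytes (10^9 bytes)."""
    return PARAMS * BITS_PER_WEIGHT[fmt] / 8 / 1e9

def generation_ceiling(fmt):
    """Rough upper bound on tokens/second if each token reads all weights once."""
    return BANDWIDTH_GB_S / model_size_gb(fmt)

for fmt in BITS_PER_WEIGHT:
    size = model_size_gb(fmt)
    ceiling = generation_ceiling(fmt)
    print(f"{fmt}: ~{size:.1f} GB, ceiling ~{ceiling:.0f} tokens/s")
```

Under these assumptions, the estimated ceilings sit somewhat above the measured generation rates for Q8_0 and Q4_0, which is consistent with generation being limited mainly by memory bandwidth rather than by GPU core count. Treat this as a rough model, not a measurement.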

Conclusion

The Apple M3 Pro is a true powerhouse for running LLMs locally. With Llama 2 7B, it generates roughly 17.5 tokens per second with Q8_0 and roughly 30.7 with Q4_0, and it processes prompts at about 270 to 345 tokens per second depending on GPU core count. That allows developers and enthusiasts to work with powerful LLMs on a device that's efficient and portable.

FAQ

Q: What is an LLM?

A: An LLM, or Large Language Model, is a type of artificial intelligence trained on massive amounts of text data. It can interpret, generate, and interact with human language in a way that often resembles how humans do.

Q: What is the difference between processing and generation in LLMs?

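A: Processing (often called prompt processing) is the phase where the model reads your entire prompt before producing anything. The prompt's tokens can be handled in parallel, which is why processing rates are much higher than generation rates. Generation is the phase where the model produces its output one token at a time, with each new token depending on the ones before it.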
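
Because the benchmark tables report a separate throughput for each phase, a rough response time can be estimated by dividing the prompt length by the processing rate and the output length by the generation rate. The sketch below does this for the 14-core Q4_0 numbers; the `estimate_seconds` helper and the 512-token prompt with a 128-token reply are hypothetical values chosen for illustration, and real timings will vary.

```python
def estimate_seconds(prompt_tokens, output_tokens, processing_rate, generation_rate):
    """Estimate time per phase from the two throughput figures (tokens/second)."""
    prompt_time = prompt_tokens / processing_rate
    output_time = output_tokens / generation_rate
    return prompt_time, output_time

# Q4_0 rates for the 14-core M3 Pro, taken from the table above;
# the 512/128 token counts describe a hypothetical request.
prompt_time, output_time = estimate_seconds(512, 128, 269.49, 30.65)
print(f"prompt: {prompt_time:.1f}s, output: {output_time:.1f}s")
```

Even though the prompt here is four times longer than the reply, generating the reply takes about twice as long as reading the prompt, so generation speed usually dominates how responsive a local model feels.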

Q: Can I run LLMs on different devices?

A: Absolutely! LLMs can be run on various devices, including powerful desktops, servers, and even some mobile devices. The specific performance will vary depending on the device's hardware capabilities.

Q: What is quantization, and why is it important for LLMs?

A: Quantization is a technique used to reduce the size of large language models. It works by converting the numbers that represent the model's knowledge into a simpler, smaller format. This results in lower memory usage and, in many cases, faster token generation on devices with limited resources.

Keywords

LLM, Large Language Model, Apple M3 Pro, MacBook Pro, GPU, Token Generation Speed, Quantization, F16, Q8_0, Q4_0, Performance Analysis, AI, Machine Learning, Deep Learning, Tokenization, Text Generation, Natural Language Processing, NLP