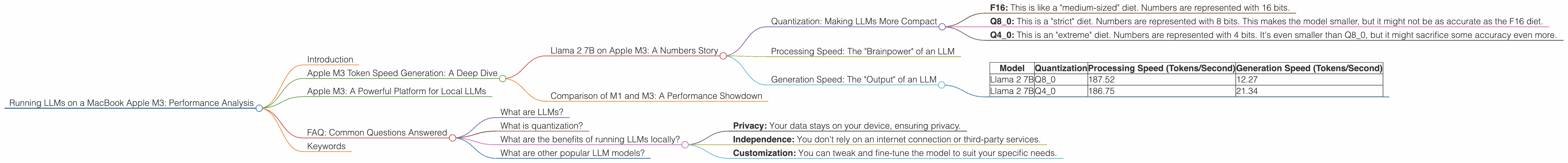

Running LLMs on a MacBook: Apple M3 Performance Analysis

Introduction

The world of Large Language Models (LLMs) is exploding, offering incredible capabilities for text generation, translation, and more. But running these complex models requires powerful hardware. With the release of the Apple M3 chip, many are wondering: can a MacBook handle these heavy-duty tasks?

This article examines how the Apple M3 chip performs when running LLMs locally. We'll analyze token processing and generation speeds across quantization levels, using Llama 2 7B as the test model, to see how the M3 stacks up for local inference. We'll dissect the raw data and explain it so it's easy to follow even if you're not a deep-learning expert.

Apple M3 Token Speeds: A Deep Dive

Llama 2 7B on Apple M3: A Numbers Story

Let's start with the popular Llama 2 7B model. We'll delve into its performance on the M3, analyzing both processing and generation speeds for different quantization levels.

Quantization: Making LLMs More Compact

Quantization is like a diet for LLMs: it shrinks the model by representing its numbers (the weights) with fewer bits. A slimmer model needs less memory and often runs faster.

- F16: A "medium-sized" diet. Numbers are stored as 16-bit floating-point values, half the size of standard 32-bit floats.

- Q8_0: A "strict" diet. Numbers are represented with 8 bits. The model is smaller than with F16, but it may be slightly less accurate.

- Q4_0: An "extreme" diet. Numbers are represented with 4 bits. The model is even smaller than with Q8_0, but it may lose more accuracy.
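To see what the diet actually does to the numbers, here is a simplified Python sketch of block-wise quantization using NumPy. It is an illustration, not llama.cpp's exact algorithm: like the Q8_0 and Q4_0 formats, it groups weights into blocks of 32 and keeps one scale per block, but the real formats differ in details such as how scales are stored and how 4-bit values are packed. The random "weights" are made up for the demo.

```python
import numpy as np

BLOCK_SIZE = 32  # Q8_0 and Q4_0 both quantize weights in blocks of 32

def quantize_blocks(weights, bits):
    """Simplified symmetric quantization: one scale per block of 32 weights."""
    qmax = 2 ** (bits - 1) - 1  # 127 for 8-bit, 7 for 4-bit
    blocks = weights.reshape(-1, BLOCK_SIZE)
    # Scale each block so its largest-magnitude weight maps to +/- qmax.
    scales = np.abs(blocks).max(axis=1, keepdims=True) / qmax
    scales[scales == 0] = 1.0  # avoid dividing by zero for all-zero blocks
    q = np.clip(np.round(blocks / scales), -qmax, qmax).astype(np.int8)
    return q, scales

def dequantize_blocks(q, scales):
    """Recover approximate float weights from integers and per-block scales."""
    return (q.astype(np.float32) * scales).reshape(-1)

rng = np.random.default_rng(0)
w = rng.normal(0.0, 0.02, size=4096).astype(np.float32)  # fake weights
for bits in (8, 4):
    q, s = quantize_blocks(w, bits)
    err = np.abs(dequantize_blocks(q, s) - w).mean()
    print(f"{bits}-bit mean absolute error: {err:.6f}")
```

The 4-bit version has only 15 levels per block instead of 255, so its rounding error is much larger: that is the accuracy cost the "extreme" diet trades for size.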

Processing Speed: The "Brainpower" of an LLM

Processing speed (often called prompt processing) measures how fast the model reads and processes the input text, or prompt. It's like the "brainpower" of an LLM: the more tokens per second, the sooner the model is ready to start answering.

Generation Speed: The "Output" of an LLM

Generation speed measures how fast the model produces new text, one token at a time. It's the "output" of an LLM: the more tokens per second, the faster the response appears.
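To make the two metrics concrete, here is a minimal Python sketch of how they might be measured separately. `process_prompt`, `generate_next`, and the dummy model are hypothetical placeholders, not a real library's API; a real benchmark would run an actual model and average several runs.

```python
import time

def tokens_per_second(n_tokens, seconds):
    """Throughput in tokens per second."""
    return n_tokens / seconds

def benchmark(process_prompt, generate_next, prompt_tokens, n_generate):
    """Time prompt processing and generation separately.

    process_prompt and generate_next are hypothetical stand-ins for a model:
    the first consumes the whole prompt in one call, the second produces a
    single new token per call.
    """
    start = time.perf_counter()
    state = process_prompt(prompt_tokens)  # whole prompt in one batch
    pp_seconds = time.perf_counter() - start

    start = time.perf_counter()
    for _ in range(n_generate):  # one token per step, strictly in sequence
        state = generate_next(state)
    tg_seconds = time.perf_counter() - start

    return (tokens_per_second(len(prompt_tokens), pp_seconds),
            tokens_per_second(n_generate, tg_seconds))

# Dummy "model": the sleeps stand in for real computation.
def fake_process(tokens):
    time.sleep(0.01)
    return len(tokens)

def fake_next(state):
    time.sleep(0.001)
    return state + 1

pp, tg = benchmark(fake_process, fake_next, list(range(512)), 32)
print(f"processing: {pp:.0f} tokens/s, generation: {tg:.0f} tokens/s")
```

The split mirrors why the two numbers differ so much in practice: the prompt is available all at once, while each generated token has to wait for the one before it.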

| Model | Quantization | Processing Speed (tokens/second) | Generation Speed (tokens/second) |
|---|---|---|---|
| Llama 2 7B | Q8_0 | 187.52 | 12.27 |
| Llama 2 7B | Q4_0 | 186.75 | 21.34 |
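To turn the two speeds into something tangible, here is a back-of-the-envelope Python estimate of how long a single request would take, using the figures from the table above. The 512-token prompt and 256-token reply are hypothetical, and the estimate assumes speeds stay constant as the context grows (in practice they tend to drop somewhat) and ignores model loading time.

```python
# Throughput figures from the table above (tokens/second on the Apple M3).
RESULTS = {
    "Q8_0": {"processing": 187.52, "generation": 12.27},
    "Q4_0": {"processing": 186.75, "generation": 21.34},
}

def estimate_seconds(quant, prompt_tokens, output_tokens):
    """Time to read the prompt plus time to generate the reply."""
    r = RESULTS[quant]
    return prompt_tokens / r["processing"] + output_tokens / r["generation"]

for quant in RESULTS:
    print(f"{quant}: {estimate_seconds(quant, 512, 256):.1f} s")
# Q8_0: 23.6 s
# Q4_0: 14.7 s
```

Both variants read the prompt in under three seconds, so the reply dominates the total, and Q4_0's faster generation cuts the wait by more than a third.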

Observations:

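- Q8_0 vs Q4_0: Both variants process prompts at nearly the same speed (187.52 vs 186.75 tokens/second). However, Q4_0 generates text about 1.7 times faster (21.34 vs 12.27 tokens/second), likely because producing each token requires reading the model's weights from memory, and Q4_0's weights are roughly half the size.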
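The smaller-size explanation can be sanity-checked with the table's own numbers, treated here as a hypothesis rather than a measured fact: if generation is limited by how fast weights stream from memory, its speedup should approach the ratio of weight sizes, while compute-heavy prompt processing should barely change. The sketch assumes the effective sizes of the GGML Q8_0 and Q4_0 formats, where each block of 32 weights also carries a 16-bit scale.

```python
# Effective bits per weight, including the per-block 16-bit scale
# (assumed GGML layouts: 32 quantized values + one scale per block).
BITS_PER_WEIGHT = {"Q8_0": (32 * 8 + 16) / 32, "Q4_0": (32 * 4 + 16) / 32}

GENERATION_TPS = {"Q8_0": 12.27, "Q4_0": 21.34}   # from the table
PROCESSING_TPS = {"Q8_0": 187.52, "Q4_0": 186.75}  # from the table

size_ratio = BITS_PER_WEIGHT["Q8_0"] / BITS_PER_WEIGHT["Q4_0"]
gen_speedup = GENERATION_TPS["Q4_0"] / GENERATION_TPS["Q8_0"]
pp_speedup = PROCESSING_TPS["Q4_0"] / PROCESSING_TPS["Q8_0"]

print(f"Weight size ratio (Q8_0 / Q4_0): {size_ratio:.2f}")   # 1.89
print(f"Generation speedup (Q4_0 vs Q8_0): {gen_speedup:.2f}")  # 1.74
print(f"Processing speedup (Q4_0 vs Q8_0): {pp_speedup:.2f}")   # 1.00
```

The 1.74x generation speedup sits a little below the 1.89x size ratio, which is consistent with the hypothesis if some per-token work does not shrink along with the weights.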

- F16: Unfortunately, we don't have data for the F16 quantization level for the Llama 2 7B model on the M3.

Comparison of M1 and M3: A Performance Showdown

Let's compare the performance of the M3 with its predecessor, the Apple M1. Unfortunately, M1 data for Llama 2 7B is not available, so a direct comparison for this model isn't possible.

Conclusion: Without M1 figures, we can't say how much the M3 improves on its predecessor for this configuration. On its own, though, the M3 posts solid Q8_0 and Q4_0 results: about 187 tokens/second for processing and 12 to 21 tokens/second for generation.

Apple M3: A Powerful Platform for Local LLMs

The Apple M3 chip delivers strong performance for running LLMs locally. While we only have data for the Llama 2 7B model on the M3, it demonstrates that the chip can handle these computationally intensive tasks effectively.

The M3's ability to process and generate tokens at impressive speeds opens up exciting possibilities for developers and users who want to run LLMs directly on their Macs, whether for research, development, or personal use.

FAQ: Common Questions Answered

What are LLMs?

LLMs are Large Language Models, a type of artificial intelligence (AI) that excels at understanding and generating human-like text. Think of them as super smart robots that can write essays, translate languages, and even create poems.

What is quantization?

Quantization is a technique used to reduce the size of an LLM by representing numbers with fewer bits. The result is a model that is smaller, often faster, and needs less memory. It's like compressing a file or a picture to make it take up less space.
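As a rough illustration of the memory savings, here is a Python sketch estimating how much space the weights of a 7B model take at each level. The parameter count is a round 7 billion (an assumption; Llama 2 7B's exact count differs slightly), the Q8_0 and Q4_0 sizes include the per-block 16-bit scale used by the GGML formats of those names, and non-weight memory such as the context cache is ignored.

```python
PARAMS = 7e9  # assumed round parameter count for a "7B" model

# Effective bits per weight. Q8_0 and Q4_0 add one 16-bit scale per block
# of 32 weights on top of the 8-bit or 4-bit values.
BITS_PER_WEIGHT = {
    "F16": 16.0,
    "Q8_0": (32 * 8 + 16) / 32,  # 8.5
    "Q4_0": (32 * 4 + 16) / 32,  # 4.5
}

def weight_gigabytes(params, bits_per_weight):
    """Approximate size of the weights in gigabytes (10**9 bytes)."""
    return params * bits_per_weight / 8 / 1e9

for name, bpw in BITS_PER_WEIGHT.items():
    print(f"{name}: {weight_gigabytes(PARAMS, bpw):.1f} GB")
# F16: 14.0 GB
# Q8_0: 7.4 GB
# Q4_0: 3.9 GB
```

Going from F16 to Q4_0 shrinks the weights to under a third of their original size, which is what makes a 7B model comfortable on a laptop with limited unified memory.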

What are the benefits of running LLMs locally?

- Privacy: Your data stays on your device, ensuring privacy.

- Independence: You don't rely on an internet connection or third-party services.

- Customization: You can tweak and fine-tune the model to suit your specific needs.

What are some other popular LLMs?

Besides Llama 2, other popular open-source LLMs include StableLM, GPT-Neo, and GPT-J.

Keywords

MacBook, M3, LLM, Large Language Model, Llama 2, 7B, Quantization, Token, Processing Speed, Generation Speed, Apple, AI