Running LLMs on a MacBook: Apple M3 Max Performance Analysis

Introduction

The world of Large Language Models (LLMs) is exploding, and with it, the demand for hardware capable of running these complex models efficiently. Apple's M3 Max chip, with its powerful GPU and impressive memory bandwidth, is a compelling contender for local LLM development and experimentation. This article dives deep into the performance of the Apple M3 Max, analyzing its capabilities in running various Llama models with different quantization levels and exploring the impact on token generation speed.

Think of it like this: imagine asking an AI model to write poetry on your own laptop. The faster the chip can read the model's weights and crunch the numbers, the faster each word of the poem appears on screen. That speed is what this article measures.

Apple M3 Max: A Hardware Powerhouse for LLMs

The M3 Max, the top chip in Apple's M3 lineup, screams performance. In its highest configuration, it offers a GPU with up to 40 cores and up to 400 GB/s of memory bandwidth. This means it can handle complex computations and move data around at blistering speeds, making it a formidable platform for running intensive tasks like LLM inference.

Llama Models: A Playground for Experimentation

Llama 2 and Llama 3 are two popular, openly available LLM families from Meta, known for their impressive capabilities. They come in different model sizes, from the lightweight 7B and 8B (billion parameters) to the behemoth 70B. These models can be fine-tuned for various tasks, such as text generation, translation, and question answering.

Quantization: Making LLMs Smaller and Faster

Quantization is a technique that shrinks LLMs without compromising too much on accuracy. It involves converting the model's weights (the numbers that determine the model's behavior) from 16-bit or 32-bit floating-point numbers to lower-precision data types such as 8-bit or 4-bit integers. In the benchmarks in this article, F16 is the unquantized 16-bit baseline, Q8_0 is an 8-bit format, and Q4_0 and Q4_K_M are 4-bit formats.

It's like compressing a photo to reduce file size. While the compressed photo might not be as high quality as the original, it's still good enough for many purposes, and it takes up significantly less space. Similarly, quantized LLMs are smaller and faster to load and run, making them ideal for use on devices with limited resources like your MacBook.
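To make the idea concrete, here is a minimal sketch of block-wise 8-bit quantization in plain Python. It is a simplified illustration, not the code any particular inference library uses: the block size of 32 and the function names (`quantize_block`, `dequantize_block`, `quantize`, `dequantize`) are assumptions made for this example. Each block of weights is stored as small integer codes plus a single scale factor, and the original values are approximately recovered by multiplying the codes by the scale.

```python
import random

# Simplified block-wise 8-bit quantization (illustrative sketch only).
BLOCK_SIZE = 32  # assumed block size for this example


def quantize_block(values):
    """Map a block of floats to integer codes in [-127, 127] plus one scale."""
    amax = max(abs(v) for v in values)
    scale = amax / 127.0 if amax > 0 else 1.0
    codes = [max(-127, min(127, round(v / scale))) for v in values]
    return scale, codes


def dequantize_block(scale, codes):
    """Recover approximate floats from the integer codes and the scale."""
    return [scale * c for c in codes]


def quantize(weights):
    """Quantize a flat list of weights one block at a time."""
    return [
        quantize_block(weights[i:i + BLOCK_SIZE])
        for i in range(0, len(weights), BLOCK_SIZE)
    ]


def dequantize(blocks):
    """Concatenate the dequantized blocks back into one flat list."""
    restored = []
    for scale, codes in blocks:
        restored.extend(dequantize_block(scale, codes))
    return restored


if __name__ == "__main__":
    random.seed(0)
    weights = [random.uniform(-1.0, 1.0) for _ in range(64)]
    restored = dequantize(quantize(weights))
    max_err = max(abs(a - b) for a, b in zip(weights, restored))
    print(f"max round-trip error: {max_err:.5f}")
```

The round-trip error per weight is bounded by about half the block's scale, which is why the quality loss stays small while the storage cost drops from 16 or 32 bits per weight to roughly 8.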

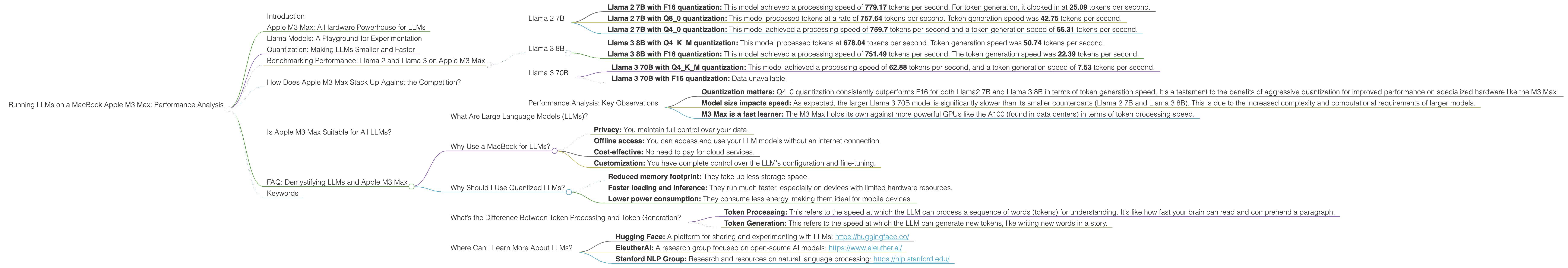

Benchmarking Performance: Llama 2 and Llama 3 on Apple M3 Max

Here's a breakdown of the M3 Max’s performance when running Llama 2 and Llama 3 models:

Llama 2 7B

- Llama 2 7B with F16 (16-bit floating point, unquantized): This model achieved a processing speed of 779.17 tokens per second. For token generation, it clocked in at 25.09 tokens per second.

- Llama 2 7B with Q8_0 quantization: This model processed tokens at a rate of 757.64 tokens per second. Token generation speed was 42.75 tokens per second.

- Llama 2 7B with Q4_0 quantization: This model achieved a processing speed of 759.7 tokens per second and a token generation speed of 66.31 tokens per second.

Llama 3 8B

- Llama 3 8B with Q4_K_M quantization: This model processed tokens at 678.04 tokens per second. Token generation speed was 50.74 tokens per second.

- Llama 3 8B with F16 (unquantized): This model achieved a processing speed of 751.49 tokens per second. The token generation speed was 22.39 tokens per second.

Llama 3 70B

- Llama 3 70B with Q4_K_M quantization: This model achieved a processing speed of 62.88 tokens per second and a token generation speed of 7.53 tokens per second.

- Llama 3 70B with F16 (unquantized): Data unavailable.
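For easier comparison, the figures above can be collected in one place and the generation speedup over the F16 baseline computed per model. The snippet below is a small sketch that does this. The numbers are copied from the lists above, while the `BENCHMARKS` layout and the `generation_speedup` helper are names invented for this example.

```python
# Benchmark figures copied from the lists above, in tokens per second:
# (model, format) -> (token processing speed, token generation speed)
BENCHMARKS = {
    ("Llama 2 7B", "F16"): (779.17, 25.09),
    ("Llama 2 7B", "Q8_0"): (757.64, 42.75),
    ("Llama 2 7B", "Q4_0"): (759.7, 66.31),
    ("Llama 3 8B", "F16"): (751.49, 22.39),
    ("Llama 3 8B", "Q4_K_M"): (678.04, 50.74),
    ("Llama 3 70B", "Q4_K_M"): (62.88, 7.53),
}


def generation_speedup(model, fmt, baseline="F16"):
    """Generation speed of `fmt` relative to `baseline`; None if no baseline."""
    base = BENCHMARKS.get((model, baseline))
    if base is None:
        return None
    return BENCHMARKS[(model, fmt)][1] / base[1]


print(f"{'model':<12} {'format':<7} {'process':>8} {'generate':>9} {'vs F16':>7}")
for (model, fmt), (process_tps, generate_tps) in BENCHMARKS.items():
    ratio = generation_speedup(model, fmt)
    ratio_text = f"{ratio:.2f}x" if ratio is not None else "n/a"
    print(f"{model:<12} {fmt:<7} {process_tps:>8.2f} {generate_tps:>9.2f} {ratio_text:>7}")
```

With these figures, Llama 2 7B generates about 1.70x faster at Q8_0 and about 2.64x faster at Q4_0 than at F16, and Llama 3 8B generates about 2.27x faster at Q4_K_M. No ratio is available for Llama 3 70B because its F16 baseline is missing.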

Performance Analysis: Key Observations

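- Quantization matters: 4-bit quantization (Q4_0 for Llama 2 7B, Q4_K_M for Llama 3 8B) clearly outperforms F16 in token generation speed: 66.31 vs. 25.09 tokens per second and 50.74 vs. 22.39 tokens per second, respectively. Token processing speed, by contrast, stays roughly flat or dips slightly. Generating each new token requires reading the model's weights from memory, so smaller weights translate directly into faster output on a bandwidth-limited chip like the M3 Max.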

- Model size impacts speed: As expected, the larger Llama 3 70B model is significantly slower than its smaller counterparts (Llama 2 7B and Llama 3 8B). At 4-bit quantization, it generates 7.53 tokens per second compared with 50.74 for Llama 3 8B, because every token requires reading and computing over far more weights.

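- M3 Max is a capable performer: For a laptop chip, processing 7B and 8B models at roughly 680 to 780 tokens per second is strong. This article includes no measurements for data-center GPUs like the A100, so any comparison with them should be read as qualitative rather than as a benchmark result.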
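The link between memory bandwidth and generation speed can be checked with a back-of-the-envelope estimate. If every weight has to be read from memory once per generated token, then bandwidth divided by the size of the weights gives a rough ceiling on tokens per second. The sketch below applies this to Llama 2 7B. It rests on several assumptions: a nominal 7 billion parameters, the 400 GB/s peak bandwidth quoted earlier, and approximate bits-per-weight values (16 for F16, about 8.5 for Q8_0, about 4.5 for Q4_0, which include per-block scale overhead). It ignores the KV cache, activations, and compute time, so it is an upper bound and not a prediction.

```python
BANDWIDTH_GB_S = 400.0  # peak memory bandwidth quoted in this article
PARAMS = 7e9            # nominal parameter count (assumption)

# Approximate storage cost per weight in bits (assumptions for this sketch).
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}

# Measured Llama 2 7B generation speeds from the benchmark list (tokens/s).
MEASURED_TPS = {"F16": 25.09, "Q8_0": 42.75, "Q4_0": 66.31}


def weights_gb(params, bits_per_weight):
    """Approximate size of the model weights in gigabytes."""
    return params * bits_per_weight / 8 / 1e9


def generation_ceiling(params, bits_per_weight, bandwidth_gb_s=BANDWIDTH_GB_S):
    """Upper bound on tokens/s if all weights are read once per token."""
    return bandwidth_gb_s / weights_gb(params, bits_per_weight)


for fmt, bits in BITS_PER_WEIGHT.items():
    ceiling = generation_ceiling(PARAMS, bits)
    measured = MEASURED_TPS[fmt]
    print(
        f"{fmt:<5} weights ~{weights_gb(PARAMS, bits):5.2f} GB  "
        f"ceiling ~{ceiling:6.1f} tok/s  measured {measured:5.2f} tok/s  "
        f"({measured / ceiling:.0%} of ceiling)"
    )
```

Under these assumptions the F16 ceiling is about 28.6 tokens per second against a measured 25.09, which is consistent with generation being limited mainly by memory bandwidth. The quantized formats land further below their ceilings, which suggests that other costs start to matter more as the weights shrink.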

How Does Apple M3 Max Stack Up Against the Competition?

Although we're focusing on the M3 Max, it's worth noting that it's not the only chip in the LLM game. Discrete GPUs like the NVIDIA RTX 4090 are a common alternative. This article doesn't include RTX 4090 measurements, but a card like that can be expected to offer higher processing speeds for models that fit within its 24 GB of VRAM. The M3 Max counters with a large pool of unified memory shared between the CPU and GPU, and it delivers impressive performance for its size and power consumption.

Is Apple M3 Max Suitable for All LLMs?

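The M3 Max is a powerful chip, but it's not a magic bullet. Running the largest LLMs (models beyond 70B, or a 70B model at full F16 precision) might still be a challenge due to memory limitations. The F16 weights of a 70B model alone need roughly 140 GB (70 billion parameters at 2 bytes each), which is more than the 128 GB of unified memory in the largest M3 Max configuration. But for most models in the 7B to 70B range, especially when quantized, the M3 Max provides a robust platform for local experimentation and development.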
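A quick way to judge whether a model is likely to fit is to estimate the size of its weights and compare it with the memory you can realistically give to the model. The sketch below does that. The bits-per-weight values are approximations (the Q4_K_M figure in particular is a rough average), and the `usable_fraction` parameter is a hypothetical allowance for the operating system, the KV cache, and other applications, not a documented limit.

```python
# Approximate storage cost per weight in bits (assumptions for this sketch).
APPROX_BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_K_M": 4.85}


def weights_gb(params_billion, fmt):
    """Approximate size of the weights in GB for a model of the given size."""
    return params_billion * APPROX_BITS_PER_WEIGHT[fmt] / 8


def fits(params_billion, fmt, memory_gb, usable_fraction=0.75):
    """Rough check: do the weights fit in the usable share of memory?

    usable_fraction is an illustrative guess, not a measured value.
    """
    return weights_gb(params_billion, fmt) <= memory_gb * usable_fraction


if __name__ == "__main__":
    memory_gb = 128  # largest M3 Max configuration; adjust for your machine
    for params_billion, fmt in [(8, "F16"), (70, "Q4_K_M"), (70, "F16")]:
        size = weights_gb(params_billion, fmt)
        verdict = "fits" if fits(params_billion, fmt, memory_gb) else "does not fit"
        print(f"{params_billion}B {fmt:<7} ~{size:6.1f} GB -> {verdict} in {memory_gb} GB")
```

Under these assumptions, a 70B model at Q4_K_M needs a little over 42 GB for its weights and fits comfortably in a 128 GB machine, while the same model at F16 needs about 140 GB and does not.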

FAQ: Demystifying LLMs and Apple M3 Max

What Are Large Language Models (LLMs)?

LLMs are a type of artificial intelligence model trained on massive datasets of text and code. They can understand and generate human-like text, translate languages, write different kinds of creative content, and answer your questions in an informative way. Think of them as the brainiacs of the AI world, capable of learning and performing complex tasks.

Why Use a MacBook for LLMs?

While cloud-based platforms can be convenient, using your MacBook offers several advantages:

- Privacy: You maintain full control over your data.

- Offline access: You can access and use your LLM models without an internet connection.

- Cost-effective: No need to pay for cloud services.

- Customization: You have complete control over the LLM's configuration and fine-tuning.

Why Should I Use Quantized LLMs?

Quantized LLMs offer several benefits:

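- Reduced memory footprint: They take up less memory and less disk space.

- Faster loading and generation: They load faster and generate tokens considerably faster, especially on devices with limited memory bandwidth. In the benchmarks above, token processing speed changes very little.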

- Lower power consumption: They consume less energy, making them ideal for mobile devices.

What’s the Difference Between Token Processing and Token Generation?

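- Token Processing: This refers to the speed at which the LLM can read the input prompt, which is a sequence of tokens (words or pieces of words). It's like how fast your brain can read and comprehend a paragraph. Because the whole prompt is available up front, its tokens can be processed in parallel, which is why these numbers are so much higher.

- Token Generation: This refers to the speed at which the LLM can generate new tokens one at a time, like writing new words in a story. This is the number that determines how fast a response appears.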
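Together, the two speeds give a rough estimate of how long a response takes: the time to process the prompt plus the time to generate the answer. The sketch below applies this to two of the benchmark results above. The `response_time_s` helper and the example prompt and answer lengths (1,000 and 200 tokens) are assumptions chosen for illustration, and the estimate ignores model loading and other overhead.

```python
def response_time_s(prompt_tokens, new_tokens, processing_tps, generation_tps):
    """Estimated seconds to process a prompt and then generate a reply."""
    return prompt_tokens / processing_tps + new_tokens / generation_tps


# Speeds in tokens per second, taken from the benchmark lists in this article.
EXAMPLES = {
    "Llama 2 7B Q4_0": (759.7, 66.31),
    "Llama 3 70B Q4_K_M": (62.88, 7.53),
}

PROMPT_TOKENS = 1000  # example prompt length (assumption)
NEW_TOKENS = 200      # example answer length (assumption)

for name, (processing_tps, generation_tps) in EXAMPLES.items():
    total = response_time_s(PROMPT_TOKENS, NEW_TOKENS, processing_tps, generation_tps)
    print(f"{name:<20} ~{total:.2f} s for {PROMPT_TOKENS} prompt + {NEW_TOKENS} new tokens")
```

For this example workload, the 7B model finishes in about 4.3 seconds and the 70B model in about 42.5 seconds. In both cases generation accounts for most of the time even though the answer is much shorter than the prompt.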

Where Can I Learn More About LLMs?

- Hugging Face: A platform for sharing and experimenting with LLMs: https://huggingface.co/

- EleutherAI: A research group focused on open-source AI models: https://www.eleuther.ai/

- Stanford NLP Group: Research and resources on natural language processing: https://nlp.stanford.edu/

Keywords

LLM, Large Language Model, Apple M3 Max, MacBook, Llama 2, Llama 3, Token Processing, Token Generation, Quantization, F16, Q8_0, Q4_0, Q4_K_M, Performance Benchmark, GPU, Memory Bandwidth, GPU Cores, Inference, Local LLMs, Development, AI, Machine Learning, Open Source, Hugging Face, EleutherAI, Stanford NLP Group