Running LLMs on a MacBook: Apple M1 Performance Analysis

Introduction

The world of large language models (LLMs) is exploding, with new models and advancements emerging every day. This has sparked a growing interest in running these complex models locally, opening up opportunities for developers, researchers, and enthusiasts to experiment and personalize LLMs within their own environments. While powerful GPUs are typically the go-to choice for running LLMs, the Apple M1 chip with its impressive integrated GPU has shown surprising potential for local LLM execution.

In this article, we'll look at how the Apple M1 chip performs when running popular LLMs such as Llama 2 and Llama 3. We'll analyze prompt processing and text generation speeds across quantization levels and compare the 7-core and 8-core GPU configurations. Get ready to discover whether your M1 MacBook can handle the computational demands of LLMs, and whether it can even become your own personal language model playground!

Apple M1 Token Generation Speed: A Deep Dive

The Apple M1 chip, with its powerful integrated GPU, has been a surprise contender for running LLMs locally. But how does it actually perform? Let's take a closer look at how different LLMs perform on the M1 at various quantization levels. Quantization is a kind of "data diet" for these large models that can make them run faster without sacrificing too much accuracy.

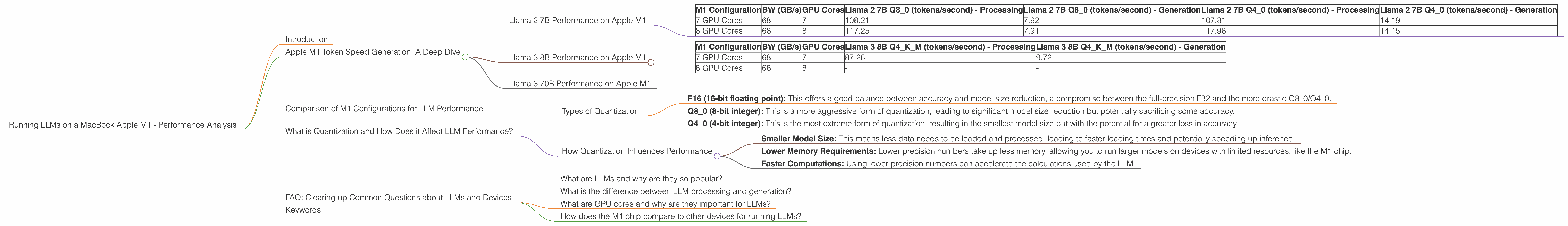

Llama 2 7B Performance on Apple M1

Note: The data for Llama 2 7B F16 (16-bit floating point) on both configurations of the M1 chip is unavailable.

| M1 Configuration | Memory Bandwidth (GB/s) | GPU Cores | Q8_0 Processing (tokens/s) | Q8_0 Generation (tokens/s) | Q4_0 Processing (tokens/s) | Q4_0 Generation (tokens/s) |
|---|---|---|---|---|---|---|
| 7 GPU Cores | 68 | 7 | 108.21 | 7.92 | 107.81 | 14.19 |
| 8 GPU Cores | 68 | 8 | 117.25 | 7.91 | 117.96 | 14.15 |

Analysis:

- Quantization Matters: Both Q8_0 and Q4_0 run Llama 2 7B at usable speeds on the M1. Q4_0 nearly doubles generation speed compared to Q8_0 (14.19 vs. 7.92 tokens/second on the 7-core GPU), while prompt processing speeds are essentially the same for both formats. The more compressed model pays off where it matters most for interactive use.

- Scaling with GPU Cores: The 8-core GPU processes prompts about 8-9% faster than the 7-core version (for example, 117.25 vs. 108.21 tokens/second with Q8_0), but generation speed is unchanged. Both configurations share the same 68 GB/s memory bandwidth, which suggests that generation is limited by how fast the weights can be read from memory rather than by GPU compute.

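- Processing vs. Generation: Prompt processing is roughly 8 to 15 times faster than generation. This does not mean the M1 struggles with producing outputs as such: during processing, the model evaluates all prompt tokens together in large, GPU-friendly batches, whereas during generation it produces one token at a time and must read essentially all of the model's weights from memory for each one.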
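A back-of-the-envelope calculation helps explain the generation numbers: if producing one token requires reading every weight from memory once, generation speed cannot exceed memory bandwidth divided by the model's size. The sketch below is an estimate, not a benchmark. It assumes about 6.74 billion parameters for Llama 2 7B and effective sizes of 8.5 and 4.5 bits per weight for Q8_0 and Q4_0 (including per-block scale factors), and it ignores the KV cache and other overheads.

```python
# Rough ceiling on generation speed for a memory-bandwidth-bound model:
# each new token requires streaming (roughly) all weights from memory once.

BANDWIDTH_GB_S = 68.0       # M1 memory bandwidth, from the table above
LLAMA2_7B_PARAMS = 6.74e9   # assumption: approximate parameter count of Llama 2 7B

# Assumption: effective bits per weight, including per-block scale factors.
BITS_PER_WEIGHT = {"Q8_0": 8.5, "Q4_0": 4.5}

# Measured generation speeds (tokens/s) on the 7-GPU-core M1, from the table above.
MEASURED_TG = {"Q8_0": 7.92, "Q4_0": 14.19}


def weights_gb(params: float, bits_per_weight: float) -> float:
    """Approximate size of the weights in gigabytes (1 GB = 1e9 bytes)."""
    return params * bits_per_weight / 8 / 1e9


def max_tokens_per_second(bandwidth_gb_s: float, model_gb: float) -> float:
    """Upper bound on tokens/s if every token reads each weight exactly once."""
    return bandwidth_gb_s / model_gb


for fmt, bpw in BITS_PER_WEIGHT.items():
    size = weights_gb(LLAMA2_7B_PARAMS, bpw)
    ceiling = max_tokens_per_second(BANDWIDTH_GB_S, size)
    measured = MEASURED_TG[fmt]
    print(f"{fmt}: ~{size:.2f} GB, ceiling ~{ceiling:.1f} tok/s, "
          f"measured {measured} tok/s ({measured / ceiling:.0%} of ceiling)")
```

Under these assumptions the ceilings come out near 9.5 and 17.9 tokens/second, and both measured speeds land at roughly 80% of them. Note that the ceiling depends only on bandwidth and model size, not on the number of GPU cores.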

Llama 3 8B Performance on Apple M1

Note: The data for Llama 3 8B F16 (16-bit floating point) on both configurations of the M1 chip is unavailable.

| M1 Configuration | Memory Bandwidth (GB/s) | GPU Cores | Q4_K_M Processing (tokens/s) | Q4_K_M Generation (tokens/s) |
|---|---|---|---|---|
| 7 GPU Cores | 68 | 7 | 87.26 | 9.72 |
| 8 GPU Cores | 68 | 8 | - | - |

Analysis:

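- Heavier than Llama 2 7B: With Q4_K_M quantization, Llama 3 8B runs at 87.26 tokens/second for processing and 9.72 tokens/second for generation on the 7-core GPU, noticeably slower than Llama 2 7B with Q4_0 (107.81 and 14.19 tokens/second). That is expected: the model has more parameters, and Q4_K_M stores weights with slightly more bits on average than Q4_0, so more data must be read for every generated token. Performance is still decent for experimentation.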

- Missing Data: Unfortunately, data for the 8 GPU core M1 configuration for Llama 3 8B is missing. This makes it difficult to fully assess the scaling potential of the model on the M1 with a higher number of GPU cores.

Llama 3 70B Performance on Apple M1

Note: Data for Llama 3 70B on the M1 chip is unavailable. This is unsurprising: even at roughly 4.5 bits per weight, a 70B model needs around 40 GB just for its weights, far more than the 16 GB maximum unified memory of an M1 MacBook.
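A quick memory estimate shows why model size, rather than speed, is the first obstacle. This is a minimal sketch: the parameter counts are rounded approximations, the bits-per-weight values are assumed effective sizes, and the 4 GB of headroom for macOS, other apps, and the KV cache is an arbitrary illustrative allowance, not a measured figure.

```python
# Hypothetical check: do a model's weights fit in an M1 MacBook's unified memory?

M1_MAX_MEMORY_GB = 16.0  # M1 MacBooks ship with 8 GB or 16 GB of unified memory
HEADROOM_GB = 4.0        # assumption: room left for macOS, other apps, and the KV cache

# Assumption: effective bits per weight for each format (including block scales).
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}

# Assumption: approximate parameter counts, rounded.
MODELS = {"Llama 3 8B": 8.03e9, "Llama 3 70B": 70e9}


def weights_gb(params: float, bits_per_weight: float) -> float:
    """Approximate weight size in gigabytes (1 GB = 1e9 bytes)."""
    return params * bits_per_weight / 8 / 1e9


def fits_in_memory(params: float, bits_per_weight: float,
                   memory_gb: float = M1_MAX_MEMORY_GB,
                   headroom_gb: float = HEADROOM_GB) -> bool:
    """True if the weights plus the assumed headroom fit in memory_gb."""
    return weights_gb(params, bits_per_weight) + headroom_gb <= memory_gb


for name, params in MODELS.items():
    for fmt, bpw in BITS_PER_WEIGHT.items():
        size = weights_gb(params, bpw)
        verdict = "fits" if fits_in_memory(params, bpw) else "does not fit"
        print(f"{name} {fmt}: ~{size:.1f} GB -> {verdict} in {M1_MAX_MEMORY_GB:.0f} GB")
```

With these assumptions, Llama 3 8B fits at Q8_0 and Q4_0 but not at F16, while Llama 3 70B (about 39 GB even at Q4_0) does not fit in any of the three formats.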

Comparison of M1 Configurations for LLM Performance

While we don't have data for every model and configuration, the Llama 2 7B results show that more GPU cores speed up prompt processing but not generation. Processing is compute-bound, so it benefits from extra GPU cores; generation is bound by memory bandwidth, which is 68 GB/s on both configurations.
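The scaling can be quantified directly from the Llama 2 7B table. With perfect scaling, going from 7 to 8 GPU cores would give an 8/7 (about 1.14x) speedup. The sketch below simply divides the measured 8-core speeds by the 7-core speeds; the dictionary layout and the `speedup` helper are illustrative choices.

```python
# Measured Llama 2 7B speeds (tokens/s) from the table above, keyed by GPU core count.
PROCESSING = {"Q8_0": {7: 108.21, 8: 117.25}, "Q4_0": {7: 107.81, 8: 117.96}}
GENERATION = {"Q8_0": {7: 7.92, 8: 7.91}, "Q4_0": {7: 14.19, 8: 14.15}}

CORE_RATIO = 8 / 7  # ideal speedup if throughput scaled perfectly with GPU cores


def speedup(results: dict) -> float:
    """Ratio of 8-core to 7-core throughput."""
    return results[8] / results[7]


for fmt in PROCESSING:
    print(f"{fmt}: processing x{speedup(PROCESSING[fmt]):.3f}, "
          f"generation x{speedup(GENERATION[fmt]):.3f}, ideal x{CORE_RATIO:.3f}")
```

Processing rises by about 8% (Q8_0) and 9% (Q4_0), short of the ideal 14%, while generation stays flat within measurement noise.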

Note: While the M1 chip can run smaller LLMs, remember that its integrated GPU is not a high-end GPU built for LLM workloads. Its strengths are power efficiency and tight integration, which make it a good option for everyday tasks and for experimenting with smaller LLMs.

What is Quantization and How Does it Affect LLM Performance?

Quantization is a technique used to reduce the size of LLMs by representing their weights (and, in some implementations, their activations) with lower-precision numbers. Think of it as a "data diet" for your LLM, where you replace large, detailed meals with smaller, lighter snacks. This usually costs only a little accuracy: the LLM keeps most of its capabilities while becoming more efficient and taking up less space.
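To make the idea concrete, here is a simplified sketch of symmetric 8-bit block quantization in the spirit of llama.cpp's Q8_0 format: each block of 32 weights is stored as one scale plus 32 small integers. It is not the real implementation; the actual format stores the scale as a 16-bit float in a packed binary layout, whereas this sketch keeps Python floats and lists, and the weights are random made-up values.

```python
import random

BLOCK_SIZE = 32  # Q8_0 groups weights into blocks of 32


def quantize_block_q8(block):
    """Symmetric 8-bit quantization of one block: a single scale plus small integers."""
    amax = max(abs(x) for x in block)
    scale = amax / 127 if amax > 0 else 1.0  # map the largest magnitude to +/-127
    q = [round(x / scale) for x in block]    # integers in [-127, 127]
    return scale, q


def dequantize_block_q8(scale, q):
    """Recover approximate float weights from the scale and the integers."""
    return [scale * v for v in q]


random.seed(0)
weights = [random.gauss(0.0, 0.02) for _ in range(BLOCK_SIZE)]  # made-up weights
scale, q = quantize_block_q8(weights)
restored = dequantize_block_q8(scale, q)
max_err = max(abs(w - r) for w, r in zip(weights, restored))
print(f"scale = {scale:.6f}, max abs error = {max_err:.2e} (at most scale/2 = {scale / 2:.2e})")
```

Because each value is rounded to the nearest multiple of the scale, the reconstruction error per weight is at most half the scale; this is the small accuracy cost that quantization trades for size.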

Types of Quantization

There are various types of quantization, each with its own trade-offs:

- F16 (16-bit floating point): Halves the model size compared with full-precision F32 while keeping accuracy very close to the original, a middle ground between F32 and the more aggressive Q8_0 and Q4_0 formats.

- Q8_0 (8-bit integer): This is a more aggressive form of quantization, leading to significant model size reduction but potentially sacrificing some accuracy.

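- Q4_0 (4-bit integer): This is the most aggressive of the three formats listed here, producing the smallest model but with a greater potential loss in accuracy. The Q4_K_M format used for Llama 3 8B above is a related 4-bit "k-quant" variant that keeps some tensors at higher precision to reduce that loss.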
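The size differences follow from how the formats lay out each block of 32 weights. The sketch below assumes the llama.cpp (ggml) block layouts as I understand them: Q8_0 stores one 16-bit scale plus 32 signed 8-bit values, and Q4_0 stores one 16-bit scale plus 32 4-bit values packed two per byte. The parameter count is an approximation, and real model files differ somewhat because some tensors may be stored at a different precision.

```python
# Bytes needed to store one block of 32 weights in each format (assumed layouts).
BLOCK = 32
BYTES_PER_BLOCK = {
    "F16": 2 * BLOCK,        # 32 values x 2 bytes, no scale needed
    "Q8_0": 2 + BLOCK,       # 16-bit scale + 32 x 1-byte integers
    "Q4_0": 2 + BLOCK // 2,  # 16-bit scale + 32 x 4-bit integers (16 bytes)
}

LLAMA2_7B_PARAMS = 6.74e9  # assumption: approximate parameter count of Llama 2 7B


def bits_per_weight(fmt: str) -> float:
    """Effective storage cost per weight, including the per-block scale."""
    return BYTES_PER_BLOCK[fmt] * 8 / BLOCK


for fmt in BYTES_PER_BLOCK:
    bpw = bits_per_weight(fmt)
    size_gb = LLAMA2_7B_PARAMS * bpw / 8 / 1e9
    print(f"{fmt}: {bpw} bits/weight, ~{size_gb:.1f} GB for Llama 2 7B")
```

The per-block scale is why Q8_0 and Q4_0 cost 8.5 and 4.5 bits per weight rather than exactly 8 and 4, putting Llama 2 7B at roughly 13.5 GB (F16), 7.2 GB (Q8_0), and 3.8 GB (Q4_0).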

How Quantization Influences Performance

Quantization helps improve the performance of LLMs in several ways:

- Smaller Model Size: Less data needs to be loaded and read, which shortens loading times. Because generation reads nearly every weight for each new token, a smaller model also directly speeds up generation.

- Lower Memory Requirements: Lower precision numbers take up less memory, allowing you to run larger models on devices with limited resources, like the M1 chip.

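- Faster Computations: Lower-precision arithmetic can accelerate the calculations the LLM performs, depending on hardware support. On the M1, however, the Llama 2 7B results above show nearly identical prompt processing speeds for Q8_0 and Q4_0, so the main gain comes from reading less data.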

Example: Imagine you have a massive recipe book for cooking, but you want to make a quick snack. You can use a simplified recipe guide with fewer detailed instructions, which will be faster to follow and easier to understand. In the same way, quantization simplifies the data used by LLMs, making them faster and more efficient without drastically affecting the quality of their output.

FAQ: Clearing up Common Questions about LLMs and Devices

What are LLMs and why are they so popular?

LLMs are powerful computer programs that can understand and generate human-like text. They learn from massive amounts of data, allowing them to perform impressive tasks like writing stories, translating languages, and providing helpful information. Their popularity stems from their ability to perform tasks that were previously unimaginable for computers.

What is the difference between LLM processing and generation?

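Processing (also called prompt processing or prefill) is the stage where the LLM reads your input. All prompt tokens are known up front, so they can be evaluated together in large batches that keep the GPU busy. Think of it as the LLM "reading" and understanding the input.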

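Generation is the stage where the LLM writes its response, one token at a time. Each new token depends on the ones before it, so tokens cannot be batched in the same way, and each step requires reading essentially all of the model's weights from memory. This is why generation speeds are much lower than processing speeds.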
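The two speeds combine into the time you actually wait for an answer. The sketch below is a rough model under stated assumptions: it adds prompt processing time to generation time, uses the Llama 2 7B Q4_0 speeds for the 7-core M1 from the table earlier in this article, and picks a hypothetical 512-token prompt and 256-token answer. It ignores model loading and the gradual slowdown of generation as the context grows.

```python
def estimated_response_time_s(prompt_tokens: int, output_tokens: int,
                              processing_tok_s: float, generation_tok_s: float) -> float:
    """Rough end-to-end time: batched prompt processing plus token-by-token generation."""
    processing_time = prompt_tokens / processing_tok_s
    generation_time = output_tokens / generation_tok_s
    return processing_time + generation_time


# Llama 2 7B Q4_0 on the 7-GPU-core M1 (speeds from the Llama 2 7B table);
# the 512-token prompt and 256-token answer are hypothetical sizes.
total = estimated_response_time_s(512, 256, processing_tok_s=107.81, generation_tok_s=14.19)
print(f"~{total:.1f} s in total")
```

Even though the prompt is twice as long as the answer, about 4.7 of the roughly 22.8 seconds go to processing and about 18 seconds to generation, so generation speed dominates the experience.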

What are GPU cores and why are they important for LLMs?

GPU cores act as the muscle behind a GPU, performing the mathematical operations that LLMs require in massive amounts. More cores speed up compute-heavy work such as prompt processing. Generation speed, however, depends mainly on memory bandwidth, which is why the 7-core and 8-core M1 generate text at the same rate.

How does the M1 chip compare to other devices for running LLMs?

While the M1's integrated GPU is capable for its class, it is not a dedicated high-end GPU. Its memory (at most 16 GB) rules out very large models like Llama 3 70B, and it will generally be slower than the dedicated GPUs found in gaming PCs or high-performance workstations, which offer far more compute and memory bandwidth.

Keywords

Apple M1, LLMs, performance analysis, Llama 2, Llama 3, GPU cores, quantization, F16, Q8_0, Q4_0, Q4_K_M, token speed, processing, generation, MacBook, local LLMs, model compression.