Running LLMs on a MacBook: Apple M1 Max Performance Analysis

Introduction

Running large language models (LLMs) locally has become increasingly popular, especially with the rise of smaller, more efficient models and the availability of powerful hardware like Apple's M1 Max chip. This article delves into the performance of the M1 Max chip when running several popular LLMs, focusing on Llama 2 and Llama 3 models. We'll explore the impact of different quantization techniques, model sizes, and processing vs. generation speeds. For the uninitiated, quantization is like compressing a model to make it lighter and faster. Think of it like compressing a photo: you sacrifice some detail to make it smaller and easier to share.

This analysis is particularly helpful for developers and enthusiasts who are exploring the possibilities of running LLMs directly on their Macs. We'll provide insights into the performance of the M1 Max chip, highlighting its strengths and limitations.

Apple M1 Max Token Speed Generation

The Apple M1 Max chip, known for its impressive performance, is a popular choice for running LLMs locally. To understand its capabilities, we'll analyze token throughput (tokens per second) for Llama 2 and Llama 3 models in several formats: F16 (unquantized 16-bit floats), Q8_0, and Q4_0 for Llama 2, and F16 and Q4_K_M for Llama 3.

Llama 2 7B on M1 Max

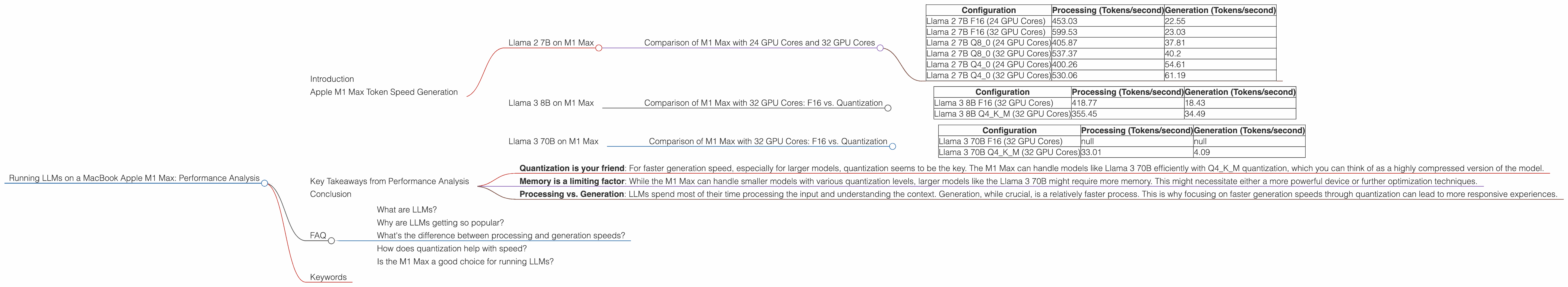

Comparison of M1 Max with 24 GPU Cores and 32 GPU Cores

| Configuration | Processing (Tokens/second) | Generation (Tokens/second) |
|---|---|---|
| Llama 2 7B F16 (24 GPU Cores) | 453.03 | 22.55 |
| Llama 2 7B F16 (32 GPU Cores) | 599.53 | 23.03 |
| Llama 2 7B Q8_0 (24 GPU Cores) | 405.87 | 37.81 |
| Llama 2 7B Q8_0 (32 GPU Cores) | 537.37 | 40.2 |
| Llama 2 7B Q4_0 (24 GPU Cores) | 400.26 | 54.61 |
| Llama 2 7B Q4_0 (32 GPU Cores) | 530.06 | 61.19 |

Observations:

- As expected, the M1 Max with 32 GPU Cores outperforms the 24 GPU Cores variant at every quantization level. The gain is large for processing (about 32% faster) but small for generation (roughly 2% to 12% faster).

- The processing speed for all models is significantly higher than the generation speed. This is common for LLMs: the tokens of the input prompt can be processed in parallel as a batch, while output tokens are generated one at a time, and each new token requires another pass over the model's weights.

- The generation speed improves with more aggressive quantization (Q4_0), even though the processing speed drops slightly. Generation is largely limited by how quickly the weights can be read from memory, so a smaller model produces tokens faster. The price is some loss of accuracy, which these benchmarks do not measure.
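The ratios behind these observations can be checked directly from the table. The snippet below is a small sketch that only re-uses the published figures; the helper names `core_scaling` and `quant_speedup` are made up for this illustration.

```python
# Figures copied from the Llama 2 7B table above:
# (quantization, GPU cores) -> (processing, generation) in tokens/second.
RESULTS = {
    ("F16", 24): (453.03, 22.55),
    ("F16", 32): (599.53, 23.03),
    ("Q8_0", 24): (405.87, 37.81),
    ("Q8_0", 32): (537.37, 40.2),
    ("Q4_0", 24): (400.26, 54.61),
    ("Q4_0", 32): (530.06, 61.19),
}


def core_scaling(quant):
    """Speedup (processing, generation) when going from 24 to 32 GPU cores."""
    p24, g24 = RESULTS[(quant, 24)]
    p32, g32 = RESULTS[(quant, 32)]
    return p32 / p24, g32 / g24


def quant_speedup(quant, cores):
    """Generation speedup of a quantized model relative to F16."""
    return RESULTS[(quant, cores)][1] / RESULTS[("F16", cores)][1]


for quant in ("F16", "Q8_0", "Q4_0"):
    proc, gen = core_scaling(quant)
    print(f"{quant}: 32 vs 24 cores -> processing x{proc:.2f}, generation x{gen:.2f}")

for quant in ("Q8_0", "Q4_0"):
    print(f"{quant} vs F16 on 32 cores: generation x{quant_speedup(quant, 32):.2f}")
```

Processing scales almost in step with the core count (32/24 is about 1.33), while generation barely moves with extra cores and responds mainly to quantization.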

Llama 3 8B on M1 Max

Comparison of M1 Max with 32 GPU Cores: F16 vs. Quantization

| Configuration | Processing (Tokens/second) | Generation (Tokens/second) |
|---|---|---|
| Llama 3 8B F16 (32 GPU Cores) | 418.77 | 18.43 |
| Llama 3 8B Q4_K_M (32 GPU Cores) | 355.45 | 34.49 |

Observations:

- The Llama 3 8B model shows a significant improvement in generation speed with quantization (Q4_K_M) compared to F16. This aligns with the Llama 2 7B observations: quantization seems to be the key to achieving faster generation, even if it comes at the cost of some accuracy.

- The processing speed drops by about 15% with Q4_K_M (355.45 vs. 418.77 tokens/second). The generation speed, however, is almost double (34.49 vs. 18.43 tokens/second), showcasing the trade-off between accuracy and speed.
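One way to see why smaller weights speed up generation is a rough memory-bandwidth ceiling: if every weight has to be read once per generated token, tokens per second cannot exceed bandwidth divided by model size. The sketch below rests on several assumptions that are not from the benchmark data: Apple's published 400 GB/s peak bandwidth for the M1 Max, a nominal 8 billion parameters, and an approximate 4.85 bits per weight for Q4_K_M. The function names are illustrative.

```python
M1_MAX_BANDWIDTH_GB_S = 400.0  # assumption: Apple's published peak figure


def weight_gigabytes(n_params, bits_per_weight):
    """Approximate size of the weights in GB (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


def generation_ceiling(n_params, bits_per_weight, bandwidth_gb_s=M1_MAX_BANDWIDTH_GB_S):
    """Upper bound on tokens/second if all weights are read once per token."""
    return bandwidth_gb_s / weight_gigabytes(n_params, bits_per_weight)


N_PARAMS = 8e9  # nominal parameter count for Llama 3 8B (assumption)

# (bits per weight, measured generation speed from the table above)
CASES = {
    "F16": (16.0, 18.43),
    "Q4_K_M": (4.85, 34.49),  # 4.85 bits/weight is an approximation
}

for name, (bits, measured) in CASES.items():
    ceiling = generation_ceiling(N_PARAMS, bits)
    print(f"{name}: ceiling ~{ceiling:.1f} tok/s, measured {measured} "
          f"({measured / ceiling:.0%} of ceiling)")
```

Under these assumptions the measured speeds stay below the ceiling in both cases, and the ceiling itself rises as the weights shrink. That is consistent with generation being limited mainly by memory traffic rather than raw compute.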

Llama 3 70B on M1 Max

Comparison of M1 Max with 32 GPU Cores: F16 vs. Quantization

| Configuration | Processing (Tokens/second) | Generation (Tokens/second) |
|---|---|---|
| Llama 3 70B F16 (32 GPU Cores) | N/A | N/A |
| Llama 3 70B Q4_K_M (32 GPU Cores) | 33.01 | 4.09 |

Observations:

- We couldn't find benchmark data for Llama 3 70B using F16 on the M1 Max. The most likely reason is memory: at 2 bytes per parameter, 70 billion parameters need roughly 140 GB for the weights alone, far more than the 64 GB of unified memory available on the largest M1 Max configuration. Running this model unquantized requires a machine with much more memory; on the M1 Max, quantization is the practical route.

- Even with quantization, the Llama 3 70B model generates only 4.09 tokens/second, roughly eight times slower than Llama 3 8B with the same Q4_K_M quantization. This is expected given the model's size.
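The memory argument can be turned into a quick back-of-the-envelope check. This is a sketch under stated assumptions: the bits-per-weight values follow the block layouts of ggml-style formats (Q4_K_M is approximate), parameter counts are nominal, and the estimate covers weights only, ignoring the KV cache, runtime overhead, and whatever memory the operating system needs. The names `weights_gb` and `fits` are hypothetical helpers.

```python
# Approximate storage cost per weight, in bits.
BITS_PER_WEIGHT = {
    "F16": 16.0,
    "Q8_0": 8.5,     # 32 int8 weights + one 16-bit scale per block
    "Q4_0": 4.5,     # 32 4-bit weights + one 16-bit scale per block
    "Q4_K_M": 4.85,  # mixed format; approximate average (assumption)
}

UNIFIED_MEMORY_GB = 64  # largest M1 Max configuration


def weights_gb(n_params, quant):
    """Approximate size of the weights alone, in GB (1 GB = 1e9 bytes)."""
    return n_params * BITS_PER_WEIGHT[quant] / 8 / 1e9


def fits(n_params, quant, memory_gb=UNIFIED_MEMORY_GB):
    """True if the weights alone are smaller than the available memory."""
    return weights_gb(n_params, quant) < memory_gb


MODELS = {"Llama 2 7B": 7e9, "Llama 3 8B": 8e9, "Llama 3 70B": 70e9}

for model, n_params in MODELS.items():
    for quant in BITS_PER_WEIGHT:
        size = weights_gb(n_params, quant)
        verdict = "fits" if fits(n_params, quant) else "does not fit"
        print(f"{model} {quant}: ~{size:.1f} GB, {verdict} in {UNIFIED_MEMORY_GB} GB")
```

Because the check ignores everything except the weights, a "fits" result is a necessary condition rather than a guarantee, and a machine with less than 64 GB will rule out more configurations.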

Key Takeaways from Performance Analysis

- Quantization is your friend: For faster generation speed, especially for larger models, quantization seems to be the key. With Q4_K_M quantization, which you can think of as a highly compressed version of the model, the M1 Max can run Llama 3 70B at all, though only at about 4 tokens/second.

- Memory is a limiting factor: The M1 Max handles smaller models at every quantization level tested, but no F16 results were available for Llama 3 70B, most likely because the unquantized weights do not fit in memory. Larger models call for either a device with more memory or more aggressive quantization.

- Processing vs. Generation: The M1 Max processes input tokens far faster than it generates output tokens (hundreds of tokens/second versus tens). For anything but very long prompts, generation therefore dominates the waiting time, which is why improving generation speed through quantization leads to more responsive experiences.

Conclusion

The Apple M1 Max chip demonstrates impressive capabilities when running LLMs locally. While it might not be suitable for the largest models without further optimization, it can handle smaller models like Llama 2 7B and Llama 3 8B effectively. The M1 Max's performance is highly influenced by the chosen quantization method, highlighting the importance of optimizing models for speed.

With the continuous advancements in LLM technology and hardware, the future holds exciting possibilities for running LLMs on local devices like MacBooks. As we continue to push the boundaries of what's possible, we can expect to see even more powerful and efficient local AI experiences.

FAQ

What are LLMs?

LLMs, or large language models, are a type of artificial intelligence that excels at understanding and generating human-like text. Think of them as sophisticated text processors on steroids, capable of writing stories, answering questions, translating languages, and much more.

Why are LLMs getting so popular?

LLMs are getting popular because they open up a world of possibilities for interacting with technology in natural language. They are versatile tools for developers and everyday users, leading to a broader range of creative applications.

What's the difference between processing and generation speeds?

Processing speed refers to how quickly the LLM can read and interpret the input text (the prompt), while generation speed refers to how quickly it can produce the output text, one token at a time. It's like reading a book: you need to process the words and understand the context before you can summarize the story.
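The two speeds combine into a simple estimate of how long a response takes: prompt length divided by processing speed, plus output length divided by generation speed. The sketch below uses the Llama 2 7B Q4_0 figures for 32 GPU cores from the table above; the prompt and output lengths are hypothetical, and the function name is made up for this example.

```python
def response_seconds(prompt_tokens, output_tokens, processing_tps, generation_tps):
    """Estimated time to read the prompt and then generate the output."""
    prompt_time = prompt_tokens / processing_tps
    output_time = output_tokens / generation_tps
    return prompt_time + output_time


# Llama 2 7B Q4_0 on 32 GPU cores (tokens/second, from the benchmark table).
PROCESSING_TPS = 530.06
GENERATION_TPS = 61.19

# Hypothetical request: a 512-token prompt and a 128-token answer.
total = response_seconds(512, 128, PROCESSING_TPS, GENERATION_TPS)
prompt_part = 512 / PROCESSING_TPS

print(f"total: {total:.2f} s, of which prompt processing: {prompt_part:.2f} s")
```

In this example the prompt is four times longer than the answer, yet reading it takes less than a third of the total time. The estimate ignores model loading and sampling overhead, so treat it as a lower bound.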

How does quantization help with speed?

Quantization is like compressing the LLM: its weights are stored with fewer bits, which reduces its size and makes text generation faster. Think of it like compressing a video file: you sacrifice some quality to make it smaller and faster to download.
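To make the compression idea concrete, here is a toy sketch of block-wise 4-bit quantization: a block of floating-point weights is replaced by small integers plus one shared scale. It illustrates the general idea only and is not the exact layout of Q4_0 or any other real format; the weights and function names are invented for the example.

```python
def quantize_block(values, bits=4):
    """Replace floats with signed integer codes plus one shared scale."""
    qmax = 2 ** (bits - 1) - 1            # 7 for 4-bit codes
    peak = max(abs(v) for v in values)
    scale = peak / qmax if peak else 1.0  # avoid dividing by zero
    codes = [round(v / scale) for v in values]
    return scale, codes


def dequantize_block(scale, codes):
    """Recover approximate floats from the codes and the scale."""
    return [scale * c for c in codes]


# Hypothetical weights for one small block.
weights = [0.12, -0.50, 0.33, 0.07, -0.21, 0.70, -0.04, 0.00]

scale, codes = quantize_block(weights)
restored = dequantize_block(scale, codes)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("codes:   ", codes)
print("restored:", [round(r, 2) for r in restored])
print("largest rounding error:", round(max_error, 3))
```

Each weight now needs 4 bits instead of 16, at the cost of a rounding error of at most half the scale. That lost detail is the "quality" given up in the video analogy.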

Is the M1 Max a good choice for running LLMs?

The M1 Max is a great choice for running smaller LLMs, especially with quantization. For larger models, however, you might need a more powerful device or further optimization techniques.

Keywords

LLMs, Apple M1 Max, Llama 2, Llama 3, Quantization, Token Speed Generation, Processing Speed, Generation Speed, F16, Q8_0, Q4_0, GPU Cores, Memory Limits, Model Optimization, Local AI, MacBook