Running Large LLMs on NVIDIA RTX A6000 48GB: Avoiding Out of Memory Errors

Introduction

The world of Large Language Models (LLMs) is buzzing with excitement, and for good reason! These powerful AI models can generate human-quality text, translate languages, write different kinds of creative content, and answer your questions in an informative way. But if you're eager to run these models on your own machine, you might encounter a pesky problem: out-of-memory errors. LLMs are like hungry, data-loving monsters: they need a lot of memory to function, and when they run on a GPU, that means the GPU's own video memory (VRAM).

This article focuses on the NVIDIA RTX A6000 48GB, a powerful graphics card designed for demanding tasks like artificial intelligence and machine learning. We'll dive into the common concerns of running various LLMs on this GPU, focusing on how to avoid those dreaded out-of-memory errors. Buckle up, because it's time to tame the language model behemoths!

Understanding Out-of-Memory Errors

Imagine your GPU's memory (VRAM) as a giant warehouse stocked with building blocks. When you run an LLM, it's like building a massive castle with these blocks. But if the warehouse doesn't have enough blocks, you'll run out of resources and the castle will collapse. That's essentially what happens with an out-of-memory error: the GPU's memory can't hold everything the LLM needs at once.

There are a few factors that contribute to these errors:

- Model size: Larger models are like more complex castles. They require more building blocks (i.e., memory), because every parameter has to be stored.

- Batch size and context length: If you're processing a large amount of text at once (like a whole book), you'll need more building blocks to hold it all, because the model keeps working state for every token in flight.

- Precision: The number format of the weights decides how many bytes each parameter takes. Quantization is a cool technique that shrinks the model by reducing the precision of its numbers. It's like using smaller building blocks, but you might lose some detail.
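To put rough numbers on the first and third factors, here is a back-of-the-envelope sketch. It only counts the weights (parameters times bits per weight), and the 4.5 bits-per-weight figure for 4-bit formats is an assumption for illustration, since real quantized files keep scales and some tensors at higher precision.

```python
# Rough weight-memory estimate: parameters x bits per weight / 8.
# The bits-per-weight values are illustrative assumptions; real model files
# also carry metadata and may keep some tensors at higher precision.
BITS_PER_WEIGHT = {
    "F32": 32.0,
    "F16": 16.0,
    "4-bit (approx.)": 4.5,  # assumed average, including per-block scales
}

GPU_MEMORY_GB = 48.0  # RTX A6000


def weight_memory_gb(params_billions: float, bits_per_weight: float) -> float:
    """Return the approximate size of the weights in GB (1 GB = 1e9 bytes)."""
    return params_billions * 1e9 * bits_per_weight / 8 / 1e9


if __name__ == "__main__":
    for params in (8, 70):
        for name, bits in BITS_PER_WEIGHT.items():
            size = weight_memory_gb(params, bits)
            verdict = "fits" if size < GPU_MEMORY_GB else "does not fit"
            print(f"{params}B at {name}: ~{size:.1f} GB of weights, {verdict} in 48 GB")
```

Treat the result as a lower bound: the context cache and working buffers come on top of the weights.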

NVIDIA RTX A6000 48GB: A Powerful Ally

The NVIDIA RTX A6000 48GB is a beast of a GPU. It's equipped with 48GB of GDDR6 memory, which is like a super-sized warehouse for your building blocks. This makes it a great choice for running LLMs, especially the larger ones. With 4-bit quantization it can even handle a 70 billion parameter model, which is like constructing a castle that would make King's Landing jealous!

Llama3 on RTX A6000 48GB: Performance and Considerations

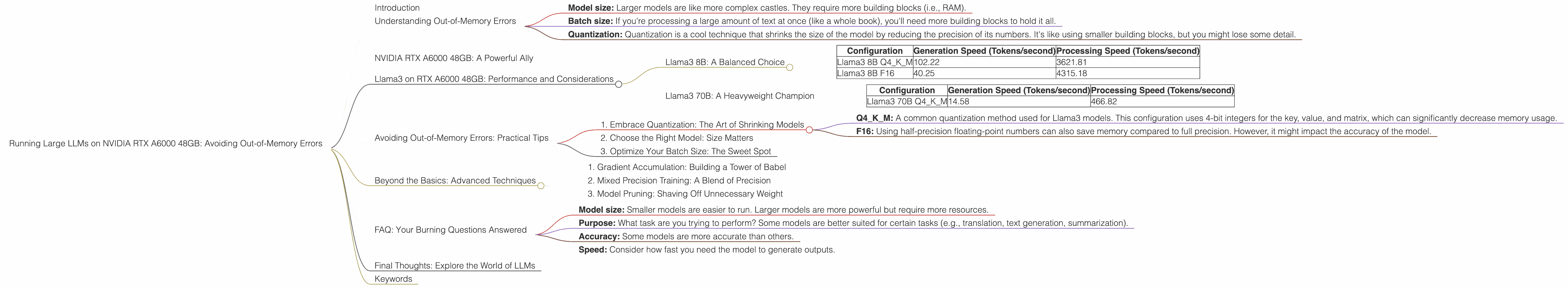

Let's analyze the performance of different Llama3 models on the RTX A6000 48GB. We'll use the following metrics:

- Generation Speed (Tokens/second): This measures how fast the LLM can generate text.

- Processing Speed (Tokens/second): This measures how fast the LLM can read the input text (the prompt) before it starts generating.

Important note: The data presented below is based on the following sources:

- Performance of llama.cpp on various devices (https://github.com/ggerganov/llama.cpp/discussions/4167) by ggerganov

- GPU Benchmarks on LLM Inference (https://github.com/XiongjieDai/GPU-Benchmarks-on-LLM-Inference) by XiongjieDai

Please note: Not all model configurations have been tested on this GPU, so data for specific models and configurations may be missing.

Llama3 8B: A Balanced Choice

| Configuration | Generation Speed (Tokens/second) | Processing Speed (Tokens/second) |
|---|---|---|
| Llama3 8B Q4_K_M | 102.22 | 3621.81 |
| Llama3 8B F16 | 40.25 | 4315.18 |

The Llama3 8B, a popular 8-billion parameter model, performs well on the RTX A6000 48GB.

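- The Q4_K_M configuration (llama.cpp's 4-bit "K-quant", medium variant) generates text about 2.5 times faster than F16 here (102.22 vs. 40.25 tokens/second) and needs much less memory, at the cost of a small loss in accuracy.

- The F16 configuration (unquantized half-precision floating-point weights) is faster at processing the prompt (4315.18 vs. 3621.81 tokens/second) and keeps the model's full accuracy, but it generates more slowly and uses more memory.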

Llama3 70B: A Heavyweight Champion

| Configuration | Generation Speed (Tokens/second) | Processing Speed (Tokens/second) |
|---|---|---|
| Llama3 70B Q4_K_M | 14.58 | 466.82 |

The Llama3 70B model, an impressive 70-billion parameter giant, also runs well on the NVIDIA RTX A6000 48GB.

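- The Q4_K_M configuration is the only one we have data for. F16 weights for a 70B model would take about 140 GB (70 billion parameters times 2 bytes), which does not fit on a single 48GB card. At 14.58 tokens/second, generation is about seven times slower than the 8B model at the same quantization, but still workable for interactive use.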
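To get a feel for what these throughput numbers mean in practice, the sketch below turns them into a rough response time: time to read the prompt plus time to generate the reply. The speeds are copied from the tables in this article; the prompt and reply lengths are made-up values for illustration, and real latency also depends on context length and software overhead.

```python
# Throughput figures (tokens/second) copied from the tables in this article.
BENCHMARKS = {
    "Llama3 8B Q4_K_M": {"generation": 102.22, "processing": 3621.81},
    "Llama3 8B F16": {"generation": 40.25, "processing": 4315.18},
    "Llama3 70B Q4_K_M": {"generation": 14.58, "processing": 466.82},
}


def estimate_seconds(config: str, prompt_tokens: int, output_tokens: int) -> float:
    """Rough response time: prompt processing time plus generation time."""
    speeds = BENCHMARKS[config]
    return prompt_tokens / speeds["processing"] + output_tokens / speeds["generation"]


if __name__ == "__main__":
    # Hypothetical workload: a 1000-token prompt and a 250-token reply.
    for name in BENCHMARKS:
        print(f"{name}: ~{estimate_seconds(name, 1000, 250):.1f} s")
```

For typical chat workloads the generation term dominates, which is why generation speed is usually the number to watch.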

Avoiding Out-of-Memory Errors: Practical Tips

Now that you have a better understanding of how LLMs perform on the RTX A6000 48GB, let's discuss how to dodge those out-of-memory errors.

1. Embrace Quantization: The Art of Shrinking Models

Quantization, as we mentioned earlier, is a technique that reduces the size of the model by using less precise numbers. It's like using smaller building blocks, which can save you a lot of memory.

- Q4_K_M: A common llama.cpp quantization format used for Llama3 models. It stores most weights in roughly 4 to 5 bits each instead of 16, which cuts memory usage to around a third of F16.

- F16: Half-precision floating-point weights take 2 bytes per parameter, half as much as full precision (F32). For inference, F16 is usually treated as the unquantized baseline, so it saves memory compared to F32 with little or no loss in accuracy.
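To make the "smaller building blocks" idea concrete, here is a minimal sketch of block-wise 4-bit quantization. It is a deliberately simplified scheme (one scale per block of 32 weights, symmetric rounding) and is not the actual layout llama.cpp uses; the function names and block size are assumptions chosen for illustration.

```python
import random


def quantize_block(values, bits=4):
    """Symmetric quantization of one block: small integers plus one float scale."""
    qmax = 2 ** (bits - 1) - 1  # 7 for signed 4-bit values
    peak = max(abs(v) for v in values)
    scale = peak / qmax if peak > 0 else 1.0
    quants = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return quants, scale


def dequantize_block(quants, scale):
    """Recover approximate weights from the integers and the scale."""
    return [q * scale for q in quants]


def bits_per_weight(block_size, bits=4, scale_bits=16):
    """Average storage cost per weight, counting the shared scale."""
    return (block_size * bits + scale_bits) / block_size


if __name__ == "__main__":
    random.seed(0)
    block = [random.uniform(-1.0, 1.0) for _ in range(32)]
    quants, scale = quantize_block(block)
    restored = dequantize_block(quants, scale)
    worst = max(abs(a - b) for a, b in zip(block, restored))
    print(f"bits per weight: {bits_per_weight(32)}")
    print(f"largest rounding error in this block: {worst:.4f}")
```

The rounding error is the "lost detail": each weight can be off by up to half a quantization step, which is the price paid for the smaller footprint.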

2. Choose the Right Model: Size Matters

It's true, size does matter. Pick the model that fits your needs best. If you're working with a smaller task, a smaller model might be sufficient. If you need the power of a behemoth, then a larger model is the way to go. However, keep an eye on memory limitations.

3. Optimize Your Batch Size: The Sweet Spot

The batch size refers to how many tokens or sequences you process at once. Think of it as the number of castles you're building in parallel. Increasing the batch size can lead to higher throughput but also increases memory consumption, because the GPU has to hold working state for everything in flight. Context length has the same effect: the longer the prompt and the reply, the more memory the attention cache needs. Finding the sweet spot is key. Experiment with different batch sizes to see what works best for your model and your system's memory capacity.
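One memory cost that grows directly with batch size and context length is the attention key/value cache. The sketch below estimates it with the usual formula (two tensors per layer, one entry per KV head per token). The default layer count, KV head count, and head size are assumptions meant to match Llama3 8B's published configuration; check your model's config before relying on them.

```python
def kv_cache_bytes(
    batch_size: int,
    context_tokens: int,
    n_layers: int = 32,      # assumed: Llama3 8B
    n_kv_heads: int = 8,     # assumed: grouped-query attention with 8 KV heads
    head_dim: int = 128,     # assumed
    bytes_per_value: int = 2,  # F16 cache entries
) -> int:
    """Approximate key/value cache size in bytes."""
    # The factor 2 covers one key tensor and one value tensor per layer.
    per_token = 2 * n_layers * n_kv_heads * head_dim * bytes_per_value
    return per_token * context_tokens * batch_size


if __name__ == "__main__":
    for batch in (1, 4, 16):
        gib = kv_cache_bytes(batch, 8192) / 2**30
        print(f"batch {batch:>2} at 8192 tokens of context: {gib:.0f} GiB of KV cache")
```

Because the cache scales linearly with both arguments, halving either the batch size or the context length is often the quickest way out of an out-of-memory error.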

Beyond the Basics: Advanced Techniques

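For the hardcore geeks out there, there are a few more tricks up our sleeves. Note that the first two apply when you train or fine-tune a model, not when you only run it for inference: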

1. Gradient Accumulation: Building a Tower of Babel

Gradient accumulation is a technique for training with a large effective batch size without running into memory issues. Instead of pushing the whole batch through the GPU at once, you split it into smaller micro-batches, compute the gradients for each one, and add them up before updating the weights. Imagine building a tall tower with only a few building blocks on hand: you bring in a handful at a time and keep stacking. The update you end up with matches the one from the big batch, but peak memory is set by the micro-batch. This way, you can build a bigger tower without exhausting all your resources in one go.
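Here is a toy sketch of the idea on a one-parameter model (y = w * x with a mean-squared-error loss), written in plain Python so it runs anywhere. The function names and data are invented for illustration; in a real framework the same pattern means calling the backward pass on each micro-batch and stepping the optimizer only once per full batch.

```python
def grad_mse(w, batch):
    """Gradient of the mean squared error for the model y = w * x."""
    return sum(2 * (w * x - y) * x for x, y in batch) / len(batch)


def accumulated_grad(w, data, micro_batch_size):
    """Same gradient, computed one micro-batch at a time."""
    total = 0.0
    for start in range(0, len(data), micro_batch_size):
        chunk = data[start:start + micro_batch_size]
        # Weight each chunk by its share of the full batch before adding it.
        total += grad_mse(w, chunk) * len(chunk) / len(data)
    return total


if __name__ == "__main__":
    data = [(float(x), 3.0 * x + 0.5) for x in range(1, 9)]
    full = grad_mse(0.5, data)
    piecewise = accumulated_grad(0.5, data, micro_batch_size=2)
    print(f"full batch: {full:.6f}  accumulated: {piecewise:.6f}")
```

Only one micro-batch needs to be in memory at a time, yet the accumulated gradient matches the full-batch gradient up to floating-point rounding.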

2. Mixed Precision Training: A Blend of Precision

Mixed precision training is another fancy technique that can reduce memory usage and boost speed. Instead of using full-precision floating-point numbers for all calculations, you use lower precision (e.g. F16) for most of the arithmetic while keeping the sensitive parts, such as the weight updates, in full precision. This is like using a mix of building blocks with different sizes: you can use smaller blocks for parts that don't require as much detail, and larger blocks for parts that need more precision.
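The following sketch shows why some parts need the "larger blocks". It simulates half precision with Python's `struct` module and applies many small weight updates, once with the weight stored only in F16 and once with a higher-precision master copy that is cast down to F16 after each step. The function names and update size are made up for illustration.

```python
import struct


def to_fp16(x: float) -> float:
    """Round a Python float to the nearest IEEE half-precision value."""
    return struct.unpack("<e", struct.pack("<e", x))[0]


def train(steps: int, update: float, keep_master_copy: bool) -> float:
    """Apply `steps` identical updates and return the final F16 weight."""
    weight16 = to_fp16(1.0)
    master = 1.0  # higher-precision copy (Python floats are 64-bit)
    for _ in range(steps):
        if keep_master_copy:
            master += update             # accumulate in high precision
            weight16 = to_fp16(master)   # cast down for the cheap math
        else:
            weight16 = to_fp16(weight16 + update)  # update rounds away
    return weight16


if __name__ == "__main__":
    print("F16 only:        ", train(1000, 1e-4, keep_master_copy=False))
    print("with master copy:", train(1000, 1e-4, keep_master_copy=True))
```

Near 1.0, neighbouring F16 values are about 0.001 apart, so an update of 0.0001 vanishes when the weight lives only in F16; the master copy keeps those small steps from being lost.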

3. Model Pruning: Shaving Off Unnecessary Weight

Model pruning is a technique used to remove unnecessary connections in a neural network. It's like removing unused building blocks from your castle. This can reduce the size of the model and improve its speed, provided the software you run it with can take advantage of the removed weights, but it might also impact accuracy.
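One simple flavor of this is magnitude pruning: zero out the weights with the smallest absolute values, on the assumption that they contribute least. The sketch below is a minimal illustration on a plain list of numbers; the function name and the example weights are hypothetical.

```python
def magnitude_prune(weights, sparsity):
    """Zero out the given fraction of weights with the smallest magnitude."""
    n_prune = int(len(weights) * sparsity)
    if n_prune == 0:
        return list(weights)
    # Indices ordered by absolute value, smallest first.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    drop = set(order[:n_prune])
    return [0.0 if i in drop else w for i, w in enumerate(weights)]


if __name__ == "__main__":
    layer = [0.9, -0.05, 0.4, 0.01, -0.7, 0.2]
    print(magnitude_prune(layer, sparsity=0.5))
```

Zeros only save memory when the weights are stored in a sparse or compressed format, so pruning pays off mainly in runtimes that support that.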

FAQ: Your Burning Questions Answered

Let's tackle some of your most common questions about LLMs and the RTX A6000 48GB.

Q: Can I run a 13B model on the RTX A6000 48GB?

A: Yes, comfortably. At F16 a 13B model needs roughly 26 GB for its weights (13 billion parameters times 2 bytes), which leaves room on a 48GB card for the context cache. Quantization shrinks the footprint further and is worth using if you want very long contexts or large batches.

Q: What are the limitations of running LLMs on a GPU?

A: The main limitation is the GPU's memory capacity. Even with a powerful card like the RTX A6000 48GB, you might encounter limitations with very large models. You'll need to carefully choose models and configure them to fit within your memory constraints.

Q: What are some alternative GPUs that I can use for running LLMs?

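A: While the RTX A6000 48GB is a great choice, other GPUs like the NVIDIA RTX 4090 (24 GB) and the AMD Radeon RX 7900 XT (20 GB) are also capable of running LLMs. With less memory on board, they are limited to smaller models or more aggressive quantization.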

Q: How do I choose the right LLM for my needs?

A: Consider the following:

- Model size: Smaller models are easier to run. Larger models are more powerful but require more resources.

- Purpose: What task are you trying to perform? Some models are better suited for certain tasks (e.g., translation, text generation, summarization).

- Accuracy: Some models are more accurate than others.

- Speed: Consider how fast you need the model to generate outputs.

Final Thoughts: Explore the World of LLMs

We've covered a lot of ground, from understanding out-of-memory errors to mastering quantization and optimization techniques. The RTX A6000 48GB is a powerful tool for running LLMs, but it's essential to choose the right model and optimize it carefully. Remember, the world of LLMs is vast and exciting. Experiment, discover, and let your imagination run wild!

Keywords

LLMs, large language models, NVIDIA, RTX A6000 48GB, GPU, memory, out-of-memory errors, quantization, Llama3, model size, batch size, gradient accumulation, mixed precision training, model pruning, performance, speed, tokens/second, data, processing, generation, AI, machine learning, deep learning, inference, optimization, resources, limitations, alternatives, FAQ, tips, tricks.