Running Large LLMs on NVIDIA RTX 4000 Ada 20GB x4: Avoiding Out of Memory Errors

Introduction

Running large language models (LLMs) locally can be a thrilling experience, allowing you to unleash the power of AI directly on your machine. But with these behemoths, you face an elephant in the room: memory. LLMs are memory hogs, and on a GPU the limiting resource is video memory (VRAM): the weights, plus the working buffers used during inference, have to fit on the cards. This is especially true for models like Llama 3, known for their impressive capabilities.

This article aims to equip you with the knowledge and tools to run powerful LLMs like Llama 3 on a multi-GPU setup using NVIDIA RTX 4000 Ada 20GB, a popular choice among AI enthusiasts. We'll guide you through strategies to overcome the dreaded "out-of-memory" errors and unlock the full potential of your setup.

Imagine your computer as a bustling city. Each program and application represents a citizen, and memory is the city’s housing capacity. When you load an LLM, it's like a massive influx of tourists arriving at once – a lot of people needing a place to stay! If your city doesn't have enough housing (memory), those tourists will get kicked out, causing your LLM to crash. Our mission? To build a bigger, more robust city (memory) to accommodate these digital tourists, ensuring the smooth operation of your LLMs.

Understanding The Beast: Llama 3 and Its Memory Needs

Llama 3, a groundbreaking LLM from Meta, is a shining example of the growing complexity of AI models. It was released in 8B and 70B parameter versions. This article will mainly focus on the 8B version of Llama 3, commonly used for experimentation and development.

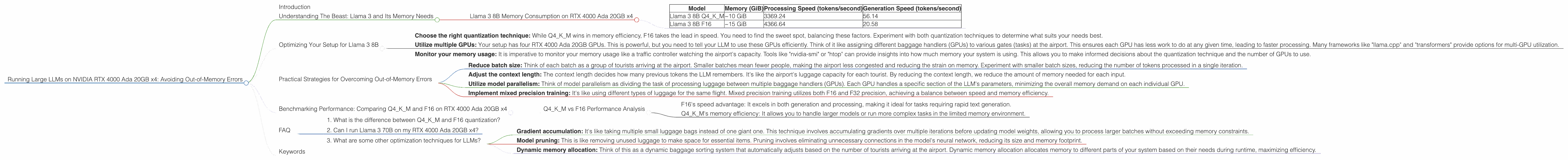

The sheer size of these LLMs translates to a significant memory footprint. Here's how Llama 3 8B handles memory on your NVIDIA RTX 4000 Ada 20GB x4 setup:

Quantization: Think of quantization as moving into a smaller, more compact apartment instead of a luxurious villa. It reduces the precision of the LLM's parameters, which shrinks the model without sacrificing too much accuracy. Llama 3 8B, with its 8 billion parameters, can be compressed using "Q4KM" quantization (llama.cpp's Q4_K_M format, a 4-bit scheme), which effectively shrinks the model's size.

F16 Precision: F16 stores every parameter as a 16-bit floating-point number. Think of it as keeping the villa: it preserves more detail than the quantized model, but it takes up more memory.
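To make the difference concrete, here is a minimal sketch that estimates the size of the weights alone from a parameter count and a number of bits per weight. The round figure of 8e9 parameters is an assumption for an "8B" model, and the function name is hypothetical. Treat the result as a floor: real 4-bit formats store scale factors and keep some tensors at higher precision, and a running model also needs room for its cache and scratch buffers.

```python
GIB = 1024 ** 3  # bytes per GiB


def weight_memory_gib(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights alone, in GiB."""
    return n_params * bits_per_weight / 8 / GIB


# Assumption: an "8B" model has roughly 8e9 parameters.
f16 = weight_memory_gib(8e9, 16)
four_bit_floor = weight_memory_gib(8e9, 4)

print(f"F16 weights:        {f16:.1f} GiB")
print(f"Pure 4-bit weights: {four_bit_floor:.1f} GiB (lower bound)")
```

The F16 estimate lands close to the 15 GiB figure quoted for this model, which is a useful sanity check before you download anything.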

Llama 3 8B Memory Consumption on RTX 4000 Ada 20GB x4

| Model | Memory (GiB) | Processing Speed (tokens/second) | Generation Speed (tokens/second) |
|---|---|---|---|
| Llama 3 8B Q4KM | ~10 | 3369.24 | 56.14 |
| Llama 3 8B F16 | ~15 | 4366.64 | 20.58 |

Data Source: Performance of llama.cpp on various devices (https://github.com/ggerganov/llama.cpp/discussions/4167) by ggerganov

Key insights:

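- Q4KM is the memory champion: It consumes significantly less memory, about 10 GiB, compared to F16's footprint of about 15 GiB.

- F16 is faster at prompt processing, Q4KM at generation: F16 processes input tokens faster (4366.64 vs. 3369.24 tokens/second), but Q4KM generates output tokens faster (56.14 vs. 20.58 tokens/second).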

Optimizing Your Setup for Llama 3 8B

So, how do we ensure a smooth ride for Llama 3 8B on your NVIDIA RTX 4000 Ada 20GB x4?

Here's the deal: your GPU's compute cores need fast, uninterrupted access to the model data held in the GPU's own memory (VRAM). Think of it like a busy airport with baggage handlers (GPU cores) moving luggage (data) to and from the baggage claim area (VRAM). If the data does not fit, the load fails with an out-of-memory error or, depending on the framework, part of the model is left in slower system RAM.

Here's how to optimize your workflow:

Choose the right precision: Q4KM wins on memory efficiency and generation speed in the table above, while F16 leads in prompt processing and keeps the full 16-bit weights. You need to find the sweet spot, balancing memory, speed, and output quality. Experiment with both formats to determine what suits your needs best.

Utilize multiple GPUs: Your setup has four RTX 4000 Ada 20GB GPUs. This is powerful, but you need to tell your LLM to use these GPUs efficiently. Think of it like assigning different baggage handlers (GPUs) to various gates (tasks) at the airport. This ensures each GPU holds only part of the model, leaving more memory headroom on every card. Many frameworks like "llama.cpp" and "transformers" provide options for multi-GPU utilization.
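As an illustration of what "telling the LLM to use the GPUs" can look like with llama.cpp, the sketch below builds a command line that offloads all layers and spreads them evenly over four cards. The flags `-ngl`, `--split-mode`, and `--tensor-split` are llama.cpp options; the binary name `llama-cli` depends on your build (older builds call it `main`), and the model path and helper function are hypothetical.

```python
from typing import List, Sequence


def llama_cli_args(
    model_path: str,
    tensor_split: Sequence[float],
    n_gpu_layers: int = 99,      # a large value offloads every layer
    split_mode: str = "layer",   # llama.cpp accepts none, layer, or row
) -> List[str]:
    """Build an argument list for a multi-GPU llama.cpp run."""
    return [
        "llama-cli",
        "-m", model_path,
        "-ngl", str(n_gpu_layers),
        "--split-mode", split_mode,
        "--tensor-split", ",".join(str(share) for share in tensor_split),
    ]


# Hypothetical model path; equal shares for four identical GPUs.
args = llama_cli_args("models/llama-3-8b.Q4_K_M.gguf", [1, 1, 1, 1])
print(" ".join(args))
```

The list can be passed to `subprocess.run(args)` once the binary is on your PATH. Unequal shares, such as `[2, 1, 1, 1]`, are one way to leave headroom on a card that also drives a display.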

Monitor your memory usage: It is imperative to monitor your memory usage like a traffic controller watching the airport's capacity. "nvidia-smi" reports the memory in use on each GPU, while "htop" shows system RAM and CPU load. This allows you to make informed decisions about the quantization technique and the number of GPUs to use.
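If you want the monitoring in a script rather than a terminal, a small wrapper around `nvidia-smi` is enough. The sketch below uses the tool's `--query-gpu` CSV output, which reports memory in MiB when `nounits` is set. The sample text stands in for real output and its numbers are made up for illustration.

```python
import subprocess
from typing import List, Tuple

QUERY = [
    "nvidia-smi",
    "--query-gpu=index,memory.used,memory.total",
    "--format=csv,noheader,nounits",
]


def parse_gpu_memory(csv_text: str) -> List[Tuple[int, int, int]]:
    """Parse lines of 'index, used MiB, total MiB' into integer tuples."""
    rows = []
    for line in csv_text.strip().splitlines():
        index, used, total = (int(field.strip()) for field in line.split(","))
        rows.append((index, used, total))
    return rows


def read_gpu_memory() -> List[Tuple[int, int, int]]:
    """Run nvidia-smi and return per-GPU memory usage (requires an NVIDIA driver)."""
    result = subprocess.run(QUERY, capture_output=True, text=True, check=True)
    return parse_gpu_memory(result.stdout)


# Made-up sample output, so the parser can be tried without a GPU.
sample = "0, 5120, 20475\n1, 5098, 20475\n"
for index, used, total in parse_gpu_memory(sample):
    print(f"GPU {index}: {used}/{total} MiB ({used / total:.0%})")
```

Calling `read_gpu_memory()` before and after loading a model shows how much each card actually gained, which is more reliable than any estimate.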

Practical Strategies for Overcoming Out-of-Memory Errors

Let's be honest, the dreaded "out-of-memory" error is a common sight when dealing with LLMs. Here's how to tackle this problem:

Reduce batch size: Think of each batch as a group of tourists arriving at the airport. Smaller batches mean fewer people, making the airport less congested and reducing the strain on memory. Experiment with smaller batch sizes, reducing the number of tokens processed in a single iteration.

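Adjust the context length: The context length decides how many previous tokens the LLM remembers. It's like the airport's luggage capacity for each tourist. The model keeps a cache of keys and values for every token in the context (the KV cache), and that cache grows linearly with the context length, so reducing the context length directly reduces the memory needed for each request.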
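A rough way to see how context length translates into memory is to size the key/value cache that the model keeps per token. The sketch below assumes the published Llama 3 8B architecture (32 layers, 8 key/value heads, head dimension 128) and a 16-bit cache; these defaults are assumptions, so substitute the values from your own model's configuration.

```python
GIB = 1024 ** 3  # bytes per GiB


def kv_cache_gib(
    n_ctx: int,
    n_layers: int = 32,        # assumed: Llama 3 8B
    n_kv_heads: int = 8,       # assumed: grouped-query attention
    head_dim: int = 128,       # assumed
    bytes_per_value: int = 2,  # 16-bit cache entries
) -> float:
    """Approximate key/value cache size for one sequence, in GiB."""
    # The leading 2 accounts for one key and one value tensor per layer.
    total_bytes = 2 * n_layers * n_kv_heads * head_dim * n_ctx * bytes_per_value
    return total_bytes / GIB


for n_ctx in (2048, 4096, 8192):
    print(f"{n_ctx:>5} tokens -> {kv_cache_gib(n_ctx):.2f} GiB")
```

Under these assumptions, halving the context halves the cache, so this is one of the more predictable knobs to turn when you are a few hundred megabytes short.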

Utilize model parallelism: Think of model parallelism as dividing the task of processing luggage between multiple baggage handlers (GPUs). Each GPU handles a specific section of the LLM's parameters, minimizing the overall memory demand on each individual GPU.
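The idea of giving each GPU its own section of the model can be sketched as a simple partition of layer indices. This is an illustration only, not the placement logic of any particular framework; the function name and the 32-layer count (matching Llama 3 8B) are assumptions.

```python
from typing import List, Sequence


def split_layers(n_layers: int, shares: Sequence[float]) -> List[range]:
    """Assign contiguous blocks of layers to GPUs in proportion to `shares`."""
    total = sum(shares)
    bounds = [0]
    running = 0.0
    for share in shares:
        running += share
        bounds.append(round(n_layers * running / total))
    return [range(bounds[i], bounds[i + 1]) for i in range(len(shares))]


# Four identical GPUs, so each gets an equal share of the 32 layers.
parts = split_layers(32, [1, 1, 1, 1])
for gpu, layers in enumerate(parts):
    print(f"GPU {gpu}: layers {layers.start}-{layers.stop - 1} ({len(layers)} layers)")
```

With equal shares each card holds a quarter of the layers, and therefore roughly a quarter of the weights.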

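Implement mixed precision: It's like using different types of luggage for the same flight. Mixed precision combines F16 and F32 arithmetic to balance speed and memory efficiency. It is best known from training and fine-tuning; for inference, the equivalent step is to keep the weights in F16 or a quantized format rather than F32.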

Benchmarking Performance: Comparing Q4KM and F16 on RTX 4000 Ada 20GB x4

Let's put these strategies into practice. We'll compare the performance of Llama 3 8B with Q4KM and F16 quantization on our four RTX 4000 Ada 20GB GPUs.

Q4KM vs F16 Performance Analysis

Generation Speed: Q4KM delivers a considerably faster token generation speed than F16 (56.14 vs. 20.58 tokens/second).

Processing Speed: F16 outperforms Q4KM in token processing speed (4366.64 vs. 3369.24 tokens/second).

Memory Consumption: Q4KM triumphs with its remarkably lower memory consumption, requiring only ~10 GiB compared to F16's ~15 GiB.
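To put numbers on the comparison, the short script below computes the speed ratios directly from the figures in the table earlier in the article. Only the dictionary layout is new; the values are copied from that table.

```python
# Tokens per second, copied from the benchmark table.
results = {
    "Q4KM": {"processing": 3369.24, "generation": 56.14},
    "F16": {"processing": 4366.64, "generation": 20.58},
}

processing_ratio = results["F16"]["processing"] / results["Q4KM"]["processing"]
generation_ratio = results["Q4KM"]["generation"] / results["F16"]["generation"]

print(f"F16 prompt processing is {processing_ratio:.2f}x the Q4KM rate")
print(f"Q4KM generation is {generation_ratio:.2f}x the F16 rate")
```

The two ratios point in opposite directions, which is why the choice depends on whether your workload is dominated by reading long prompts or by writing long answers.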

Key takeaways:

- F16's processing advantage: It processes prompts faster and keeps the weights at full 16-bit precision, making it a good fit for prompt-heavy workloads when memory allows.

- Q4KM's memory efficiency and generation speed: It generates text faster and allows you to handle larger models or run more complex tasks in the limited memory environment.

Our Verdict:

The choice between Q4KM and F16 hinges on your priorities. If prompt processing throughput or full-precision weights matter most and you have the memory to spare, F16 is the winner. If memory constraints or generation speed are your biggest concern, Q4KM is the champion.

FAQ

1. What is the difference between Q4KM and F16 quantization?

Q4KM (llama.cpp's Q4_K_M, a 4-bit "k-quant" in its medium variant): This is a highly efficient quantization technique that stores most model parameters in about 4 bits each, saving significant memory.

F16 (Half-precision floating-point): F16 stores each parameter as a 16-bit floating-point number. In the benchmark above it processes prompts faster than Q4KM but generates tokens more slowly, and it consumes more memory.

2. Can I run Llama 3 70B on my RTX 4000 Ada 20GB x4?

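Unfortunately, the available data doesn't contain information about running Llama 3 70B on this specific setup. A single RTX 4000 Ada 20GB GPU is insufficient: at F16, 70 billion parameters at 2 bytes each come to about 140 GB of weights, which exceeds even the 80 GB of combined VRAM across all four cards. A 4-bit quantization needs roughly 35 to 45 GB for the weights, far more than one 20 GB card, so the model would have to be split across the GPUs. Whether that runs well on this setup is something the data here cannot confirm; if it does not, you might need a more powerful setup, potentially involving multiple higher-end GPUs.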

3. What are some other optimization techniques for LLMs?

Gradient accumulation: It's like taking multiple small luggage bags instead of one giant one. This technique, used during training and fine-tuning rather than inference, involves accumulating gradients over several small batches before updating model weights, allowing you to train with a larger effective batch size without exceeding memory constraints.
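A toy example may help show why gradient accumulation works. The sketch below uses a one-parameter model, y = w * x, with made-up data, and checks that averaging the gradients of two micro-batches gives the same result as one pass over the full batch. It is plain Python for illustration, not code from any training framework.

```python
def grad_mse(w, batch):
    """Gradient of the mean squared error for the model y = w * x."""
    return sum(2 * (w * x - y) * x for x, y in batch) / len(batch)


# Made-up (x, y) pairs for illustration.
data = [(1.0, 2.0), (2.0, 4.1), (3.0, 5.9), (4.0, 8.2)]
w = 0.5
learning_rate = 0.01

# Reference: gradient over the whole batch at once.
full = grad_mse(w, data)

# Accumulation: two equal micro-batches, each scaled by 1 / number of micro-batches.
micro_batches = [data[0:2], data[2:4]]
accumulated = 0.0
for micro_batch in micro_batches:
    accumulated += grad_mse(w, micro_batch) / len(micro_batches)

# A single weight update after all micro-batches have been processed.
w_updated = w - learning_rate * accumulated

print(f"full-batch gradient:  {full:.6f}")
print(f"accumulated gradient: {accumulated:.6f}")
```

Only one micro-batch has to be in memory at a time, which is where the saving comes from; the price is more forward and backward passes per weight update.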

Model pruning: This is like removing unused luggage to make space for essential items. Pruning involves eliminating unnecessary connections in the model's neural network, reducing its size and memory footprint.

Dynamic memory allocation: Think of this as a dynamic baggage sorting system that automatically adjusts based on the number of tourists arriving at the airport. Dynamic memory allocation allocates memory to different parts of your system based on their needs during runtime, maximizing efficiency.

Keywords

LLMs, Llama 3, NVIDIA RTX 4000 Ada, GPUs, memory management, quantization, Q4KM, F16, out-of-memory errors, token generation, token processing, performance optimization, deep learning, AI, machine learning.