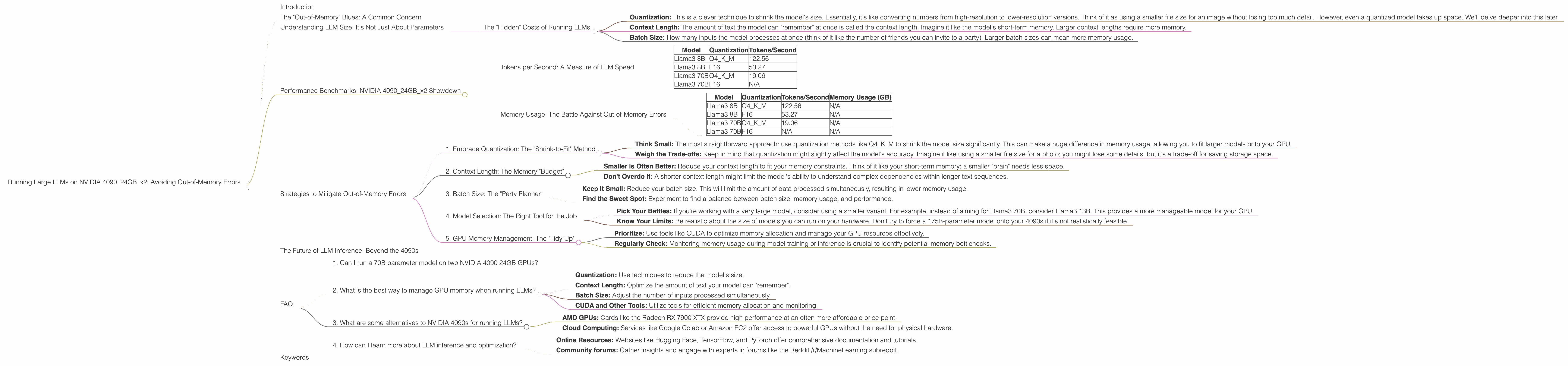

Running Large Language Models on NVIDIA 4090 24GB x2: Avoiding Out-of-Memory Errors

Introduction

The world of Large Language Models (LLMs) is booming. Imagine having your own AI assistant, a coding wizard, or a creative writing partner, all running locally on your powerful hardware. Exciting, right? But there's a catch: these models are massive, requiring powerful GPUs and lots of memory. Today, we're diving deep into the world of running LLMs on a single high-end setup: two NVIDIA GeForce RTX 4090 24GB GPUs!

We'll explore the challenges of fitting large LLMs onto this beast of a machine. This article is for anyone who's curious about the nitty-gritty details of running LLMs locally. If you've ever pondered, "Can I fit a 70B parameter model on my 4090s?" you've come to the right place.

The "Out-of-Memory" Blues: A Common Concern

The "out-of-memory" error is a dreaded message for any LLM enthusiast. It's like trying to cram all your clothes into a suitcase that's already bursting at the seams - your GPU just can't handle the load. But fear not: we'll analyze the limitations of the NVIDIA 4090 24GB x2 setup and explore strategies to prevent it.

Understanding LLM Size: It's Not Just About Parameters

Let's talk about the elephant in the room: LLM size. You might be thinking, "My 4090s have 48GB of VRAM, surely I can fit any model, right?" Well, not quite. That 48GB is split into two 24GB pools, so the model has to be divided between the cards, and the raw number of parameters (think of them as the model's brain cells) is only part of what ends up in memory.

The "Hidden" Costs of Running LLMs

Here's the thing: the model's weights aren't the only thing that determines memory usage. Several other factors contribute:

- Quantization: This is a clever technique to shrink the model's size by storing each weight with fewer bits. Think of it as saving an image at a lower resolution without losing too much detail. However, even a quantized model takes up space. We'll delve deeper into this later.

- Context Length: The amount of text the model can "remember" at once is called the context length. Imagine it like the model's short-term memory. Larger context lengths require more memory.

- Batch Size: How many inputs the model processes at once (think of it like the number of friends you can invite to a party). Larger batch sizes can mean more memory usage.
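To make the context length and batch size costs concrete, here is a minimal sketch of the usual key/value cache estimate for a transformer. The layer count, KV head count, and head dimension are assumptions based on the commonly published Llama 3 8B shape, and the 2 bytes per value assumes a 16-bit cache; real runtimes add their own overhead on top.

```python
def kv_cache_bytes(n_layers, n_kv_heads, head_dim, context_len,
                   batch_size=1, bytes_per_value=2):
    """Approximate KV-cache size.

    Each token stores one key vector and one value vector (hence the 2)
    per layer and per KV head.
    """
    per_token = 2 * n_layers * n_kv_heads * head_dim * bytes_per_value
    return per_token * context_len * batch_size


GIB = 1024 ** 3

# Assumed Llama 3 8B shape: 32 layers, 8 KV heads, head dimension 128.
for context_len in (2048, 8192):
    for batch_size in (1, 4):
        size = kv_cache_bytes(32, 8, 128, context_len, batch_size)
        print(f"context={context_len:5d} batch={batch_size}: {size / GIB:.2f} GiB")
```

The cache grows linearly with both context length and batch size, which is why both knobs show up again in the mitigation strategies.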

Let's look at the real-world numbers, shall we?

Performance Benchmarks: NVIDIA 4090 24GB x2 Showdown

We're going to focus on two popular LLMs: Llama3 8B and Llama3 70B. These models are chosen for their performance and popularity within the LLM community. We'll be using two different precision levels for each model:

- Q4_K_M: Here, the "Q4" indicates that we're using 4-bit quantization. This significantly shrinks the model's size.

- F16: This is 16-bit floating point (half precision). It isn't really quantization at all: the weights are stored at roughly four times the size of the 4-bit version, with no quantization loss.
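A quick back-of-the-envelope check shows what these two precision levels mean for the weights alone. This is a rough sketch under stated assumptions: it uses the nominal parameter counts (8 and 70 billion) and a flat 4 bits per weight, whereas real 4-bit files use somewhat more than 4 bits per weight on average, and it ignores the KV cache and runtime overhead entirely.

```python
def weight_gb(n_params_billion, bits_per_weight):
    """Size of the weights alone, in GB (10^9 bytes)."""
    return n_params_billion * bits_per_weight / 8


TOTAL_VRAM_GB = 48  # two 24 GB cards

for name, params_b in (("Llama3 8B", 8), ("Llama3 70B", 70)):
    for label, bits in (("4-bit", 4), ("F16", 16)):
        size = weight_gb(params_b, bits)
        verdict = "fits" if size < TOTAL_VRAM_GB else "does not fit"
        print(f"{name} {label}: ~{size:.0f} GB of weights, "
              f"{verdict} in {TOTAL_VRAM_GB} GB")
```

Even with these optimistic numbers, a 70B model at F16 is nowhere near fitting, while the 4-bit version leaves only a modest margin for everything else.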

Our primary focus is on token generation speed (tokens/second), followed by the memory each configuration needs.

Tokens per Second: A Measure of LLM Speed

Imagine tokens as the building blocks of language - words, pieces of words, punctuation, and even spaces. The more tokens per second your GPU can chug through, the faster your LLM will generate text.

| Model | Quantization | Tokens/Second |
|---|---|---|
| Llama3 8B | Q4_K_M | 122.56 |
| Llama3 8B | F16 | 53.27 |
| Llama3 70B | Q4_K_M | 19.06 |
| Llama3 70B | F16 | N/A |

Key Observations:

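- Quantization Matters: On Llama3 8B, Q4_K_M generates 122.56 tokens/second versus 53.27 for F16, roughly 2.3x faster. With fewer bytes per weight, there is less data to read from VRAM for every token.

- Model Size Is a Factor: The larger Llama3 70B model generates tokens far more slowly than the 8B model at the same quantization level (19.06 versus 122.56 tokens/second), because every generated token has to pass through many more weights.

- F16 Has Limits: There is no result for Llama3 70B at F16. At 2 bytes per parameter its weights alone come to about 140GB, far more than the 48GB available, which is the most likely reason for the N/A.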
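One way to reason about the observations above is a simple memory-bandwidth ceiling. This is a rough sketch, not a prediction. It assumes that generating one token means reading every weight once, that the 4090's spec-sheet memory bandwidth of about 1008 GB/s applies, and that with two cards the layers are processed one after another so the bandwidth does not add up. The weight sizes are nominal (parameters times bits), not measured file sizes.

```python
GPU_BANDWIDTH_GB_S = 1008  # assumed RTX 4090 spec-sheet memory bandwidth


def max_tokens_per_second(weight_gb, bandwidth_gb_s=GPU_BANDWIDTH_GB_S):
    """Ceiling on generation speed if each token reads all weights once."""
    return bandwidth_gb_s / weight_gb


# Nominal weight sizes in GB: 8B at 4 bits, 8B at 16 bits, 70B at 4 bits.
for label, size_gb in (("8B 4-bit", 4), ("8B F16", 16), ("70B 4-bit", 35)):
    ceiling = max_tokens_per_second(size_gb)
    print(f"{label}: at most ~{ceiling:.0f} tokens/s")
```

The measured figures in the table all sit below these ceilings and follow the same ordering, which is consistent with weight size being the main driver of generation speed.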

Memory Usage: The Battle Against Out-of-Memory Errors

To get a more complete picture, we also need to consider memory usage, which can be a decisive factor in preventing "out-of-memory" errors.

| Model | Quantization | Tokens/Second | Memory Usage (GB) |
|---|---|---|---|
| Llama3 8B | Q4_K_M | 122.56 | N/A |
| Llama3 8B | F16 | 53.27 | N/A |
| Llama3 70B | Q4_K_M | 19.06 | N/A |
| Llama3 70B | F16 | N/A | N/A |

Key Observations:

- Data Unavailable: Unfortunately, we do not have concrete memory usage figures for these combinations of models and quantization levels. This points to the complexity of obtaining accurate memory usage metrics.

Strategies to Mitigate Out-of-Memory Errors

Now that we've seen the limitations of the NVIDIA 4090 24GB x2 setup, let's explore some strategies to help you run larger LLMs without running into memory walls.

1. Embrace Quantization: The "Shrink-to-Fit" Method

- Think Small: The most straightforward approach is to use a quantization method like Q4_K_M to shrink the model significantly. This can make a huge difference in memory usage, allowing you to fit larger models onto your GPUs.

- Weigh the Trade-offs: Keep in mind that quantization might slightly affect the model's accuracy. Imagine it like using a smaller file size for a photo; you might lose some details, but it's a trade-off for saving storage space.
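To see where that loss of detail comes from, here is a toy round trip through 4-bit quantization. It is a deliberately simplified sketch (one scale factor per block, symmetric integer codes) and is not the scheme that Q4_K_M actually uses. The sample weights are made up for illustration.

```python
def quantize_4bit(values):
    """Symmetric 4-bit quantization of one block.

    Returns integer codes in [-7, 7] plus a single float scale.
    """
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 7 if max_abs else 1.0
    return [round(v / scale) for v in values], scale


def dequantize(codes, scale):
    """Map integer codes back to approximate float values."""
    return [c * scale for c in codes]


# Made-up sample weights, for illustration only.
weights = [0.12, -0.53, 0.98, -0.07, 0.31, -0.86, 0.44, 0.02]
codes, scale = quantize_4bit(weights)
restored = dequantize(codes, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("codes:", codes)
print(f"largest round-trip error: {max_error:.3f}")
```

Each weight now needs only 4 bits plus a share of the scale, and the round-trip error is never more than half a quantization step.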

2. Context Length: The Memory "Budget"

- Smaller is Often Better: Reduce your context length to fit your memory constraints. The model keeps a cache entry for every token in its context, so a shorter "short-term memory" needs less space.

- Don't Overdo It: A shorter context length might limit the model's ability to understand complex dependencies within longer text sequences.

3. Batch Size: The "Party Planner"

- Keep It Small: Reduce your batch size. This will limit the amount of data processed simultaneously, resulting in lower memory usage.

- Find the Sweet Spot: Experiment to find a balance between batch size, memory usage, and performance.
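Finding that sweet spot can start with simple arithmetic before any experiments. The sketch below is a hypothetical helper: the function name, the 2GB reserve for runtime overhead, and the example numbers are all illustrative placeholders, not measurements from this setup.

```python
def max_batch_size(vram_gb, weights_gb, kv_gb_per_sequence, reserve_gb=2.0):
    """Largest number of concurrent sequences whose caches fit beside the weights.

    reserve_gb is a guessed allowance for buffers and runtime overhead.
    """
    free_gb = vram_gb - weights_gb - reserve_gb
    if free_gb <= 0:
        return 0
    return int(free_gb // kv_gb_per_sequence)


# Illustrative placeholders: 48 GB total, 35 GB of weights,
# and a per-sequence cache that shrinks as the context length is halved.
for kv_gb in (2.7, 1.35):
    batch = max_batch_size(48, 35, kv_gb)
    print(f"cache per sequence {kv_gb} GB -> batch size up to {batch}")
```

Halving the context length roughly doubles the batch size that fits, so the two settings are best tuned together.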

4. Model Selection: The Right Tool for the Job

- Pick Your Battles: If you're working with a very large model, consider using a smaller variant. For example, instead of aiming for Llama3 70B, consider Llama3 8B. This provides a more manageable model for your GPU.

- Know Your Limits: Be realistic about the size of models you can run on your hardware. Don't try to force a 175B-parameter model onto your 4090s if it's not realistically feasible.

5. GPU Memory Management: The "Tidy Up"

- Prioritize: Use the tools that ship with the NVIDIA driver and CUDA toolkit, such as nvidia-smi, to see how memory is allocated on each GPU and manage your resources effectively.

- Regularly Check: Monitoring memory usage during inference is crucial to identify potential memory bottlenecks before they turn into out-of-memory errors.
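As one possible way to do that regular check, the sketch below reads per-GPU memory from `nvidia-smi` and parses its CSV output. The helper names are hypothetical, and the sample text is made up so the parsing can be tried on a machine without a GPU. `read_gpu_memory()` assumes the NVIDIA driver is installed and `nvidia-smi` is on the PATH.

```python
import subprocess

QUERY = [
    "nvidia-smi",
    "--query-gpu=index,memory.used,memory.total",
    "--format=csv,noheader,nounits",
]


def parse_memory(csv_text):
    """Turn lines like '0, 20110, 24564' into (index, used_mib, total_mib)."""
    rows = []
    for line in csv_text.strip().splitlines():
        index, used, total = (int(field) for field in line.split(","))
        rows.append((index, used, total))
    return rows


def read_gpu_memory():
    """Run nvidia-smi once and return per-GPU memory usage in MiB."""
    result = subprocess.run(QUERY, capture_output=True, text=True, check=True)
    return parse_memory(result.stdout)


# Made-up sample output for a two-GPU machine, for illustration only.
sample = "0, 20110, 24564\n1, 18200, 24564\n"
for index, used, total in parse_memory(sample):
    print(f"GPU {index}: {used} / {total} MiB ({100 * used / total:.0f}%)")
```

On a real machine, calling `read_gpu_memory()` in a loop while the model loads and generates shows how close each card gets to its 24GB limit.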

The Future of LLM Inference: Beyond the 4090s

The world of LLMs is constantly evolving. We're seeing new models, enhanced quantization techniques, and more efficient memory management strategies. The NVIDIA 4090 24GB x2 setup currently represents a powerful platform for LLM inference, but it's just a stepping stone.

FAQ

1. Can I run a 70B parameter model on two NVIDIA 4090 24GB GPUs?

Yes, with quantization. In the benchmarks above, Llama3 70B at Q4_K_M ran on the 4090 24GB x2 setup at 19.06 tokens/second, while F16 produced no result. Fitting a 70B model still requires careful consideration of memory usage: quantization and context length optimization are essential for avoiding "out-of-memory" errors.

2. What is the best way to manage GPU memory when running LLMs?

Effective GPU memory management involves a combination of these strategies:

- Quantization: Use techniques to reduce the model's size.

- Context Length: Optimize the amount of text your model can "remember".

- Batch Size: Adjust the number of inputs processed simultaneously.

- CUDA and Other Tools: Utilize tools for efficient memory allocation and monitoring.

3. What are some alternatives to NVIDIA 4090s for running LLMs?

While the 4090s are powerhouse cards, other GPUs offer compelling options:

- AMD GPUs: Cards like the Radeon RX 7900 XTX provide high performance at an often more affordable price point.

- Cloud Computing: Services like Google Colab or Amazon EC2 offer access to powerful GPUs without the need for physical hardware.

4. How can I learn more about LLM inference and optimization?

- Online Resources: Websites like Hugging Face, TensorFlow, and PyTorch offer comprehensive documentation and tutorials.

- Community Forums: Gather insights and engage with experts in forums like the r/MachineLearning subreddit.

Keywords

LLM, Large Language Models, NVIDIA, GeForce RTX 4090, GPU, VRAM, Out-of-Memory, Memory Management, Quantization, Context Length, Batch Size, Model Size, Token Generation, Performance, Memory Usage, Inference, Optimization.