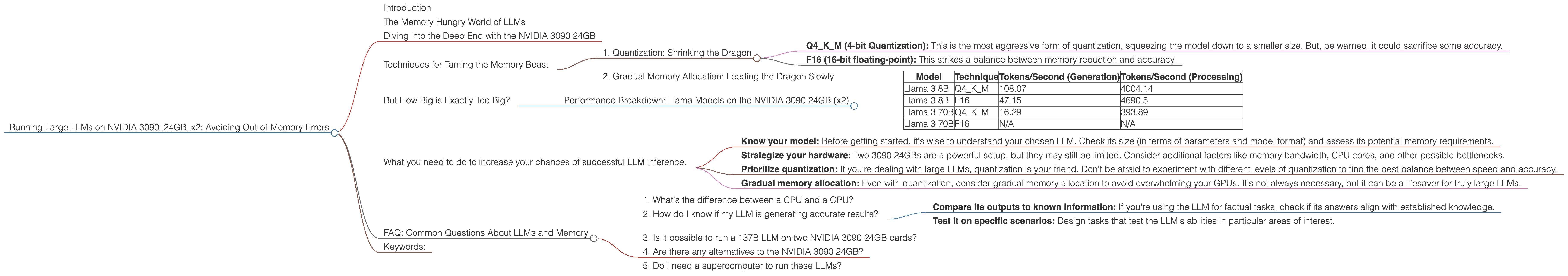

Running Large LLMs on NVIDIA 3090 24GB x2: Avoiding Out of Memory Errors

Introduction

Welcome to the world of local LLMs! If you're a developer or just a curious soul who's eager to explore the fascinating capabilities of these powerful language models, you've probably stumbled upon the question: "How do I run these beasts on my hardware without running out of memory?" And, if you're lucky enough to be rocking an NVIDIA 3090 24GB, you're probably wondering if you can push the limits even further.

This article is your guide to navigating the tricky waters of large language models, specifically focusing on the popular NVIDIA 3090 24GB card (and even two of them!). We'll dive into the challenges of running these models locally and uncover how to avoid those dreaded Out-of-Memory errors.

The Memory Hungry World of LLMs

Large Language Models (LLMs) are like the super-powered brains of the AI world. They can generate text, translate languages, write different kinds of creative content, and answer your questions in an informative way. But, just like our own brains, they require a lot of computational resources.

Imagine your LLM as a massive library filled with knowledge. The bigger the library, the more information it holds, and the more complex it becomes. In the LLM world, this "knowledge" is stored as parameters: numbers that all have to sit in memory while the model runs. The more parameters an LLM has, the more capable it tends to be, but also the hungrier it becomes for memory.

Think of it like a big, hungry dragon. If you don't give it enough memory, it'll throw a tantrum (or, in the case of LLMs, crash your program).

Diving into the Deep End with the NVIDIA 3090 24GB

Now, let's talk about the NVIDIA GeForce RTX 3090. This beast of a graphics card is like a high-performance sports car for your computer. It packs 24GB of GDDR6X memory, which is more than most consumer GPUs offer, and two of them give you 48GB in total, split across the two cards.

With all that memory, you'd expect it to handle any LLM you throw at it, right? Well, not quite. While the 3090 24GB is a powerful card, the size of LLMs has been growing at an exponential rate. Even with two of them, you might face some memory limitations with the largest models.

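The bigger challenge isn't just the raw size of the weights but also how the model is run: on top of the weights, inference needs memory for the KV cache (which grows with context length), activations, and runtime buffers.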
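One run-time cost that sits on top of the weights is the key/value (KV) cache, which grows with the context length. The sketch below estimates it for a single sequence. The layer counts and head shapes are assumptions taken from the published Llama 3 configurations, and the 8192-token context and 16-bit cache values are example choices, not measurements.

```python
GIB = 1024 ** 3

def kv_cache_gib(n_layers, n_kv_heads, head_dim, context_len, bytes_per_value=2):
    """Size of the key/value cache for one sequence, in GiB.

    The leading factor 2 accounts for storing both keys and values.
    """
    n_bytes = 2 * n_layers * n_kv_heads * head_dim * context_len * bytes_per_value
    return n_bytes / GIB

# Assumed Llama 3 shapes: 8 KV heads of dimension 128,
# with 32 layers for the 8B model and 80 layers for the 70B model.
print("Llama 3 8B :", kv_cache_gib(32, 8, 128, 8192), "GiB")
print("Llama 3 70B:", kv_cache_gib(80, 8, 128, 8192), "GiB")
```

Under these assumptions the cache takes 1 GiB for the 8B model and 2.5 GiB for the 70B model at 8192 tokens, which is memory you have to leave free after loading the weights.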

Techniques for Taming the Memory Beast

There are a few tricks up our sleeve to help us fit these hungry LLMs into our 3090 24GB (x2) memory and avoid running out of space. Let's break down a couple of methods:

1. Quantization: Shrinking the Dragon

Quantization is like putting the dragon on a diet. It reduces the size of the model by storing each weight with fewer bits (think of it as swapping your bulky library for an e-book). It makes the LLM more compact and easier to fit in memory without sacrificing too much accuracy.

There are different levels of quantization:

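- Q4_K_M (4-bit quantization): A 4-bit format that squeezes the model down to roughly a third of its F16 size. It's one of the more aggressive options in common use (lower-bit variants exist), so be warned that it can sacrifice some accuracy.

- F16 (16-bit floating-point): Half precision, at 2 bytes per parameter. It halves memory relative to 32-bit floats with essentially no loss in accuracy, but it is the largest format considered here.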
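To see what these formats mean in gigabytes, here is a minimal sketch that estimates the size of the weights alone. The parameter counts are the nominal 8B and 70B figures, and the Q4_K_M value of about 4.85 bits per weight is an approximation assumed for illustration (the format mixes block types, so the real average varies by model).

```python
GIB = 1024 ** 3

# Bits per weight. F16 is exactly 16; the Q4_K_M figure is an assumed average.
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.85}

def weight_gib(n_params: float, fmt: str) -> float:
    """Approximate size of the model weights alone, in GiB."""
    return n_params * BITS_PER_WEIGHT[fmt] / 8 / GIB

for name, n_params in [("Llama 3 8B", 8e9), ("Llama 3 70B", 70e9)]:
    for fmt in BITS_PER_WEIGHT:
        print(f"{name} {fmt}: ~{weight_gib(n_params, fmt):.1f} GiB")
```

Under these assumptions, the 70B model comes to about 130 GiB at F16 and about 40 GiB at Q4_K_M, so only the quantized version has a chance of fitting into the 48GB that two cards provide.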

2. Gradual Memory Allocation: Feeding the Dragon Slowly

You can't always shove the whole dragon into one GPU's memory at once. You need to feed it bit by bit. This technique, which we'll call "gradual memory allocation" (you'll also see it described as model splitting or layer offloading), spreads the model out so that no single device is overwhelmed.

How do you do it? You divide the LLM into smaller chunks, typically groups of transformer layers, and place each chunk on a device (the first 3090, the second 3090, or system RAM), loading chunks as they are needed rather than all at once. It's a bit like reading a book one page at a time.
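The chunking idea can be sketched as a simple planning step: given the free memory on each GPU and an estimated size per layer, decide how many consecutive layers each device gets. This is an illustrative sketch, not how any particular runtime implements it. The function name `split_layers`, the 0.5 GiB per-layer size, and the 22 GiB usable per card are all hypothetical, and the sketch ignores the KV cache and the embedding and output layers. In practice the inference runtime does the placement for you; llama.cpp, for example, exposes `--n-gpu-layers` and `--tensor-split` for this purpose.

```python
def split_layers(n_layers: int, free_gib: list[float], layer_gib: float) -> list[int]:
    """Assign consecutive layers to devices, filling each device in order.

    Returns how many layers each device gets; raises if the model does not fit.
    """
    counts = []
    remaining = n_layers
    for free in free_gib:
        fit = min(remaining, int(free // layer_gib))  # whole layers only
        counts.append(fit)
        remaining -= fit
    if remaining:
        raise MemoryError(f"{remaining} layers do not fit on the given devices")
    return counts

# Hypothetical example: 80 layers of ~0.5 GiB each, two cards with 22 GiB usable.
print(split_layers(80, [22.0, 22.0], 0.5))  # [44, 36]
```

If the layers do not fit, the function raises instead of silently overcommitting, which mirrors the choice you face in practice: quantize further, or move the remaining layers to system RAM and accept slower inference.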

But How Big is Exactly Too Big?

Now, let's see how these techniques perform with different LLMs on our trusty NVIDIA 3090 24GB (x2) duo. We'll look at Llama models, a popular choice for local inference.

Performance Breakdown: Llama Models on the NVIDIA 3090 24GB (x2)

| Model | Format | Generation (tokens/s) | Prompt processing (tokens/s) |
|---|---|---|---|
| Llama 3 8B | Q4_K_M | 108.07 | 4004.14 |
| Llama 3 8B | F16 | 47.15 | 4690.5 |
| Llama 3 70B | Q4_K_M | 16.29 | 393.89 |
| Llama 3 70B | F16 | N/A | N/A |

Observations:

- Speed vs. Size: The Llama 3 8B model runs significantly faster than the Llama 3 70B. With Q4_K_M, generation is about 6.6x faster (108.07 vs. 16.29 tokens/s) and prompt processing about 10x faster (4004.14 vs. 393.89 tokens/s). This is no surprise: the smaller model has far fewer weights to read for every token.

- Quantization Impact: For the Llama 3 8B model, Q4_K_M generates about 2.3x faster than F16 (108.07 vs. 47.15 tokens/s), while prompt processing is about 15% slower (4004.14 vs. 4690.5 tokens/s). There is no F16 result for Llama 3 70B, so we can't make the same comparison for that model.

- Memory Limitations: The missing F16 result for Llama 3 70B is most likely a memory problem. At 2 bytes per parameter, 70 billion parameters need roughly 140GB for the weights alone, far more than the 48GB that two 3090 24GB cards provide, so an OOM error is the expected outcome.
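The ratios behind these observations can be reproduced directly from the table. The snippet below is just arithmetic on the reported numbers; the dictionary layout and the `speed_ratio` helper are illustrative names, not part of any benchmark tool.

```python
# (model, format) -> (generation tokens/s, prompt processing tokens/s)
RESULTS = {
    ("Llama 3 8B", "Q4_K_M"): (108.07, 4004.14),
    ("Llama 3 8B", "F16"): (47.15, 4690.5),
    ("Llama 3 70B", "Q4_K_M"): (16.29, 393.89),
}
GENERATION, PROCESSING = 0, 1

def speed_ratio(a, b, column):
    """Throughput of configuration `a` divided by that of `b` in one column."""
    return RESULTS[a][column] / RESULTS[b][column]

q4_8b = ("Llama 3 8B", "Q4_K_M")
f16_8b = ("Llama 3 8B", "F16")
q4_70b = ("Llama 3 70B", "Q4_K_M")

print(f"8B Q4_K_M vs F16, generation:  {speed_ratio(q4_8b, f16_8b, GENERATION):.2f}x")
print(f"8B Q4_K_M vs F16, processing:  {speed_ratio(q4_8b, f16_8b, PROCESSING):.2f}x")
print(f"8B vs 70B (Q4_K_M), generation: {speed_ratio(q4_8b, q4_70b, GENERATION):.2f}x")
print(f"8B vs 70B (Q4_K_M), processing: {speed_ratio(q4_8b, q4_70b, PROCESSING):.2f}x")
```

A ratio below 1 means the first configuration is slower, which is what happens for prompt processing when comparing Q4_K_M to F16 on the 8B model.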

What you need to do to increase your chances of successful LLM inference:

- Know your model: Before getting started, it's wise to understand your chosen LLM. Check its size (in terms of parameters and model format) and assess its potential memory requirements.

- Strategize your hardware: Two 3090 24GBs are a powerful setup, but they may still be limited. Consider additional factors like memory bandwidth, CPU cores, and other possible bottlenecks.

- Prioritize quantization: If you're dealing with large LLMs, quantization is your friend. Don't be afraid to experiment with different levels of quantization to find the best balance between speed and accuracy.

- Gradual memory allocation: Even with quantization, consider gradual memory allocation to avoid overwhelming your GPUs. It's not always necessary, but it can be a lifesaver for truly large LLMs.
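Before loading anything, it helps to check how much memory each card actually has free. The sketch below parses the CSV output of `nvidia-smi --query-gpu=index,memory.total,memory.used --format=csv,noheader,nounits`, which reports values in MiB. The sample string is hypothetical output for two 24GB cards, used so the example runs without a GPU; `query_free_gib` is the live version and assumes the NVIDIA driver is installed.

```python
import subprocess

QUERY = ["nvidia-smi", "--query-gpu=index,memory.total,memory.used",
         "--format=csv,noheader,nounits"]

def parse_gpu_memory(csv_text: str) -> dict[int, float]:
    """Map GPU index -> free memory in GiB, from nvidia-smi CSV output in MiB."""
    free = {}
    for line in csv_text.strip().splitlines():
        index, total_mib, used_mib = (field.strip() for field in line.split(","))
        free[int(index)] = (int(total_mib) - int(used_mib)) / 1024
    return free

def query_free_gib() -> dict[int, float]:
    """Run nvidia-smi and return free memory per GPU (needs the NVIDIA driver)."""
    out = subprocess.run(QUERY, capture_output=True, text=True, check=True).stdout
    return parse_gpu_memory(out)

# Hypothetical sample output, used here instead of a live query.
sample = "0, 24576, 1024\n1, 24576, 512\n"
print(parse_gpu_memory(sample))  # {0: 23.0, 1: 23.5}
```

Comparing these numbers against the estimated model size tells you up front whether a given model and quantization level are worth trying, instead of finding out from an OOM error.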

FAQ: Common Questions About LLMs and Memory

1. What's the difference between a CPU and a GPU?

The CPU is like the "brain" of your computer. It handles the general tasks, like running your web browser. The GPU is like a specialized "brain" for handling graphics. It's incredibly good at parallel processing, making it ideal for complex calculations and data manipulation, which is what makes it perfect for running LLMs.

2. How do I know if my LLM is generating accurate results?

This is a trickier question. It's not always obvious if an LLM is producing accurate outputs, especially for creative tasks like writing stories or generating code.

You can:

- Compare its outputs to known information: If you're using the LLM for factual tasks, check if its answers align with established knowledge.

- Test it on specific scenarios: Design tasks that test the LLM's abilities in particular areas of interest.

3. Is it possible to run a 137B LLM on two NVIDIA 3090 24GB cards?

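It's possible but challenging, and it depends on the model format, quantization, and memory allocation.

Even at 4 bits per weight, a 137B model needs roughly 69GB for the weights alone, which is more than the 48GB the two cards provide. To run it you would need more aggressive quantization (around 3 bits per weight or less) or to offload part of the model to system RAM, and either way you'll likely see a significant impact on accuracy or speed.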
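A quick way to frame this question is to turn it around and ask how many bits per weight the available memory allows. This is a back-of-the-envelope sketch: it treats the two cards as 48 GiB in total, and the 4 GiB reserve for the KV cache and runtime buffers is a hypothetical figure chosen for illustration.

```python
GIB = 1024 ** 3

def max_bits_per_weight(n_params: float, vram_gib: float, reserve_gib: float = 0.0) -> float:
    """Largest average bits per weight whose weights fit in the VRAM left after the reserve."""
    usable_bytes = (vram_gib - reserve_gib) * GIB
    return usable_bytes * 8 / n_params

print(f"137B, no reserve:    {max_bits_per_weight(137e9, 48):.2f} bits/weight")
print(f"137B, 4 GiB reserve: {max_bits_per_weight(137e9, 48, reserve_gib=4):.2f} bits/weight")
```

Both results land at or below about 3 bits per weight, which is why a 4-bit quantization of a 137B model cannot sit entirely in GPU memory on this setup.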

4. Are there any alternatives to the NVIDIA 3090 24GB?

Of course! The NVIDIA 3090 isn't the only option. The NVIDIA RTX 4090 has the same 24GB of memory but more processing power. You can also explore GPUs from AMD like the Radeon RX 7900 XTX, which also provides 24GB of memory.

5. Do I need a supercomputer to run these LLMs?

No, you definitely don't need a supercomputer to run LLMs. Even a decent gaming PC with a dedicated GPU can handle smaller LLMs.

However, running larger models effectively will require a more powerful PC and potentially a more powerful GPU.

Keywords:

Large Language Models, LLMs, NVIDIA 3090 24GB, GPU, Memory, Out-of-Memory, OOM, Quantization, Q4_K_M, F16, Token, Generation, Processing, Performance, Inference, Llama, Llama 3, Llama 3 8B, Llama 3 70B, CPU, Memory Allocation, Supercomputer, Hardware, Software, Deep Learning, AI, Machine Learning, NLP, Natural Language Processing, Data Science, Large Language Model Inference, Local Large Language Models