ROI Analysis: Justifying the Investment in NVIDIA RTX A6000 48GB for AI Workloads

Introduction: Embracing the Power of Local LLMs

The world of artificial intelligence (AI) is abuzz with the excitement of large language models (LLMs). These powerful tools are transforming industries from healthcare to finance, offering unprecedented capabilities for text generation, translation, and even coding. While cloud-based LLMs like ChatGPT are accessible to everyone, running LLMs locally offers significant advantages, including lower latency, improved privacy, and greater control.

This article explores the Return on Investment (ROI) of the NVIDIA RTX A6000 48GB GPU for local LLM workloads, specifically focusing on the Llama 3 family of models. We'll delve into the performance metrics, highlighting the benefits of this powerful hardware for unleashing the full potential of your AI projects.

Llama 3: A New Era of Local Language Models

The Llama 3 series of open-source LLMs is a game changer for anyone looking to run AI models locally. With its impressive performance and availability in various sizes, Llama 3 is becoming the go-to choice for developers and enthusiasts alike. Imagine having a ChatGPT-like experience without relying on cloud services, or running AI-powered chatbots and applications right on your own machine.

NVIDIA RTX A6000 48GB: The Powerhouse for Local LLMs

Now, let's dive into the heart of this investment: the NVIDIA RTX A6000 48GB. This workstation graphics card is designed for demanding workloads, including AI and machine learning. With 48GB of GDDR6 memory and the Ampere architecture, it has enough VRAM to hold models that do not fit on most consumer cards, which makes it well suited to running large language models locally.

Token Generation Speed: The Power of Local Processing

Token generation speed measures how many tokens a model produces per second. A token is the unit of text the model reads and writes, typically a word or a piece of a word. The higher the number of tokens per second, the faster your AI model can generate text, translate languages, or complete other tasks.

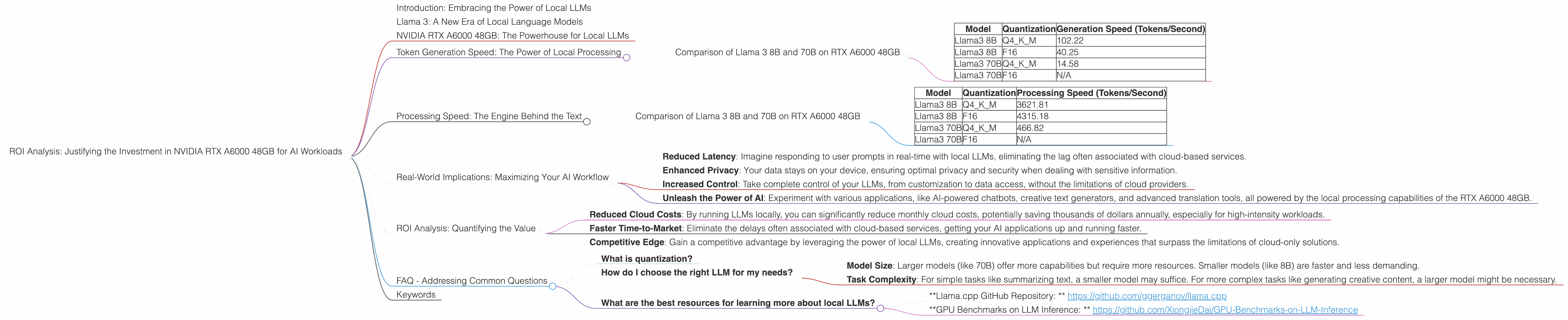

Comparison of Llama 3 8B and 70B on RTX A6000 48GB

Let's analyze the token generation speed of the Llama 3 8B and 70B models on the RTX A6000 48GB. Data is based on tests conducted by ggerganov and XiongjieDai.

| Model | Quantization | Generation Speed (Tokens/Second) |
|---|---|---|
| Llama 3 8B | Q4_K_M | 102.22 |
| Llama 3 8B | F16 | 40.25 |
| Llama 3 70B | Q4_K_M | 14.58 |
| Llama 3 70B | F16 | N/A |

Key Observations:

- Quantization: The Llama 3 8B model generates about 2.5 times faster with Q4_K_M quantization than at F16 (102.22 vs. 40.25 tokens/second). Q4_K_M stores each weight in roughly 4 bits instead of 16, so far less data has to be read from GPU memory for every generated token, and token generation is typically limited by memory bandwidth. Think of it like compressing a video file to make it load faster.

- Model Size Matters: The 8B model generates roughly seven times faster than the 70B model at the same quantization (102.22 vs. 14.58 tokens/second). This is expected, as the larger model has many more parameters to process for each token.

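- Hardware Efficiency: Although generation is slower for the 70B model, the RTX A6000 48GB still runs it on a single card at 14.58 tokens/second with 4-bit quantization. The 70B F16 result is N/A, which is consistent with memory limits: 70 billion parameters at 2 bytes each need about 140GB for the weights alone, far more than the card's 48GB.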
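
To make the ratios behind these observations concrete, here is a minimal sketch that recomputes them from the table. The helper names `speedup` and `seconds_to_generate` are illustrative, and the 500-token response length is an arbitrary assumption rather than part of the benchmark.

```python
# Generation speeds (tokens/second) copied from the table above.
generation_tps = {
    ("Llama 3 8B", "Q4_K_M"): 102.22,
    ("Llama 3 8B", "F16"): 40.25,
    ("Llama 3 70B", "Q4_K_M"): 14.58,
}


def speedup(fast, slow):
    """Return how many times faster configuration `fast` is than `slow`."""
    return generation_tps[fast] / generation_tps[slow]


def seconds_to_generate(config, n_tokens):
    """Estimate the time to emit n_tokens at the measured rate."""
    return n_tokens / generation_tps[config]


quant_gain = speedup(("Llama 3 8B", "Q4_K_M"), ("Llama 3 8B", "F16"))
size_gain = speedup(("Llama 3 8B", "Q4_K_M"), ("Llama 3 70B", "Q4_K_M"))

print(f"8B Q4_K_M vs. 8B F16:     {quant_gain:.2f}x")
print(f"8B Q4_K_M vs. 70B Q4_K_M: {size_gain:.2f}x")

# 500 tokens is an assumed response length, used only for illustration.
for config in generation_tps:
    print(config, f"{seconds_to_generate(config, 500):.1f} s for 500 tokens")
```

The estimate assumes the measured rate holds for the whole response; in practice throughput can drift as the context grows.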

Processing Speed: The Engine Behind the Text

Now, let's look at another crucial performance metric: processing speed. This measures how quickly the model reads the input prompt, in tokens per second, before it starts generating a response. It matters most for tasks with long inputs, such as summarizing or translating extensive documents.

Comparison of Llama 3 8B and 70B on RTX A6000 48GB

| Model | Quantization | Processing Speed (Tokens/Second) |
|---|---|---|
| Llama 3 8B | Q4_K_M | 3621.81 |
| Llama 3 8B | F16 | 4315.18 |
| Llama 3 70B | Q4_K_M | 466.82 |
| Llama 3 70B | F16 | N/A |

Key Observations:

- Processing Powerhouse: The RTX A6000 48GB processes prompts far faster than it generates text: about 3,600 to 4,300 tokens/second for the 8B model and about 467 tokens/second for the 70B model. This makes it well suited to tasks with large inputs, like summarizing long articles or translating extensive documents.

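- Quantization's Impact: Unlike generation, prompt processing for the 8B model is faster at F16 than with 4-bit quantization (4315.18 vs. 3621.81 tokens/second). No such comparison is possible for the 70B model, because no F16 result is available.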
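
The two metrics combine into a rough end-to-end response time: the prompt is read at the processing speed, then the answer is produced at the generation speed. The sketch below assumes these two phases simply add up and ignores model loading, sampling overhead, and any slowdown at long context. The function name `response_time` and the 2,000-token prompt with a 300-token answer are illustrative assumptions.

```python
# Throughput figures (tokens/second) copied from the two tables above.
SPEEDS = {
    "8B Q4_K_M": {"prompt": 3621.81, "generation": 102.22},
    "8B F16": {"prompt": 4315.18, "generation": 40.25},
    "70B Q4_K_M": {"prompt": 466.82, "generation": 14.58},
}


def response_time(config, prompt_tokens, output_tokens):
    """Estimate seconds to read the prompt and then generate the answer."""
    speeds = SPEEDS[config]
    prefill_seconds = prompt_tokens / speeds["prompt"]
    decode_seconds = output_tokens / speeds["generation"]
    return prefill_seconds + decode_seconds


# Assumed workload: a 2,000-token prompt and a 300-token answer.
for config in SPEEDS:
    print(f"{config}: {response_time(config, 2000, 300):.1f} s")
```

For this assumed workload the generation phase dominates the total in every configuration, which is why the generation speed is usually the number that decides how responsive a local model feels.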

Real-World Implications: Maximizing Your AI Workflow

These performance metrics reveal the significant advantages of using the NVIDIA RTX A6000 48GB for running Llama 3 models locally. Faster token generation and processing speeds translate into tangible benefits for your AI applications:

- Reduced Latency: Imagine responding to user prompts in real-time with local LLMs, eliminating the lag often associated with cloud-based services.

- Enhanced Privacy: Your data stays on your device, so sensitive information never has to be sent to a third-party service.

- Increased Control: Take complete control of your LLMs, from customization to data access, without the limitations of cloud providers.

- Unleash the Power of AI: Experiment with various applications, like AI-powered chatbots, creative text generators, and advanced translation tools, all powered by the local processing capabilities of the RTX A6000 48GB.

ROI Analysis: Quantifying the Value

The RTX A6000 48GB is a significant investment, but its performance and the benefits it brings to local LLM workloads make it a wise choice for developers and businesses alike.

Consider the following:

- Reduced Cloud Costs: By running LLMs locally, you can reduce or eliminate recurring cloud GPU and API charges. The saving depends on how many hours of cloud compute the card replaces each month, and it is largest for sustained, high-intensity workloads.

- Faster Time-to-Market: Eliminate the delays often associated with cloud-based services, getting your AI applications up and running faster.

- Competitive Edge: Gain a competitive advantage by leveraging the power of local LLMs, creating innovative applications and experiences that surpass the limitations of cloud-only solutions.
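
One way to put a number on the cloud-cost argument is a simple break-even calculation. The sketch below is only a starting point: every figure in `example` (hardware price, power draw, electricity rate, cloud hourly rate, monthly usage) is a hypothetical placeholder, not a quoted price, and should be replaced with your own numbers. It also ignores the host machine, maintenance, and resale value.

```python
from dataclasses import dataclass


@dataclass
class CostAssumptions:
    gpu_price_usd: float            # one-time hardware cost
    power_draw_kw: float            # assumed average draw under load
    electricity_usd_per_kwh: float  # local electricity rate
    cloud_usd_per_hour: float       # comparable rented GPU instance
    hours_per_month: float          # hours of inference actually used


def monthly_savings(c):
    """Cloud spend avoided per month, minus local electricity cost."""
    cloud_cost = c.cloud_usd_per_hour * c.hours_per_month
    power_cost = c.power_draw_kw * c.electricity_usd_per_kwh * c.hours_per_month
    return cloud_cost - power_cost


def break_even_months(c):
    """Months until the savings cover the hardware, or None if they never do."""
    savings = monthly_savings(c)
    if savings <= 0:
        return None
    return c.gpu_price_usd / savings


# Placeholder values for illustration only.
example = CostAssumptions(
    gpu_price_usd=4500.0,
    power_draw_kw=0.3,
    electricity_usd_per_kwh=0.15,
    cloud_usd_per_hour=1.0,
    hours_per_month=200.0,
)

print(f"Monthly savings: ${monthly_savings(example):.2f}")
print(f"Break-even after {break_even_months(example):.1f} months")
```

The result is most sensitive to `hours_per_month`: a card that sits idle most of the time takes far longer to pay for itself than one serving requests all day.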

FAQ - Addressing Common Questions

What is quantization?

Quantization is a technique used to reduce the size of a model by reducing the number of bits used to represent each value. Imagine having a picture with millions of different colors, and then simplifying it to use just a few basic colors. Quantization does a similar thing for AI models, making them smaller and faster at the cost of some precision.
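
The idea can be shown with a toy example. The sketch below rounds a handful of floating-point weights to 4-bit integers that share a single scale factor, then converts them back to see how much precision was lost. This is a deliberately simplified scheme for illustration; the quantization formats used by llama.cpp work on blocks of weights and are more elaborate. The function names and sample weights are made up for this example.

```python
def quantize_4bit(weights):
    """Map floats to integers in [-8, 7] using one shared scale factor."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    quantized = [max(-8, min(7, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate floats from the 4-bit integers."""
    return [q * scale for q in quantized]


# Made-up sample weights, for illustration only.
weights = [0.12, -0.53, 0.98, -0.09, 0.31]
q, scale = quantize_4bit(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("4-bit integers:", q)
print("restored:      ", [round(r, 2) for r in restored])
print(f"largest rounding error: {max_error:.3f}")
```

Each weight now needs 4 bits plus a share of the scale factor instead of 16 bits, and the rounding error is the price paid for that saving.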

How do I choose the right LLM for my needs?

The right LLM depends on your specific application and requirements. Consider factors like:

- Model Size: Larger models (like 70B) offer more capabilities but require more resources. Smaller models (like 8B) are faster and less demanding.

- Task Complexity: For simple tasks like summarizing text, a smaller model may suffice. For more complex tasks like generating creative content, a larger model might be necessary.
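
A quick way to narrow the choice is to check whether a model's weights fit in GPU memory at all. The sketch below estimates weight size as parameters times bits per weight. The parameter counts are rounded to 8 and 70 billion, the 4.8 bits per weight for 4-bit quantization is an assumed average that varies by model and format, and `headroom_gb` is a hypothetical allowance for the context cache and runtime buffers.

```python
VRAM_GB = 48  # memory on the RTX A6000

# Rounded parameter counts, in billions.
PARAMS_BILLION = {"Llama 3 8B": 8, "Llama 3 70B": 70}

# Bits per weight; the 4-bit figure is an assumed average, not an exact value.
BITS_PER_WEIGHT = {"F16": 16, "Q4_K_M": 4.8}


def weight_size_gb(params_billion, bits_per_weight):
    """Approximate size of the weights in GB (1 GB = 10^9 bytes)."""
    return params_billion * bits_per_weight / 8


def fits(model, fmt, headroom_gb=4):
    """Check whether weights plus an assumed headroom fit in VRAM."""
    size = weight_size_gb(PARAMS_BILLION[model], BITS_PER_WEIGHT[fmt])
    return size + headroom_gb <= VRAM_GB


for model in PARAMS_BILLION:
    for fmt in BITS_PER_WEIGHT:
        size = weight_size_gb(PARAMS_BILLION[model], BITS_PER_WEIGHT[fmt])
        verdict = "fits" if fits(model, fmt) else "does not fit"
        print(f"{model} {fmt}: ~{size:.0f} GB, {verdict}")
```

Under these assumptions the 70B model fits only in its 4-bit form, which matches the missing F16 results in the benchmark tables.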

What are the best resources for learning more about local LLMs?

- *Llama.cpp GitHub Repository:* https://github.com/ggerganov/llama.cpp

- *GPU Benchmarks on LLM Inference:* https://github.com/XiongjieDai/GPU-Benchmarks-on-LLM-Inference

Keywords

NVIDIA RTX A6000, RTX A6000 48GB, LLM, Large Language Model, Llama 3, Llama 3 8B, Llama 3 70B, Token Generation Speed, Processing Speed, Quantization, Q4_K_M, F16, AI Workloads, Local LLMs, ROI, Return on Investment, AI, Artificial Intelligence, GPU, Graphics Processing Unit, Performance Metrics