ROI Analysis: Justifying the Investment in NVIDIA RTX 5000 Ada 32GB for AI Workloads

Introduction: The Power of Local LLMs

The world of artificial intelligence (AI) is abuzz with the excitement of large language models (LLMs) like ChatGPT, Bard, and Claude. These powerful AI systems can generate text, translate languages, write different kinds of creative content, and answer your questions in an informative way. But what if you want to run these models locally, on your own machine, without relying on cloud services? This is where the power of dedicated hardware comes into play, and the NVIDIA RTX 5000 Ada 32GB is a prime contender for unleashing the potential of local AI.

Imagine having the power of a cutting-edge LLM right at your fingertips, ready to respond instantly to your every command. This opens up a world of possibilities for developers, researchers, and even everyday users who want to explore the exciting capabilities of AI without the constraints of cloud-based solutions.

This article delves into the ROI of investing in the NVIDIA RTX 5000 Ada 32GB for AI workloads, particularly for running large language models. We'll analyze the performance of this GPU on specific LLM models and showcase how it can significantly enhance your AI endeavors.

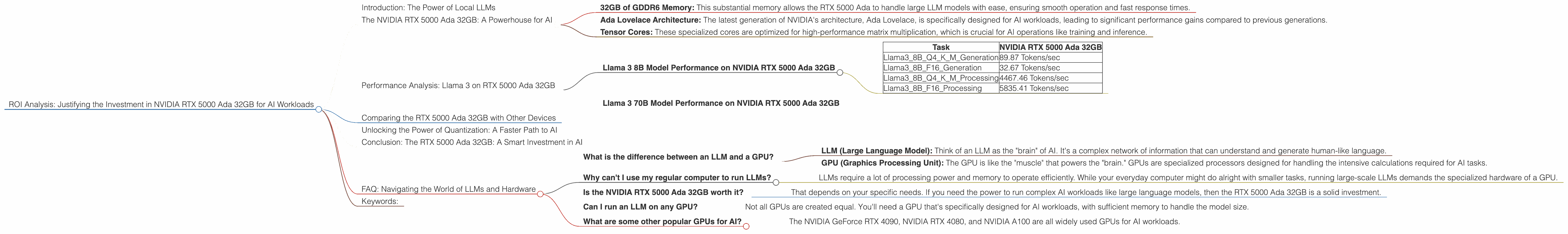

The NVIDIA RTX 5000 Ada 32GB: A Powerhouse for AI

The NVIDIA RTX 5000 Ada 32GB is a professional workstation graphics card built on NVIDIA's Ada Lovelace architecture. Graphics features such as DLSS 3 and hardware ray tracing matter little for LLM work; what counts for AI is the card's 32GB of memory and its Tensor Cores, which accelerate the matrix math behind training and inference.

Here's a glimpse of its key features:

- 32GB of GDDR6 Memory: Enough to hold a model the size of Llama 3 8B entirely on the card, even at full F16 precision, with room left over for the context.

- Ada Lovelace Architecture: The GPU generation that succeeded Ampere. It is a general-purpose graphics and compute architecture whose fourth-generation Tensor Cores bring performance gains for AI work over previous generations.

- Tensor Cores: These specialized cores are optimized for high-performance matrix multiplication, which is crucial for AI operations like training and inference.

Performance Analysis: Llama 3 on RTX 5000 Ada 32GB

We'll be focusing on the performance of the Llama 3 family of LLMs on the NVIDIA RTX 5000 Ada 32GB, examining its ability to handle both the 8 billion parameter (8B) and 70 billion parameter (70B) versions.

Llama 3 8B Model Performance on NVIDIA RTX 5000 Ada 32GB

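The table below shows the throughput of the RTX 5000 Ada 32GB with the Llama 3 8B model, in tokens per second, for both generation (producing output) and prompt processing (reading the input). Each task is measured in two formats: 4-bit quantized (Q4_K_M) and 16-bit floating point (F16).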

| Model and task | NVIDIA RTX 5000 Ada 32GB (tokens/sec) |
|---|---|
| Llama 3 8B Q4_K_M, generation | 89.87 |
| Llama 3 8B F16, generation | 32.67 |
| Llama 3 8B Q4_K_M, processing | 4467.46 |
| Llama 3 8B F16, processing | 5835.41 |

Key Observations:

- Quantization for Speed: The 4-bit quantized (Q4_K_M) model generates 89.87 tokens/sec against 32.67 for F16, roughly 2.75 times faster. Prompt processing runs the other way: F16 reaches 5835.41 tokens/sec against 4467.46 for Q4_K_M.

- Processing vs. Generation: Prompt processing is far faster than generation in both formats, by roughly 50 times for Q4_K_M and 180 times for F16. The tokens of a prompt can be processed in parallel, whereas output tokens are generated one at a time.
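To make these ratios concrete, here is a minimal sketch that derives them from the table figures and estimates how long a single request would take. The request size (a 1,000-token prompt and a 500-token reply) and the function name `response_time` are illustrative assumptions, not part of the benchmark, and the estimate ignores model loading and any other overhead.

```python
# Throughput figures copied from the table (tokens per second).
# "Q4_K_M" is the 4-bit quantized build, "F16" the 16-bit build.
BENCHMARKS = {
    ("Q4_K_M", "generation"): 89.87,
    ("F16", "generation"): 32.67,
    ("Q4_K_M", "processing"): 4467.46,
    ("F16", "processing"): 5835.41,
}


def response_time(fmt: str, prompt_tokens: int, output_tokens: int) -> float:
    """Estimate seconds to read a prompt and then generate a reply."""
    prompt_s = prompt_tokens / BENCHMARKS[(fmt, "processing")]
    output_s = output_tokens / BENCHMARKS[(fmt, "generation")]
    return prompt_s + output_s


# Relative speed of the two formats in each phase.
gen_speedup = BENCHMARKS[("Q4_K_M", "generation")] / BENCHMARKS[("F16", "generation")]
proc_ratio = BENCHMARKS[("F16", "processing")] / BENCHMARKS[("Q4_K_M", "processing")]

if __name__ == "__main__":
    print(f"Q4_K_M generates {gen_speedup:.2f}x faster than F16")
    print(f"F16 processes prompts {proc_ratio:.2f}x faster than Q4_K_M")
    # Hypothetical request: 1,000-token prompt, 500-token reply.
    for fmt in ("Q4_K_M", "F16"):
        print(f"{fmt}: about {response_time(fmt, 1000, 500):.1f} s per request")
```

Because generation dominates the total time for a request of this shape, the quantized build finishes well ahead of F16 despite its slower prompt processing.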

Llama 3 70B Model Performance on NVIDIA RTX 5000 Ada 32GB

Unfortunately, no benchmark data is available for the Llama 3 70B model on the RTX 5000 Ada 32GB. The most likely reason is memory: at 4 bits per weight, 70 billion parameters need about 35GB for the weights alone, and at F16 about 140GB, both more than the card's 32GB. Running a 70B model on a single RTX 5000 Ada would therefore require more aggressive quantization, offloading part of the model to system memory, or a multi-GPU configuration.
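The memory argument can be checked with simple arithmetic. The sketch below estimates the size of the weights alone; the figure of about 4.8 bits per weight for a 4-bit quantized file is an assumption (such formats store scale factors alongside the 4-bit values), and the estimate leaves out the context cache and runtime overhead, so real requirements are higher.

```python
GIB = 1024 ** 3
VRAM_GIB = 32  # memory on the RTX 5000 Ada 32GB


def weight_gib(params: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights alone, in GiB."""
    return params * bits_per_weight / 8 / GIB


# Bits per weight are assumptions: 16 for F16, and roughly 4.8 as an
# approximate average for a 4-bit quantized file. Real files vary.
FORMATS = {"F16": 16.0, "4-bit (approx.)": 4.8}
# Nominal parameter counts taken from the model names.
MODELS = {"Llama 3 8B": 8e9, "Llama 3 70B": 70e9}

if __name__ == "__main__":
    for model, params in MODELS.items():
        for fmt, bits in FORMATS.items():
            size = weight_gib(params, bits)
            verdict = "fits" if size < VRAM_GIB else "does not fit"
            print(f"{model} {fmt}: {size:.1f} GiB, {verdict} in {VRAM_GIB} GiB")
```

Under these assumptions both 8B variants fit on the card, while the 70B model exceeds 32GB even when quantized to 4 bits.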

Comparing the RTX 5000 Ada 32GB with Other Devices

Comparing the RTX 5000 Ada 32GB with other devices would help show its strengths and limitations. However, the data available for this article covers only the RTX 5000 Ada 32GB, so no cross-device comparison is made here.

Unlocking the Power of Quantization: A Faster Path to AI

Quantization is a crucial technique for reducing the size of AI models while keeping most of their accuracy. By converting model weights from 16-bit or 32-bit floating-point numbers (FP16, FP32) to lower-precision formats such as 8-bit or 4-bit integers, quantization significantly reduces memory consumption and the amount of data the GPU must read for each generated token.

Think of it as slimming down your AI model with only a small cost to its intelligence. Just like a chef can use fewer ingredients to create a nearly identical dish, quantization allows AI models to operate faster and more efficiently on less powerful hardware.

In the benchmarks above, the 4-bit quantized version of Llama 3 8B generates about 2.75 times as many tokens per second as the F16 version on the RTX 5000 Ada 32GB, which shows why this optimization is worth considering for local AI workloads.
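As an illustration of the idea, here is a minimal sketch of symmetric quantization, in which every weight is stored as a small integer plus one shared scale factor. This is a deliberately simplified scheme and not the format used by the benchmarked models; the weight values and the function names `quantize` and `dequantize` are made up for the example.

```python
def quantize(weights: list[float], bits: int = 8) -> tuple[list[int], float]:
    """Map floats to signed integers that share one scale factor."""
    qmax = 2 ** (bits - 1) - 1  # 127 for 8 bits, 7 for 4 bits
    peak = max(abs(w) for w in weights)
    scale = peak / qmax if peak else 1.0
    return [round(w / scale) for w in weights], scale


def dequantize(codes: list[int], scale: float) -> list[float]:
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]


weights = [0.12, -0.53, 0.98, -0.07, 0.31, -0.88]  # made-up example values

if __name__ == "__main__":
    for bits in (8, 4):
        codes, scale = quantize(weights, bits)
        restored = dequantize(codes, scale)
        worst = max(abs(a - b) for a, b in zip(weights, restored))
        print(f"{bits}-bit codes: {codes}  max error: {worst:.4f}")
```

Fewer bits mean smaller storage but a coarser grid, so the rounding error grows. Practical formats limit that error by using a separate scale for each small block of weights.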

Conclusion: The RTX 5000 Ada 32GB: A Smart Investment in AI

The NVIDIA RTX 5000 Ada 32GB emerges as a strong option for anyone looking to run LLMs locally. In these benchmarks it runs Llama 3 8B at interactive speeds, making it an attractive choice for developers, researchers, and even enthusiasts who want to explore AI models without relying on cloud services. Whether it pays for itself depends on its purchase price and on how heavily you would otherwise use cloud GPUs, figures that the benchmark data does not include.

The fast processing speeds, coupled with its ability to handle quantized LLM models, make it a powerful tool for local AI. While the lack of data on the 70B model is a reminder to weigh the size and complexity of your LLM project against the card's 32GB of memory, the RTX 5000 Ada 32GB remains a highly capable GPU for running AI on your local machine.

FAQ: Navigating the World of LLMs and Hardware

What is the difference between an LLM and a GPU?

- LLM (Large Language Model): Think of an LLM as the "brain" of AI. It's a neural network trained on large amounts of text so that it can understand and generate human-like language.

- GPU (Graphics Processing Unit): The GPU is like the "muscle" that powers the "brain." GPUs are specialized processors designed for handling the intensive calculations required for AI tasks.

Why can't I use my regular computer to run LLMs?

- LLMs require a lot of processing power and memory to operate efficiently. An everyday computer can run small or heavily quantized models on its CPU, but usually slowly; running larger models at usable speeds calls for a GPU with enough memory to hold the model.

Is the NVIDIA RTX 5000 Ada 32GB worth it?

- That depends on your specific needs. If you need the power to run complex AI workloads like large language models, then the RTX 5000 Ada 32GB is a solid investment.
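One way to frame that decision is a break-even calculation against renting a cloud GPU. The sketch below is only a starting point: the function name `break_even_hours` is hypothetical, and the price, cloud rate, and running cost are placeholder inputs for illustration, not figures from this article or from any vendor.

```python
def break_even_hours(card_cost: float, cloud_rate: float, local_rate: float) -> float:
    """Hours of use after which buying the card costs less than renting.

    card_cost  -- purchase price of the GPU
    cloud_rate -- hourly price of a comparable cloud GPU
    local_rate -- hourly running cost of the local card (mainly electricity)
    """
    saving_per_hour = cloud_rate - local_rate
    if saving_per_hour <= 0:
        raise ValueError("cloud is not more expensive per hour; no break-even")
    return card_cost / saving_per_hour


# Placeholder inputs for illustration only; substitute real quotes.
hours = break_even_hours(card_cost=4000.0, cloud_rate=1.00, local_rate=0.05)

if __name__ == "__main__":
    print(f"Break-even after about {hours:.0f} hours of GPU use")
    print(f"That is about {hours / 8:.0f} working days at 8 hours per day")
```

The calculation leaves out resale value, the rest of the workstation, and the value of keeping data on your own machine, so treat the result as a rough guide rather than a verdict.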

Can I run an LLM on any GPU?

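- Not all GPUs are created equal. The main requirement is enough memory to hold the model at your chosen precision, along with support for that GPU in your inference software. Dedicated matrix hardware such as Tensor Cores then determines how fast the model runs.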

What are some other popular GPUs for AI?

- The NVIDIA GeForce RTX 4090, NVIDIA GeForce RTX 4080, and NVIDIA A100 are all widely used GPUs for AI workloads.
