ROI Analysis: Justifying the Investment in NVIDIA RTX 4000 Ada 20GB x4 for AI Workloads

Introduction

Large language models (LLMs) are changing how we interact with technology, from generating creative text to answering complex questions. Running these models on a personal computer, however, is resource-intensive: the model weights must fit in fast memory, and every generated token requires a pass over them. This is where dedicated graphics processing units (GPUs) enter the picture.

This article looks at the performance of the NVIDIA RTX 4000 Ada 20GB x4 configuration, focusing on its suitability for running LLMs. By analyzing benchmark numbers for Llama 3, we'll explore whether this setup is worth the investment for AI enthusiasts and developers.

NVIDIA RTX 4000 Ada 20GB x4: A Powerhouse for AI Workloads

The NVIDIA RTX 4000 Ada 20GB x4 configuration combines four workstation GPUs with 20GB of memory each, for 80GB in total. That combined memory is what makes it a compelling choice for AI workloads, since it determines how large a model the setup can hold.

Understanding the Power of NVIDIA RTX 4000 Ada

The NVIDIA RTX 4000 Ada 20GB is a workstation GPU designed for demanding workloads such as AI training and inference. It has 20GB of GDDR6 memory and 6,144 CUDA cores, which gives it the processing power needed for the matrix calculations at the heart of LLMs. The Ada architecture also includes fourth-generation Tensor Cores, which accelerate the low-precision arithmetic that AI workloads rely on.

The Benefits of Using Multiple GPUs

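The RTX 4000 Ada 20GB x4 configuration combines four of these GPUs. For LLMs the main gain is capacity: the cards offer 80GB of memory in total, so a model too large for one 20GB card can be split across all four. How much faster things run depends on how the inference software divides the work, so four GPUs do not automatically mean four times the speed. Imagine four assistants each holding one part of a very large manual: together they can work through a book that none of them could carry alone.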

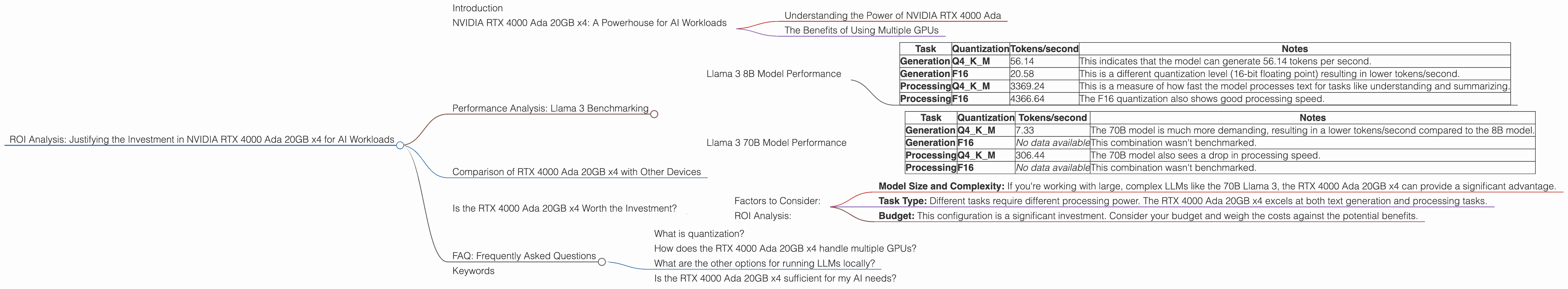

Performance Analysis: Llama 3 Benchmarking

To evaluate the performance of the RTX 4000 Ada 20GB x4 configuration, we'll focus on the popular Llama 3 LLM. All figures are in tokens per second (tokens/second) and cover two tasks: processing, the speed at which the model reads an input prompt, and generation, the speed at which it produces new output tokens.

Llama 3 8B Model Performance

The Llama 3 8B model is a great starting point for understanding the capabilities of the RTX 4000 Ada 20GB x4. It's a smaller model, making it easier to run on a personal computer. Here's a breakdown of its performance at two precision levels:

| Task | Quantization | Tokens/second | Notes |
|---|---|---|---|
| Generation | Q4_K_M | 56.14 | The model generates 56.14 new tokens per second. |
| Generation | F16 | 20.58 | F16 is unquantized 16-bit floating point. Each weight takes more memory, and generation is slower. |
| Processing | Q4_K_M | 3369.24 | How fast the model reads input text, as in tasks like understanding and summarizing. |
| Processing | F16 | 4366.64 | For processing, F16 is faster than Q4_K_M. |

Key Takeaways from 8B Llama 3:

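- The RTX 4000 Ada 20GB x4 configuration performs well with the Llama 3 8B model: up to 56.14 tokens/second for generation and over 3,000 tokens/second for processing.

- Quantization plays a significant role in performance. Q4_K_M, a more compact representation, generates tokens about 2.7 times faster than F16 (56.14 vs. 20.58 tokens/second), while F16 is about 30% faster at processing (4366.64 vs. 3369.24 tokens/second).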

- The model can handle both text generation and processing tasks efficiently.
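To make the two metrics concrete, here is a minimal sketch that turns the 8B figures from the table into an approximate response time. It assumes total time is simply prompt processing plus generation; the helper name `estimate_latency_seconds` and the request size (1,000 prompt tokens, 300 output tokens) are made up for illustration and are not part of the benchmark.

```python
def estimate_latency_seconds(prompt_tokens, output_tokens, processing_tps, generation_tps):
    """Rough response time: prompt processing plus token-by-token generation."""
    if processing_tps <= 0 or generation_tps <= 0:
        raise ValueError("throughput values must be positive")
    return prompt_tokens / processing_tps + output_tokens / generation_tps


# Tokens/second for Llama 3 8B, copied from the table above.
BENCH_8B = {
    "Q4_K_M": {"processing": 3369.24, "generation": 56.14},
    "F16": {"processing": 4366.64, "generation": 20.58},
}

# Hypothetical request shape, chosen only for illustration.
PROMPT_TOKENS = 1000
OUTPUT_TOKENS = 300

for name, tps in BENCH_8B.items():
    seconds = estimate_latency_seconds(
        PROMPT_TOKENS, OUTPUT_TOKENS, tps["processing"], tps["generation"]
    )
    print(f"{name}: about {seconds:.1f} s")
```

For a request of this shape, generation dominates the total, which is why the lower generation speed of F16 matters more in practice than its higher processing speed.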

Llama 3 70B Model Performance

Let's step it up a notch with the significantly larger Llama 3 70B model. This model requires more computational power to run smoothly.

| Task | Quantization | Tokens/second | Notes |
|---|---|---|---|
| Generation | Q4_K_M | 7.33 | The 70B model is much more demanding, resulting in a lower tokens/second compared to the 8B model. |
| Generation | F16 | No data available | This combination wasn't benchmarked. |
| Processing | Q4_K_M | 306.44 | The 70B model also sees a drop in processing speed. |
| Processing | F16 | No data available | This combination wasn't benchmarked. |

Key Takeaways from 70B Llama 3:

- The 70B model presents a more challenging workload. Compared to the 8B model at the same quantization, generation is about 7.7 times slower (7.33 vs. 56.14 tokens/second) and processing about 11 times slower (306.44 vs. 3369.24 tokens/second).

- The RTX 4000 Ada 20GB x4 can run the 70B model at Q4_K_M, but it's essential to consider the trade-off between model size and speed: about 7 tokens/second is workable for interactive use, not fast.

- There is no F16 data for the 70B model. The most likely reason is memory: at 2 bytes per weight, 70 billion weights need about 140GB, well beyond the 80GB that four 20GB cards provide.
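Whether a model fits at all can be checked with simple arithmetic before looking at speed. The sketch below estimates the size of the weights alone and compares it with the 80GB available across four cards. The figure of 4.8 bits per weight for Q4_K_M is an assumed average rather than an exact specification, parameter counts are rounded to 8 and 70 billion, and the KV cache and runtime buffers are ignored, so real memory needs are somewhat higher.

```python
TOTAL_VRAM_GB = 4 * 20  # four cards with 20 GB each

# Approximate bits stored per weight. The Q4_K_M value is an assumed
# average (the format mixes block types), not an exact specification.
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.8}


def weight_size_gb(params_billions, bits_per_weight):
    """Size of the weights alone in GB (10^9 bytes); ignores KV cache and buffers."""
    return params_billions * bits_per_weight / 8


for params in (8, 70):
    for fmt, bits in BITS_PER_WEIGHT.items():
        size = weight_size_gb(params, bits)
        verdict = "fits" if size < TOTAL_VRAM_GB else "does not fit"
        print(f"Llama 3 {params}B {fmt}: ~{size:.0f} GB of weights, {verdict} in {TOTAL_VRAM_GB} GB")
```

Under these assumptions, the only combination that does not fit is the 70B model at F16, which matches the gaps in the table.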

Comparison of RTX 4000 Ada 20GB x4 with Other Devices

It would be helpful to see how the RTX 4000 Ada 20GB x4 stacks up against other popular AI hardware. *Please note that this article does not provide a direct comparison, as it focuses on the RTX 4000 Ada 20GB x4 alone.*

Is the RTX 4000 Ada 20GB x4 Worth the Investment?

Now, the million-dollar question: Is investing in the RTX 4000 Ada 20GB x4 configuration a good decision for your AI needs? The answer depends on your specific requirements and budget.

Factors to Consider:

- Model Size and Complexity: If you're working with large LLMs like the 70B Llama 3, the 80GB of combined memory is the main advantage, because the quantized model fits entirely on the GPUs.

- Task Type: Different tasks stress the hardware differently. In the benchmarks above, processing runs at hundreds to thousands of tokens per second, while generation ranges from 7.33 (70B) to 56.14 (8B) tokens per second.

- Budget: This configuration is a significant investment. Consider your budget and weigh the costs against the potential benefits.

ROI Analysis:

Think of it this way: If your AI work involves regular use of large LLMs or you need fast and efficient processing for complex tasks, the RTX 4000 Ada 20GB x4 can potentially deliver a high return on investment (ROI). In essence, the faster and more efficiently you can train and run your models, the quicker you can get results, potentially leading to faster advancements in your AI projects.
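One way to put a number on this is a break-even estimate: how many hours of use it takes before buying the hardware costs less than renting equivalent GPU time. The sketch below is a simplified model, and every input in it (purchase price, rental rate, power draw, electricity price) is a placeholder rather than a quoted figure, so substitute your own values.

```python
def break_even_hours(hardware_cost, rental_rate_per_hour, power_kw, electricity_price_per_kwh):
    """Hours of use after which buying is cheaper than renting.

    Returns None if running locally is never cheaper per hour.
    """
    local_cost_per_hour = power_kw * electricity_price_per_kwh
    saving_per_hour = rental_rate_per_hour - local_cost_per_hour
    if saving_per_hour <= 0:
        return None
    return hardware_cost / saving_per_hour


# All inputs below are placeholders, not quoted prices or measurements.
hours = break_even_hours(
    hardware_cost=6000.0,            # hypothetical price of the full system
    rental_rate_per_hour=2.0,        # hypothetical cost of renting comparable GPUs
    power_kw=0.7,                    # hypothetical power draw under load
    electricity_price_per_kwh=0.20,  # hypothetical electricity price
)

if hours is None:
    print("Renting stays cheaper at these rates.")
else:
    print(f"Break-even after about {hours:.0f} hours of use")
```

The model leaves out resale value, maintenance, and idle time, all of which shift the result. Its main use is to show that the return depends heavily on how many hours the hardware is actually kept busy.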

FAQ: Frequently Asked Questions

What is quantization?

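Quantization is a technique that compresses an LLM by storing its weights with fewer bits, which makes the model smaller and usually faster at generating tokens, at the cost of a small loss in accuracy. The Q4_K_M quantization stores the weights in roughly 4 to 5 bits each on average, making the model significantly more compact than the 16-bit floating point (F16) version. It applies to the stored weights, not to the activations computed while the model runs.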
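The basic idea can be shown with a toy example. The sketch below applies simple symmetric 4-bit quantization to one small block of weights: it keeps a single floating-point scale and replaces each weight with an integer between -7 and 7. This is a deliberately simplified scheme and not the actual Q4_K_M format, which is more elaborate; the function names and weight values are invented for illustration.

```python
def quantize_block(weights, bits=4):
    """Symmetric quantization of one block: one float scale plus small integers."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit signed values
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Round each weight to the nearest integer step and clamp to the valid range.
    codes = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return scale, codes


def dequantize_block(scale, codes):
    """Recover approximate weights from the scale and the integer codes."""
    return [scale * c for c in codes]


# Made-up weights for illustration.
block = [0.12, -0.50, 0.33, 0.07, -0.21, 0.49, -0.02, 0.18]

scale, codes = quantize_block(block)
restored = dequantize_block(scale, codes)
max_error = max(abs(a - b) for a, b in zip(block, restored))

print("codes:", codes)
print(f"scale: {scale:.4f}, largest rounding error: {max_error:.4f}")
```

Each weight now needs 4 bits instead of 16, plus one shared scale per block. The rounding error is bounded by half a step, which is why quantized models stay close to the original in quality while shrinking considerably.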

How does the RTX 4000 Ada 20GB x4 handle multiple GPUs?

The RTX 4000 Ada does not support NVLink, so the four GPUs exchange data over the PCIe bus. Inference software typically splits the model across the cards, for example by placing a different group of layers on each GPU, and passes intermediate results from one card to the next. Imagine it like an assembly line with four stations: each station does its part of the work and hands the result on.
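One common way for inference software to use several GPUs is to place contiguous groups of model layers on different cards. The sketch below illustrates that idea by dividing layers in proportion to each card's memory. The function `split_layers` is a hypothetical planner written for this article, not the API of any particular library, and the article's benchmark source does not state which splitting strategy was used. The layer count of 80 is that of Llama 3 70B.

```python
def split_layers(n_layers, gpu_memory_gb):
    """Assign contiguous layer ranges to GPUs in proportion to their memory."""
    total = sum(gpu_memory_gb)
    plan = []
    start = 0
    cumulative = 0
    for mem in gpu_memory_gb:
        cumulative += mem
        # Cumulative boundaries keep the ranges contiguous and cover every layer.
        end = round(n_layers * cumulative / total)
        plan.append(range(start, end))
        start = end
    return plan


# Four identical 20 GB cards and the 80 transformer layers of Llama 3 70B.
plan = split_layers(80, [20, 20, 20, 20])

for gpu_index, layers in enumerate(plan):
    print(f"GPU {gpu_index}: layers {layers.start} to {layers.stop - 1} ({len(layers)} layers)")
```

With this kind of split, a token passes through the cards one after another, so the benefit is mostly the extra memory rather than a proportional speedup.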

What are the other options for running LLMs locally?

There are many other options for running LLMs locally, including other GPUs like the NVIDIA RTX 3080 or 3090, or even CPUs with high core counts. The RTX 4000 Ada 20GB x4 stands out for its 80GB of combined GPU memory, which lets it run demanding models like the Llama 3 70B in quantized form.

Is the RTX 4000 Ada 20GB x4 sufficient for my AI needs?

The best way to determine if the RTX 4000 Ada 20GB x4 is sufficient for your needs is to consider your specific use case. If you're primarily working with smaller models or don't require the utmost speed, a less powerful GPU might suffice. However, if you're pushing the boundaries of AI and working with large, complex LLMs, then the RTX 4000 Ada 20GB x4 could be a game-changer.

Keywords

NVIDIA RTX 4000 Ada 20GB, GPU, AI, LLM, Llama 3, Tokens/second, Quantization, F16, Q4_K_M, Performance, ROI, AI Workloads, Text Generation, Processing, Inference, Local LLMs.