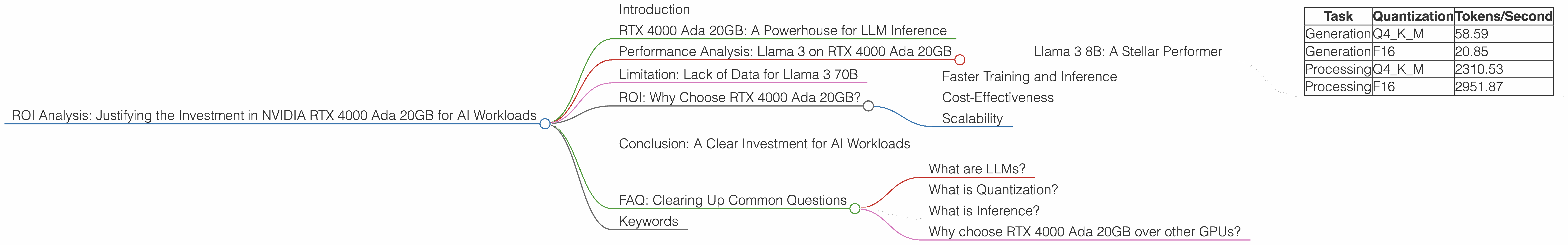

ROI Analysis: Justifying the Investment in NVIDIA RTX 4000 Ada 20GB for AI Workloads

Introduction

The world of AI is booming, and Large Language Models (LLMs) are at the heart of this revolution. These powerful models can generate human-like text, translate languages, write different kinds of creative content, and answer your questions in an informative way. But these capabilities come with a cost - LLMs are computationally expensive to run. This is where specialized hardware, like the NVIDIA RTX 4000 Ada 20GB, becomes crucial for unlocking LLM potential.

In this article, we'll dive deep into the ROI of investing in the NVIDIA RTX 4000 Ada 20GB for AI workloads, focusing on the popular Llama 3 family and on how performance on this card changes with the level of quantization and precision. Buckle up, it's going to be a thrilling ride through the world of AI acceleration!

RTX 4000 Ada 20GB: A Powerhouse for LLM Inference

The NVIDIA RTX 4000 Ada 20GB is a beast of a graphics card, designed for demanding tasks like AI inference, which is the process of using a trained LLM to make predictions or generate outputs. Its 20GB of GDDR6 memory is enough to hold an 8B-parameter model at either 4-bit or 16-bit precision, making it a strong choice for running small to mid-sized large language models.

Performance Analysis: Llama 3 on RTX 4000 Ada 20GB

Llama 3 8B: A Stellar Performer

Let's start with a popular and powerful LLM, the Llama 3 8B model. We'll analyze its performance on the RTX 4000 Ada 20GB in two different weight formats, one quantized and one not:

- Q4KM: Written Q4_K_M in llama.cpp's GGUF format, this is a compressed version of the model that stores most weights at roughly 4 bits each (the K refers to the k-quant scheme and the M to its medium-size variant). This significantly reduces the memory footprint compared to the 16-bit model.

- F16: This uses 16-bit floating-point numbers, providing a balance between accuracy and computational efficiency.

Table 1: Llama 3 8B Inference Performance on RTX 4000 Ada 20GB

| Task | Quantization | Tokens/Second |
|---|---|---|
| Generation | Q4KM | 58.59 |
| Generation | F16 | 20.85 |
| Processing | Q4KM | 2310.53 |
| Processing | F16 | 2951.87 |

Analysis:

- This table shows the RTX 4000 Ada 20GB delivers impressive performance in both generation and processing tasks for the Llama 3 8B model.

- Q4KM quantization is about 2.8 times faster than F16 at token generation (58.59 versus 20.85 tokens/second). Generation is typically limited by how quickly the weights can be read from memory, so the smaller 4-bit model has a clear advantage. Prompt processing shows the opposite result: F16 reaches 2951.87 tokens/second, about 28% more than Q4KM's 2310.53 tokens/second.
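The ratios behind this comparison can be checked with a few lines of Python. This is only a sketch over the Table 1 figures; the function and variable names are made up for illustration.

```python
# Throughput figures copied from Table 1 (tokens/second).
BENCHMARKS = {
    ("generation", "Q4KM"): 58.59,
    ("generation", "F16"): 20.85,
    ("processing", "Q4KM"): 2310.53,
    ("processing", "F16"): 2951.87,
}

def speedup(task, fast, slow):
    """Return how many times faster `fast` is than `slow` for a task."""
    return BENCHMARKS[(task, fast)] / BENCHMARKS[(task, slow)]

gen_ratio = speedup("generation", "Q4KM", "F16")
proc_ratio = speedup("processing", "F16", "Q4KM")

print(f"Generation: Q4KM is {gen_ratio:.2f}x faster than F16")
print(f"Processing: F16 is {proc_ratio:.2f}x faster than Q4KM")
```

Generation favors Q4KM by a wide margin, while processing favors F16 by a much smaller one.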

Why is this important?

- Faster Response Times: This translates into much faster response times when using the Llama 3 8B model for applications like generating text, translating languages, or answering questions.

- Cost-Effectiveness: Higher token generation speeds directly impact the cost of running an LLM, making the RTX 4000 Ada 20GB a cost-effective solution, especially for large-scale deployments.

Think of it like this: Imagine a race between two cars. One car (Q4KM) is much lighter and more agile, while the other car (F16) is heavier but still pretty fast. The lighter car wins the race for token generation, while the heavier car wins when it comes to processing.
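To make the response-time and cost points concrete, here is a rough sketch that combines the two rates from Table 1. It assumes a request is simply "process the prompt, then generate the reply" and ignores every other overhead. The request size (512 prompt tokens, 256 output tokens) and the hourly running cost are hypothetical values chosen only for illustration.

```python
# Throughput from Table 1, in tokens/second.
RATES = {
    "Q4KM": {"processing": 2310.53, "generation": 58.59},
    "F16": {"processing": 2951.87, "generation": 20.85},
}

def response_seconds(fmt, prompt_tokens, output_tokens):
    """Estimate request time: read the prompt, then generate the reply."""
    r = RATES[fmt]
    return prompt_tokens / r["processing"] + output_tokens / r["generation"]

def cost_per_million_tokens(fmt, hourly_cost_usd):
    """Cost of generating one million tokens at a given hourly running cost."""
    hours = 1_000_000 / RATES[fmt]["generation"] / 3600
    return hours * hourly_cost_usd

HOURLY_COST_USD = 0.50  # hypothetical running cost, not a measured figure

for fmt in RATES:
    t = response_seconds(fmt, prompt_tokens=512, output_tokens=256)
    c = cost_per_million_tokens(fmt, HOURLY_COST_USD)
    print(f"{fmt}: {t:.1f} s per request, ${c:.2f} per million generated tokens")
```

Under these assumptions the prompt takes a fraction of a second in either format, so generation speed dominates the total, which is why Q4KM comes out ahead overall despite its slower processing rate.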

Limitation: Lack of Data for Llama 3 70B

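Unfortunately, we don't have performance data for the larger Llama 3 70B model on the RTX 4000 Ada 20GB, as no benchmarks are available for these configurations. The most likely reason is memory: 70 billion weights take roughly 140GB at F16 and around 40GB even at 4 bits, well beyond the card's 20GB.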
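A back-of-the-envelope estimate shows why the 70B model is out of reach for a single card. The sketch below counts only the weights, so real usage would be higher once the KV cache and runtime buffers are added. The bits-per-weight figure for Q4KM is an approximation assumed here, and the parameter counts are the nominal ones implied by the model names.

```python
GPU_MEMORY_GB = 20  # RTX 4000 Ada 20GB

# Assumed average bits stored per weight; the Q4KM figure is an approximation.
BITS_PER_WEIGHT = {"F16": 16.0, "Q4KM": 4.85}

# Nominal parameter counts implied by the model names.
MODELS = {"Llama 3 8B": 8e9, "Llama 3 70B": 70e9}

def weight_size_gb(num_params, bits_per_weight):
    """Approximate size of the weights alone, in GB (1 GB = 1e9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9

def fits_on_gpu(num_params, bits_per_weight, gpu_gb=GPU_MEMORY_GB):
    """True if the weights alone fit; real usage needs extra headroom."""
    return weight_size_gb(num_params, bits_per_weight) <= gpu_gb

for name, params in MODELS.items():
    for fmt, bits in BITS_PER_WEIGHT.items():
        size = weight_size_gb(params, bits)
        verdict = "fits" if fits_on_gpu(params, bits) else "does not fit"
        print(f"{name} {fmt}: ~{size:.1f} GB of weights, {verdict} in {GPU_MEMORY_GB} GB")
```

By this estimate the 8B model fits in both formats (F16 with little room to spare), while the 70B model does not fit in either.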

ROI: Why Choose RTX 4000 Ada 20GB?

Faster Training and Inference

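The RTX 4000 Ada 20GB provides a significant speed boost for LLM inference, as the Llama 3 8B results above show. The card can also be used for training and fine-tuning, but the benchmarks in this article cover inference only, so treat any training speedup as something to measure on your own workload. Either way, faster iteration is crucial for developers and researchers working on cutting-edge AI projects.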

Think of it like this: Imagine you're building a house. Having a powerful tool like the RTX 4000 Ada 20GB is like having a team of skilled construction workers. It can significantly reduce the time it takes to finish the project.

Cost-Effectiveness

While the RTX 4000 Ada 20GB is an investment, its capabilities for accelerating AI workloads can lead to significant cost savings in the long run. By speeding up training and inference processes, you can reduce cloud computing costs or deploy LLMs more efficiently.
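One way to put a number on "cost savings in the long run" is a break-even calculation against renting a comparable cloud GPU. The sketch below is only illustrative: the purchase price, cloud rate, electricity price, and power draw are placeholder assumptions, not quoted figures, so substitute your own before drawing conclusions.

```python
import math

def break_even_hours(purchase_usd, cloud_usd_per_hour, power_kw, electricity_usd_per_kwh):
    """Hours of GPU use after which buying is cheaper than renting."""
    local_usd_per_hour = power_kw * electricity_usd_per_kwh
    saving_per_hour = cloud_usd_per_hour - local_usd_per_hour
    if saving_per_hour <= 0:
        return math.inf  # renting costs no more per hour, so buying never pays off
    return purchase_usd / saving_per_hour

hours = break_even_hours(
    purchase_usd=1250.0,           # hypothetical purchase price
    cloud_usd_per_hour=0.50,       # hypothetical cloud GPU rate
    power_kw=0.13,                 # assumed power draw under load
    electricity_usd_per_kwh=0.15,  # hypothetical electricity price
)
days_at_8h = hours / 8

print(f"Break-even after about {hours:.0f} GPU-hours "
      f"({days_at_8h:.0f} days at 8 hours/day)")
```

The calculation leaves out the rest of the workstation, cooling, and maintenance, all of which push the break-even point later.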

Scalability

The RTX 4000 Ada 20GB is a powerful piece of hardware, but you can also scale your AI operations by using multiple GPUs in a cluster. This allows you to tackle even more complex tasks and handle larger LLM models.
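As a rough guide to how many cards a larger model would need, the sketch below divides the weight size by the usable memory per GPU. The weight sizes and the 90% usable fraction are assumptions for illustration. It also ignores the KV cache and the communication overhead of splitting a model across cards, so treat the result as a lower bound.

```python
import math

def gpus_needed(weights_gb, gpu_gb=20.0, usable_fraction=0.9):
    """Minimum number of GPUs whose combined usable memory holds the weights."""
    usable_gb = gpu_gb * usable_fraction
    return math.ceil(weights_gb / usable_gb)

# Assumed weight sizes in GB (rough estimates, not measured values).
examples = {
    "8B at F16": 16.0,
    "70B at ~4 bits": 42.4,
    "70B at F16": 140.0,
}

for label, size_gb in examples.items():
    print(f"{label}: at least {gpus_needed(size_gb)} x 20GB GPU(s)")
```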

Conclusion: A Clear Investment for AI Workloads

The NVIDIA RTX 4000 Ada 20GB is not just a graphics card; it's a powerful engine driving the future of AI. In our Llama 3 8B tests it delivers 58.59 tokens/second for generation with Q4KM and nearly 3,000 tokens/second for prompt processing with F16. For models that fit in its 20GB of memory, this can translate to cost savings, faster time-to-market, and increased efficiency for AI projects.

FAQ: Clearing Up Common Questions

What are LLMs?

LLMs are powerful AI models trained on massive datasets of text and code. They can generate human-like text, answer your questions, translate languages, and even write different kinds of creative content, like poems or code. Popular LLMs include GPT-3, LaMDA, and Llama.

What is Quantization?

Quantization is a technique used to reduce the size of LLM models and make them more efficient. It involves converting the model's weights and activations from high-precision floating-point numbers to lower-precision integers. This results in a smaller model that consumes less memory and power.
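The idea can be shown with a toy example. The sketch below applies simple symmetric 4-bit quantization to a handful of made-up weights, using a single shared scale factor. It is a deliberately simplified illustration of the general technique, not the scheme used by Q4KM, which works on blocks of weights with per-block scales.

```python
def quantize_symmetric(weights, bits=4):
    """Map floats to signed integers in [-qmax, qmax] using one shared scale."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    quantized = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return quantized, scale

def dequantize(quantized, scale):
    """Recover approximate float values from the stored integers."""
    return [q * scale for q in quantized]

weights = [0.12, -0.53, 0.98, -0.05, 0.33]  # made-up example values
quantized, scale = quantize_symmetric(weights)
restored = dequantize(quantized, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("integers:", quantized)
print("restored:", [round(r, 2) for r in restored])
print(f"largest rounding error: {max_error:.3f}")
```

Each weight now needs only 4 bits plus a share of the scale factor, at the price of a small rounding error, which is the trade-off between size and accuracy that quantization makes.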

What is Inference?

Inference is the process of using a trained LLM model to make predictions or generate outputs. It's like asking the model a question or giving it a task, and the model provides an answer or output based on its learned knowledge.

Why choose RTX 4000 Ada 20GB over other GPUs?

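The RTX 4000 Ada 20GB is a workstation GPU whose 20GB of memory is enough to run Llama 3 8B at either Q4KM or F16, as Table 1 shows. For models of this size it offers a good balance between price and performance, though this article does not benchmark other GPUs, so compare current prices and published results before you buy.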

Keywords

RTX 4000 Ada 20GB, NVIDIA, LLM, Llama 3, AI, inference, generation, processing, quantization, Q4KM, F16, ROI, cost-effectiveness, scalability, deep learning