ROI Analysis: Justifying the Investment in NVIDIA L40S 48GB for AI Workloads

Introduction: The Power of NVIDIA L40S_48GB for Local LLMs

Are you a developer or data scientist drawn to large language models (LLMs) but struggling with their appetite for memory and compute? You might be considering a high-end GPU like the NVIDIA L40S_48GB, but is it worth the investment? This article walks through an ROI analysis of the NVIDIA L40S_48GB for local LLM workloads, using measured token speeds to help you make an informed decision.

We'll examine the performance of the NVIDIA L40S_48GB on Llama 3, a popular open-source model, at two model sizes. We'll analyze token speed, a key metric for LLM efficiency, and compare quantized and half-precision (F16) configurations to help you understand what this high-end GPU delivers.

Think of the GPU as the engine of your computer. The larger the engine, the more power you have. The NVIDIA L40S_48GB is like a powerful V8 engine for your AI projects. Let's dive into the specifics.

NVIDIA L40S_48GB: A Beast of a GPU

The NVIDIA L40S_48GB is a high-memory data center GPU built on NVIDIA's Ada Lovelace architecture. It carries 48GB of GDDR6 memory with ECC, enough to hold quantized versions of large language models entirely on a single card, which makes it well suited to running LLMs locally.

But how does it really perform when put to the test with LLMs? Let's break it down.

Llama 3 Performance on NVIDIA L40S_48GB: A Token Speed Showdown

We'll focus on the L40S_48GB's ability to handle Llama 3, a popular open-source LLM, in different configurations:

- Llama 3 8B: A smaller, more manageable model, great for experimentation and rapid development.

- Llama 3 70B: A considerably larger model, offering greater complexity and capability but requiring more demanding resources.

We'll analyze two key aspects:

- Token Speed Generation: How quickly the LLM can produce text output. Imagine it as typing speed on a super-powered keyboard.

- Token Speed Processing: How quickly the LLM can read and process the input prompt before it starts generating. This is like reading speed: the faster the model gets through your prompt, the sooner the first word of the answer appears.

Llama 3 8B on NVIDIA L40S_48GB

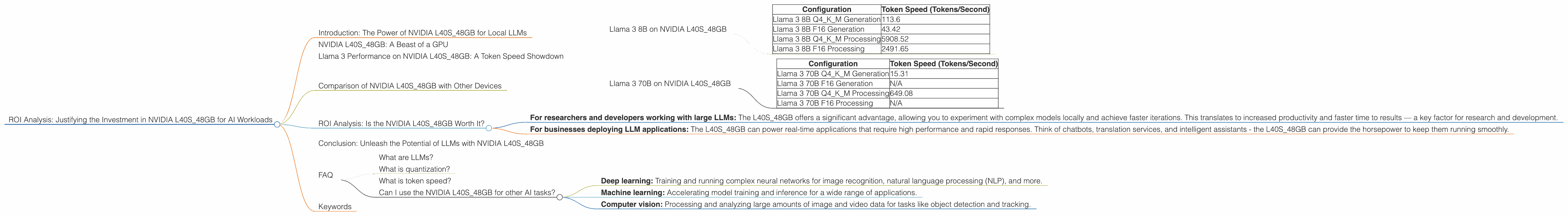

Table 1: Llama 3 8B Performance on NVIDIA L40S_48GB

| Configuration | Token Speed (Tokens/Second) |
|---|---|
| Llama 3 8B Q4_K_M Generation | 113.6 |
| Llama 3 8B F16 Generation | 43.42 |
| Llama 3 8B Q4_K_M Processing | 5908.52 |
| Llama 3 8B F16 Processing | 2491.65 |

Key Takeaways:

- Q4_K_M Quantization: This configuration stores the model weights at roughly 4 to 5 bits each, a technique called quantization that significantly reduces the model's memory footprint at the cost of a small loss in accuracy. Think of it like compressing a large image file while keeping the essential details. The Q4_K_M configuration delivers the highest token speeds for both generation (113.6 tokens/second) and processing (5908.52 tokens/second).

- F16 (half precision): This configuration keeps the model weights in 16-bit floating point, with no quantization. It stays closest to the original model's accuracy but has to move far more data for every token. The L40S_48GB still delivers decent performance, but it's noticeably slower than the Q4_K_M version.
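To put a number on "noticeably slower", the ratios can be worked out directly from Table 1. This is a small sketch using only the figures in the table; the dictionary layout and the `speedup` helper are illustrative names, not part of any benchmark tool.

```python
# Token speeds (tokens/second) copied from Table 1.
table_1 = {
    "generation": {"Q4_K_M": 113.6, "F16": 43.42},
    "processing": {"Q4_K_M": 5908.52, "F16": 2491.65},
}


def speedup(fast: float, slow: float) -> float:
    """Return how many times faster `fast` is than `slow`."""
    return fast / slow


# Ratio of Q4_K_M speed to F16 speed for each phase.
speedups = {
    phase: speedup(speeds["Q4_K_M"], speeds["F16"])
    for phase, speeds in table_1.items()
}

for phase, ratio in speedups.items():
    print(f"{phase}: Q4_K_M is {ratio:.2f}x faster than F16")
```

On these figures, Q4_K_M comes out roughly 2.6 times faster for generation and roughly 2.4 times faster for processing.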

Llama 3 70B on NVIDIA L40S_48GB

Table 2: Llama 3 70B Performance on NVIDIA L40S_48GB

| Configuration | Token Speed (Tokens/Second) |
|---|---|
| Llama 3 70B Q4_K_M Generation | 15.31 |
| Llama 3 70B F16 Generation | N/A |
| Llama 3 70B Q4_K_M Processing | 649.08 |
| Llama 3 70B F16 Processing | N/A |

Key Takeaways:

- Scaling Up: The L40S_48GB runs the larger Llama 3 70B model at 15.31 tokens/second for generation in the Q4_K_M configuration. That is well below the Llama 3 8B figures, but it is still a respectable result for a 70B model on a single local GPU.

- Missing F16 Data: We don't have data for the F16 configuration of Llama 3 70B on the L40S_48GB. The most likely reason is memory: 70 billion weights at 2 bytes each come to roughly 140GB, far more than the card's 48GB, so the model cannot be loaded in F16 on a single L40S_48GB.
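A back-of-the-envelope memory estimate helps explain which configurations can run on this card at all. The sketch below is an approximation under stated assumptions: it counts weights only (no KV cache or activations), rounds the models to 8 and 70 billion parameters, and uses about 4.8 bits per weight for Q4_K_M, which is an assumed average rather than a measured value.

```python
GPU_MEMORY_GB = 48  # memory capacity of the L40S_48GB


def weight_memory_gb(params_billions: float, bits_per_weight: float) -> float:
    """Approximate weight storage in GB (1 GB = 1e9 bytes).

    Ignores the KV cache, activations, and runtime overhead.
    """
    return params_billions * bits_per_weight / 8


# 16 bits per weight for F16; 4.8 is an ASSUMED average for Q4_K_M.
estimates = {
    ("70B", "F16"): weight_memory_gb(70, 16),
    ("70B", "Q4_K_M"): weight_memory_gb(70, 4.8),
    ("8B", "F16"): weight_memory_gb(8, 16),
    ("8B", "Q4_K_M"): weight_memory_gb(8, 4.8),
}

for (model, fmt), gb in estimates.items():
    verdict = "fits" if gb < GPU_MEMORY_GB else "does not fit"
    print(f"Llama 3 {model} {fmt}: ~{gb:.1f} GB of weights, {verdict} in {GPU_MEMORY_GB} GB")
```

Under these assumptions only the 70B F16 configuration exceeds 48GB, which matches the two N/A rows in Table 2.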

Comparison of NVIDIA L40S_48GB with Other Devices

Judging ROI fully also requires knowing how the L40S_48GB performs relative to other devices. That comparison is outside the scope of this article, which covers only the NVIDIA L40S_48GB, so weigh the figures above against benchmarks for any alternative hardware you are considering.

ROI Analysis: Is the NVIDIA L40S_48GB Worth It?

The real question is: does the increased performance of the NVIDIA L40S_48GB justify its price? This depends on your specific needs and use cases.

- For researchers and developers working with large models: The L40S_48GB lets you run models up to Llama 3 70B (quantized) locally and iterate without waiting on shared or remote hardware. This translates to increased productivity and faster time to results, a key factor for research and development.

- For businesses deploying LLM applications: The L40S_48GB can power real-time applications that require high performance and rapid responses. Think of chatbots, translation services, and intelligent assistants: the L40S_48GB can provide the horsepower to keep them running smoothly.

Ultimately, the decision boils down to your budget and the value you place on faster processing and the ability to work with larger models. The L40S_48GB is a powerful investment but not a necessity for every AI project.
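One rough way to frame the budget question is to ask how long the card would need to run before its purchase price matches what renting a comparable GPU would have cost. The sketch below is only an illustration: `PURCHASE_PRICE` and `RENTAL_RATE_PER_HOUR` are hypothetical placeholders, not quoted prices, and power, hosting, and resale value are ignored. The token speeds are the Q4_K_M generation figures from Tables 1 and 2.

```python
SECONDS_PER_HOUR = 3600


def break_even_hours(purchase_price: float, rental_rate_per_hour: float) -> float:
    """Hours of use after which buying costs the same as renting."""
    return purchase_price / rental_rate_per_hour


def tokens_generated(hours: float, tokens_per_second: float) -> float:
    """Tokens produced by one continuous generation stream over `hours`."""
    return hours * SECONDS_PER_HOUR * tokens_per_second


# HYPOTHETICAL placeholders: replace with real quotes before drawing conclusions.
PURCHASE_PRICE = 10_000.0      # currency units, not an actual price
RENTAL_RATE_PER_HOUR = 1.0     # currency units per hour, not an actual rate

# Q4_K_M generation speeds (tokens/second) from Tables 1 and 2.
GENERATION_SPEEDS = {
    "Llama 3 8B Q4_K_M": 113.6,
    "Llama 3 70B Q4_K_M": 15.31,
}

hours = break_even_hours(PURCHASE_PRICE, RENTAL_RATE_PER_HOUR)
print(f"Break-even after {hours:,.0f} hours of use")
for name, tps in GENERATION_SPEEDS.items():
    print(f"{name}: ~{tokens_generated(hours, tps):,.0f} tokens in that time")
```

The output is only as good as the inputs, so treat it as a template for your own figures rather than a verdict.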

Conclusion: Unleash the Potential of LLMs with NVIDIA L40S_48GB

The NVIDIA L40S_48GB is a strong option for developers and researchers looking to harness the power of LLMs locally. Its 48GB of memory and processing power let it run models as large as Llama 3 70B in quantized form on a single card, boosting productivity and opening doors to new possibilities.

If you're serious about AI and are ready to take your LLM projects to the next level, the NVIDIA L40S_48GB is a worthy investment that can deliver a powerful ROI.

FAQ

What are LLMs?

LLMs are large language models, a type of artificial intelligence that can understand and generate human-like text. Think of them as super-smart AI scribes, capable of writing stories, translating languages, and answering your questions in a natural way.

What is quantization?

Quantization is a technique used to compress the size of a model while maintaining its accuracy to some degree. It involves reducing the number of bits used to represent the model's weights, making it more compact and efficient.

Think of it like compressing a large image file: you store each pixel with fewer bits but still keep the essential details of the image.
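The idea can be illustrated with a toy example. The sketch below is a deliberately simplified 4-bit scheme with one shared scale for all weights; it is not the actual Q4_K_M format, which is more elaborate, and the sample weights are made up for illustration.

```python
def quantize_4bit(weights):
    """Map floats to integer codes in [-7, 7] using one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs else 1.0
    codes = [round(w / scale) for w in weights]
    return codes, scale


def dequantize(codes, scale):
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]


# Made-up sample weights, for illustration only.
weights = [0.82, -0.41, 0.05, -0.98, 0.33]

codes, scale = quantize_4bit(weights)
restored = dequantize(codes, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("codes:   ", codes)
print("restored:", [round(r, 2) for r in restored])
print(f"largest rounding error: {max_error:.3f}")
```

Each weight now needs only a small integer plus a share of the scale, and the price is a rounding error of at most half the scale per weight.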

What is token speed?

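Token speed measures how quickly an LLM handles text, in tokens per second. A token is a chunk of text, typically a word or part of a word. This article reports two token speeds: processing (reading the prompt) and generation (producing the output).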
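The two token speeds combine into a rough response-time estimate: time to read the prompt plus time to write the answer. The sketch below uses the Q4_K_M figures from Tables 1 and 2; the prompt and output lengths are hypothetical, and the estimate assumes a single request at a time and ignores model loading and other overhead.

```python
def response_time_seconds(
    prompt_tokens: int,
    output_tokens: int,
    processing_tps: float,
    generation_tps: float,
) -> float:
    """Estimate latency as prompt-processing time plus generation time."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


# (processing, generation) speeds in tokens/second, from Tables 1 and 2.
SPEEDS = {
    "Llama 3 8B Q4_K_M": (5908.52, 113.6),
    "Llama 3 70B Q4_K_M": (649.08, 15.31),
}

# HYPOTHETICAL request size, chosen only for illustration.
PROMPT_TOKENS = 1000
OUTPUT_TOKENS = 200

estimates = {
    name: response_time_seconds(PROMPT_TOKENS, OUTPUT_TOKENS, proc, gen)
    for name, (proc, gen) in SPEEDS.items()
}

for name, seconds in estimates.items():
    print(f"{name}: ~{seconds:.1f} s for {PROMPT_TOKENS} prompt + {OUTPUT_TOKENS} output tokens")
```

For this request size, generation dominates the total in both cases, so generation speed is usually the number to watch for chat-style workloads.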

Can I use the NVIDIA L40S_48GB for other AI tasks?

Absolutely! The L40S_48GB is a versatile GPU well-suited for various AI tasks, including:

- Deep learning: Training and running complex neural networks for image recognition, natural language processing (NLP), and more.

- Machine learning: Accelerating model training and inference for a wide range of applications.

- Computer vision: Processing and analyzing large amounts of image and video data for tasks like object detection and tracking.

Keywords

NVIDIA L40S_48GB, LLM, Llama 3, token speed, GPU, AI, quantization, ROI analysis, deep learning, machine learning, computer vision