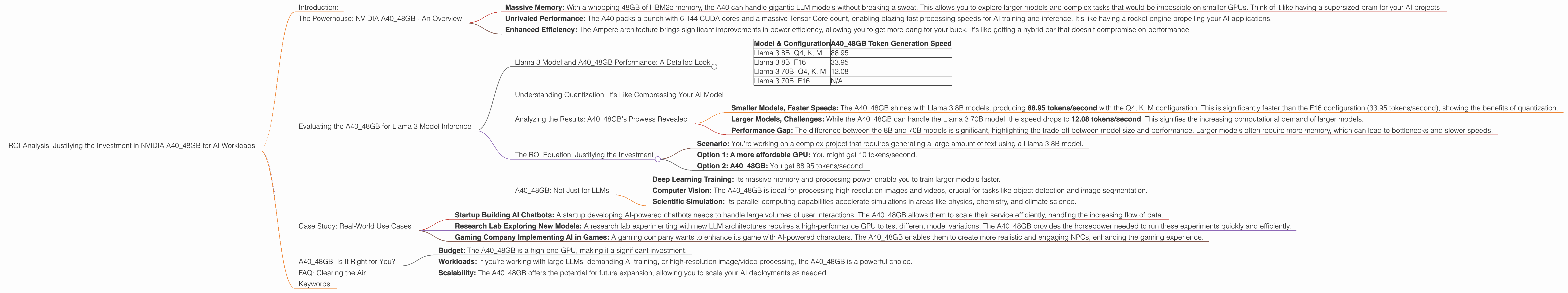

ROI Analysis: Justifying the Investment in NVIDIA A40 48GB for AI Workloads

Introduction:

The world of Large Language Models (LLMs) is exploding, with new models popping up faster than you can say "transformer." But the real question is: how do you make these powerful models work for you? This depends on two key factors: the model itself and the hardware that powers it.

For developers, researchers, and businesses looking to leverage the potential of LLMs, choosing the right hardware is crucial. This article dives into the NVIDIA A40_48GB GPU, a titan in the world of AI acceleration, and explores its performance with popular LLMs like Llama 3. We'll use real-world data to analyze its Return on Investment (ROI) for different AI workloads, helping you decide if it's the right GPU for your needs.

The Powerhouse: NVIDIA A40_48GB - An Overview

The NVIDIA A40_48GB is a data center GPU built on NVIDIA's Ampere architecture, pairing strong compute performance with a large memory pool for demanding AI tasks. But what makes it so special, and why should you care?

- Massive Memory: With 48GB of GDDR6 memory (with ECC), the A40 can hold models that simply do not fit on typical consumer GPUs, such as Llama 3 70B at 4-bit quantization on a single card. This lets you explore larger models and more complex tasks. Think of it like having a supersized brain for your AI projects!

- High Performance: The A40 has 10,752 CUDA cores and 336 third-generation Tensor Cores, which speed up the matrix math behind AI training and inference. It's like having a rocket engine propelling your AI applications.

- Enhanced Efficiency: The Ampere architecture improves performance per watt over the previous generation, so you get more work out of the same power budget. It's like getting a hybrid car that doesn't compromise on performance.

In short, the A40 is designed to crush AI workloads, and it does it with style.

Evaluating the A40_48GB for Llama 3 Model Inference

Let's get down to the nitty-gritty. We'll focus on the Llama 3 family of LLMs, a popular choice for experimenting with generative text and other AI tasks. We'll analyze how the A40_48GB performs with these models, measuring its token generation speed – the key metric for real-time AI applications.

Llama 3 Model and A40_48GB Performance: A Detailed Look

We'll be using real-world data provided by researchers and developers. All numbers are in tokens/second. The data is pulled from various sources, including llama.cpp discussions and GPU Benchmarks on LLM Inference. Please note that some combinations of models and configurations have no available data; these are marked N/A in the table.

| Model & Configuration | A40_48GB Token Generation Speed (tokens/second) |
|---|---|
| Llama 3 8B, Q4_K_M | 88.95 |
| Llama 3 8B, F16 | 33.95 |
| Llama 3 70B, Q4_K_M | 12.08 |
| Llama 3 70B, F16 | N/A |

Explanation of the Table:

- Model & Configuration: This column shows the specific Llama 3 model and its configuration (e.g., Llama 3 8B, Q4_K_M).

  - 8B refers to the size of the LLM (8 billion parameters); 70B means 70 billion parameters.

  - Q4 indicates the model weights are quantized to roughly 4 bits each.

  - F16 indicates the model weights are stored in 16-bit floating point.

  - K_M identifies the quantization variant in llama.cpp naming: "K" is the k-quant family of quantization methods, and "M" is the medium-size variant within that family.

- A40_48GB Token Generation Speed: This column presents the token generation speed of each model configuration on the A40_48GB GPU.

Understanding Quantization: It's Like Compressing Your AI Model

Quantization is a process that reduces the size of an AI model by converting its parameters to smaller data types. Picture it like a digital photo: you can compress it to a smaller file size without losing too much detail. In the context of LLMs, quantization trades off some accuracy for faster processing and lower memory usage. This is especially beneficial for smaller GPUs and mobile devices.
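To make the compression analogy concrete, here is a minimal sketch of 4-bit quantization in plain Python. It uses a simple symmetric scheme with one scale per block of weights, which is an assumption chosen for illustration; it is not the k-quant method that llama.cpp uses for Q4_K_M, and the function names and sample weights are hypothetical.

```python
def quantize_block(weights, bits=4):
    """Symmetric quantization of one block of floats to signed integers.

    Illustrative only: a single scale per block, no zero point.
    """
    qmax = 2 ** (bits - 1) - 1                      # 7 for 4-bit
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0  # avoid dividing by zero
    quantized = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize_block(quantized, scale):
    """Recover approximate float weights from the integers and the scale."""
    return [q * scale for q in quantized]


# Hypothetical sample weights, not taken from any real model.
weights = [0.12, -0.53, 0.91, -0.07, 0.34, -0.88, 0.02, 0.65]

q, scale = quantize_block(weights)
restored = dequantize_block(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("quantized integers:", q)
print(f"scale: {scale:.4f}")
print(f"max round-trip error: {max_error:.4f}")
```

Each weight now needs only 4 bits plus a shared scale, and the round-trip error is bounded by half the scale. That small, bounded error is the "loss of detail" in the photo analogy.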

Analyzing the Results: A40_48GB's Prowess Revealed

The data reveals some interesting insights:

- Smaller Models, Faster Speeds: The A40_48GB shines with Llama 3 8B models, producing 88.95 tokens/second with the Q4_K_M configuration. That is about 2.6 times faster than the F16 configuration (33.95 tokens/second), showing the benefits of quantization.

- Larger Models, Challenges: While the A40_48GB can handle the Llama 3 70B model at Q4_K_M, the speed drops to 12.08 tokens/second, roughly 7 times slower than the 8B model at the same quantization. The F16 version of the 70B model has no result at all.

- Performance Gap: The difference between the 8B and 70B models is significant, highlighting the trade-off between model size and performance. Generating each token requires reading the model's weights from GPU memory, so the more bytes the weights occupy, the longer each token takes; memory bandwidth, not just raw compute, becomes the bottleneck.
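The ratios behind these observations, and a rough check of which configurations fit in 48GB, can be sketched in a few lines of Python. The speeds come from the table above. The bits-per-weight figure for the 4-bit k-quant format (written `Q4_K_M` here) is an approximation I am assuming, and the estimate covers the weights only, ignoring the KV cache and runtime overhead.

```python
GPU_MEMORY_GB = 48  # A40 memory capacity

# Measured generation speeds from the table above (tokens/second).
speeds = {
    ("8B", "Q4_K_M"): 88.95,
    ("8B", "F16"): 33.95,
    ("70B", "Q4_K_M"): 12.08,
}

# Assumed average bits per weight; the Q4_K_M value is an approximation.
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.8}
PARAMS_BILLIONS = {"8B": 8.0, "70B": 70.0}


def weight_footprint_gb(model, fmt):
    """Approximate size of the weights alone, in GB (10^9 bytes)."""
    return PARAMS_BILLIONS[model] * BITS_PER_WEIGHT[fmt] / 8


for model in PARAMS_BILLIONS:
    for fmt in BITS_PER_WEIGHT:
        size = weight_footprint_gb(model, fmt)
        verdict = "fits" if size < GPU_MEMORY_GB else "does not fit"
        speed = speeds.get((model, fmt))
        speed_text = f"{speed} tok/s" if speed is not None else "no data"
        print(f"Llama 3 {model} {fmt}: ~{size:.1f} GB, "
              f"{verdict} in {GPU_MEMORY_GB} GB, {speed_text}")

# Speed ratios between the measured configurations.
quant_speedup = speeds[("8B", "Q4_K_M")] / speeds[("8B", "F16")]
size_slowdown = speeds[("8B", "Q4_K_M")] / speeds[("70B", "Q4_K_M")]
print(f"8B: Q4_K_M is {quant_speedup:.2f}x faster than F16")
print(f"Q4_K_M: 8B is {size_slowdown:.2f}x faster than 70B")
```

Under these assumptions the 70B model at F16 needs about 140 GB for weights alone, far more than a single 48GB card offers. That is one plausible reason for the N/A entry, though the source data does not state why the result is missing.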

The ROI Equation: Justifying the Investment

Now, let's talk ROI. How do you measure the value of the A40_48GB for your specific needs? The key factor is the time-to-inference - how fast can you get results from your AI models?

Think of it like this:

- Scenario: You're working on a complex project that requires generating a large amount of text using a Llama 3 8B model.

- Option 1: A more affordable GPU: Suppose it delivers 10 tokens/second (an illustrative figure, not a benchmark result).

- Option 2: A40_48GB: You get 88.95 tokens/second.

The A40_48GB is almost 9 times faster in this scenario (88.95 / 10 ≈ 8.9), so a generation job that would take nine hours on the cheaper card finishes in about one. That means shorter iteration cycles, which directly impacts your productivity and overall project timeline.
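Here is a rough sketch of that arithmetic in Python, extended to cost per token. Only the 88.95 tokens/second figure comes from the benchmark data; the workload size, the 10 tokens/second baseline, and both hourly costs are hypothetical placeholders that you should replace with your own numbers.

```python
def generation_hours(total_tokens, tokens_per_second):
    """Wall-clock hours needed to generate total_tokens at a steady rate."""
    return total_tokens / tokens_per_second / 3600


def cost_per_million_tokens(hourly_cost, tokens_per_second):
    """Cost of generating one million tokens at a given hourly GPU cost."""
    tokens_per_hour = tokens_per_second * 3600
    return hourly_cost / tokens_per_hour * 1_000_000


TOTAL_TOKENS = 10_000_000   # hypothetical workload size
BASELINE_TPS = 10.0         # hypothetical cheaper GPU, from the scenario
A40_TPS = 88.95             # Llama 3 8B Q4 result from the benchmark table

# Hypothetical hourly costs (rental or amortized purchase), not real prices.
BASELINE_HOURLY_COST = 0.20
A40_HOURLY_COST = 1.00

baseline_hours = generation_hours(TOTAL_TOKENS, BASELINE_TPS)
a40_hours = generation_hours(TOTAL_TOKENS, A40_TPS)
speedup = A40_TPS / BASELINE_TPS

print(f"speedup: {speedup:.2f}x")
print(f"baseline: {baseline_hours:.1f} h, A40: {a40_hours:.1f} h")
print(f"hours saved: {baseline_hours - a40_hours:.1f}")

baseline_cost = cost_per_million_tokens(BASELINE_HOURLY_COST, BASELINE_TPS)
a40_cost = cost_per_million_tokens(A40_HOURLY_COST, A40_TPS)
print(f"cost per 1M tokens: baseline {baseline_cost:.2f}, A40 {a40_cost:.2f}")
```

The useful rule of thumb that falls out of this: the faster card is cheaper per token whenever its hourly cost is less than the speedup multiplied by the slower card's hourly cost.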

Furthermore, the A40_48GB's powerful hardware enables you to explore larger models and more complex tasks, opening up a world of possibilities for your projects.

A40_48GB: Not Just for LLMs

While we focused on LLMs, the A40_48GB is a versatile GPU that excels across a range of AI workloads, including:

- Deep Learning Training: Its massive memory and processing power enable you to train larger models faster.

- Computer Vision: The A40_48GB is ideal for processing high-resolution images and videos, crucial for tasks like object detection and image segmentation.

- Scientific Simulation: Its parallel computing capabilities accelerate simulations in areas like physics, chemistry, and climate science, particularly those that run well in single precision.

The A40_48GB is a powerful tool that can significantly boost the performance of various AI applications.

Case Study: Real-World Use Cases

Let's bring some real-world examples to the table:

- Startup Building AI Chatbots: A startup developing AI-powered chatbots needs to handle large volumes of user interactions. The A40_48GB allows them to scale their service efficiently, handling the increasing flow of data.

- Research Lab Exploring New Models: A research lab experimenting with new LLM architectures requires a high-performance GPU to test different model variations. The A40_48GB provides the horsepower needed to run these experiments quickly and efficiently.

- Gaming Company Implementing AI in Games: A gaming company wants to enhance its game with AI-powered characters. The A40_48GB enables them to create more realistic and engaging NPCs, enhancing the gaming experience.

The A40_48GB is a versatile solution across diverse AI applications, empowering developers, researchers, and businesses to reach new heights in their respective fields.

A40_48GB: Is It Right for You?

Now that we've explored the A40_48GB's capabilities, let's talk about its suitability for you and your work.

Consider these factors:

- Budget: The A40_48GB is a high-end GPU, making it a significant investment.

- Workloads: If you're working with large LLMs, demanding AI training, or high-resolution image/video processing, the A40_48GB is a powerful choice.

- Scalability: The A40_48GB is designed for multi-GPU servers, and two cards can be paired over an NVLink bridge, so you can scale your AI deployments as needed.

Ultimately, the decision depends on your specific requirements and budget.

FAQ: Clearing the Air

Q: What are LLMs?

A: LLMs are large language models, AI systems trained on massive datasets of text and code. They can generate text, translate languages, write different kinds of creative content, and answer your questions in an informative way. Think of it like a super-powered chatbot that can do amazing things!

Q: What is quantization?

A: Quantization is a technique used to reduce the size of AI models by converting their parameters to smaller data types. It's like compressing a file to make it smaller, but with some potential loss of detail. This can result in faster processing and lower memory usage, especially on smaller GPUs.

Q: What's the difference between token generation and token processing?

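A: Token generation refers to producing new text tokens (words or parts of words) one at a time, each based on the prompt and everything generated so far. This is the speed reported in the table above. Token processing (often called prompt processing) is the step before that, where the model reads the tokens of your input prompt. Because the whole prompt is already known, those tokens can be handled in parallel, so processing speeds are typically much higher than generation speeds.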

Q: What are the alternatives to the A40_48GB?

A: There are other powerful GPUs on the market, such as the NVIDIA RTX A6000 and NVIDIA H100, each with its own strengths and weaknesses. The best choice for you depends on your specific needs and budget.

Q: How do I get started with LLMs?

A: There are several resources available to get started with LLMs, including open-source tools like Hugging Face and tutorials from platforms like Google AI and OpenAI.

Keywords:

NVIDIA A40_48GB, Large Language Models, LLM, Llama 3, GPU, AI Acceleration, Token Generation, Quantization, ROI, AI Workloads, Deep Learning, Computer Vision, Data Science, Hardware, Performance, Efficiency, Inference, Case Studies, Applications