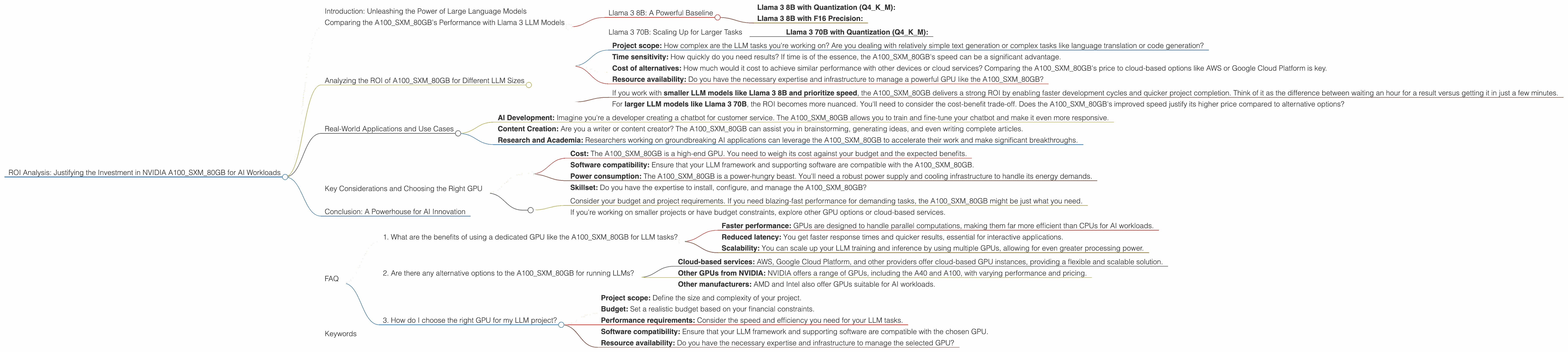

ROI Analysis: Justifying the Investment in NVIDIA A100 SXM 80GB for AI Workloads

Introduction: Unleashing the Power of Large Language Models

The world of artificial intelligence is buzzing with excitement over large language models (LLMs). These powerful AI systems can generate text, translate languages, write different kinds of creative content, and answer your questions in an informative way. Imagine having access to a super-smart AI assistant right on your desk, able to tackle tasks that would normally take hours or even days. That's the promise of LLMs.

But these LLMs are computationally hungry. They need powerful hardware to run smoothly. Think of it like this: a tiny, basic calculator can handle simple math problems, but you need a supercomputer to crunch complex equations for rocket science.

This is where NVIDIA's A100 SXM 80GB comes in. This data-center graphics processing unit (GPU) is designed for the massive workloads of AI applications, including training and running LLMs. But is it worth the investment? What are the real-world benefits? In this article, we'll look at the A100 SXM 80GB's measured performance with specific LLMs and analyze its return on investment (ROI).

Comparing the A100 SXM 80GB's Performance with Llama 3 LLM Models

Let's start with some specific examples. We'll focus on the Llama 3 family of LLMs, specifically Llama 3 8B and Llama 3 70B. Why Llama 3? It's a popular and powerful choice for developers, offering impressive performance for its size.

Llama 3 8B: A Powerful Baseline

Imagine Llama 3 8B as a marathon runner. It's fast, accurate, and generally considered a good starting point for many AI developers.

Llama 3 8B with Quantization (Q4_K_M):

The A100 SXM 80GB demonstrates impressive speed when running Llama 3 8B with quantization. Quantization is a bit like rounding the numbers in a complex formula: it stores the model's weights at lower precision, which shrinks the LLM without sacrificing too much accuracy. Because less data has to be read from memory for each token, the A100 SXM 80GB can handle the calculations more efficiently. It generates 133.38 tokens/second with Llama 3 8B quantized with Q4_K_M.

Llama 3 8B with F16 Precision:

Now let's switch to F16 precision, which stores each weight as a 16-bit floating-point number. Think of this as using a higher-resolution image for your work: it provides more detail, though it requires more memory and computational power. With Llama 3 8B in F16, the A100 SXM 80GB achieves a respectable 53.18 tokens/second.
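To make the two Llama 3 8B figures easier to compare, here is a minimal sketch that turns tokens/second into wall-clock time for a single response. The throughput values are the ones quoted above; the 500-token response length and the function name are illustrative assumptions, not part of the benchmark.

```python
# Throughput figures quoted in this article (tokens/second on the A100 SXM 80GB).
THROUGHPUT_TOK_PER_S = {
    "Llama 3 8B Q4_K_M": 133.38,
    "Llama 3 8B F16": 53.18,
}

# Assumption for illustration only: a typical response length.
RESPONSE_TOKENS = 500


def seconds_to_generate(num_tokens: int, tokens_per_second: float) -> float:
    """Time to generate num_tokens at a steady generation rate."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return num_tokens / tokens_per_second


for config, tps in THROUGHPUT_TOK_PER_S.items():
    secs = seconds_to_generate(RESPONSE_TOKENS, tps)
    print(f"{config}: {secs:.2f} s for {RESPONSE_TOKENS} tokens")

# Ratio of quantized to F16 throughput for the same model.
speedup = THROUGHPUT_TOK_PER_S["Llama 3 8B Q4_K_M"] / THROUGHPUT_TOK_PER_S["Llama 3 8B F16"]
print(f"Q4_K_M is about {speedup:.2f}x faster than F16")
```

On these numbers the quantized model is roughly 2.5 times faster than F16, so the choice between them is mainly a trade between response time and numerical precision.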

Llama 3 70B: Scaling Up for Larger Tasks

Moving on to Llama 3 70B, think of this as upgrading from a compact car to a spacious SUV. It's built for larger tasks and more complex projects.

Llama 3 70B with Quantization (Q4_K_M):

Even with the larger Llama 3 70B model, the A100 SXM 80GB still performs admirably. It can generate 24.33 tokens/second with quantized Llama 3 70B (Q4_K_M).
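The reason quantization matters so much for the larger model can be sketched with a rough weight-memory estimate. The snippet below is a back-of-the-envelope calculation, not a measurement: it counts weights only (no KV cache or activations), and the figure of about 4.8 bits per weight for Q4_K_M is an assumed average, since the exact value varies by model.

```python
GPU_MEMORY_GB = 80.0  # from the card's name: A100 SXM 80GB

F16_BITS = 16.0
Q4_K_M_BITS_ASSUMED = 4.8  # assumption: approximate average bits per weight


def weight_memory_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate memory for the model weights alone, in GB (10^9 bytes)."""
    return params_billion * bits_per_weight / 8


for name, params_billion in [("Llama 3 8B", 8.0), ("Llama 3 70B", 70.0)]:
    for label, bits in [("F16", F16_BITS), ("Q4_K_M", Q4_K_M_BITS_ASSUMED)]:
        size = weight_memory_gb(params_billion, bits)
        verdict = "fits" if size <= GPU_MEMORY_GB else "does not fit"
        print(f"{name} {label}: ~{size:.1f} GB of weights, {verdict} in {GPU_MEMORY_GB:.0f} GB")
```

Under these assumptions, Llama 3 70B in F16 needs about 140 GB for its weights alone, which exceeds a single 80 GB card, while the quantized version fits with room to spare for the KV cache.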

Analyzing the ROI of the A100 SXM 80GB for Different LLM Sizes

Let's get down to the nitty-gritty: the return on investment (ROI).

How do you calculate the ROI of an AI device?

It's not as simple as dividing the cost of the A100 SXM 80GB by the number of tokens it can generate. You need to consider the following factors:

- Project scope: How complex are the LLM tasks you're working on? Are you dealing with relatively simple text generation or complex tasks like language translation or code generation?

- Time sensitivity: How quickly do you need results? If time is of the essence, the A100 SXM 80GB's speed can be a significant advantage.

- Cost of alternatives: How much would it cost to achieve similar performance with other devices or cloud services? Comparing the A100 SXM 80GB's price to cloud-based options like AWS or Google Cloud Platform is key.

- Resource availability: Do you have the necessary expertise and infrastructure to manage a powerful GPU like the A100 SXM 80GB?

Here's a simplified view of the ROI:

- If you work with smaller LLM models like Llama 3 8B and prioritize speed, the A100 SXM 80GB delivers a strong ROI by enabling faster development cycles and quicker project completion. Think of it as the difference between waiting an hour for a result versus getting it in just a few minutes.

- For larger LLM models like Llama 3 70B, the ROI becomes more nuanced. You'll need to consider the cost-benefit trade-off. Does the A100 SXM 80GB's improved speed justify its higher price compared to alternative options?
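The cost-of-alternatives comparison can be sketched as a simple break-even calculation. Every dollar figure below is a hypothetical placeholder chosen only to make the script run; substitute real quotes for the hardware, your operating costs, and a comparable cloud instance. The function names are also illustrative. Only the tokens/second values come from this article.

```python
def break_even_hours(purchase_price_usd: float,
                     own_hourly_cost_usd: float,
                     cloud_hourly_rate_usd: float) -> float:
    """Hours of use after which buying becomes cheaper than renting.

    Returns infinity when running your own card costs at least as much
    per hour as the cloud rate, since the purchase never pays off.
    """
    saving_per_hour = cloud_hourly_rate_usd - own_hourly_cost_usd
    if saving_per_hour <= 0:
        return float("inf")
    return purchase_price_usd / saving_per_hour


def usd_per_million_tokens(hourly_cost_usd: float, tokens_per_second: float) -> float:
    """Cost of generating one million tokens at a steady rate."""
    tokens_per_hour = tokens_per_second * 3600
    return hourly_cost_usd / tokens_per_hour * 1_000_000


# Hypothetical placeholders, NOT real prices.
PURCHASE_PRICE_USD = 15_000.0
OWN_HOURLY_COST_USD = 0.20    # power, cooling, hosting per hour of use
CLOUD_HOURLY_RATE_USD = 2.00  # rented GPU instance

hours = break_even_hours(PURCHASE_PRICE_USD, OWN_HOURLY_COST_USD, CLOUD_HOURLY_RATE_USD)
print(f"Break-even after about {hours:,.0f} hours of use")

# Throughput figures quoted in this article.
for config, tps in [("Llama 3 8B Q4_K_M", 133.38), ("Llama 3 70B Q4_K_M", 24.33)]:
    cost = usd_per_million_tokens(CLOUD_HOURLY_RATE_USD, tps)
    print(f"{config}: ${cost:.2f} per million tokens at the cloud rate")
```

This sketch ignores utilization, resale value, and staff time, so treat the result as a starting point for the trade-off rather than an answer.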

Real-World Applications and Use Cases

Let's explore how the A100 SXM 80GB can be a valuable asset in various real-world scenarios:

- AI Development: Imagine you're a developer creating a chatbot for customer service. The A100 SXM 80GB allows you to train and fine-tune your chatbot and make it even more responsive.

- Content Creation: Are you a writer or content creator? The A100 SXM 80GB can assist you in brainstorming, generating ideas, and even writing complete articles.

- Research and Academia: Researchers working on groundbreaking AI applications can leverage the A100 SXM 80GB to accelerate their work and make significant breakthroughs.

Key Considerations and Choosing the Right GPU

Before rushing to buy an A100 SXM 80GB, there are some important factors to consider:

- Cost: The A100 SXM 80GB is a high-end GPU. You need to weigh its cost against your budget and the expected benefits.

- Software compatibility: Ensure that your LLM framework and supporting software are compatible with the A100 SXM 80GB.

- Power consumption: The A100 SXM 80GB is a power-hungry beast. You'll need a robust power supply and cooling infrastructure to handle its energy demands.

- Skillset: Do you have the expertise to install, configure, and manage the A100 SXM 80GB?
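To put the power-consumption point above in numbers, here is a rough upper-bound sketch of the energy used per million generated tokens. It assumes the GPU draws its full rated 400 W the whole time and counts the GPU alone (no host system or cooling); the electricity price is a hypothetical placeholder. The throughput values are the ones reported earlier in this article.

```python
GPU_POWER_WATTS = 400.0          # rated maximum board power of the SXM card
ELECTRICITY_USD_PER_KWH = 0.15   # hypothetical placeholder, varies by region


def energy_kwh_per_million_tokens(power_watts: float, tokens_per_second: float) -> float:
    """Energy to generate one million tokens at constant power draw."""
    hours = 1_000_000 / tokens_per_second / 3600
    return power_watts / 1000 * hours


for config, tps in [
    ("Llama 3 8B Q4_K_M", 133.38),
    ("Llama 3 8B F16", 53.18),
    ("Llama 3 70B Q4_K_M", 24.33),
]:
    kwh = energy_kwh_per_million_tokens(GPU_POWER_WATTS, tps)
    cost = kwh * ELECTRICITY_USD_PER_KWH
    print(f"{config}: ~{kwh:.2f} kWh, ~${cost:.2f} per million tokens")
```

Real draw during inference is usually below the rated maximum, so actual figures should come out lower than this estimate.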

Choosing the right GPU is a balancing act:

- Consider your budget and project requirements. If you need blazing-fast performance for demanding tasks, the A100 SXM 80GB might be just what you need.

- If you're working on smaller projects or have budget constraints, explore other GPU options or cloud-based services.

Conclusion: A Powerhouse for AI Innovation

The NVIDIA A100 SXM 80GB is a powerful and versatile GPU capable of handling the demanding workloads of AI, especially running large language models. Its performance metrics speak for themselves, but remember to carefully weigh factors like cost, power consumption, and the expertise you have available.

FAQ

1. What are the benefits of using a dedicated GPU like the A100 SXM 80GB for LLM tasks?

- Faster performance: GPUs are designed to handle parallel computations, making them far more efficient than CPUs for AI workloads.

- Reduced latency: You get faster response times and quicker results, essential for interactive applications.

- Scalability: You can scale up your LLM training and inference by using multiple GPUs, allowing for even greater processing power.

2. Are there any alternative options to the A100 SXM 80GB for running LLMs?

- Cloud-based services: AWS, Google Cloud Platform, and other providers offer cloud-based GPU instances, providing a flexible and scalable solution.

- Other GPUs from NVIDIA: NVIDIA offers a range of GPUs, including the A40 and the PCIe version of the A100, with varying performance and pricing.

- Other manufacturers: AMD and Intel also offer GPUs suitable for AI workloads.

3. How do I choose the right GPU for my LLM project?

- Project scope: Define the size and complexity of your project.

- Budget: Set a realistic budget based on your financial constraints.

- Performance requirements: Consider the speed and efficiency you need for your LLM tasks.

- Software compatibility: Ensure that your LLM framework and supporting software are compatible with the chosen GPU.

- Resource availability: Do you have the necessary expertise and infrastructure to manage the selected GPU?

Keywords

AI, LLM, GPU, NVIDIA, A100 SXM 80GB, Llama 3, Llama 3 8B, Llama 3 70B, Quantization, Q4_K_M, F16, Tokens/second, ROI, Cost, Performance, Software, Power Consumption, Cloud, AWS, GCP, AMD, Intel.