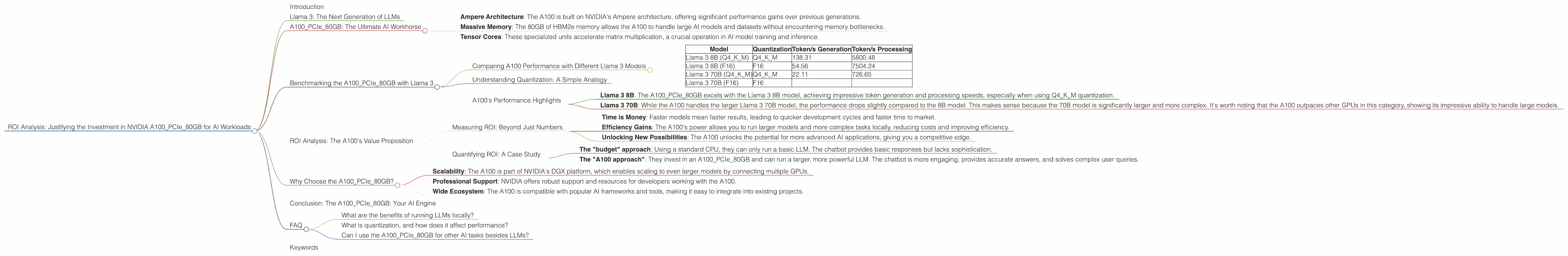

ROI Analysis: Justifying the Investment in NVIDIA A100 PCIe 80GB for AI Workloads

Introduction

The world of AI is exploding, with Large Language Models (LLMs) like Llama 3 changing the way we interact with technology. But running these models locally requires serious horsepower. This article focuses on the NVIDIA A100 PCIe 80GB, a powerhouse GPU designed for AI workloads, and analyzes its performance with Llama 3 models. We'll explore the Return on Investment (ROI) by comparing the A100's speed across Llama 3 model sizes and quantization levels.

Imagine wanting to run a giant language model on your computer, like having a super-smart AI assistant that can answer your questions and write stories. Think of the A100 as a powerful engine for this AI assistant. It makes the AI much faster and more efficient, just like a powerful engine makes your car go faster.

Llama 3: The Next Generation of LLMs

Llama 3, developed by Meta AI, is a powerful, openly available LLM that has taken the AI community by storm. This model is known for its impressive performance and ability to handle complex tasks. But to unleash its full potential, you need the right hardware, which is where the NVIDIA A100 PCIe 80GB comes in.

A100 PCIe 80GB: The Ultimate AI Workhorse

The A100 PCIe 80GB is a high-performance GPU specifically designed for AI workloads. It's packed with features that make it a top choice for running LLMs locally:

- Ampere Architecture: The A100 is built on NVIDIA's Ampere architecture, offering significant performance gains over previous generations.

- Massive Memory: The 80GB of HBM2e memory allows the A100 to handle large AI models and datasets without encountering memory bottlenecks.

- Tensor Cores: These specialized units accelerate matrix multiplication, a crucial operation in AI model training and inference.

Benchmarking the A100 PCIe 80GB with Llama 3

We'll analyze the A100 PCIe 80GB's performance with Llama 3 models using two key metrics: token generation speed and token processing speed.

Token generation speed measures how many tokens (the basic units of text) a model can output per second. It determines how quickly a model can generate text, translate languages, or write creative content.

Token processing speed (often called prompt processing or prefill speed) measures how many input tokens a model can read per second. This is important for tasks with long inputs, such as summarizing documents, sentiment analysis, or answering questions about a large context.
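To see how the two metrics combine, here is a minimal sketch that estimates the time for one request as prompt tokens divided by processing speed plus output tokens divided by generation speed. The function name and the request size (1,000-token prompt, 250-token answer) are assumptions for illustration; the two speeds are the Llama 3 8B 4-bit figures from the benchmark table in this article. Real latency also includes overheads this simple model ignores.

```python
def estimate_response_seconds(prompt_tokens, output_tokens,
                              processing_tps, generation_tps):
    """Rough latency model: read the whole prompt, then emit the output."""
    prompt_seconds = prompt_tokens / processing_tps   # prompt processing phase
    output_seconds = output_tokens / generation_tps   # token generation phase
    return prompt_seconds + output_seconds


# Hypothetical request size; speeds taken from the article's 8B 4-bit row.
seconds = estimate_response_seconds(
    prompt_tokens=1000,
    output_tokens=250,
    processing_tps=5800.48,
    generation_tps=138.31,
)
print(f"Estimated response time: {seconds:.2f} s")
```

With these inputs almost all of the time is spent generating the answer, which is why generation speed tends to dominate the experience of a chat-style application.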

Comparing A100 Performance with Different Llama 3 Models

| Model | Quantization | Generation (tokens/s) | Processing (tokens/s) |
|---|---|---|---|
| Llama 3 8B | Q4_K_M | 138.31 | 5800.48 |
| Llama 3 8B | F16 | 54.56 | 7504.24 |
| Llama 3 70B | Q4_K_M | 22.11 | 726.65 |
| Llama 3 70B | F16 | N/A | N/A |

Notes:

- Data for Llama 3 70B F16 is unavailable at the moment.

- Q4_K_M is a 4-bit quantization format from llama.cpp's family of "k-quants": the K marks the k-quant scheme and the M its medium variant, which keeps some tensors at higher precision. F16 stores the weights as standard 16-bit floating-point numbers.
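One plausible reason for the missing 70B F16 figure is memory: at 16 bits per weight, 70 billion parameters need roughly 140 GB for the weights alone, more than the card's 80 GB. The sketch below estimates weight sizes under stated assumptions: nominal parameter counts of 8 and 70 billion, and an assumed average of about 4.85 bits per weight for the 4-bit format (the real average depends on the model). It ignores the KV cache and activations, so actual memory use is higher.

```python
A100_MEMORY_GB = 80  # memory capacity of the card discussed in this article


def weight_memory_gb(params_billions, bits_per_weight):
    """Approximate size of the weights alone, in GB (1 GB = 1e9 bytes)."""
    return params_billions * bits_per_weight / 8


# 16 bits for F16 is exact; 4.85 bits for the 4-bit format is an assumed
# approximate average, because scales are stored alongside the 4-bit values.
configs = [
    ("Llama 3 8B, F16", 8, 16),
    ("Llama 3 8B, 4-bit", 8, 4.85),
    ("Llama 3 70B, F16", 70, 16),
    ("Llama 3 70B, 4-bit", 70, 4.85),
]

for name, params, bits in configs:
    size = weight_memory_gb(params, bits)
    verdict = "fits" if size < A100_MEMORY_GB else "does not fit"
    print(f"{name}: ~{size:.0f} GB of weights, {verdict} in {A100_MEMORY_GB} GB")
```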

Understanding Quantization: A Simple Analogy

Think of quantization like compressing a picture. You can reduce its file size, but you might lose some detail (quality). With LLMs, quantization shrinks the model's memory footprint and usually speeds up text generation, at the cost of some loss in output quality. In this analogy, F16 is the near-original image, while Q4_K_M is a well-tuned compressed version: much smaller, with only a modest loss of detail.
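The trade-off can be illustrated with a toy example. The sketch below rounds a handful of made-up weights to a fixed number of evenly spaced levels and measures how far the restored values drift from the originals. It is a deliberately simplified uniform scheme, not the block-wise method that real 4-bit formats use; the function names and the sample values are assumptions for illustration.

```python
def quantize_roundtrip(values, bits):
    """Map floats onto 2**bits evenly spaced levels and back (toy uniform scheme)."""
    levels = 2 ** bits - 1
    lo, hi = min(values), max(values)
    scale = (hi - lo) / levels                          # width of one step
    codes = [round((v - lo) / scale) for v in values]   # integers in [0, levels]
    return [lo + c * scale for c in codes]


# Made-up example weights, not taken from any real model.
weights = [-0.82, -0.31, -0.05, 0.0, 0.12, 0.47, 0.93]


def max_error(bits):
    """Largest absolute difference between original and restored weights."""
    restored = quantize_roundtrip(weights, bits)
    return max(abs(a - b) for a, b in zip(weights, restored))


print(f"4-bit max error: {max_error(4):.4f}")
print(f"8-bit max error: {max_error(8):.4f}")
```

Fewer bits mean wider steps between representable values, so the rounding error grows. Production formats reduce this loss by quantizing small blocks of weights separately, each with its own scale.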

A100's Performance Highlights

Here's a deeper look at the numbers:

- Llama 3 8B: The A100 PCIe 80GB handles the Llama 3 8B model comfortably. Q4_K_M generates text about 2.5 times faster than F16 (138.31 vs. 54.56 tokens/s), while F16 is faster at processing input (7504.24 vs. 5800.48 tokens/s).

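- Llama 3 70B: The A100 runs the larger Llama 3 70B model with Q4_K_M quantization, but throughput drops substantially compared to the 8B model: generation falls from 138.31 to 22.11 tokens/s (roughly 6 times slower) and processing from 5800.48 to 726.65 tokens/s (roughly 8 times slower). This makes sense because the 70B model has almost nine times as many parameters. Even so, about 22 tokens/s is still usable for interactive work, and running a 70B model on a single card is a practical benefit of the 80GB of memory.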
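The relative differences between configurations can be checked directly from the benchmark table. This short sketch only divides the reported figures; the dictionary layout and helper name are illustrative choices.

```python
# Tokens per second, copied from the benchmark table in this article.
generation = {
    ("8B", "4-bit"): 138.31,
    ("8B", "F16"): 54.56,
    ("70B", "4-bit"): 22.11,
}
processing = {
    ("8B", "4-bit"): 5800.48,
    ("8B", "F16"): 7504.24,
    ("70B", "4-bit"): 726.65,
}


def ratio(table, faster, slower):
    """How many times higher the first configuration's throughput is."""
    return table[faster] / table[slower]


gen_quant_gain = ratio(generation, ("8B", "4-bit"), ("8B", "F16"))
gen_size_cost = ratio(generation, ("8B", "4-bit"), ("70B", "4-bit"))
proc_size_cost = ratio(processing, ("8B", "4-bit"), ("70B", "4-bit"))
proc_f16_gain = ratio(processing, ("8B", "F16"), ("8B", "4-bit"))

print(f"8B generation, 4-bit vs F16:  {gen_quant_gain:.1f}x")
print(f"Generation, 8B vs 70B:        {gen_size_cost:.1f}x")
print(f"Processing, 8B vs 70B:        {proc_size_cost:.1f}x")
print(f"8B processing, F16 vs 4-bit:  {proc_f16_gain:.1f}x")
```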

ROI Analysis: The A100's Value Proposition

Now, let's talk about the real question: is the A100 PCIe 80GB worth the investment for your AI projects?

Measuring ROI: Beyond Just Numbers

ROI in AI can be tricky. You're not just buying a machine to make widgets; you're buying a tool to solve problems, generate new ideas, and maybe even revolutionize your industry.

- Time is Money: Faster models mean faster results, leading to quicker development cycles and faster time to market.

- Efficiency Gains: The A100's power allows you to run larger models and more complex tasks locally, which can reduce spending on cloud GPU time or hosted APIs.

- Unlocking New Possibilities: The A100 unlocks the potential for more advanced AI applications, giving you a competitive edge.

Quantifying ROI: A Case Study

Imagine a company developing a new AI-powered customer service chatbot. They choose between two options:

The "budget" approach: Using a standard CPU, they can only run a basic LLM. The chatbot provides basic responses but lacks sophistication.

The "A100 approach": They invest in an A100 PCIe 80GB and can run a larger, more powerful LLM. The chatbot is more engaging, provides accurate answers, and solves complex user queries.

The "A100 approach" may initially seem expensive, but it lets the company build a superior product that can attract more customers and generate more revenue. If that added revenue, together with any cost savings, exceeds the purchase and operating costs over the card's useful life, the investment pays for itself and delivers a positive return.
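To put numbers on a case like this, a simple payback and ROI calculation is a reasonable starting point. The sketch below is a minimal model under stated assumptions: every figure in it is a hypothetical placeholder in arbitrary currency units, not a quoted price or a measured benefit, and the function names are illustrative. Substitute your own hardware quote, power and hosting costs, and estimated monthly benefit.

```python
def payback_months(hardware_cost, monthly_benefit, monthly_operating_cost):
    """Months until the net monthly benefit covers the purchase; None if never."""
    net = monthly_benefit - monthly_operating_cost
    if net <= 0:
        return None
    return hardware_cost / net


def roi_percent(hardware_cost, monthly_benefit, monthly_operating_cost, months):
    """Net gain over the period as a percentage of the purchase cost."""
    net_gain = (monthly_benefit - monthly_operating_cost) * months - hardware_cost
    return 100 * net_gain / hardware_cost


# Hypothetical placeholder inputs, in arbitrary currency units.
hardware_cost = 15_000          # purchase price of the GPU and host share
monthly_benefit = 2_000         # added revenue plus avoided cloud spend
monthly_operating_cost = 500    # power, cooling, maintenance

payback = payback_months(hardware_cost, monthly_benefit, monthly_operating_cost)
roi_24 = roi_percent(hardware_cost, monthly_benefit, monthly_operating_cost, months=24)

print(f"Payback period: {payback:.1f} months")
print(f"ROI over 24 months: {roi_24:.0f}%")
```

With these placeholder inputs the card pays for itself in 10 months. The result is very sensitive to the monthly benefit estimate, so it is worth running the calculation with pessimistic and optimistic values as well.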

Why Choose the A100 PCIe 80GB?

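- Scalability: The A100 family scales beyond a single card. NVIDIA's DGX A100 systems connect multiple A100 GPUs (in the SXM form factor), and PCIe cards can be linked in pairs with an NVLink bridge or combined in multi-GPU servers to run even larger models.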

- Professional Support: NVIDIA offers robust support and resources for developers working with the A100.

- Wide Ecosystem: The A100 is compatible with popular AI frameworks and tools, making it easy to integrate into existing projects.

Conclusion: The A100 PCIe 80GB as Your AI Engine

The A100 PCIe 80GB is not just hardware; it's an enabler. It unlocks the power of advanced LLMs like Llama 3, allowing developers to achieve faster results, unlock new possibilities, and gain a competitive edge. While the A100 has a higher initial cost, the long-term benefits in terms of efficiency, speed, and innovative potential can make it a valuable investment for serious AI projects.

FAQ

What are the benefits of running LLMs locally?

Running LLMs locally gives you more control over data, improved security, and potentially faster performance. This is especially important for businesses handling sensitive data or requiring low latency.

What is quantization, and how does it affect performance?

Quantization is a technique for reducing the size of LLMs by storing their weights using fewer bits. This lowers memory use and usually speeds up text generation, but it may reduce output quality somewhat, depending on the quantization level used.

Can I use the A100 PCIe 80GB for other AI tasks besides LLMs?

The A100 PCIe 80GB is an excellent choice for a wide range of AI tasks, including machine learning, deep learning, computer vision, and natural language processing.

Keywords

NVIDIA A100 PCIe 80GB, Llama 3, Large Language Models, LLMs, AI, Artificial Intelligence, Machine Learning, Deep Learning, Token Speed, Token Generation, Token Processing, Quantization, ROI, Return on Investment, GPU, Graphics Processing Unit, Performance, Efficiency, Scalability, Ecosystem, AI Workloads.