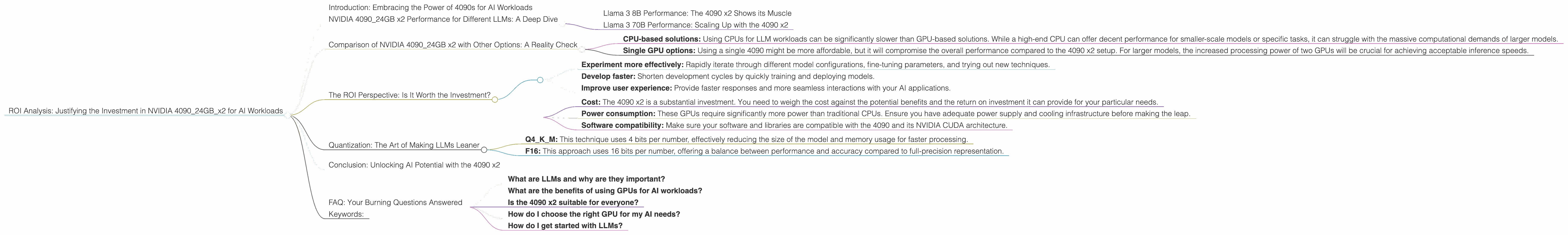

ROI Analysis: Justifying the Investment in NVIDIA 4090 24GB x2 for AI Workloads

Introduction: Embracing the Power of 4090s for AI Workloads

The world of large language models (LLMs) is buzzing with excitement. These powerful AI models are transforming the way we interact with computers, providing unprecedented capabilities in natural language processing, code generation, and more. But unleashing the full potential of LLMs requires serious computational horsepower. This is where the NVIDIA 4090, a beast of a graphics card, steps into the spotlight.

This article dives deep into the ROI (Return on Investment) of deploying two NVIDIA 4090 24GB GPUs for your AI workloads. We'll analyze performance data for several LLMs and explore how this setup can accelerate your AI projects. By the end, you'll have a clearer picture of whether this investment makes sense for your specific needs.

NVIDIA 4090_24GB x2 Performance for Different LLMs: A Deep Dive

Let's get down to the nitty-gritty. We'll focus on the Llama 3 family of models, specifically the 8B and 70B versions, which are popular choices for both research and practical applications. Remember, these results are based on specific setups and configurations. Your mileage may vary, so consider these as benchmarks rather than absolute guarantees.

Llama 3 8B Performance: The 4090 x2 Shows its Muscle

Q4_K_M Quantization:

This setup excels at handling 8B models with quantization. With Q4_K_M quantization (roughly 4 bits per weight), the two 4090s generate 122.56 tokens per second and process prompts at 8545 tokens per second. That's a lot of text being processed in the blink of an eye!

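F16 Precision:

F16 is not a quantized format; it stores each weight as a 16-bit floating-point number. Even so, the 4090s maintain impressive performance: 53.27 tokens per second for generation and 11094.51 tokens per second for prompt processing. Generation is slower than with Q4_K_M, most likely because every generated token requires reading the much larger 16-bit weights from GPU memory.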

To put this into perspective: Imagine you're working with a very large document. The 4090s can process this document many times faster than a typical CPU, giving you the speed you need to experiment with different models and parameters.
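To make these throughput figures more concrete, here is a minimal sketch that converts them into an approximate response time. The rates are the ones quoted above; the function name, the 2000-token prompt, and the 500-token reply are illustrative assumptions, and the estimate ignores model load time and other overhead.

```python
# Throughput figures quoted in this article, in tokens per second.
THROUGHPUT = {
    ("Llama 3 8B", "Q4_K_M"): {"prompt": 8545.0, "generation": 122.56},
    ("Llama 3 8B", "F16"): {"prompt": 11094.51, "generation": 53.27},
}


def estimate_latency_seconds(model, precision, prompt_tokens, output_tokens):
    """Rough response time: prompt processing time plus generation time."""
    rates = THROUGHPUT[(model, precision)]
    prompt_time = prompt_tokens / rates["prompt"]
    generation_time = output_tokens / rates["generation"]
    return prompt_time + generation_time


# Hypothetical request: a 2000-token prompt and a 500-token reply.
for model, precision in THROUGHPUT:
    seconds = estimate_latency_seconds(model, precision, 2000, 500)
    print(f"{model} {precision}: about {seconds:.1f} s")
```

For a request of this shape, generation time dominates, so the generation rate matters far more to perceived speed than the prompt-processing rate.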

Llama 3 70B Performance: Scaling Up with the 4090 x2

Q4_K_M Quantization:

While the 4090 x2 is a beast with LLMs, it doesn't effortlessly handle every task. For 70B models, performance drops but remains respectable. With Q4_K_M quantization, the 4090s achieve 19.06 tokens per second for generation and 905.38 tokens per second for prompt processing.

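F16 Precision:

We lack data for the 70B model at F16, and there is a likely reason. At 16 bits (2 bytes) per weight, 70 billion parameters need about 140 GB for the weights alone, far more than the 48 GB of combined VRAM on two 4090s. The model would have to be partly offloaded to system memory, so you should not expect performance anywhere near the Q4_K_M figures. For 70B models on this setup, quantization is effectively a requirement.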
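A quick back-of-the-envelope check shows which model and precision combinations can fit in the 48 GB of combined VRAM. The sketch below counts weights only; it ignores the KV cache and runtime overhead, so real usage will be higher. The figure of 4.8 bits per weight for Q4_K_M is an assumed average, not a number from this article's data.

```python
TOTAL_VRAM_GB = 2 * 24  # two 24 GB cards


def weight_memory_gb(params_billion, bits_per_weight):
    """Approximate size of the weights alone, in GB (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_weight / 8


def fits_in_vram(params_billion, bits_per_weight, vram_gb=TOTAL_VRAM_GB):
    """True if the weights alone fit; KV cache and overhead are ignored."""
    return weight_memory_gb(params_billion, bits_per_weight) <= vram_gb


# Bits per weight: 16 for F16; 4.8 is an assumed average for Q4_K_M.
configs = [
    ("Llama 3 8B", 8, "F16", 16),
    ("Llama 3 8B", 8, "Q4_K_M", 4.8),
    ("Llama 3 70B", 70, "F16", 16),
    ("Llama 3 70B", 70, "Q4_K_M", 4.8),
]

for name, params, precision, bits in configs:
    size = weight_memory_gb(params, bits)
    verdict = "fits" if fits_in_vram(params, bits) else "does not fit"
    print(f"{name} {precision}: ~{size:.1f} GB, {verdict} in {TOTAL_VRAM_GB} GB")
```

Under these assumptions, the 70B model at Q4_K_M leaves only a few gigabytes of headroom, which is worth keeping in mind when planning long context lengths.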

Comparison of NVIDIA 4090_24GB x2 with Other Options: A Reality Check

While the 4090 x2 is a powerhouse, it's important to consider other options and their performance. This will help you make informed decisions about your hardware investment. We lack data for other devices in this specific setup, so we'll be focusing only on the two 4090s for this comparison.

- CPU-based solutions: Using CPUs for LLM workloads can be significantly slower than GPU-based solutions. While a high-end CPU can offer decent performance for smaller-scale models or specific tasks, it can struggle with the massive computational demands of larger models.

- Single GPU options: A single 4090 is more affordable, but its 24 GB of VRAM limits which models fit entirely on the GPU. Larger models such as Llama 3 70B with Q4_K_M quantization need the combined 48 GB of two cards. A model that does not fit must offload part of its layers to system memory, which typically slows inference considerably.

The ROI Perspective: Is It Worth the Investment?

The beauty of the 4090 x2 is its versatility. This setup excels at handling a wide range of AI tasks, from text generation to code completion, and image and audio processing. The increased performance allows you to:

- Experiment more effectively: Rapidly iterate through different model configurations, fine-tuning parameters, and trying out new techniques.

- Develop faster: Shorten development cycles by quickly training and deploying models.

- Improve user experience: Provide faster responses and more seamless interactions with your AI applications.

Key Considerations:

- Cost: The 4090 x2 is a substantial investment. You need to weigh the cost against the potential benefits and the return on investment it can provide for your particular needs.

- Power consumption: These GPUs draw far more power than a typical CPU; each RTX 4090 is rated at 450 W. Ensure you have an adequate power supply and cooling before making the leap.

- Software compatibility: Make sure your software and libraries support the 4090 and the NVIDIA CUDA version you plan to run.
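One way to weigh these considerations is a simple break-even estimate against renting comparable GPU time. The sketch below is only an illustration: the hardware price, cloud rate, power draw, and electricity price are hypothetical placeholders, not figures from this article, so substitute your own quotes before drawing conclusions.

```python
def break_even_hours(hardware_cost, cloud_rate_per_hour,
                     power_kw, electricity_per_kwh):
    """Hours of use after which owning the hardware costs less than renting."""
    local_cost_per_hour = power_kw * electricity_per_kwh
    saving_per_hour = cloud_rate_per_hour - local_cost_per_hour
    if saving_per_hour <= 0:
        # Running locally costs at least as much per hour as renting.
        return float("inf")
    return hardware_cost / saving_per_hour


# All four inputs are hypothetical placeholders.
hours = break_even_hours(
    hardware_cost=4000.0,        # two cards, in your currency
    cloud_rate_per_hour=1.50,    # comparable rented GPU time
    power_kw=0.9,                # two cards under load
    electricity_per_kwh=0.20,
)
print(f"Break-even after about {hours:.0f} hours of use")
print(f"At 8 hours per day: about {hours / 8:.0f} days")
```

The estimate leaves out the rest of the machine, resale value, and maintenance, so treat it as a starting point rather than a full ROI model.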

Quantization: The Art of Making LLMs Leaner

One of the key factors influencing the performance of the 4090s is quantization. This technique reduces the precision of the numbers (weights) stored in the model. It might sound counterintuitive, but this reduction shrinks the model's memory footprint and can significantly boost generation speed without sacrificing much accuracy.

Imagine you're storing a number like 3.14159. You could either store the full value with all its digits (like a full-resolution image) or store a simplified version (like a low-resolution image). The simplified version would be smaller and easier to process, while still retaining the core information.

Types of Quantization:

- Q4_K_M: This format uses roughly 4 bits per weight, reducing the size of the model and its memory usage for faster generation.

- F16: This is 16-bit floating point, the unquantized baseline in this article. It uses half the memory of full 32-bit precision and preserves more accuracy than 4-bit formats, at the cost of a much larger model.

The Trade-off:

Quantization improves speed and reduces memory use, but it can slightly reduce the model's accuracy. Consider your application's requirements and choose the quantization level that fits them.
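The trade-off can be seen in a toy example. The sketch below applies a simple symmetric 4-bit quantization with one shared scale per block of weights, then restores the values and measures the error. It is a simplified illustration of the general idea, not the actual Q4_K_M format, which is more elaborate; the weight values are made up.

```python
def quantize_block(values, bits=4):
    """Map floats to small signed integers that share a single scale."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4 bits
    max_abs = max(abs(v) for v in values)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    codes = [round(v / scale) for v in values]
    return codes, scale


def dequantize_block(codes, scale):
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]


# Made-up weights for illustration.
weights = [0.12, -0.53, 0.98, -0.07, 0.31, -0.88, 0.44, 0.02]

codes, scale = quantize_block(weights)
restored = dequantize_block(codes, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("codes:   ", codes)
print("restored:", [round(r, 2) for r in restored])
print(f"largest error: {max_error:.3f} (at most half the scale, {scale / 2:.3f})")
```

Each weight is now stored as a small integer plus a share of one scale value, which is where the memory savings come from; the rounding error is the accuracy cost.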

Conclusion: Unlocking AI Potential with the 4090 x2

The 4090 x2 is a game-changer for AI enthusiasts and professionals. Its exceptional performance, supported by a robust ecosystem, empowers you to unleash the true potential of LLMs. By accelerating your AI projects, this investment can lead to faster development cycles, improved results, and a more rewarding journey into the world of AI.

While this article focused on the 4090 x2, the world of AI hardware is continuously evolving. Stay tuned for future developments and advancements that will further shape the landscape of AI research and development.

FAQ: Your Burning Questions Answered

What are LLMs and why are they important?

LLMs are powerful AI models capable of understanding and generating human-like text. They are revolutionizing how we interact with computers, enabling tasks like writing creative content, translating languages, and even writing code.

What are the benefits of using GPUs for AI workloads?

GPUs are designed for parallel processing, making them ideal for handling the massive computational demands of AI models. They can significantly speed up tasks like model training and inference, leading to faster results and improved efficiency.

Is the 4090 x2 suitable for everyone?

The 4090 x2 is a powerful setup that can be overkill for smaller-scale AI projects. However, it's an excellent investment if you work with large models or prioritize speed and efficiency.

How do I choose the right GPU for my AI needs?

Consider the size of your models, the type of tasks you're performing, and your budget when choosing a GPU.

How do I get started with LLMs?

There are various resources and tutorials available online to help you get started with LLMs. Look for resources on platforms like GitHub, Hugging Face, and Google Colab.

Keywords:

NVIDIA 4090, 4090_24GB_x2, GPU, AI, LLM, Large Language Model, Llama, Llama 3, Llama 3 8B, Llama 3 70B, Q4_K_M, F16, Quantization, Tokens per Second, GPU Performance, AI Hardware, AI Workloads, ROI, Return on Investment, AI Development, AI Research, AI Applications.