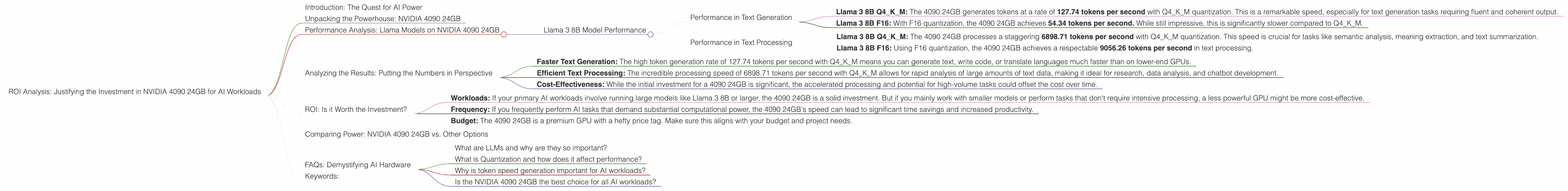

ROI Analysis: Justifying the Investment in NVIDIA 4090 24GB for AI Workloads

Introduction: The Quest for AI Power

As large language models (LLMs) grow more capable and more complex, so does the hardware needed to run them. The NVIDIA 4090 24GB graphics card is an appealing option for developers and enthusiasts who want to get the most out of these models. But is this high-end GPU worth the investment for AI workloads? This article looks at the performance of the NVIDIA 4090 24GB when running Llama 3 models and analyzes its ROI (Return on Investment) for AI tasks.

Unpacking the Powerhouse: NVIDIA 4090 24GB

The NVIDIA 4090 24GB is a beast of a GPU, with 24GB of VRAM and 16,384 CUDA cores, designed to tackle demanding workloads. This GPU is not just for gaming; it is also well suited to AI development, rendering, and other computationally intensive applications. But how does it fare specifically with AI models?

Performance Analysis: Llama Models on NVIDIA 4090 24GB

Let's dive into the real meat of the matter. The NVIDIA 4090 24GB's performance for Llama models is a crucial factor in determining its ROI.

Llama 3 8B Model Performance

We'll analyze the performance of the NVIDIA 4090 24GB with the Llama 3 8B model in two weight formats: Q4KM (a 4-bit quantization, presumably llama.cpp's Q4_K_M format) and F16 (16-bit half precision, which is the unquantized baseline rather than a quantization scheme). Quantization reduces the size of a model by storing its weights with fewer bits, at the cost of a small loss in accuracy. Think of it like compressing a large image file without losing too much quality.
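To make the idea concrete, here is a minimal sketch of block-wise 4-bit quantization in plain Python. It is a simplified illustration, not the actual Q4KM algorithm: the function names, the sample weights, and the symmetric absmax scheme are all assumptions chosen for readability.

```python
def quantize_block(weights, bits=4):
    """Symmetric absmax quantization of one block of floats (illustrative only)."""
    qmax = 2 ** (bits - 1) - 1                 # 7 for 4-bit signed codes
    absmax = max(abs(w) for w in weights)
    scale = absmax / qmax if absmax else 1.0   # one float scale per block
    # Round each weight to the nearest integer step and clamp to the code range.
    codes = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return scale, codes


def dequantize_block(scale, codes):
    """Recover approximate float weights from the scale and integer codes."""
    return [scale * c for c in codes]


# Hypothetical weights for a single block.
weights = [0.12, -0.53, 0.98, -0.07, 0.31, -0.88, 0.44, 0.02]
scale, codes = quantize_block(weights)
restored = dequantize_block(scale, codes)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("codes:", codes)
print(f"scale: {scale:.3f}, max abs error: {max_error:.3f}")
```

Each weight is stored as a small integer plus one shared scale per block, which is where the memory saving comes from. The rounding error is bounded by half a quantization step, which is the accuracy cost mentioned above.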

Performance in Text Generation

- Llama 3 8B Q4KM: The 4090 24GB generates tokens at a rate of 127.74 tokens per second with Q4KM quantization. This is far faster than a person can read, so it comfortably supports interactive text generation.

- Llama 3 8B F16: With F16 (unquantized half-precision) weights, the 4090 24GB achieves 54.34 tokens per second. While still usable, this is less than half the Q4KM rate: Q4KM generates about 2.35 times faster.

Performance in Text Processing

- Llama 3 8B Q4KM: The 4090 24GB processes 6898.71 input tokens per second with Q4KM quantization. This speed matters for tasks that read a lot of text, such as semantic analysis, meaning extraction, and text summarization.

- Llama 3 8B F16: Using F16 weights, the 4090 24GB achieves 9056.26 tokens per second in text processing. Unlike in text generation, F16 is the faster format here, by about 31%.

Note: No performance data is available for the Llama 3 70B model on the NVIDIA 4090 24GB, likely due to the model's size exceeding the GPU's memory capacity.
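A rough back-of-the-envelope check supports the memory explanation. The sketch below estimates the size of the weights alone from the parameter count and bits per weight. The 4.85 bits-per-weight figure for Q4KM is an assumed average, the parameter counts are the nominal 8 and 70 billion, and the function name is made up for this example.

```python
GPU_VRAM_GB = 24  # NVIDIA 4090 24GB

# Bits per weight. 16 for F16 is exact; the Q4KM value is an assumed
# average, since 4-bit formats also store per-block scales.
BITS_PER_WEIGHT = {"F16": 16.0, "Q4KM": 4.85}


def weight_size_gb(params_billion, bits_per_weight):
    """Approximate size of the model weights in GB (1 GB = 1e9 bytes)."""
    return params_billion * 1e9 * bits_per_weight / 8 / 1e9


for params in (8, 70):
    for fmt, bits in BITS_PER_WEIGHT.items():
        size = weight_size_gb(params, bits)
        verdict = "fits" if size < GPU_VRAM_GB else "does not fit"
        print(f"Llama 3 {params}B {fmt}: ~{size:.1f} GB, {verdict} in {GPU_VRAM_GB} GB")
```

Under these assumptions, both 8B variants fit in 24GB while neither 70B variant does. The estimate ignores the KV cache and other runtime buffers, so real memory use is somewhat higher than the figures printed here.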

Analyzing the Results: Putting the Numbers in Perspective

The performance figures show that the NVIDIA 4090 24GB handles Llama 3 8B comfortably. Q4KM quantization is the better choice for text generation, while F16 is somewhat faster at text processing. Here's a breakdown of the benefits:

- Faster Text Generation: The token generation rate of 127.74 tokens per second with Q4KM means you can generate text, write code, or translate languages about 2.35 times faster than with F16 on the same card.

- Efficient Text Processing: The processing speed of 6898.71 tokens per second with Q4KM allows for rapid analysis of large amounts of text data, making the card well suited to research, data analysis, and chatbot development.

- Cost-Effectiveness: While the initial investment for a 4090 24GB is significant, the time saved on high-volume tasks could offset the cost over time, provided the card is kept busy.
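To see how the two rates combine in practice, the following sketch estimates the time for a batch job from the figures reported above. The workload (1,000 documents, 2,000 input tokens and 200 output tokens each) is hypothetical, and the model assumes requests run one after another with no other overhead.

```python
# Rates reported in this article for Llama 3 8B on the 4090 24GB (tokens/second).
RATES = {
    "Q4KM": {"processing": 6898.71, "generation": 127.74},
    "F16": {"processing": 9056.26, "generation": 54.34},
}


def request_seconds(fmt, prompt_tokens, output_tokens):
    """Estimated time for one request: read the prompt, then generate the output."""
    rate = RATES[fmt]
    return prompt_tokens / rate["processing"] + output_tokens / rate["generation"]


# Hypothetical workload: summarize 1,000 documents.
DOCUMENTS = 1000
PROMPT_TOKENS = 2000
OUTPUT_TOKENS = 200

totals = {}
for fmt in RATES:
    totals[fmt] = DOCUMENTS * request_seconds(fmt, PROMPT_TOKENS, OUTPUT_TOKENS)
    print(f"{fmt}: {totals[fmt] / 60:.1f} minutes")
```

With these inputs the job takes roughly 31 minutes with Q4KM and 65 minutes with F16. Generation dominates the total even though each document has ten times more input than output, which is why the generation rate usually matters more than the processing rate.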

ROI: Is it Worth the Investment?

The ROI of an NVIDIA 4090 24GB for AI workloads depends on several factors:

- Workloads: If your primary AI workloads involve running models in the Llama 3 8B class, which fit within 24GB of VRAM, the 4090 24GB is a solid investment. Much larger models such as Llama 3 70B are likely to exceed its memory, and if you mainly work with smaller models or perform tasks that don't require intensive processing, a less powerful GPU might be more cost-effective.

- Frequency: If you frequently perform AI tasks that demand substantial computational power, the 4090 24GB's speed can lead to significant time savings and increased productivity.

- Budget: The 4090 24GB is a premium GPU with a hefty price tag. Make sure this aligns with your budget and project needs.
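One simple way to weigh these factors is a break-even estimate against renting a comparable GPU by the hour. The sketch below is only an illustration: the purchase price, rental rate, power draw, and electricity price are placeholder assumptions, not figures from this article, and the function name is hypothetical.

```python
def break_even_hours(gpu_price, cloud_rate_per_hour, watts, electricity_per_kwh):
    """Hours of use after which buying costs less than renting.

    Returns None if running the card locally is not cheaper per hour.
    """
    local_cost_per_hour = watts / 1000 * electricity_per_kwh
    saving_per_hour = cloud_rate_per_hour - local_cost_per_hour
    if saving_per_hour <= 0:
        return None
    return gpu_price / saving_per_hour


# Placeholder inputs; replace them with your own numbers.
hours = break_even_hours(
    gpu_price=1800.0,          # assumed purchase price
    cloud_rate_per_hour=0.50,  # assumed rental price for a comparable GPU
    watts=450,                 # assumed power draw under load
    electricity_per_kwh=0.15,  # assumed electricity price
)

HOURS_PER_DAY = 8  # assumed daily usage
print(f"Break-even after about {hours:.0f} hours")
print(f"That is about {hours / HOURS_PER_DAY:.0f} days at {HOURS_PER_DAY} hours per day")
```

The estimate leaves out the rest of the machine, resale value, and idle time, so treat it as a starting point. The main takeaway is that the frequency factor above dominates: the more hours the card is busy, the sooner it pays for itself.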

Comparing Power: NVIDIA 4090 24GB vs. Other Options

While the 4090 24GB is a top choice for AI workloads, it's not the only option.

Note: This article focuses on the NVIDIA 4090 24GB's performance and does not provide a comprehensive comparison with other devices. To compare it with other GPUs, look for benchmarks that use the same model and quantization settings.

FAQs: Demystifying AI Hardware

What are LLMs and why are they so important?

LLMs are a type of artificial intelligence model designed to understand and generate human-like language. They are trained on massive amounts of text data and can perform tasks like translation, summarization, code generation, and even creative writing. LLMs are revolutionizing various industries, from customer service to research.

What is Quantization and how does it affect performance?

Quantization is a technique used to reduce the size of large language models (LLMs) by storing their weights with fewer bits, while keeping accuracy close to the original. Imagine compressing a large image without losing too much quality. This technique is crucial for deploying LLMs on resource-constrained devices like phones or web browsers. Quantization reduces the memory footprint of LLMs, which can enable faster computations and lower power consumption. It's a trade-off: you give up a little accuracy in exchange for speed and memory efficiency.

Why is token generation speed important for AI workloads?

Token generation speed measures how quickly a model running on a given GPU produces output. In AI models, text is broken down into individual units called "tokens." A higher token generation speed means the model can generate text faster, leading to shorter response times and smoother user experiences.

Is the NVIDIA 4090 24GB the best choice for all AI workloads?

While the NVIDIA 4090 24GB is a powerful GPU for AI workloads, it's not necessarily the best choice for every scenario. Factors like workload complexity, model size, and your budget all play a role in selecting the optimal GPU.

Keywords:

NVIDIA 4090 24GB, GPU, AI, LLM, Llama 3, Llama 3 8B, performance, ROI, token speed, text generation, text processing, quantization, Q4KM, F16, AI hardware, cost-effectiveness, AI development, LLM models, AI applications, AI research, CUDA cores, VRAM, AI workload, data analysis, chatbot