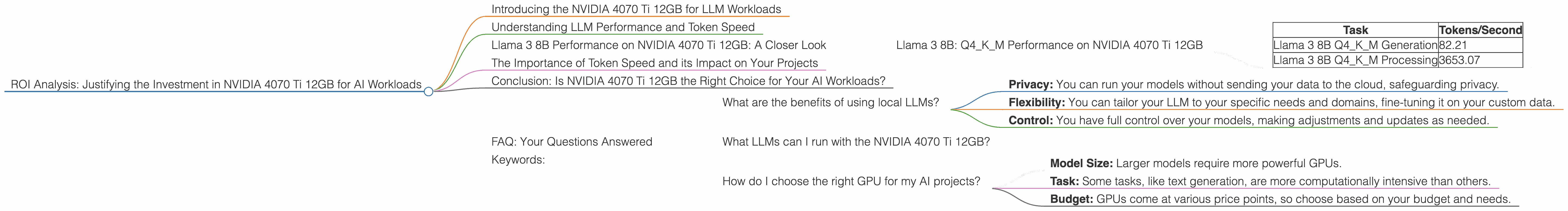

ROI Analysis: Justifying the Investment in NVIDIA 4070 Ti 12GB for AI Workloads

Introducing the NVIDIA 4070 Ti 12GB for LLM Workloads

You're a developer, a geek, and you want to dive into the world of local Large Language Models (LLMs). Now, you're probably facing a major dilemma: which GPU should you choose to power your AI adventures? Enter the NVIDIA 4070 Ti 12GB, a beast of a graphics card that's not just for gaming. But is it the right choice for your AI needs? Let's break it down, with numbers and insights, to see if this graphics card is worth the investment.

Understanding LLM Performance and Token Speed

Think of an LLM as a super smart chatbot, a digital wizard that understands and generates text. But how do we measure its power? We use tokens, the building blocks of text. The more tokens your GPU can process per second, the faster your LLM will generate responses. We'll see how the NVIDIA 4070 Ti 12GB handles various LLMs using tokens per second as our metric.
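To make the metric concrete, here is a minimal sketch of how tokens per second can be measured: time one generation call and divide the token count by the elapsed time. `fake_generate` is a hypothetical stand-in for a real model call, not part of any library, and the helper name `tokens_per_second` is our own.

```python
import time
from typing import Callable, List


def tokens_per_second(generate: Callable[[str], List[str]], prompt: str) -> float:
    """Time one call to `generate` and return generated tokens per second."""
    start = time.perf_counter()
    tokens = generate(prompt)
    elapsed = time.perf_counter() - start
    return len(tokens) / elapsed


def fake_generate(prompt: str) -> List[str]:
    # Hypothetical stand-in for a real model: sleeps briefly to simulate work,
    # then returns the prompt's words as if they were generated tokens.
    time.sleep(0.05)
    return prompt.split()


rate = tokens_per_second(fake_generate, "a b c d e")
print(f"{rate:.1f} tokens/second")
```

With a real model, you would swap `fake_generate` for your runtime's generation call and average over several runs, since a single timing can be noisy.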

Llama 3 8B Performance on NVIDIA 4070 Ti 12GB: A Closer Look

Let's start with the popular Llama 3 8B model. This model strikes a good balance between size and performance, making it an excellent choice for many LLM applications. We'll look at one technique, quantization, and the two weight formats it gives us to compare:

- Quantization: Think of quantization as squeezing a giant file into a smaller one without losing too much detail. Each model weight is stored with fewer bits.

- Q4KM: A 4-bit quantized format (Q4_K_M in llama.cpp's naming) that stores the weights at roughly 4 bits each, significantly reducing the memory footprint and speeding up generation.

- F16: The 16-bit floating-point format. It keeps more precision than Q4KM, but at 2 bytes per weight an 8B model needs about 16 GB for the weights alone, which is more than this card's 12 GB.
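A rough way to see what these formats mean for a 12 GB card is to estimate weight memory as parameters times bits per weight. The sketch below assumes a rounded 8 billion parameters and an average of about 4.5 bits per weight for Q4KM (an assumption; the true average depends on the quantization scheme). It ignores the KV cache and runtime overhead, so real usage will be higher.

```python
def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate memory for the model weights alone, in GB (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


def fits_in_vram(weights_gb: float, vram_gb: float = 12.0) -> bool:
    """True if the weights alone fit; leaves no margin for KV cache or overhead."""
    return weights_gb < vram_gb


N_PARAMS = 8e9  # "8B", rounded (assumption)

f16_gb = weight_memory_gb(N_PARAMS, 16)   # 16-bit floats
q4_gb = weight_memory_gb(N_PARAMS, 4.5)   # assumed average bits for Q4KM

print(f"F16:  {f16_gb:.1f} GB, fits in 12 GB: {fits_in_vram(f16_gb)}")
print(f"Q4KM: {q4_gb:.1f} GB, fits in 12 GB: {fits_in_vram(q4_gb)}")
```

Under these assumptions the F16 weights come to 16 GB and the Q4KM weights to 4.5 GB, which is why the quantized model is the natural fit for this card.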

Llama 3 8B: Q4KM Performance on NVIDIA 4070 Ti 12GB

| Task | Tokens/Second |
|---|---|
| Llama 3 8B Q4KM Generation | 82.21 |
| Llama 3 8B Q4KM Processing | 3653.07 |

What does this mean for you?

The NVIDIA 4070 Ti 12GB shines with the Llama 3 8B Q4KM model. It handles about 3,653 tokens per second in processing, the stage where the model reads your prompt and builds up context before it writes anything. At that rate, a 1,000-token prompt is read in under a third of a second. Generation, where the model writes the reply one token at a time, is the slower stage, but at 82.21 tokens per second the text still appears faster than most people can read it.
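To turn these two rates into something you can feel, here is a small back-of-the-envelope sketch that estimates the total response time for one request. The rates come from the table above; the prompt and reply lengths (1,000 and 200 tokens) are illustrative assumptions, and the estimate ignores model load time and any other overhead.

```python
PROCESSING_TPS = 3653.07  # prompt processing rate, from the table
GENERATION_TPS = 82.21    # generation rate, from the table


def response_time_s(prompt_tokens: int, output_tokens: int) -> float:
    """Estimated seconds to read the prompt and then generate the reply."""
    processing = prompt_tokens / PROCESSING_TPS
    generation = output_tokens / GENERATION_TPS
    return processing + generation


# Illustrative request sizes (assumptions, not measurements)
total = response_time_s(prompt_tokens=1000, output_tokens=200)
print(f"Estimated response time: {total:.2f} s")
```

For this example the estimate comes out at about 2.7 seconds, and almost all of it is generation. The length of the reply, not the length of the prompt, is what you will mostly be waiting on.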

Remember: we don't have F16 performance data for the 4070 Ti 12GB. However, you can explore other resources like the GPU Benchmarks on LLM Inference repository to investigate its potential performance.

The Importance of Token Speed and its Impact on Your Projects

Think about it this way: these numbers measure inference, that is, running a model rather than training one. With fast token processing, your LLM reads long prompts sooner, responds to you quicker, and gives you a smoother AI experience overall.

For example, imagine you're building a chatbot for customer service. With a faster GPU, you can handle more customer interactions simultaneously, ensuring a seamless experience for your users. Or, if you're developing a creative writing tool, you can generate text at lightning speed, sparking your imagination and unlocking new creative possibilities.

Conclusion: Is NVIDIA 4070 Ti 12GB the Right Choice for Your AI Workloads?

The NVIDIA 4070 Ti 12GB proves itself as a capable and efficient GPU for AI work with the Llama 3 8B model, especially with Q4KM quantization.

But, remember, the ideal GPU choice is unique to your needs. Consider the models you'll be working with, the specific tasks you'll perform, and the budget you have. It's all about finding the perfect balance between performance, cost, and energy efficiency.
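Since this is ultimately an ROI question, one way to weigh performance against cost and energy is to estimate the electricity cost per million generated tokens and a break-even point against a paid API. The sketch below uses the measured generation rate from the table. Every other number (card price, power draw, electricity price, API price) is a placeholder assumption that you should replace with your own figures.

```python
import math

GENERATION_TPS = 82.21  # generation rate, from the table


def energy_cost_per_million_tokens(tps: float, watts: float, price_per_kwh: float) -> float:
    """Electricity cost of generating one million tokens at a steady rate."""
    hours = 1_000_000 / tps / 3600
    return hours * (watts / 1000) * price_per_kwh


def break_even_million_tokens(card_price: float, api_price_per_million: float,
                              local_cost_per_million: float) -> float:
    """Millions of tokens after which the card has paid for itself."""
    saving = api_price_per_million - local_cost_per_million
    if saving <= 0:
        return math.inf  # the card never pays off on token cost alone
    return card_price / saving


# Placeholder assumptions, not measurements or quoted prices
CARD_PRICE = 800.0    # purchase price, in your currency
POWER_WATTS = 285.0   # assumed draw under load
PRICE_PER_KWH = 0.15  # assumed electricity price
API_PRICE = 1.0       # assumed API price per million tokens

local = energy_cost_per_million_tokens(GENERATION_TPS, POWER_WATTS, PRICE_PER_KWH)
needed = break_even_million_tokens(CARD_PRICE, API_PRICE, local)
print(f"Local energy cost: {local:.3f} per million tokens")
print(f"Break-even after about {needed:.0f} million tokens")
```

This deliberately leaves out the rest of the PC, idle power, and your own time, so treat the result as a rough starting point rather than a full cost model.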

FAQ: Your Questions Answered

What are the benefits of using local LLMs?

Local LLMs offer several advantages:

- Privacy: You can run your models without sending your data to the cloud, safeguarding privacy.

- Flexibility: You can tailor your LLM to your specific needs and domains, fine-tuning it on your custom data.

- Control: You have full control over your models, making adjustments and updates as needed.

What LLMs can I run with the NVIDIA 4070 Ti 12GB?

The NVIDIA 4070 Ti 12GB is a versatile GPU, capable of running any LLM whose weights fit in its 12 GB of memory, which includes Llama 3 8B with Q4KM quantization. Performance depends on the model's size and how it is quantized. Larger models like Llama 3 70B need roughly 35 GB for the weights even at 4 bits each, so for those you should consider a GPU with more memory.

How do I choose the right GPU for my AI projects?

Consider these factors:

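- Model Size: Larger models need more GPU memory first and more speed second. If the weights don't fit in VRAM, raw performance won't help.

- Task: Prompt processing and text generation stress the GPU differently. In the table above, generation runs at 82.21 tokens per second versus 3653.07 for processing, so generation-heavy workloads are the ones to size for.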

- Budget: GPUs come at various price points, so choose based on your budget and needs.
