ROI Analysis: Justifying the Investment in NVIDIA 3090 24GB x2 for AI Workloads

Introduction

This article delves into the Return on Investment (ROI) of deploying two NVIDIA 3090_24GB GPUs for AI workloads, specifically focusing on local Large Language Models (LLMs). We'll examine the performance of this setup with Llama 3, one of the most popular open-source LLMs, and see if this investment is justified for developers and geeks exploring the world of local AI.

Think of LLMs as powerful brains capable of understanding and generating human-like text. They are used in various applications, from generating creative content to answering complex questions. Running these LLMs locally offers several advantages, including enhanced privacy, better control over data, and the ability to customize models according to specific needs.

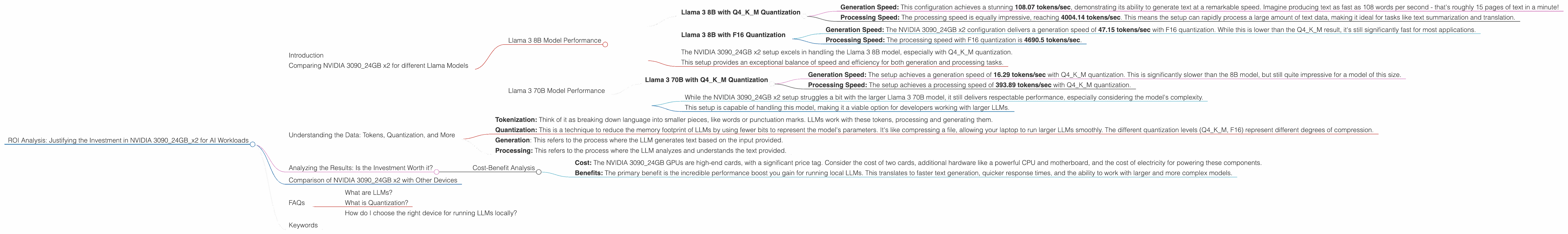

Comparing NVIDIA 3090_24GB x2 for different Llama Models

We'll compare the performance of the NVIDIA 3090_24GB x2 setup with different Llama models using metrics like tokens per second (tokens/sec) for generation and processing tasks. This will allow us to understand how this powerful hardware performs with various LLM sizes and configurations.

Llama 3 8B Model Performance

Let's start by examining the performance of the NVIDIA 3090_24GB x2 setup with the Llama 3 8B model.

Llama 3 8B with Q4_K_M Quantization

- Generation Speed: This configuration reaches 108.07 tokens/sec. A token is not quite a word; at a rule-of-thumb 0.75 English words per token, that is roughly 80 words per second, far faster than anyone can read.

- Processing Speed: Prompt processing, the rate at which the model ingests input text, reaches 4004.14 tokens/sec. That makes the setup well suited to long-input tasks such as text summarization and translation.
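To put the generation figure in human terms, here is a minimal sketch that converts tokens/sec into approximate words/sec and into the time needed to produce a reply. The 0.75 words-per-token ratio is an assumption (a common rule of thumb for English text, not a measured value), and the 500-token reply length is purely illustrative.

```python
# Assumption: ~0.75 English words per token. The real ratio depends on the
# tokenizer and on the text being generated.
WORDS_PER_TOKEN = 0.75


def tokens_to_words_per_sec(tokens_per_sec: float,
                            words_per_token: float = WORDS_PER_TOKEN) -> float:
    """Approximate words generated per second for a given token rate."""
    return tokens_per_sec * words_per_token


def seconds_to_generate(num_tokens: int, tokens_per_sec: float) -> float:
    """Time in seconds to generate num_tokens at a steady token rate."""
    return num_tokens / tokens_per_sec


gen_speed = 108.07  # Llama 3 8B, Q4_K_M, generation speed from this article
print(f"{tokens_to_words_per_sec(gen_speed):.1f} words/sec (approx.)")
print(f"{seconds_to_generate(500, gen_speed):.2f} s for a 500-token reply")
```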

Llama 3 8B with F16 Precision

- Generation Speed: At F16 (16-bit floating point, meaning the weights are not quantized), the NVIDIA 3090_24GB x2 configuration generates 47.15 tokens/sec. That is less than half the Q4_K_M result, but still fast enough for most interactive applications.

- Processing Speed: Prompt processing at F16 reaches 4690.5 tokens/sec, somewhat higher than the 4004.14 tokens/sec measured with Q4_K_M.

Key Takeaways:

- The NVIDIA 3090_24GB x2 setup handles the Llama 3 8B model comfortably. Q4_K_M generates text about 2.3 times faster than F16.

- F16 processes prompts about 17% faster than Q4_K_M, so the better choice depends on whether your workload is dominated by long inputs or by long outputs.
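The comparison between the two 8B configurations can be checked directly from the figures reported above. The sketch below only divides the published numbers; the dictionary layout and function name are illustrative choices, not part of any benchmark tool.

```python
# Llama 3 8B results on 2x RTX 3090 (tokens/sec), as reported in this article.
RESULTS_8B = {
    "Q4_K_M": {"generation": 108.07, "processing": 4004.14},
    "F16": {"generation": 47.15, "processing": 4690.5},
}


def q4_vs_f16_ratio(metric: str) -> float:
    """How many times faster (or slower, if < 1) Q4_K_M is than F16."""
    return RESULTS_8B["Q4_K_M"][metric] / RESULTS_8B["F16"][metric]


for metric in ("generation", "processing"):
    print(f"{metric}: Q4_K_M is {q4_vs_f16_ratio(metric):.2f}x the F16 speed")
```

A ratio above 1 means Q4_K_M is faster for that metric; a ratio below 1 means F16 is faster.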

Llama 3 70B Model Performance

Now, let's shift our focus to the larger Llama 3 70B model. This model is much more complex, requiring more memory and processing power.

Llama 3 70B with Q4_K_M Quantization

- Generation Speed: The setup generates 16.29 tokens/sec with Q4_K_M quantization. That is about 6.6 times slower than the 8B model at the same quantization, but still usable for a model of this size.

- Processing Speed: Prompt processing reaches 393.89 tokens/sec with Q4_K_M quantization.

Key Takeaways:

- While the NVIDIA 3090_24GB x2 setup struggles a bit with the larger Llama 3 70B model, it still delivers respectable performance, especially considering the model's complexity.

- This setup is capable of handling this model, making it a viable option for developers working with larger LLMs.

Important Note: Performance data for Llama 3 70B at F16 precision is not available in this analysis.
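One plausible reason for the missing F16 figure is memory: two 24 GB cards provide 48 GB of VRAM in total. The sketch below estimates the space needed for the weights alone. It ignores the KV cache, activations, and runtime overhead, and the 4.8 bits-per-weight average used for Q4_K_M is an assumption (the effective figure varies by model), so treat the output as a rough lower bound rather than a measurement.

```python
TOTAL_VRAM_GB = 2 * 24  # two RTX 3090 cards

# Approximate bits stored per weight.
BITS_PER_WEIGHT = {
    "F16": 16.0,
    "Q4_K_M": 4.8,  # assumption: rough average for this mixed 4-bit format
}


def weight_memory_gb(params_billion: float, bits_per_weight: float) -> float:
    """Memory for model weights only, in GB (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_weight / 8


def fits_in_vram(params_billion: float, fmt: str,
                 vram_gb: float = TOTAL_VRAM_GB) -> bool:
    """True if the weights alone fit; real usage needs extra headroom."""
    return weight_memory_gb(params_billion, BITS_PER_WEIGHT[fmt]) <= vram_gb


for size in (8, 70):
    for fmt in ("Q4_K_M", "F16"):
        gb = weight_memory_gb(size, BITS_PER_WEIGHT[fmt])
        print(f"Llama 3 {size}B {fmt}: ~{gb:.0f} GB weights, "
              f"fits in {TOTAL_VRAM_GB} GB: {fits_in_vram(size, fmt)}")
```

Under these assumptions the 70B model at F16 needs about 140 GB for weights, well beyond 48 GB, while the Q4_K_M version fits with only a few gigabytes to spare.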

Understanding the Data: Tokens, Quantization, and More

Before diving deeper into the analysis, let's clarify some key terms for readers unfamiliar with the jargon:

- Tokenization: Think of it as breaking language down into smaller pieces called tokens, which can be whole words, parts of words, or punctuation marks. LLMs read and produce text one token at a time.

- Quantization: A technique that shrinks an LLM's memory footprint by storing the model's parameters with fewer bits. It's like compressing a file, which lets a given GPU hold a larger model. Q4_K_M is a roughly 4-bit quantization format, while F16 is 16-bit floating point, the unquantized baseline in these benchmarks.

- Generation: This refers to the process where the LLM generates text based on the input provided.

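- Processing: This refers to prompt processing, the step where the LLM reads the input text before it starts generating. It runs over many tokens in parallel, which is why processing speeds are far higher than generation speeds.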
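Processing and generation speeds combine into the response time a user actually experiences. As a rough sketch, total latency is the prompt length divided by processing speed plus the reply length divided by generation speed. The 2000-token prompt and 300-token reply below are hypothetical workload sizes, and the model ignores load time and any other overhead.

```python
def estimate_latency(prompt_tokens: int, output_tokens: int,
                     processing_tps: float, generation_tps: float) -> float:
    """Seconds to read the prompt and then generate the reply."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


# Speeds (tokens/sec) reported in this article for Q4_K_M on 2x RTX 3090.
SPEEDS = {
    "Llama 3 8B": {"processing": 4004.14, "generation": 108.07},
    "Llama 3 70B": {"processing": 393.89, "generation": 16.29},
}

# Hypothetical workload: summarize a 2000-token document in 300 tokens.
for model, s in SPEEDS.items():
    seconds = estimate_latency(2000, 300, s["processing"], s["generation"])
    print(f"{model}: ~{seconds:.1f} s")
```

In both cases generation, not prompt processing, accounts for most of the wait, which is why the generation figure matters most for interactive use.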

Analyzing the Results: Is the Investment Worth it?

Now, let's analyze the results and see if the investment in two NVIDIA 3090_24GB GPUs is justified for AI workloads, specifically for running Llama 3 models locally.

Cost-Benefit Analysis

- Cost: The NVIDIA 3090_24GB GPUs are high-end cards, with a significant price tag. Consider the cost of two cards, additional hardware like a powerful CPU and motherboard, and the cost of electricity for powering these components.

- Benefits: The primary benefit is the incredible performance boost you gain for running local LLMs. This translates to faster text generation, quicker response times, and the ability to work with larger and more complex models.
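To turn this into numbers, one option is to estimate the electricity cost per million generated tokens and the volume at which local hardware pays for itself against a hosted service. Every price and power figure in the sketch below is a hypothetical placeholder, not data from this analysis; substitute your own hardware cost, wall-power draw, electricity rate, and hosted price.

```python
from typing import Optional


def electricity_cost_per_million_tokens(tokens_per_sec: float,
                                        power_watts: float,
                                        price_per_kwh: float) -> float:
    """Electricity cost of generating one million tokens at a steady rate."""
    seconds = 1_000_000 / tokens_per_sec
    kwh = (power_watts / 1000) * (seconds / 3600)
    return kwh * price_per_kwh


def break_even_million_tokens(hardware_cost: float,
                              hosted_price_per_million: float,
                              local_cost_per_million: float) -> Optional[float]:
    """Millions of tokens needed to recoup the hardware cost.

    Returns None when local generation is not cheaper per token.
    """
    saving = hosted_price_per_million - local_cost_per_million
    if saving <= 0:
        return None
    return hardware_cost / saving


# Hypothetical placeholders: replace with your own figures.
HARDWARE_COST = 2000.0  # whole system, in your currency
POWER_WATTS = 800.0     # wall power under load
PRICE_PER_KWH = 0.15    # electricity rate
HOSTED_PRICE = 5.0      # hosted service price per million tokens

local = electricity_cost_per_million_tokens(16.29, POWER_WATTS, PRICE_PER_KWH)
print(f"70B Q4_K_M electricity: {local:.2f} per million tokens")
print("Break-even (millions of tokens):",
      break_even_million_tokens(HARDWARE_COST, HOSTED_PRICE, local))
```

The estimate counts generation time only and ignores idle power, prompt processing, and hardware resale value, so it is a starting point rather than a full ROI model.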

The Verdict:

The decision to invest in a setup like this depends entirely on your needs and budget. If you're a developer working on projects that require running larger LLMs locally, the performance gains offered by the NVIDIA 3090_24GB x2 setup could be worth the investment. However, if your projects only require smaller LLMs or you have a tight budget, exploring more affordable GPU options might be a wiser choice.

Comparison of NVIDIA 3090_24GB x2 with Other Devices

While this article focuses on the NVIDIA 3090_24GB x2 setup, it's important to mention that other powerful devices are available for running LLMs locally. Devices like the Apple M1 chip and other GPUs, such as the NVIDIA GeForce RTX 4090, offer varying levels of performance. However, a thorough comparison between these devices is beyond the scope of this article.

FAQs

What are LLMs?

LLMs, or Large Language Models, are a type of artificial intelligence capable of understanding and generating human-like text. They are trained on massive datasets of text and code, allowing them to perform tasks like translation, summarization, and creative writing.

What is Quantization?

Quantization is a technique used to reduce the memory footprint of LLMs. Think of it like compressing a file: you squeeze the important information into a smaller space. A smaller model can run on less powerful hardware and often generates text faster, usually at the cost of a small loss in output quality.
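The idea can be illustrated with a toy example. The sketch below applies simple symmetric 4-bit quantization to a small block of weights: each value is stored as an integer from -7 to 7 plus one shared scale. This is a deliberately simplified scheme for illustration, not the actual Q4_K_M format, and the sample weights are made up.

```python
def quantize_block(values, bits=4):
    """Map floats to signed integers sharing one scale (symmetric scheme)."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit signed values
    max_abs = max(abs(v) for v in values)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Round to the nearest level and clamp to the representable range.
    q = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return q, scale


def dequantize_block(q, scale):
    """Recover approximate floats from the integers and the shared scale."""
    return [x * scale for x in q]


weights = [0.12, -0.5, 0.33, 0.07]  # made-up sample weights
q, scale = quantize_block(weights)
restored = dequantize_block(q, scale)
errors = [abs(w - r) for w, r in zip(weights, restored)]

print("quantized integers:", q)
print("largest rounding error:", max(errors))
```

Each weight now needs 4 bits instead of 16, and the rounding error per weight is at most half the scale, which is the trade-off between size and precision.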

How do I choose the right device for running LLMs locally?

The right device for running LLMs locally depends on your needs and budget. Consider the size of the LLM you want to run, the performance requirements of your projects, and the cost of the device. Research various options, including GPUs from NVIDIA, AMD, and Apple's M1 chip, to find the best fit for your needs.

Keywords

Large Language Models, LLMs, NVIDIA 3090, GPU, AI Workloads, Llama 3, Token Speed, Quantization, Q4_K_M, F16, Processing, Generation, Performance Analysis, Cost-Benefit Analysis, ROI, Local AI, Text Generation