ROI Analysis: Justifying the Investment in NVIDIA 3090 24GB for AI Workloads

Introduction

The world of artificial intelligence (AI) is rapidly evolving, driven by advancements in large language models (LLMs). LLMs, like the popular GPT-3 and BLOOM, have revolutionized natural language processing (NLP) by enabling tasks like text generation, translation, and summarization. But these powerful models require significant computing resources, particularly GPUs, to operate efficiently.

This raises the question: is the NVIDIA 3090_24GB worth the investment for AI workloads, specifically for running LLMs? This article looks at the performance of the 3090_24GB on popular LLMs using real-world benchmarks, to help you make an informed decision about your AI hardware investments.

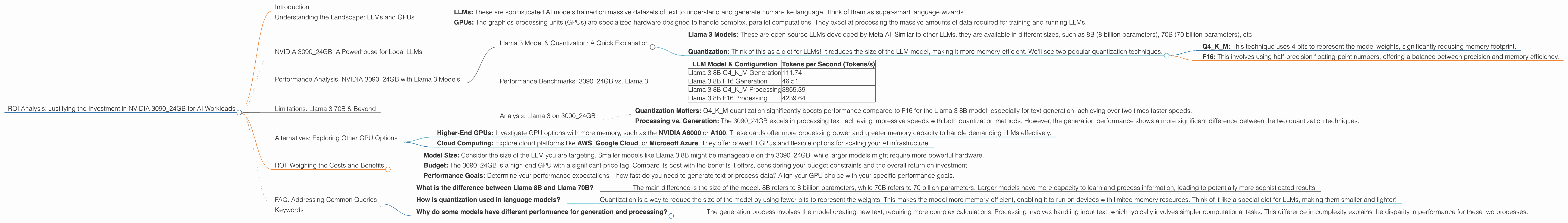

Understanding the Landscape: LLMs and GPUs

Let's start by understanding the core components involved:

- LLMs: These are sophisticated AI models trained on massive datasets of text to understand and generate human-like language. Think of them as super-smart language wizards.

- GPUs: Graphics processing units (GPUs) are specialized hardware designed to handle large numbers of parallel computations. They excel at the matrix arithmetic that dominates both training and running LLMs.

Think of it this way: LLMs are like fancy chefs who need high-end equipment – that's where the GPUs step in. They are the ovens, blenders, and other appliances that make the magic happen.

Now, let's dive into the specifics of the NVIDIA 3090_24GB and its performance with various LLMs.

NVIDIA 3090_24GB: A Powerhouse for Local LLMs

The NVIDIA 3090_24GB is a beast of a GPU, boasting phenomenal performance and a generous 24GB of GDDR6X memory. This combination makes it a popular choice for AI applications, including running LLMs locally.

Performance Analysis: NVIDIA 3090_24GB with Llama 3 Models

We'll focus on the Llama 3 LLM family, showcasing the 3090_24GB's capabilities with different model sizes and configurations.

Llama 3 Model & Quantization: A Quick Explanation

- Llama 3 Models: These are open-source LLMs developed by Meta AI. Similar to other LLMs, they are available in different sizes, such as 8B (8 billion parameters), 70B (70 billion parameters), etc.

- Quantization: Think of this as a diet for LLMs! It stores the model weights with fewer bits, which shrinks the model and makes it more memory-efficient. The benchmarks below use two weight formats:

- Q4_K_M: A 4-bit quantization format that stores weights at roughly 4 bits each, significantly reducing the memory footprint compared to F16.

- F16: Half-precision (16-bit) floating-point weights. This is the unquantized baseline here: it preserves more precision but needs about 2 bytes per weight.
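To make the memory effect concrete, here is a rough back-of-the-envelope sketch. It counts weights only and ignores the KV cache and runtime overhead. The figure of 4.5 bits per weight for a 4-bit format is an assumption for illustration (such formats store some extra scaling data), not a measured value.

```python
def weight_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Approximate memory for the weights alone, in GB (1 GB = 1e9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


PARAMS_8B = 8e9  # nominal parameter count for Llama 3 8B

f16_gb = weight_memory_gb(PARAMS_8B, 16)   # half precision: 16 bits per weight
q4_gb = weight_memory_gb(PARAMS_8B, 4.5)   # ASSUMED average for a 4-bit format

print(f"F16 weights:   ~{f16_gb:.1f} GB")
print(f"4-bit weights: ~{q4_gb:.1f} GB (assuming 4.5 bits/weight)")
```

Under these assumptions, both variants of the 8B model fit within 24GB, with the 4-bit version leaving far more headroom for context.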

Performance Benchmarks: 3090_24GB vs. Llama 3

| LLM Model & Configuration | Tokens per Second (Tokens/s) |
|---|---|
| Llama 3 8B Q4_K_M Generation | 111.74 |
| Llama 3 8B F16 Generation | 46.51 |
| Llama 3 8B Q4_K_M Processing | 3865.39 |
| Llama 3 8B F16 Processing | 4239.64 |

Notes:

- Generation: This refers to the speed at which the model generates text output.

- Processing: This refers to the speed at which the model processes input text.
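The two rates combine into a rough end-to-end response time: input tokens are consumed at the processing rate, output tokens are produced at the generation rate. The sketch below uses the benchmark numbers from the table; the prompt and output lengths are hypothetical, and real latency will also include overheads not captured here.

```python
# Tokens per second from the benchmark table above.
PROCESSING_TPS = {"Q4_K_M": 3865.39, "F16": 4239.64}
GENERATION_TPS = {"Q4_K_M": 111.74, "F16": 46.51}


def estimate_seconds(fmt: str, prompt_tokens: int, output_tokens: int) -> float:
    """Rough response time: prompt processing time plus generation time."""
    return (prompt_tokens / PROCESSING_TPS[fmt]
            + output_tokens / GENERATION_TPS[fmt])


# Hypothetical request: 1000-token prompt, 200-token answer.
q4_seconds = estimate_seconds("Q4_K_M", 1000, 200)
f16_seconds = estimate_seconds("F16", 1000, 200)

print(f"Q4_K_M: ~{q4_seconds:.1f} s")
print(f"F16:    ~{f16_seconds:.1f} s")
```

For a request shaped like this one, generation time dominates, so the generation rate is usually the number to watch for interactive use.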

Analysis: Llama 3 on 3090_24GB

Key Observations:

- Quantization Matters for Generation: Q4_K_M generates text about 2.4 times faster than F16 on the Llama 3 8B model (111.74 vs. 46.51 tokens/s).

- Processing vs. Generation: The 3090_24GB processes input at roughly 3,900 to 4,200 tokens/s in both formats, with F16 about 10% faster than Q4_K_M (4239.64 vs. 3865.39 tokens/s). The choice of format therefore matters far more for generation than for processing.
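The relative differences can be checked directly from the table values. This is simple arithmetic on the published numbers, nothing more:

```python
# Tokens per second for Llama 3 8B on the 3090_24GB, from the table above.
gen_q4, gen_f16 = 111.74, 46.51
proc_q4, proc_f16 = 3865.39, 4239.64

generation_ratio = gen_q4 / gen_f16     # Q4_K_M relative to F16, generation
processing_ratio = proc_q4 / proc_f16   # Q4_K_M relative to F16, processing

print(f"Generation: Q4_K_M runs at {generation_ratio:.2f}x the F16 rate")
print(f"Processing: Q4_K_M runs at {processing_ratio:.2f}x the F16 rate")
```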

Conclusion: For the Llama 3 8B model, the 3090_24GB proves to be a powerful machine, particularly when using Q4_K_M quantization. Q4_K_M more than doubles generation speed at the cost of slightly slower input processing, making the card a valuable asset for developers and researchers working with smaller LLMs.

Limitations: Llama 3 70B & Beyond

Unfortunately, the available benchmark data doesn't include performance numbers for the Llama 3 70B model on the 3090_24GB. The likely reason is memory: at F16, 70 billion parameters at 2 bytes each need about 140GB for the weights alone, and even a roughly 4-bit quantization needs well over 24GB. Running a model of this size on a single 3090_24GB would therefore mean offloading part of it to system memory, which typically slows inference considerably.
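A quick fit check illustrates the gap. This is a minimal sketch that counts weights only; the 4.5 bits per weight for a 4-bit format and the 2GB reserved for cache and runtime overhead are assumptions for illustration, not measured values.

```python
GPU_MEMORY_GB = 24.0   # memory on the 3090_24GB
RESERVED_GB = 2.0      # ASSUMED headroom for KV cache and runtime overhead


def fits_in_vram(num_params: float, bits_per_weight: float) -> tuple[float, bool]:
    """Return (approximate weight size in GB, whether it fits on the card)."""
    weights_gb = num_params * bits_per_weight / 8 / 1e9
    return weights_gb, weights_gb + RESERVED_GB <= GPU_MEMORY_GB


results = {}
for name, params in [("Llama 3 8B", 8e9), ("Llama 3 70B", 70e9)]:
    for fmt, bits in [("F16", 16), ("4-bit (assumed 4.5 bits/weight)", 4.5)]:
        size_gb, ok = fits_in_vram(params, bits)
        results[(name, fmt)] = ok
        print(f"{name} {fmt}: ~{size_gb:.1f} GB -> {'fits' if ok else 'does not fit'}")
```

Under these assumptions, both 8B variants fit and neither 70B variant does, which is consistent with the missing 70B benchmark rows.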

Alternatives: Exploring Other GPU Options

While the 3090_24GB is a potent GPU, it might not be the ideal choice for all AI workloads. For larger LLMs like Llama 3 70B, consider:

- Higher-End GPUs: Investigate GPU options with more memory, such as the NVIDIA RTX A6000 (48GB) or A100 (40GB or 80GB). The extra memory capacity is what lets these cards hold larger LLMs, or larger quantized variants, without offloading.

- Cloud Computing: Explore cloud platforms like AWS, Google Cloud, or Microsoft Azure. They offer powerful GPUs and flexible options for scaling your AI infrastructure.

ROI: Weighing the Costs and Benefits

The decision to invest in a specific GPU depends on:

- Model Size: Consider the size of the LLM you are targeting. Smaller models like Llama 3 8B might be manageable on the 3090_24GB, while larger models might require more powerful hardware.

- Budget: The 3090_24GB is a high-end GPU with a significant price tag. Compare its cost with the benefits it offers, considering your budget constraints and the overall return on investment.

- Performance Goals: Determine your performance expectations – how fast do you need to generate text or process data? Align your GPU choice with your specific performance goals.
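One way to put numbers on these factors is a break-even estimate against renting a comparable cloud GPU. The sketch below is illustrative only: every input (purchase price, cloud hourly rate, power draw, electricity price) is a placeholder that you should replace with your own figures.

```python
def break_even_hours(purchase_price: float,
                     cloud_rate_per_hour: float,
                     power_watts: float,
                     electricity_per_kwh: float) -> float:
    """Hours of use after which buying costs less than renting.

    Returns infinity if running locally is not cheaper per hour than the cloud.
    """
    local_cost_per_hour = power_watts / 1000 * electricity_per_kwh
    saving_per_hour = cloud_rate_per_hour - local_cost_per_hour
    if saving_per_hour <= 0:
        return float("inf")
    return purchase_price / saving_per_hour


# PLACEHOLDER inputs, not real quotes: adjust to your market and region.
hours = break_even_hours(
    purchase_price=1000.0,      # hypothetical card price
    cloud_rate_per_hour=0.50,   # hypothetical cloud GPU rental rate
    power_watts=350.0,          # assumed draw under load
    electricity_per_kwh=0.20,   # hypothetical electricity price
)
print(f"Break-even after ~{hours:.0f} hours of use")
```

The estimate leaves out the rest of the machine, resale value, and idle time, so treat it as a starting point rather than a full cost model.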

FAQ: Addressing Common Queries

- What is the difference between Llama 3 8B and Llama 3 70B?

- The main difference is the size of the model. 8B refers to 8 billion parameters, while 70B refers to 70 billion parameters. Larger models have more capacity to learn and process information, leading to potentially more sophisticated results.

- How is quantization used in language models?

- Quantization is a way to reduce the size of the model by using fewer bits to represent the weights. This makes the model more memory-efficient, enabling it to run on devices with limited memory resources. Think of it like a special diet for LLMs, making them smaller and lighter!

- Why do some models have different performance for generation and processing?

- Processing handles all input tokens in parallel, so the GPU's compute units stay busy and throughput is high. Generation produces one token at a time, and each new token requires reading the model weights from memory again, so it is typically limited by memory bandwidth rather than raw compute. This is also why a smaller quantized model generates faster: there is less data to read per token.

Keywords

NVIDIA 3090_24GB, GPU, LLM, Llama 3, AI, Machine Learning, Deep Learning, Text Generation, Processing, Quantization, Q4_K_M, F16, Performance, Benchmarks, ROI, Cloud Computing, AWS, Google Cloud, Microsoft Azure, Memory, GPU Cores, Tokens Per Second, NLP, Natural Language Processing.