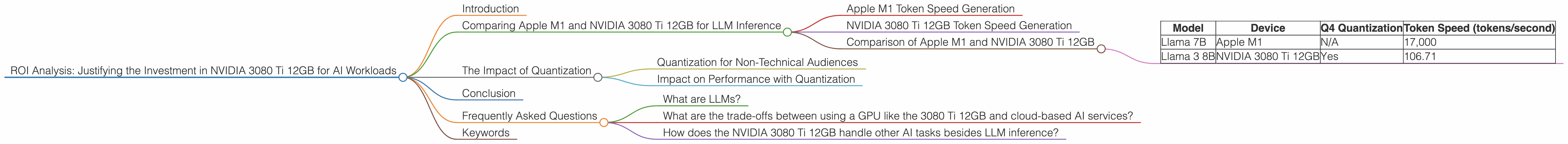

ROI Analysis: Justifying the Investment in NVIDIA 3080 Ti 12GB for AI Workloads

Introduction

For anyone working with large language models (LLMs), the quest for computational power is a constant battle. These incredibly sophisticated AI systems are hungry for resources, and the right hardware can make all the difference in pushing your projects forward. Enter the NVIDIA GeForce RTX 3080 Ti 12GB, a beast of a graphics card that's become a popular choice for AI workloads. But is it worth the investment? This article delves into the performance of the 3080 Ti 12GB for different LLM models, helping you analyze the return on investment (ROI) and determine if this powerful GPU aligns with your AI ambitions.

Comparing Apple M1 and NVIDIA 3080 Ti 12GB for LLM Inference

Let's dive straight into the heart of the matter. How does the 3080 Ti 12GB stack up against other popular options for LLM inference? We'll focus on comparing it to the Apple M1 chip, another powerful contender in the AI arena.

Apple M1 Token Speed Generation

The Apple M1 chip is known for its power efficiency, and it handles smaller LLMs well. The figure reported here for the Llama 7B model is 17,000 tokens per second, although the quantization level and the benchmark stage behind that number are not specified. Keep in mind that a token is a fragment of text, often shorter than a word, so tokens per second is not the same thing as words per second.

NVIDIA 3080 Ti 12GB Token Speed Generation

The 3080 Ti 12GB is aimed at larger and more demanding LLMs. For the Llama 3 8B model, it achieves a token generation speed of 106.71 tokens per second when using Q4 quantization. Because this figure is for a different model than the M1 figure above, the two numbers are not a like-for-like comparison.

Comparison of Apple M1 and NVIDIA 3080 Ti 12GB

| Model | Device | Q4 Quantization | Token Speed (tokens/second) |
|---|---|---|---|
| Llama 7B | Apple M1 | N/A | 17,000 |
| Llama 3 8B | NVIDIA 3080 Ti 12GB | Yes | 106.71 |

Note: The table does not include data for the Llama 3 8B model on the Apple M1 or for any other LLM models on either device due to missing data. This highlights the need to research specific models and devices when making investment decisions.

The Impact of Quantization

Quantization is a powerful technique that allows AI models to run faster and with less memory. It's like using smaller building blocks to construct a complex object. The 3080 Ti 12GB results in this article use Q4 (4-bit) quantization, which shrinks the model's weights so that an 8B-parameter model fits comfortably within the card's 12 GB of VRAM.
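To make the memory argument concrete, here is a rough back-of-the-envelope sketch. It assumes exactly 8 billion parameters and counts only the weights. The helper `weight_memory_gb` is a hypothetical name used for illustration, and real Q4 formats store extra scaling data, so actual files are somewhat larger than this estimate.

```python
def weight_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Approximate memory for model weights alone, in gigabytes (10^9 bytes).

    Ignores activations, the KV cache, and per-block scale factors,
    so treat the result as a lower bound rather than a measurement.
    """
    return num_params * bits_per_weight / 8 / 1e9


params = 8e9        # assumed parameter count for an "8B" model
vram_gb = 12        # VRAM on the 3080 Ti 12GB

fp16_gb = weight_memory_gb(params, 16)  # 16-bit weights
q4_gb = weight_memory_gb(params, 4)     # 4-bit weights

for label, size in [("FP16", fp16_gb), ("4-bit", q4_gb)]:
    verdict = "fits" if size < vram_gb else "does not fit"
    print(f"{label}: ~{size:.1f} GB of weights, {verdict} in {vram_gb} GB")
```

Under these assumptions the 16-bit weights alone exceed the card's memory, while the 4-bit weights leave room for the context cache and other overhead.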

Quantization for Non-Technical Audiences

Imagine you're building a model train set. You can use large, detailed pieces, or you can use smaller, more stylized pieces. The smaller pieces might not be as detailed, but they allow you to build a much bigger and more complex train set. Quantization works similarly by using a simplified representation of the model, allowing it to run faster and consume less memory while still being powerful.
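For readers who want to see the mechanics behind the analogy, the following is a minimal sketch of 4-bit quantization using a single shared scale. It is a simplified illustration, not the scheme used by any particular inference library, and the function names and sample weights are made up for the example.

```python
def quantize_4bit(values):
    """Map floats to integer codes in [-8, 7] using one shared scale."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    # Round to the nearest code and clamp to the signed 4-bit range.
    codes = [max(-8, min(7, round(v / scale))) for v in values]
    return codes, scale


def dequantize(codes, scale):
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]


weights = [0.12, -0.53, 0.98, -0.05, 0.33]   # made-up sample weights
codes, scale = quantize_4bit(weights)
restored = dequantize(codes, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("codes:   ", codes)
print("restored:", [round(r, 3) for r in restored])
print("largest rounding error:", round(max_error, 4))
```

Each weight is stored as a small integer plus one shared scale, which is where the memory saving comes from. The price is a rounding error of at most half a scale step per weight.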

Impact on Performance with Quantization

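Quantization plays a crucial role in the performance of the 3080 Ti 12GB. When using Q4 quantization for the Llama 3 8B model, the GPU achieves a processing speed of 3556.67 tokens per second. This most likely refers to prompt processing, where the input tokens are handled in parallel, which is why it is so much higher than the 106.71 tokens per second generation speed, where tokens are produced one at a time. No unquantized baseline is available for this card, so these numbers show what the GPU delivers with Q4 rather than how much speedup quantization itself provides.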
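The two throughput figures can be combined into a rough response-time estimate. This sketch assumes the 3556.67 figure is prompt processing speed and the 106.71 figure is generation speed, and that both stay constant regardless of prompt length, which is a simplification. The prompt and output sizes are arbitrary examples.

```python
PROMPT_TOKENS_PER_S = 3556.67      # processing speed quoted in this article
GENERATION_TOKENS_PER_S = 106.71   # generation speed quoted in this article


def estimate_latency_s(prompt_tokens: int, output_tokens: int) -> float:
    """Estimate end-to-end seconds for one request: read the prompt, then generate."""
    prompt_time = prompt_tokens / PROMPT_TOKENS_PER_S
    generation_time = output_tokens / GENERATION_TOKENS_PER_S
    return prompt_time + generation_time


# Example request: a 1,000-token prompt and a 200-token answer (arbitrary sizes).
latency = estimate_latency_s(prompt_tokens=1000, output_tokens=200)
print(f"Estimated latency: {latency:.2f} s")
```

Even with a prompt five times longer than the answer, most of the time goes to generation, so generation speed is usually the number to watch for interactive use.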

Conclusion

The NVIDIA 3080 Ti 12GB shines in its ability to handle larger LLM models efficiently. It's a compelling choice for developers and researchers working with demanding AI workloads. However, the choice of hardware depends on several factors, including the specific LLM model, project budget, and desired performance levels.

Frequently Asked Questions

What are LLMs?

LLMs are large language models, a type of artificial intelligence capable of understanding and generating human-like text. These models are trained on massive datasets of text and code, enabling them to perform various tasks, such as translation, summarization, and code generation.

What are the trade-offs between using a GPU like the 3080 Ti 12GB and cloud-based AI services?

Using a GPU like the 3080 Ti 12GB provides more control and potentially lower costs in the long run. However, cloud services offer greater scalability and flexibility, making them a good option for projects with rapidly changing requirements.
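One way to frame this trade-off is a simple break-even calculation. Every number below (card price, power draw, electricity rate, cloud hourly rate) is a hypothetical placeholder rather than a quoted price, and the sketch ignores resale value, the rest of the PC, and idle time. Substitute your own figures.

```python
def break_even_hours(gpu_price, power_kw, electricity_per_kwh, cloud_rate_per_hour):
    """Hours of use after which buying the GPU costs less than renting.

    Returns None when running locally is not cheaper per hour than the cloud.
    """
    local_cost_per_hour = power_kw * electricity_per_kwh
    savings_per_hour = cloud_rate_per_hour - local_cost_per_hour
    if savings_per_hour <= 0:
        return None
    return gpu_price / savings_per_hour


# All inputs are assumed placeholder values, not real quotes.
hours = break_even_hours(
    gpu_price=1200.0,          # purchase price
    power_kw=0.35,             # power draw under load, in kilowatts
    electricity_per_kwh=0.15,  # electricity cost per kWh
    cloud_rate_per_hour=0.60,  # hourly rate for a comparable cloud GPU
)
print(f"Break-even after about {hours:.0f} hours of use")
```

If the GPU would sit idle most of the time, the break-even point may never arrive, which is the case where cloud services make more sense.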

How does the NVIDIA 3080 Ti 12GB handle other AI tasks besides LLM inference?

The 3080 Ti 12GB is also well-suited for other AI tasks, such as image processing, video editing, and machine learning. Its powerful processing capabilities make it a versatile tool for various AI-related applications.

Keywords

NVIDIA 3080 Ti 12GB, LLM inference, Apple M1, AI workloads, token speed, quantization, AI performance, ROI, GPU, LLM, Llama 3 8B, llama.cpp, GPU benchmarks, AI model, machine learning.