ROI Analysis: Justifying the Investment in NVIDIA 3080 10GB for AI Workloads

Introduction

The world of large language models (LLMs) is booming! These powerful AI systems can generate text, translate languages, write different kinds of creative content, and answer your questions in an informative way. But running these LLMs locally at a usable speed generally calls for a capable GPU, which can be a significant investment.

This article dives into the ROI (Return on Investment) of the NVIDIA 3080_10GB GPU when used for running LLMs. We'll focus on the popular open-source Llama model and analyze the speed and efficiency of this powerful card.

Whether you're a developer working on AI projects or just a curious tech enthusiast, understanding how a GPU like the 3080_10GB impacts LLM performance can be fascinating. So, buckle up, and let's delve into the numbers!

The NVIDIA 3080_10GB: A Powerhouse for LLMs

The NVIDIA 3080_10GB is a popular choice for gamers and AI enthusiasts alike. Its 10 GB of VRAM and high processing power make it a capable option for smaller AI workloads. But does it live up to the hype for local LLM operations?

Let's explore the performance metrics of the 3080_10GB for Llama models, focusing on speed and efficiency, to see if this GPU is worth the investment.

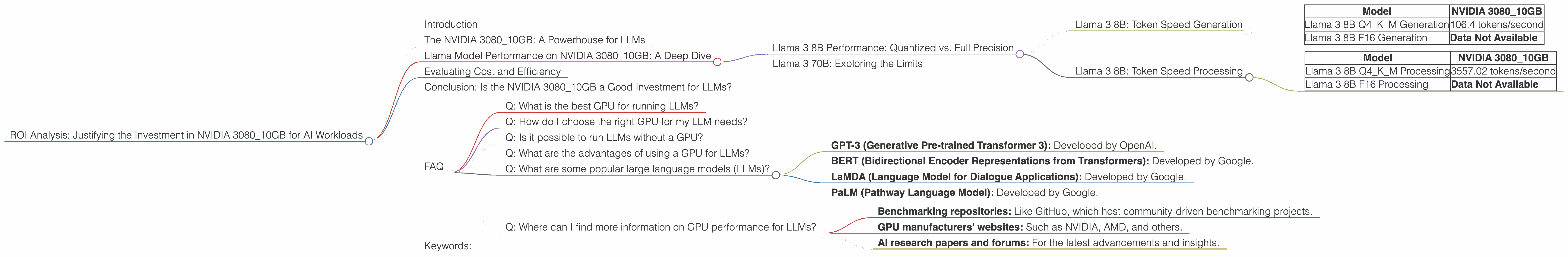

Llama Model Performance on NVIDIA 3080_10GB: A Deep Dive

Llama 3 8B Performance: Quantized vs. Full Precision

We'll start our analysis by focusing on the Llama 3 8B model, a popular choice for its balance of performance and efficiency. Our data focuses on two primary scenarios:

- Quantized: This refers to reducing the numeric precision of the model's weights so that the model is smaller, loads faster, and needs less memory. We're looking at Q4_K_M, a 4-bit format in the GGUF/llama.cpp naming scheme, where the K marks the k-quant family and the M its medium-size variant.

- Full Precision: This means running the unquantized model with 16-bit floating-point weights (F16), which preserves the original accuracy but needs roughly four times the memory of a 4-bit model and is typically slower.

Llama 3 8B: Token Speed Generation

| Model | Generation speed on NVIDIA 3080_10GB |
|---|---|
| Llama 3 8B Q4_K_M | 106.4 tokens/second |
| Llama 3 8B F16 | Data Not Available |

As you can see, the 3080_10GB shines when running the Llama 3 8B model with Q4_K_M quantization, delivering 106.4 tokens per second. That is far faster than anyone can read, so responses in interactive text generation tasks arrive with little perceptible wait.

What is Quantization? Imagine you have a massive book filled with details about every word in a language. That's like a full-precision model. Quantization is like summarizing the book into a smaller, more manageable version while still retaining key information. This smaller version is faster to read (process) and doesn't take up as much space (memory).
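To make the analogy concrete, here is a minimal sketch of symmetric round-to-nearest quantization on one small block of weights. It is a deliberately simplified illustration, not the actual Q4_K_M algorithm, and the function names and sample values are made up for this example.

```python
def quantize_block(weights, bits=4):
    """Map a block of floats to small signed integers plus one shared scale."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit signed codes
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Round each weight to the nearest code and clamp to the valid range.
    codes = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return scale, codes


def dequantize_block(scale, codes):
    """Recover approximate float weights from the integer codes."""
    return [scale * c for c in codes]


# Hypothetical weights, chosen only for illustration.
block = [0.12, -0.50, 0.33, 0.07, -0.21, 0.49, -0.02, 0.28]
scale, codes = quantize_block(block)
restored = dequantize_block(scale, codes)
max_error = max(abs(a - b) for a, b in zip(block, restored))

print("codes:", codes)
print(f"scale: {scale:.4f}, largest rounding error: {max_error:.4f}")
```

Each weight is stored as a 4-bit code instead of a 16-bit float, and the rounding error stays within half of the scale. Real formats add refinements such as per-block minimums and mixed bit widths.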

Note: We lack data for the Llama 3 8B model running with 16-bit precision (F16) on the 3080_10GB. The most likely reason is memory: 8 billion parameters at 2 bytes each come to roughly 16 GB of weights, more than the card's 10 GB of VRAM. It is also possible that this configuration simply hasn't been benchmarked yet.
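A quick back-of-the-envelope check shows why F16 is a tight fit. The sketch below estimates the size of the weights alone, assuming a nominal 8 billion parameters and ignoring the KV cache, activations, and per-block quantization overhead; the function name is ours.

```python
def weight_memory_gb(n_params, bits_per_weight):
    """Approximate size of the weights alone, in GB (10^9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


VRAM_GB = 10        # memory on the 3080_10GB
N_PARAMS = 8e9      # nominal parameter count for Llama 3 8B (assumption)

for label, bits in [("F16", 16), ("4-bit", 4)]:
    size = weight_memory_gb(N_PARAMS, bits)
    verdict = "fits" if size < VRAM_GB else "does not fit"
    print(f"{label}: about {size:.0f} GB of weights, {verdict} in {VRAM_GB} GB")
```

Real 4-bit formats use slightly more than 4 bits per weight on average, so treat the 4-bit figure as a lower bound.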

Llama 3 8B: Token Speed Processing

| Model | Processing speed on NVIDIA 3080_10GB |
|---|---|
| Llama 3 8B Q4_K_M | 3557.02 tokens/second |
| Llama 3 8B F16 | Data Not Available |

The 3080_10GB excels in processing tokens with the quantized Llama 3 8B model. It achieves a remarkable 3557.02 tokens per second, indicating its ability to handle large amounts of text data very efficiently.

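What is Token Processing? Think of text as a string of beads. Each bead represents a token, a meaningful unit of language. In benchmarks like this one, token processing usually refers to the step where the model reads the tokens of your prompt before it starts generating a reply. Because the whole prompt is available up front, those tokens can be handled in parallel, which is why this figure is so much higher than the generation speed.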

Note: Again, we lack data for F16 processing. The likely cause is the same as for generation: the unquantized model needs more memory than this GPU has.
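The two figures can be combined into a rough latency estimate for a single request. This sketch assumes throughput stays constant regardless of prompt or output length, which is a simplification; the 1000-token prompt and 300-token reply are hypothetical.

```python
PROMPT_TOKENS_PER_S = 3557.02  # processing speed from the table above
GEN_TOKENS_PER_S = 106.4       # generation speed from the earlier table


def estimate_latency_s(prompt_tokens, output_tokens):
    """Return (prompt time, generation time) in seconds for one request."""
    prompt_time = prompt_tokens / PROMPT_TOKENS_PER_S
    gen_time = output_tokens / GEN_TOKENS_PER_S
    return prompt_time, gen_time


# Hypothetical request: a 1000-token prompt and a 300-token reply.
prompt_time, gen_time = estimate_latency_s(1000, 300)
print(f"prompt: {prompt_time:.2f} s, generation: {gen_time:.2f} s, "
      f"total: {prompt_time + gen_time:.2f} s")
```

For a request of this shape, generation accounts for most of the wait, so the generation speed is the number to watch for chat-style use.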

Llama 3 70B: Exploring the Limits

Let's now venture to the larger Llama 3 70B model. This beast packs a punch with its massive size, but it also demands more resources. How does the 3080_10GB fare with this larger model?

Important Note: There are no benchmarks for the Llama 3 70B model on the 3080_10GB, either quantized or at full precision. The likely reason is memory: even at 4 bits per weight, 70 billion parameters need about 35 GB for the weights alone, several times the card's 10 GB of VRAM.

Key Takeaway: For those looking to run the Llama 3 70B model, the 3080_10GB is unlikely to be the right choice. It excels with the 8B model, but a model of this size calls for a GPU with far more memory, several GPUs, or adjustments to the model (e.g., using even more aggressive quantization methods).
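To see how aggressive the quantization would have to be, the sketch below works out the average number of bits per weight that would let a 70B model fit in 10 GB. It assumes a nominal 70 billion parameters and optimistically gives all of the VRAM to the weights, leaving nothing for the KV cache or activations.

```python
def max_bits_per_weight(vram_gb, n_params):
    """Largest average bit width at which the weights alone fit in VRAM."""
    return vram_gb * 1e9 * 8 / n_params


def weight_memory_gb(n_params, bits_per_weight):
    """Approximate size of the weights alone, in GB (10^9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


N_PARAMS_70B = 70e9  # nominal parameter count (assumption)

budget = max_bits_per_weight(10, N_PARAMS_70B)
size_at_4_bits = weight_memory_gb(N_PARAMS_70B, 4)
print(f"bit budget: {budget:.2f} bits per weight")
print(f"size at 4 bits per weight: {size_at_4_bits:.0f} GB")
```

The budget comes out at just over one bit per weight, well below the 4-bit format benchmarked here, so more aggressive quantization alone is unlikely to make a 70B model fit on this card.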

Evaluating Cost and Efficiency

The cost of running a GPU-powered LLM locally can be a significant factor in deciding if the investment is worthwhile. The 3080_10GB is a relatively affordable GPU, especially compared to high-end options, making it attractive for cost-conscious users.

However, the lack of 70B model data suggests potential limitations. If you plan to work with larger LLMs, you might need to consider upgrading to a higher-tier GPU in the future.
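One way to put a number on the return is to compare the electricity cost of generating tokens locally with the price of a hosted API, then see how many tokens it takes to pay back the card. The sketch below uses the measured generation speed; the GPU price, power draw, electricity rate, and API price are all placeholder assumptions that you should replace with your own figures.

```python
GEN_TOKENS_PER_S = 106.4          # generation speed from the benchmark table
GPU_PRICE_USD = 500.0             # hypothetical purchase price
POWER_DRAW_W = 320.0              # assumed power draw under load
ELECTRICITY_USD_PER_KWH = 0.15    # hypothetical electricity rate
API_USD_PER_M_TOKENS = 0.50       # hypothetical hosted-API price


def electricity_cost_per_million_tokens(tokens_per_s, watts, usd_per_kwh):
    """Electricity cost in USD to generate one million tokens locally."""
    seconds = 1e6 / tokens_per_s
    kwh = watts * seconds / 3600 / 1000
    return kwh * usd_per_kwh


def break_even_million_tokens(gpu_price, api_cost_per_m, local_cost_per_m):
    """Millions of tokens needed to recoup the GPU price, or None if never."""
    saving = api_cost_per_m - local_cost_per_m
    if saving <= 0:
        return None
    return gpu_price / saving


local_cost = electricity_cost_per_million_tokens(
    GEN_TOKENS_PER_S, POWER_DRAW_W, ELECTRICITY_USD_PER_KWH
)
break_even = break_even_million_tokens(
    GPU_PRICE_USD, API_USD_PER_M_TOKENS, local_cost
)
print(f"local electricity cost: ${local_cost:.3f} per million tokens")
if break_even is None:
    print("no break-even: the hosted API is cheaper per token")
else:
    print(f"break-even after about {break_even:.0f} million tokens")
```

This ignores the rest of the machine, idle power, and resale value, and it only counts generated tokens, so treat the result as a rough order of magnitude rather than a precise payback figure.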

Think of it this way: Imagine you're building a house. The 3080_10GB is like a sturdy foundation for a comfortable, manageable home. It works well for smaller families. But if your family grows significantly, you might need to expand the foundation and build more rooms – just like upgrading your GPU for larger models.

Conclusion: Is the NVIDIA 3080_10GB a Good Investment for LLMs?

The NVIDIA 3080_10GB offers an efficient and powerful solution for local LLM development and experimentation, particularly for the Llama 3 8B model. Its performance with quantization is impressive.

However, its ability to handle larger models like the Llama 3 70B is uncertain. It might be a good option for an initial investment, but you might need to upgrade later for more complex AI projects.

FAQ

Q: What is the best GPU for running LLMs?

A: The "best" GPU depends on your budget and the specific LLM you want to run. For smaller models like Llama 3 8B, the 3080_10GB can be an excellent choice. For larger models like 70B or even larger, you might need a higher-end GPU or potentially a more powerful workstation.

Q: How do I choose the right GPU for my LLM needs?

A: Consider the size of the model you want to run, your budget, and the performance requirements of your project. Research benchmarks and reviews to compare different GPU options and see which one best suits your needs.

Q: Is it possible to run LLMs without a GPU?

A: Yes, but it will be much slower. You can run LLMs on a CPU, but it's not recommended for anything but basic experimentation. GPUs are significantly faster due to their parallel processing capabilities.

Q: What are the advantages of using a GPU for LLMs?

A: GPUs are optimized for parallel processing, which is essential for the complex mathematical operations involved in LLM training and inference. They provide significantly faster speeds compared to CPUs, making them ideal for working with large language models.

Q: What are some popular large language models (LLMs)?

A: Some of the most popular LLMs include:

- GPT-3 (Generative Pre-trained Transformer 3): Developed by OpenAI.
- BERT (Bidirectional Encoder Representations from Transformers): Developed by Google.
- LaMDA (Language Model for Dialogue Applications): Developed by Google.
- PaLM (Pathways Language Model): Developed by Google.

Q: Where can I find more information on GPU performance for LLMs?

A: Several online resources provide detailed information on GPU performance for LLMs, including:

- Benchmarking repositories: Community-driven benchmarking projects hosted on sites like GitHub.
- GPU manufacturers' websites: Such as NVIDIA, AMD, and others.
- AI research papers and forums: For the latest advancements and insights.
