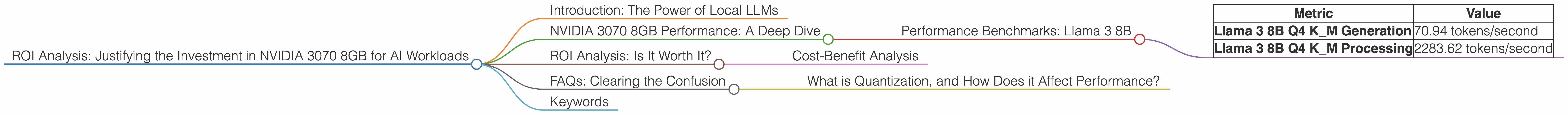

ROI Analysis: Justifying the Investment in NVIDIA 3070 8GB for AI Workloads

Introduction: The Power of Local LLMs

The world of Artificial Intelligence (AI) is buzzing with excitement, and a key driver of this enthusiasm is the rise of Large Language Models (LLMs). These systems can generate text, translate languages, and answer your questions in an informative way; think ChatGPT and Bard. Running these models yourself requires capable hardware, and that's where the NVIDIA GeForce RTX 3070 8GB steps into the spotlight.

This article delves into the ROI of investing in an NVIDIA 3070 8GB for running local LLMs. We'll look at how this GPU performs when generating text with the Llama 3 8B model, analyze the data, and help you decide if this investment is right for your AI projects.

NVIDIA 3070 8GB Performance: A Deep Dive

The NVIDIA GeForce RTX 3070 8GB packs a punch, with 8GB of GDDR6 memory and 5888 CUDA cores. This makes it a popular choice for AI tasks, especially for those who want to run LLMs locally and enjoy the benefits of low latency and privacy. But how does it fare in practice?

Performance Benchmarks: Llama 3 8B

We'll start by examining the performance of the NVIDIA 3070 8GB with the Llama 3 8B model. This model, with its 8 billion parameters, is a popular choice for those wanting a balance of performance and resource usage. We'll consider two key metrics:

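- Token Generation Speed: How many new tokens per second the GPU produces while writing a response. This is the number you feel as the speed at which text appears on screen.

- Processing Speed: How many tokens per second the GPU can work through when reading the input prompt, before it starts writing. Because the whole prompt can be processed in parallel, this figure is usually much higher than the generation speed.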

The following table summarizes the performance of the NVIDIA 3070 8GB with the Llama 3 8B model.

| Metric | Value |
|---|---|
| Llama 3 8B Q4_K_M Generation | 70.94 tokens/second |
| Llama 3 8B Q4_K_M Processing | 2283.62 tokens/second |

Notes:

- The Llama 3 8B model is tested with Q4_K_M, a 4-bit quantization. Quantization reduces the size of the model by representing each weight with fewer bits, which lowers memory requirements and speeds up processing at the cost of a small loss in precision. This is like using a simpler vocabulary to express the same information, but with a smaller dictionary.

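- We unfortunately don't have data for the NVIDIA 3070 8GB with Llama 3 8B in F16 (16-bit floating point) precision. F16 is the larger format, not the smaller one: at 2 bytes per weight, an 8-billion-parameter model needs roughly 16 GB for its weights alone, which is about twice what this card's 8 GB of VRAM can hold.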
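To make the memory point concrete, here is a minimal back-of-the-envelope sketch in Python. It counts weights only and ignores the context cache and runtime overhead, so real usage will be higher. The 4.5 bits-per-weight figure is an illustrative assumption for a 4-bit scheme (real Q4_K_M files average somewhat more than 4 bits per weight because they also store scaling factors), and the function names are made up for this example.

```python
def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate memory needed for the model weights alone, in GB (10^9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


def fits_in_vram(n_params: float, bits_per_weight: float, vram_gb: float) -> bool:
    """True if the weights alone are smaller than the available VRAM."""
    return weight_memory_gb(n_params, bits_per_weight) < vram_gb


N_PARAMS = 8e9   # "8B" taken at face value
VRAM_GB = 8.0    # RTX 3070 8GB

# Bits per weight: 16 for F16; 4.5 is an ASSUMED illustrative value for 4-bit quantization.
formats = {"F16": 16.0, "4-bit (assumed 4.5 bits/weight)": 4.5}

for name, bits in formats.items():
    size = weight_memory_gb(N_PARAMS, bits)
    verdict = "fits" if fits_in_vram(N_PARAMS, bits, VRAM_GB) else "does not fit"
    print(f"{name}: ~{size:.1f} GB of weights, {verdict} in {VRAM_GB:.0f} GB VRAM")
```

Under these assumptions the 4-bit model leaves a few gigabytes of headroom on the card, while the F16 version would have to spill into system memory.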

The benchmark figures show that the NVIDIA 3070 8GB handles the Llama 3 8B model comfortably at Q4_K_M: roughly 71 tokens per second of generation is well above the pace at which most people read. With only 8 GB of VRAM it is not the right card for much larger models, but its performance is quite respectable for a mid-range GPU.
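If you want a feel for what these throughput figures mean in seconds, the sketch below turns them into a rough response-time estimate. It assumes the "Processing" figure is the speed at which the prompt is read and the "Generation" figure is the speed at which the answer is written, and it ignores model loading and other overhead. The function name and the example token counts are made up for illustration.

```python
# Throughput figures from the benchmark table above (tokens per second).
GENERATION_TPS = 70.94
PROCESSING_TPS = 2283.62


def estimate_response_seconds(
    prompt_tokens: int,
    output_tokens: int,
    processing_tps: float = PROCESSING_TPS,
    generation_tps: float = GENERATION_TPS,
) -> float:
    """Rough end-to-end time: read the prompt, then write the answer."""
    prompt_time = prompt_tokens / processing_tps
    generation_time = output_tokens / generation_tps
    return prompt_time + generation_time


# Hypothetical request: a 1,000-token prompt and a 500-token answer.
seconds = estimate_response_seconds(prompt_tokens=1000, output_tokens=500)
print(f"Estimated response time: {seconds:.1f} s")
```

For most chat-style requests the generation term dominates, so the generation speed is the figure to watch when comparing GPUs.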

ROI Analysis: Is It Worth It?

Now, let's talk about the million-dollar question: Is investing in an NVIDIA 3070 8GB worthwhile for your AI workload? The answer depends on a few key factors:

- Your specific use case: The speed and accuracy you need for your AI tasks will influence your decision. If you're dealing with complex tasks, like generating long-form content or running a business chatbot, a more powerful GPU might be necessary.

- Budget: The NVIDIA 3070 8GB offers a good balance of performance and price, but there are cheaper options available. If you're on a tighter budget, you might consider other GPUs or explore cloud-based solutions.

- Your level of technical expertise: Setting up and configuring local LLMs can be technically involved. If you're new to this, consider the learning curve and potential support needs.

Cost-Benefit Analysis

Think of it this way: the NVIDIA 3070 8GB is like having your own AI assistant readily available, without the need for constant internet access. This can save you time and money on cloud computing costs. However, if your needs are very simple and you're only occasionally using LLMs, a less powerful GPU or a free cloud-based option might be a better fit.
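One way to put numbers on this trade-off is a simple break-even calculation: how many hours of use does it take before the card has paid for itself compared with renting a cloud GPU? The sketch below is only an illustration. The purchase price, cloud rate, and electricity price are hypothetical placeholders that you should replace with your own figures; the 220 W power draw is the card's rated board power and is used as a worst-case estimate.

```python
from typing import Optional


def break_even_hours(
    gpu_price: float,
    cloud_rate_per_hour: float,
    power_watts: float,
    electricity_per_kwh: float,
) -> Optional[float]:
    """Hours of use after which buying the GPU is cheaper than renting.

    Returns None when running locally costs as much per hour as the cloud
    (or more), in which case the purchase never pays off.
    """
    local_cost_per_hour = power_watts / 1000 * electricity_per_kwh
    saving_per_hour = cloud_rate_per_hour - local_cost_per_hour
    if saving_per_hour <= 0:
        return None
    return gpu_price / saving_per_hour


# HYPOTHETICAL inputs, for illustration only.
hours = break_even_hours(
    gpu_price=400.0,           # assumed purchase price
    cloud_rate_per_hour=0.50,  # assumed cloud GPU rental rate
    power_watts=220.0,         # rated board power of the RTX 3070
    electricity_per_kwh=0.15,  # assumed electricity price
)

if hours is None:
    print("With these inputs the GPU never pays for itself.")
else:
    print(f"Break-even after about {hours:.0f} hours of use")
```

If you only run a model for a few hours a month, the break-even point can be years away. With daily use it arrives much sooner.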

FAQs: Clearing the Confusion

What is Quantization, and How Does it Affect Performance?

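Quantization is a technique used to compress neural networks. It reduces the number of bits used to represent each weight, which lets the model use less memory and usually run faster. The trade-off is precision: the fewer bits you keep, the more each weight is rounded, and very aggressive quantization can noticeably hurt output quality. Think of it as using a more compact language to describe the same information.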

Here's an analogy: Imagine describing a picture using only a few words. You might say "a red car, green grass, blue sky." This is like using a low-bit quantization, with limited information. Now imagine describing the picture in detail, with every color, shape, and texture. That's similar to using a higher bit quantization, with more information and more detail.
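The same idea can be shown with a toy example. The sketch below rounds a handful of made-up weights to 4-bit and 8-bit integers and measures how far the restored values drift from the originals. It uses a deliberately simple scheme with one scale for the whole list; it is not the Q4_K_M method used in the benchmark, which is more elaborate.

```python
def quantize(values, bits):
    """Symmetric uniform quantization to signed integers of the given width."""
    qmax = 2 ** (bits - 1) - 1            # e.g. 7 for 4 bits, 127 for 8 bits
    max_abs = max(abs(v) for v in values)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    codes = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return codes, scale


def dequantize(codes, scale):
    """Map the integer codes back to approximate floating-point values."""
    return [c * scale for c in codes]


def max_error(values, bits):
    """Largest absolute difference between the original and restored values."""
    codes, scale = quantize(values, bits)
    restored = dequantize(codes, scale)
    return max(abs(a - b) for a, b in zip(values, restored))


# Made-up weights, for illustration only.
weights = [0.12, -0.53, 0.98, -0.07, 0.33, -0.91]

for bits in (4, 8):
    print(f"{bits}-bit: max rounding error = {max_error(weights, bits):.4f}")
```

Fewer bits means coarser steps between representable values, which is exactly the "few words" end of the picture analogy.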

Keywords

NVIDIA 3070 8GB, AI Workloads, Local LLMs, Llama 3, Token Speed, GPU Performance, ROI, Cost-Benefit Analysis, Quantization, Processing Speed, NVIDIA, GPU, Llama, GPU Benchmarks, Inference, Text Generation, Large Language Models, LLMs, AI, Machine Learning, Deep Learning.