Optimizing Llama3 8B for NVIDIA RTX A6000 48GB: A Step-by-Step Approach

Introduction

The world of Large Language Models (LLMs) is buzzing with excitement! These powerful AI models can generate text, translate languages, write different kinds of creative content, and answer your questions in an informative way. But running them locally can be a challenge: you need a capable machine, above all a GPU with enough memory, to handle their processing requirements.

This article will take you on a journey into LLM optimization, focusing on the Llama3 8B model and its performance on the NVIDIA RTX A6000 48GB GPU. We'll dive into practical recommendations for getting the most out of your local LLM setup and explore the trade-offs between model size, quantization, and speed.

Think of it as a treasure map leading you to the best possible performance for your Local Llama 3 adventures!

Performance Analysis: Token Generation Speed Benchmarks

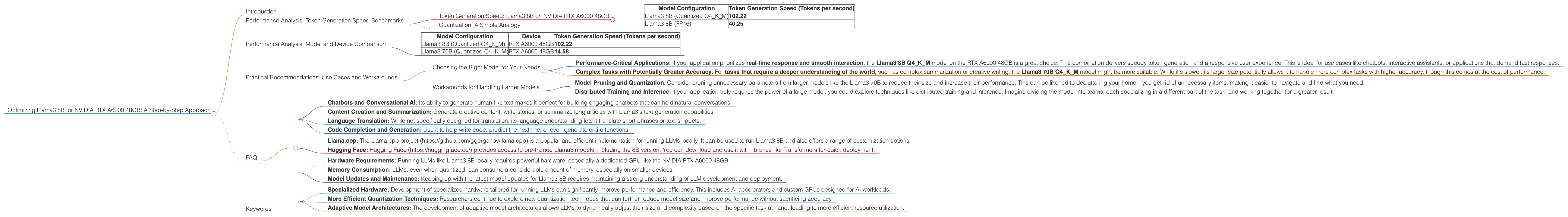

Token Generation Speed: Llama3 8B on NVIDIA RTX A6000 48GB

We'll start with the core measure of LLM performance: token generation speed. It measures how many new tokens your local LLM produces per second, which determines how smoothly your AI interactions flow.

The benchmark focuses on the Llama3 8B model, a popular choice for its balance between model size and capability. The results, compiled using the Llama.cpp project (https://github.com/ggerganov/llama.cpp), show what's possible with the NVIDIA RTX A6000 48GB GPU:

| Model Configuration | Token Generation Speed (tokens/s) |
|---|---|
| Llama3 8B (Q4_K_M quantized) | 102.22 |
| Llama3 8B (FP16) | 40.25 |

The table shows that the quantized Q4_K_M version of Llama3 8B generates tokens about 2.5 times as fast as the FP16 (half-precision floating point) version (102.22 / 40.25 ≈ 2.54).
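The ratio above, and the per-token latency it implies, can be checked with a few lines of Python. This is plain arithmetic on the two measured speeds from the table; the helper names are illustrative, not part of any tool.

```python
# Measured token generation speeds for Llama3 8B on the RTX A6000 48GB (tokens/s).
SPEEDS_TPS = {"Q4_K_M": 102.22, "FP16": 40.25}


def speedup(fast_tps: float, slow_tps: float) -> float:
    """How many times faster fast_tps is than slow_tps."""
    return fast_tps / slow_tps


def ms_per_token(tps: float) -> float:
    """Average time to produce one token, in milliseconds."""
    return 1000.0 / tps


ratio = speedup(SPEEDS_TPS["Q4_K_M"], SPEEDS_TPS["FP16"])
print(f"Q4_K_M vs FP16 speedup: {ratio:.2f}x")
for name, tps in SPEEDS_TPS.items():
    print(f"{name}: {ms_per_token(tps):.1f} ms per token")
```

This reports a 2.54x speedup, or roughly 9.8 ms per token for Q4_K_M versus 24.8 ms for FP16.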

Quantization: A Simple Analogy

Imagine you're trying to describe the color of a car to someone. You could use the entire rainbow of colors (FP16), or you could just say "red" (Quantized). You're still getting the core information across effectively, but with a much simpler and faster transmission.

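Quantization does the same for LLMs. Storing each weight in roughly 4 to 5 bits instead of 16 drastically reduces the model's memory footprint. Generating each token requires reading essentially all of the weights from GPU memory, so fewer bytes per weight translates directly into faster generation, usually at the cost of only a modest loss in accuracy.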
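A back-of-the-envelope model makes this concrete: weight size is parameters × bits per weight, and if every weight is read once per token, memory bandwidth divided by weight size gives a rough ceiling on tokens per second. This is a simplified sketch that ignores KV-cache reads and compute overhead. It assumes about 8.03 billion parameters for Llama3 8B, an average of about 4.85 bits per weight for Q4_K_M (an approximation), and the RTX A6000's 768 GB/s memory bandwidth.

```python
# Assumed inputs for illustration (not taken from the benchmark itself).
PARAMS_8B = 8.03e9                       # approximate Llama3 8B parameter count
BITS = {"FP16": 16.0, "Q4_K_M": 4.85}    # Q4_K_M average bits/weight is approximate
A6000_BANDWIDTH_GBPS = 768.0             # RTX A6000 memory bandwidth, GB/s


def weight_gb(params: float, bits_per_weight: float) -> float:
    """Size of the weights in GB (1 GB = 1e9 bytes)."""
    return params * bits_per_weight / 8 / 1e9


def bandwidth_ceiling_tps(model_gb: float, bandwidth_gbps: float) -> float:
    """Rough upper bound on tokens/s if all weights are read once per token."""
    return bandwidth_gbps / model_gb


for name, bits in BITS.items():
    gb = weight_gb(PARAMS_8B, bits)
    ceiling = bandwidth_ceiling_tps(gb, A6000_BANDWIDTH_GBPS)
    print(f"{name}: {gb:.1f} GB of weights, ceiling ~{ceiling:.0f} tokens/s")
```

Under these assumptions FP16 comes out at about 16 GB with a ceiling near 48 tokens/s, and Q4_K_M at about 4.9 GB with a ceiling near 158 tokens/s. Both measured speeds sit below their ceilings. The quantized model's advantage shows up in both sets of numbers, though the measured gap is smaller than the ceilings alone would suggest.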

Performance Analysis: Model and Device Comparison

Note: Data for Llama3 70B FP16 is not available, so it is not included in the table below.

| Model Configuration | Device | Token Generation Speed (tokens/s) |
|---|---|---|
| Llama3 8B (Q4_K_M quantized) | RTX A6000 48GB | 102.22 |
| Llama3 70B (Q4_K_M quantized) | RTX A6000 48GB | 14.58 |

Llama3 70B Q4_K_M is about 7 times slower than its smaller sibling, Llama3 8B Q4_K_M (14.58 vs. 102.22 tokens per second). This highlights the trade-off between model size and speed. The 70B model potentially offers greater capabilities, but it must read far more weights from memory for every token. The 8B model shines in speed and efficiency on the same GPU.
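A rough memory estimate helps put both rows of this table, and the missing 70B FP16 entry, into perspective. The sketch below checks whether each configuration's weights fit in a single 48GB card. It assumes about 8.03 billion and 70.6 billion parameters for the two models and an average of about 4.85 bits per weight for Q4_K_M. It also reserves a hypothetical 2 GB of headroom for the KV cache, activations, and the CUDA context.

```python
# Approximate parameter counts (assumption, not from the benchmark).
PARAMS = {"Llama3 8B": 8.03e9, "Llama3 70B": 70.6e9}
# Average bits per weight; the Q4_K_M figure is an approximation.
BITS = {"FP16": 16.0, "Q4_K_M": 4.85}
VRAM_GB = 48.0
HEADROOM_GB = 2.0  # hypothetical reserve for KV cache, activations and CUDA context


def weights_gb(params: float, bits_per_weight: float) -> float:
    """Weight storage in GB (1 GB = 1e9 bytes)."""
    return params * bits_per_weight / 8 / 1e9


def fits_on_gpu(params: float, bits_per_weight: float,
                vram_gb: float = VRAM_GB, headroom_gb: float = HEADROOM_GB) -> bool:
    """True if the weights plus a fixed headroom fit in one GPU's memory."""
    return weights_gb(params, bits_per_weight) + headroom_gb <= vram_gb


for model, params in PARAMS.items():
    for fmt, bits in BITS.items():
        status = "fits" if fits_on_gpu(params, bits) else "does NOT fit"
        print(f"{model} {fmt}: {weights_gb(params, bits):.1f} GB -> {status} in {VRAM_GB:.0f} GB")
```

Under these assumptions, 70B FP16 needs about 141 GB for its weights alone, which is a plausible reason it could not be benchmarked on a single 48GB card. By contrast, 70B Q4_K_M (about 43 GB) just fits. Reading roughly 43 GB of weights per token instead of about 5 GB is also consistent with the 70B model's much lower speed.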

Practical Recommendations: Use Cases and Workarounds

Choosing the Right Model for Your Needs

- Performance-Critical Applications: If your application prioritizes real-time response and smooth interaction, Llama3 8B Q4_K_M on the RTX A6000 48GB is a great choice. At over 100 tokens per second, it delivers fast token generation and a responsive user experience. This suits chatbots, interactive assistants, and other applications that demand quick replies.

- Complex Tasks with Potentially Greater Accuracy: For tasks that require deeper understanding, such as complex summarization or creative writing, Llama3 70B Q4_K_M might be more suitable. Its larger size potentially lets it handle complex tasks with higher accuracy, at the cost of roughly 7 times slower generation.
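One way to apply these recommendations is to turn the measured speeds into response times for a typical reply length and compare them with a latency budget. The 500-token reply and the time budgets below are hypothetical examples, and the sketch ignores prompt-processing time.

```python
# Measured generation speeds on the RTX A6000 48GB (tokens/s).
SPEEDS_TPS = {"Llama3 8B Q4_K_M": 102.22, "Llama3 70B Q4_K_M": 14.58}


def generation_seconds(n_tokens: int, tokens_per_second: float) -> float:
    """Time to generate n_tokens at a steady decode rate (prompt processing ignored)."""
    return n_tokens / tokens_per_second


def models_within_budget(n_tokens: int, max_seconds: float,
                         speeds: dict = SPEEDS_TPS) -> list:
    """Names of the models that can finish an n_tokens reply within max_seconds."""
    return [name for name, tps in speeds.items()
            if generation_seconds(n_tokens, tps) <= max_seconds]


for name, tps in SPEEDS_TPS.items():
    print(f"{name}: {generation_seconds(500, tps):.1f} s for a 500-token reply")
print("Within a 10 s budget:", models_within_budget(500, 10.0))
```

A 500-token reply takes about 4.9 s with the 8B model and about 34.3 s with the 70B model, so only the 8B model meets a hypothetical 10-second budget. A batch summarization job with a looser budget could afford the 70B model.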

Workarounds for Handling Larger Models

- Model Pruning and Quantization: Consider pruning unnecessary parameters from larger models like Llama3 70B, or quantizing them to formats with fewer bits than Q4_K_M, to reduce their size and increase their speed. This can be likened to decluttering your home: you get rid of unnecessary items, making it easier to navigate and find what you need.

- Distributed Training and Inference: If your application truly requires the power of a large model, you can split it across several GPUs or machines. This lets you run, for example, Llama3 70B in FP16, whose weights do not fit on a single 48GB card. Imagine dividing the model into teams, each holding a different group of layers and passing its results on to the next, working together for a greater result.
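Splitting a model across GPUs by layers can be sketched as assigning contiguous layer ranges to each GPU in proportion to its memory. The function below is a hypothetical illustration of that idea, not the scheduling logic of any particular framework. The 80-layer count matches Llama3 70B, and the GPU sizes are example inputs.

```python
def split_layers(n_layers: int, vram_per_gpu_gb: list) -> list:
    """Assign contiguous layer ranges to GPUs in proportion to their VRAM."""
    total = sum(vram_per_gpu_gb)
    # Proportional share for each GPU, rounded down.
    counts = [int(n_layers * v / total) for v in vram_per_gpu_gb]
    # Hand out the layers lost to rounding, one per GPU starting from the first.
    for i in range(n_layers - sum(counts)):
        counts[i % len(counts)] += 1
    # Convert counts into consecutive layer index ranges.
    ranges, start = [], 0
    for c in counts:
        ranges.append(range(start, start + c))
        start += c
    return ranges


for gpu, layers in enumerate(split_layers(80, [48, 48, 48, 48])):
    print(f"GPU {gpu}: layers {layers.start}-{layers.stop - 1}")
```

With four 48GB cards, each GPU gets 20 layers. Activations then flow from one GPU to the next during inference. llama.cpp's `--tensor-split` option takes comparable per-GPU proportions when a model is spread across several GPUs.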

FAQ

Q: What are some common use cases for Llama3 8B?

A: Llama3 8B is a versatile model suitable for a wide range of applications, including:

- Chatbots and Conversational AI: Its ability to generate human-like text makes it well suited to building engaging chatbots that can hold natural conversations.

- Content Creation and Summarization: Generate creative content, write stories, or summarize long articles with Llama3's text generation capabilities.

- Language Translation: While not specifically designed for translation, its language understanding lets it translate short phrases or text snippets.

- Code Completion and Generation: Use it to help write code, predict the next line, or even generate entire functions.

Q: How can I access Llama3 8B and run it locally?

A:

- Llama.cpp: The Llama.cpp project (https://github.com/ggerganov/llama.cpp) is a popular and efficient implementation for running LLMs locally. It runs Llama3 8B from GGUF model files, including quantized variants such as Q4_K_M, and offers a range of customization options.

- Hugging Face: Hugging Face (https://huggingface.co/) provides access to pre-trained Llama3 models, including the 8B version, once you accept Meta's license on the model page. You can download them and load them with libraries like Transformers for quick deployment.
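For the llama.cpp route, a minimal sketch of a command-line invocation is shown below, built as an argument list from Python. The model file name is a placeholder for whichever Q4_K_M GGUF file you download. The binary is called `llama-cli` in recent llama.cpp builds; older builds used `main`. The helper function itself is hypothetical.

```python
import shlex


def llama_cli_command(model_path: str, prompt: str, n_predict: int = 256,
                      ctx_size: int = 8192, gpu_layers: int = 99) -> list:
    """Build a llama.cpp `llama-cli` invocation as an argument list (hypothetical helper)."""
    return [
        "llama-cli",
        "-m", model_path,          # path to the GGUF model file
        "-ngl", str(gpu_layers),   # layers to offload to the GPU; >= layer count offloads all
        "-c", str(ctx_size),       # context window size in tokens
        "-n", str(n_predict),      # number of tokens to generate
        "-p", prompt,              # prompt text
    ]


# Placeholder file name: substitute the GGUF file you actually downloaded.
cmd = llama_cli_command("Meta-Llama-3-8B-Instruct-Q4_K_M.gguf",
                        "Explain quantization in one sentence.")
print(shlex.join(cmd))
```

Once llama.cpp is built and the model is downloaded, `subprocess.run(cmd, check=True)` runs the command. Setting `-ngl 99` offloads every layer to the GPU, since Llama3 8B has far fewer than 99 layers.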

Q: Should I use the quantized version of Llama3 8B, or the FP16 version?

A: For most use cases, the quantized version (Q4_K_M) is the recommended choice. It is about 2.5 times faster on the RTX A6000 48GB and typically loses only a little accuracy.

However, if the quantized version does not meet your accuracy requirements, you can switch to the FP16 version. Its roughly 16 GB of weights still fits comfortably in the A6000's 48 GB of memory.

Q: What are the limitations of running Llama3 8B locally?

A:

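- Hardware Requirements: Running LLMs locally requires capable hardware, ideally a dedicated GPU with enough memory (VRAM) to hold the model. A card like the NVIDIA RTX A6000 48GB has ample headroom for Llama3 8B, whose Q4_K_M weights take only around 5 GB.

- Memory Consumption: Even quantized LLMs consume a considerable amount of memory. On top of the weights, the key-value (KV) cache grows with the context length, which is a constraint especially on smaller devices.

- Model Updates and Maintenance: Keeping up with new releases and fine-tunes of Llama3 8B, and with the tooling that runs them, takes ongoing effort and a working knowledge of LLM deployment.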
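To see how context length adds to memory use, the KV cache can be estimated from a model's shape: two vectors (key and value) per KV head, per layer, per token. The sketch assumes the published Llama3 configurations, which use grouped-query attention: 32 layers for 8B and 80 for 70B, each with 8 key-value heads of dimension 128. It also assumes a 16-bit cache. Actual usage depends on the runtime and its cache settings.

```python
from dataclasses import dataclass


@dataclass
class ModelShape:
    n_layers: int
    n_kv_heads: int   # key-value heads (fewer than query heads under grouped-query attention)
    head_dim: int


# Assumed Llama3 configurations.
LLAMA3_8B = ModelShape(n_layers=32, n_kv_heads=8, head_dim=128)
LLAMA3_70B = ModelShape(n_layers=80, n_kv_heads=8, head_dim=128)


def kv_cache_bytes(shape: ModelShape, n_ctx: int, bytes_per_elem: int = 2) -> int:
    """KV cache size in bytes; the leading 2 counts one key and one value vector."""
    return 2 * shape.n_layers * shape.n_kv_heads * shape.head_dim * bytes_per_elem * n_ctx


for name, shape in [("Llama3 8B", LLAMA3_8B), ("Llama3 70B", LLAMA3_70B)]:
    gib = kv_cache_bytes(shape, n_ctx=8192) / 2**30
    print(f"{name}: {gib:.2f} GiB of KV cache at 8192 tokens (16-bit)")
```

At an 8,192-token context this comes to exactly 1 GiB for Llama3 8B and 2.5 GiB for Llama3 70B, on top of the weights.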

Q: What are some future directions in LLM optimization?

A:

- Specialized Hardware: Development of specialized hardware tailored for running LLMs can significantly improve performance and efficiency. This includes AI accelerators and custom GPUs designed for AI workloads.

- More Efficient Quantization Techniques: Researchers continue to explore new quantization techniques that can further reduce model size and improve performance without sacrificing accuracy.

- Adaptive Model Architectures: Adaptive model architectures could let LLMs dynamically adjust their size and complexity to the task at hand, leading to more efficient resource utilization.

Keywords

Large Language Models, LLMs, Llama3, Llama3 8B, NVIDIA RTX A6000 48GB, Token Generation Speed, Quantization, GPU, Performance Optimization, Local LLM, Practical Recommendations, Use Cases, Workarounds, Model Pruning, Distributed Training and Inference, AI, Machine Learning, Natural Language Processing, NLP