Optimizing Llama3 8B for NVIDIA RTX 5000 Ada 32GB: A Step by Step Approach

Introduction

The world of Large Language Models (LLMs) is exploding, with new models and applications emerging at an astonishing rate. But for developers, the challenge lies in finding the right combination of model and hardware to achieve optimal performance. This article dives deep into the performance characteristics of the Llama3 8B model running on the NVIDIA RTX 5000 Ada 32GB, offering practical insights and recommendations to optimize your LLM experience.

Whether you're building a chatbot, a code generator, or a creative writing assistant, understanding the performance limitations and strengths of your chosen LLM and hardware is essential. Let's embark on this journey together, exploring the intricate dance between these powerful tools.

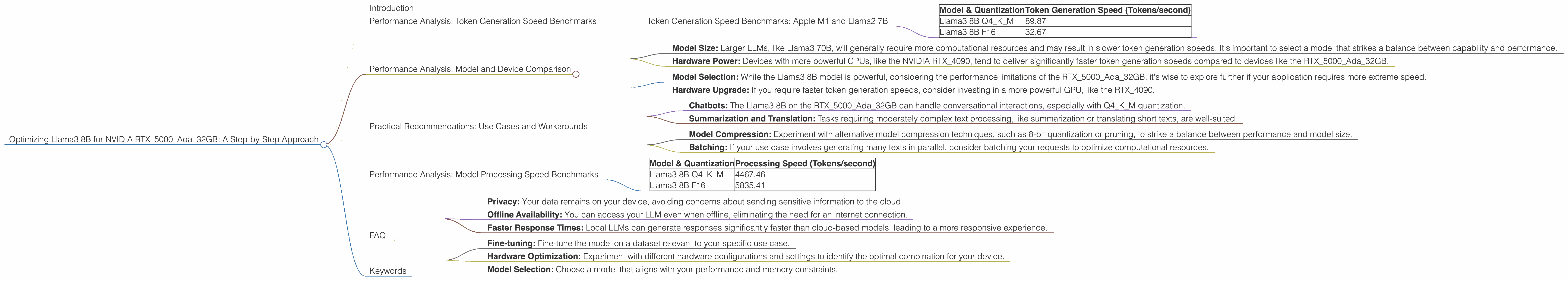

Performance Analysis: Token Generation Speed Benchmarks

Token Generation Speed Benchmarks: NVIDIA RTX 5000 Ada 32GB and Llama3 8B

This section focuses on the Llama3 8B model's performance on the NVIDIA RTX 5000 Ada 32GB, showcasing its token generation prowess. We'll delve into the impact of quantization levels, comparing the results for both Q4_K_M and F16 precision levels.

Think of tokens as the building blocks of language: each one is a word, a piece of a word, or a punctuation mark. A faster token generation speed means your LLM can produce text more smoothly, leading to a more responsive and enjoyable experience.

| Model & Quantization | Token Generation Speed (Tokens/second) |
|---|---|
| Llama3 8B Q4_K_M | 89.87 |
| Llama3 8B F16 | 32.67 |

Key Observations:

- Q4_K_M Takes the Crown: The Q4_K_M quantization level (a GGUF format that stores weights at roughly 4 to 5 bits each, mixing precisions across tensors, while activations are still computed at higher precision) leads in token generation speed at 89.87 tokens/second, about 2.75 times the F16 figure.

- F16 Performance: F16 still delivers a comfortable 32.67 tokens/second, but it lags well behind Q4_K_M. Generating each token requires reading the model's weights from GPU memory, so shrinking the weights through quantization can unlock significant speed improvements.
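To make the gap concrete, here is a minimal sketch that turns the two generation speeds from the table above into a speedup ratio and an estimated wait time. The 300-token reply length is a hypothetical value chosen for illustration, not something measured in this article.

```python
# Generation speeds (tokens/second) copied from the benchmark table above.
GEN_SPEED = {"Q4_K_M": 89.87, "F16": 32.67}


def speedup(fast: float, slow: float) -> float:
    """Return how many times faster `fast` is than `slow`."""
    return fast / slow


def seconds_to_generate(num_tokens: int, tokens_per_second: float) -> float:
    """Time to emit `num_tokens` at a steady generation rate."""
    return num_tokens / tokens_per_second


if __name__ == "__main__":
    ratio = speedup(GEN_SPEED["Q4_K_M"], GEN_SPEED["F16"])
    print(f"Q4_K_M is about {ratio:.2f}x faster than F16")
    for name, tps in GEN_SPEED.items():
        # 300 tokens is a hypothetical reply length, not a measured value.
        wait = seconds_to_generate(300, tps)
        print(f"{name}: {wait:.1f} s for a 300-token reply")
```

At these rates a reply of that length takes a little over 3 seconds with Q4_K_M and a little over 9 seconds with F16, a difference users of an interactive application are likely to notice.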

Performance Analysis: Model and Device Comparison

This section compares the performance of the Llama3 8B model on the RTX 5000 Ada 32GB with other models and devices. While we don't have data for other models on this specific device, we can still gain valuable insights into the potential differences in performance.

Key Considerations:

- Model Size: Larger LLMs, like Llama3 70B, will generally require more computational resources and may result in slower token generation speeds. It's important to select a model that strikes a balance between capability and performance.

- Hardware Power: GPUs with higher memory bandwidth and compute throughput, like the NVIDIA RTX 4090, tend to deliver faster token generation speeds than the RTX 5000 Ada 32GB, although this article has no measurements for other devices.
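One rough way to reason about both considerations is a memory-bandwidth ceiling: if every weight must be read once per generated token, generation speed cannot exceed bandwidth divided by model size. The sketch below is a back-of-the-envelope estimate, not a benchmark. The parameter count (about 8.03 billion), the bits-per-weight figure for Q4_K_M (about 4.85) and the 576 GB/s bandwidth for the RTX 5000 Ada are assumptions taken from public model cards and spec sheets; check them against your own files and hardware.

```python
def model_size_gb(params_billions: float, bits_per_weight: float) -> float:
    """Approximate weight size in GB (1 GB = 1e9 bytes)."""
    return params_billions * bits_per_weight / 8


def bandwidth_ceiling_tps(bandwidth_gb_s: float, size_gb: float) -> float:
    """Upper bound on tokens/second if all weights are read once per token."""
    return bandwidth_gb_s / size_gb


if __name__ == "__main__":
    # ASSUMPTIONS (not from this article): 8.03B parameters, ~4.85 bits per
    # weight for Q4_K_M, and 576 GB/s memory bandwidth for the RTX 5000 Ada.
    params_b = 8.03
    bandwidth = 576.0
    for name, bits in {"F16": 16.0, "Q4_K_M": 4.85}.items():
        size = model_size_gb(params_b, bits)
        ceiling = bandwidth_ceiling_tps(bandwidth, size)
        print(f"{name}: ~{size:.1f} GB of weights, ceiling ~{ceiling:.0f} tokens/s")
```

Under these assumptions both ceilings sit above the measured 32.67 and 89.87 tokens/second, which is at least consistent with generation speed being limited mainly by memory bandwidth. The same function gives a first guess for a larger model or a different GPU before you commit to either.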

Practical Recommendations:

- Model Selection: Llama3 8B is a capable model, but if your application needs more speed than the RTX 5000 Ada 32GB delivers here, consider a smaller model or a more aggressive quantization before changing hardware.

- Hardware Upgrade: If you require faster token generation speeds, consider a GPU with higher memory bandwidth, like the RTX 4090, keeping in mind that it has less memory (24 GB) than the RTX 5000 Ada.

Practical Recommendations: Use Cases and Workarounds

Now, let's explore some practical use cases and potential workarounds to navigate the performance limitations of the Llama3 8B model on the RTX 5000 Ada 32GB.

Use Cases:

- Chatbots: The Llama3 8B on the RTX 5000 Ada 32GB can handle conversational interactions, especially with Q4_K_M quantization.

- Summarization and Translation: Tasks requiring moderately complex text processing, like summarization or translating short texts, are well-suited.

Workarounds:

- Model Compression: Experiment with alternative model compression techniques, such as 8-bit quantization or pruning, to strike a balance between performance and model size.

- Batching: If your use case involves generating many texts in parallel, consider batching your requests to optimize computational resources.
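The batching idea can be sketched as follows. This is a minimal illustration in plain Python: `generate_batch` stands for whatever batched inference call your runtime provides, and `fake_generate_batch` is a hypothetical stand-in so the example runs without a model. The batch size of 8 is an arbitrary starting point, not a tuned value.

```python
from typing import Callable, Iterator, List, Sequence


def batched(items: Sequence[str], batch_size: int) -> Iterator[List[str]]:
    """Yield consecutive slices of `items`, each with at most `batch_size` entries."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    for start in range(0, len(items), batch_size):
        yield list(items[start:start + batch_size])


def generate_all(
    prompts: Sequence[str],
    generate_batch: Callable[[List[str]], List[str]],
    batch_size: int = 8,
) -> List[str]:
    """Run every prompt through `generate_batch`, one batch at a time, keeping order."""
    results: List[str] = []
    for batch in batched(prompts, batch_size):
        results.extend(generate_batch(batch))
    return results


def fake_generate_batch(batch: List[str]) -> List[str]:
    # Hypothetical stand-in for a real backend call; it only echoes the prompt.
    return [f"reply to: {prompt}" for prompt in batch]


if __name__ == "__main__":
    prompts = [f"Summarize document {i}" for i in range(5)]
    for reply in generate_all(prompts, fake_generate_batch, batch_size=2):
        print(reply)
```

Larger batches usually improve total throughput at the cost of more memory and a longer wait for any single request, so the right size depends on your latency budget.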

Performance Analysis: Model Processing Speed Benchmarks

This section looks at the processing speed of the Llama3 8B model on the RTX 5000 Ada 32GB, that is, how quickly the model reads the input prompt before it starts generating.

| Model & Quantization | Processing Speed (Tokens/second) |
|---|---|
| Llama3 8B Q4_K_M | 4467.46 |
| Llama3 8B F16 | 5835.41 |

Key Observations:

- F16 Outperforms Q4_K_M: Interestingly, F16 takes the lead in processing speed, delivering an impressive 5835.41 tokens/second, while Q4_K_M clocks in at 4467.46 tokens/second.

- Processing Speed vs. Token Generation: This contrast highlights a crucial distinction between the two metrics. Processing speed measures how fast the model ingests an existing prompt, where many tokens can be handled in parallel, while token generation speed measures how fast it produces new text one token at a time. A likely explanation for the reversal is that prompt processing is limited by compute rather than memory traffic, so the extra work of unpacking quantized weights costs more than the smaller weights save.
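Because a real request involves both phases, it helps to combine the two tables into one estimate. The sketch below uses a deliberately simple model: total time is prompt tokens divided by processing speed plus output tokens divided by generation speed. It ignores model load time, sampling overhead and context-length effects, and the request sizes are hypothetical.

```python
# Measured speeds from the two tables in this article (tokens/second).
SPEEDS = {
    "Q4_K_M": {"prompt": 4467.46, "generate": 89.87},
    "F16": {"prompt": 5835.41, "generate": 32.67},
}


def request_seconds(
    prompt_tokens: int,
    output_tokens: int,
    prompt_tps: float,
    generate_tps: float,
) -> float:
    """Estimated time for one request: prompt ingestion plus token generation."""
    return prompt_tokens / prompt_tps + output_tokens / generate_tps


if __name__ == "__main__":
    # Hypothetical request: a 2000-token prompt and a 200-token answer.
    for name, s in SPEEDS.items():
        total = request_seconds(2000, 200, s["prompt"], s["generate"])
        print(f"{name}: ~{total:.2f} s")
```

For this example the Q4_K_M run finishes in well under half the time of the F16 run, because generation dominates the total. Under this model F16 only comes out ahead when the output is a handful of tokens per thousand prompt tokens.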

Practical Implications:

- Data-Intensive Tasks: When your application mostly reads long inputs and emits very short outputs, such as classifying or scoring a massive collection of documents, the F16 precision level might be a better choice for maximizing processing speed. These are inference benchmarks, so they say nothing about training speed.

- Balancing Speed and Accuracy: The ideal choice between Q4_K_M and F16 depends on the specific requirements of your use case. Weigh the faster generation and smaller memory footprint of Q4_K_M against the small loss of accuracy that quantization can introduce.

FAQ

Q: What is quantization and how does it impact performance?

A: Quantization is a technique for reducing the memory footprint and computational requirements of a model by representing its weights, and in some schemes its activations, using fewer bits. Think of it like converting a high-resolution image into a lower-resolution version, sacrificing some detail but gaining significant storage and speed benefits. Models quantized to Q4_K_M store their weights at roughly 4 to 5 bits each instead of 16, resulting in a significantly smaller model and, on this GPU, faster token generation (though slower prompt processing than F16, as the benchmarks above show).
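To see what "fewer bits" means in practice, here is a toy sketch of symmetric 4-bit quantization applied to one small block of weights. It is an illustration of the general idea only. It is not the actual Q4_K_M layout, which is more elaborate, and the sample values are made up.

```python
from typing import List, Sequence, Tuple


def quantize_block(values: Sequence[float], bits: int = 4) -> Tuple[float, List[int]]:
    """Map floats to signed integer codes that share one scale factor."""
    qmax = 2 ** (bits - 1) - 1              # 7 for 4-bit signed codes
    peak = max(abs(v) for v in values)
    scale = peak / qmax if peak else 1.0    # avoid dividing by zero
    codes = [round(v / scale) for v in values]
    return scale, codes


def dequantize_block(scale: float, codes: Sequence[int]) -> List[float]:
    """Recover approximate floats from the integer codes."""
    return [scale * c for c in codes]


if __name__ == "__main__":
    weights = [0.9, -0.42, 0.13, 0.0, -0.77]   # made-up sample values
    scale, codes = quantize_block(weights)
    restored = dequantize_block(scale, codes)
    print("codes:   ", codes)
    for original, approx in zip(weights, restored):
        print(f"{original:+.3f} -> {approx:+.3f}")
```

Each weight now needs only a 4-bit code plus a share of the block's scale, and the restored values differ from the originals by at most half a quantization step. That rounding error is the "lost detail" in the image analogy.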

Q: What are the advantages of using a local LLM model?

A: Local LLMs offer several advantages:

- Privacy: Your data remains on your device, avoiding concerns about sending sensitive information to the cloud.

- Offline Availability: You can access your LLM even when offline, eliminating the need for an internet connection.

- Lower Latency: Local LLMs avoid the network round trip to a cloud service, which can make responses feel more immediate. Whether total response time is shorter depends on your hardware and the model you run.

Q: How can I optimize the performance of my LLM on my specific device?

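A: A few approaches are worth trying:

- Fine-tuning: Fine-tune the model on a dataset relevant to your specific use case. This improves output quality for that task rather than raw speed, but it can let a smaller, faster model do the job.

- Hardware Optimization: Experiment with different hardware configurations and settings to identify the optimal combination for your device.

- Model Selection: Choose a model and quantization level that align with your performance and memory constraints.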

Keywords

LLM, Llama3, Llama3 8B, NVIDIA RTX 5000 Ada 32GB, token generation speed, processing speed, quantization, Q4_K_M, F16, performance optimization, local LLM, use cases, workarounds, hardware, performance analysis, model comparison, developer, geek, deep dive, practical recommendations