Optimizing Llama3 8B for NVIDIA RTX 4000 Ada 20GB x4: A Step-by-Step Approach

Introduction

In the world of large language models (LLMs), performance is king. You want your model to churn out text as fast as a caffeinated coder on a deadline. But achieving optimal performance requires more than just throwing hardware at the problem. It's about understanding your model's quirks, the strengths and weaknesses of your hardware, and finding the sweet spot where they meet. This article looks at running LLMs locally, specifically Llama3 8B on a setup of four NVIDIA RTX 4000 Ada 20GB cards (RTX 4000 Ada 20GB x4), and walks through the benchmark numbers that matter when optimizing for speed and efficiency.

Imagine you're building a chatbot for your company. It needs to respond quickly to user queries, generating engaging and informative text. Enter Llama3 8B, a powerful LLM that can handle this task with finesse. But to get the most out of Llama3, you need to optimize it for your hardware. This is where the RTX 4000 Ada 20GB x4 comes in, a workhorse capable of running Llama3 8B quickly and efficiently.

Performance Analysis: Token Generation Speed Benchmarks

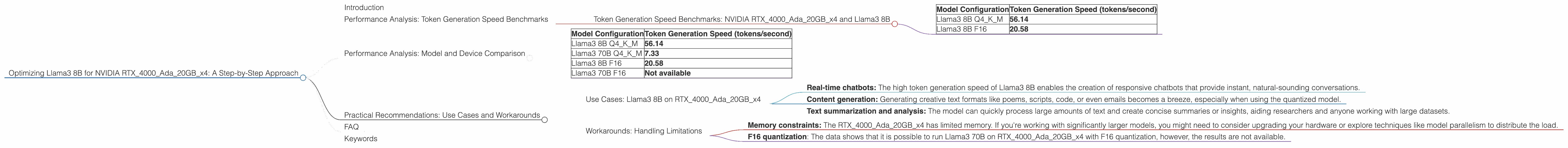

Token Generation Speed Benchmarks: NVIDIA RTX 4000 Ada 20GB x4 and Llama3 8B

Let's dive into the performance of Llama3 8B on the RTX 4000 Ada 20GB x4, focusing on token generation speed. This metric tells us how many tokens (words or parts of words) the model can generate per second. Think of it as the model's typing speed.
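To make the metric concrete, here is a minimal sketch of how tokens per second could be measured: count the tokens a stream yields and divide by the wall-clock time. The `fake_stream` generator is a hypothetical stand-in for a real model's streaming output, not part of any library, and this is not necessarily how the benchmark figures in this article were collected.

```python
import time
from typing import Callable, Iterable


def tokens_per_second(n_tokens: int, elapsed_s: float) -> float:
    """Throughput is tokens generated divided by wall-clock seconds."""
    if elapsed_s <= 0:
        raise ValueError("elapsed_s must be positive")
    return n_tokens / elapsed_s


def time_generation(stream: Callable[[], Iterable[str]]) -> float:
    """Consume a token stream and return its measured tokens/second."""
    start = time.perf_counter()
    n_tokens = sum(1 for _ in stream())
    elapsed = time.perf_counter() - start
    return tokens_per_second(n_tokens, elapsed)


def fake_stream() -> Iterable[str]:
    # Hypothetical stand-in for a model: emits 50 tokens with a small delay.
    for i in range(50):
        time.sleep(0.001)
        yield f"tok{i}"


if __name__ == "__main__":
    print(f"{time_generation(fake_stream):.1f} tokens/second")
```

With a real model, `fake_stream` would be replaced by whatever streaming call your inference library provides.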

| Model Configuration | Token Generation Speed (tokens/second) |
|---|---|
| Llama3 8B Q4_K_M | 56.14 |
| Llama3 8B F16 | 20.58 |

Interpretation:

- Llama3 8B Q4_K_M: This 4-bit quantized configuration reaches 56.14 tokens/second. Quantization is a compression technique: it stores the model's weights in fewer bits, so the model takes up less memory and runs faster. It's a bit like storing your clothes in a vacuum-sealed bag: more compact, more efficient.

- Llama3 8B F16: Without quantization, Llama3 8B achieves 20.58 tokens/second. That is still a respectable speed, but the quantized version is about 2.7 times faster.

Key takeaway: Quantization is your friend when it comes to speeding up token generation on the RTX 4000 Ada 20GB x4. Keep in mind that this table measures speed only; it says nothing about output quality.

Analogy: Think of a fast processor fed through a narrow pipe. To produce each token, the GPU has to read the model's weights from memory, so the size of those weights largely sets the pace. Quantization shrinks the weights, so the same hardware moves less data per token and generates text faster.
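The size of the gap between the two configurations follows directly from the benchmark figures. The short sketch below computes the speedup from the two numbers reported in the table; the dictionary keys are illustrative labels chosen for this example.

```python
# Token generation speeds from the benchmark table (tokens/second).
SPEEDS = {
    "llama3_8b_q4": 56.14,   # 4-bit quantized configuration
    "llama3_8b_f16": 20.58,  # 16-bit floating-point configuration
}


def speedup(fast_tps: float, slow_tps: float) -> float:
    """How many times faster the first configuration is than the second."""
    return fast_tps / slow_tps


ratio = speedup(SPEEDS["llama3_8b_q4"], SPEEDS["llama3_8b_f16"])
print(f"Quantized is {ratio:.2f}x faster than F16")  # prints 2.73x
```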

Performance Analysis: Model and Device Comparison

Let's expand our scope and compare Llama3 8B to a larger model, Llama3 70B, on the same RTX 4000 Ada 20GB x4. We'll again focus on token generation speed to see how model size affects performance.

| Model Configuration | Token Generation Speed (tokens/second) |
|---|---|
| Llama3 8B Q4_K_M | 56.14 |
| Llama3 70B Q4_K_M | 7.33 |
| Llama3 8B F16 | 20.58 |
| Llama3 70B F16 | Not available |

Interpretation:

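- Smaller is faster: At the same 4-bit quantization, Llama3 8B generates 56.14 tokens/second versus 7.33 for Llama3 70B, roughly 7.7 times faster. Every generated token requires a pass over the model's weights, so a model with nearly nine times as many parameters has far more data to read and compute per token.

- Quantization as an enabler: No F16 result is available for Llama3 70B, so this table cannot show how much quantization speeds up the larger model. What it does show is that the quantized 70B model runs on this setup, at 7.33 tokens/second.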

Key takeaway: While larger models can offer more capabilities, they often come with a performance trade-off. Smaller models, like Llama3 8B, are generally faster and more efficient, making them ideal for applications where latency is critical.
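To see what this trade-off means for latency, the sketch below converts the benchmark speeds into the time needed to generate a reply. The 200-token reply length and the 5-second budget are hypothetical values chosen for illustration, and the estimate ignores prompt processing time, which the tables in this article do not cover.

```python
# Token generation speeds from the benchmark table (tokens/second),
# both at 4-bit quantization.
SPEEDS = {
    "Llama3 8B": 56.14,
    "Llama3 70B": 7.33,
}

REPLY_TOKENS = 200       # hypothetical reply length
LATENCY_BUDGET_S = 5.0   # hypothetical budget for a chat reply


def response_time_s(n_tokens: int, tokens_per_s: float) -> float:
    """Seconds needed to generate n_tokens (generation only)."""
    return n_tokens / tokens_per_s


def meets_budget(n_tokens: int, tokens_per_s: float, budget_s: float) -> bool:
    """True if the reply can be generated within the latency budget."""
    return response_time_s(n_tokens, tokens_per_s) <= budget_s


for model, tps in SPEEDS.items():
    seconds = response_time_s(REPLY_TOKENS, tps)
    ok = meets_budget(REPLY_TOKENS, tps, LATENCY_BUDGET_S)
    print(f"{model}: {seconds:.1f} s for {REPLY_TOKENS} tokens, within budget: {ok}")
```

Streaming tokens to the user as they are generated can make a slower model feel more responsive, but the total time to finish the reply stays the same.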

Practical Recommendations: Use Cases and Workarounds

Use Cases: Llama3 8B on RTX 4000 Ada 20GB x4

The impressive performance of Llama3 8B on the RTX 4000 Ada 20GB x4 opens up a range of possibilities:

- Real-time chatbots: The high token generation speed of Llama3 8B enables the creation of responsive chatbots that provide instant, natural-sounding conversations.

- Content generation: Generating creative text formats like poems, scripts, code, or even emails becomes a breeze, especially when using the quantized model.

- Text summarization and analysis: The model can quickly process large amounts of text and create concise summaries or insights, aiding researchers and anyone working with large datasets.

Workarounds: Handling Limitations

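While the RTX 4000 Ada 20GB x4 provides excellent performance for Llama3 8B, there are a few considerations to keep in mind:

- Memory constraints: Each card has 20 GB of VRAM, for 80 GB across the four GPUs. A model larger than a single card's 20 GB has to be split across GPUs (model parallelism), and a model larger than the 80 GB total will not fit at all. If you're working with significantly larger models, you might need to consider upgrading your hardware.

- F16 is not quantization: F16 is the 16-bit floating-point version of the model, not a quantized one. The benchmark data contains no F16 result for Llama3 70B on the RTX 4000 Ada 20GB x4. At 2 bytes per parameter, its weights alone would need roughly 140 GB, well beyond the 80 GB of combined VRAM, so a quantized build is the practical way to run 70B on this setup.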
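A back-of-the-envelope estimate shows which configurations can fit on this setup. The sketch below counts weights only and ignores the KV cache, activations, and runtime overhead, so real requirements are higher. The parameter counts are the nominal 8 billion and 70 billion from the model names, and the figure of about 4.8 bits per weight for a 4-bit quantized model is an assumption for illustration, not a value taken from the benchmark.

```python
GPU_COUNT = 4
VRAM_PER_GPU_GB = 20  # from the setup name: 20GB x4

# Assumption: 4-bit formats store scales alongside the weights, so the
# effective size is a little above 4 bits per weight. 4.8 is illustrative.
ASSUMED_4BIT_BITS_PER_WEIGHT = 4.8


def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in GB (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


def fits_in_vram(n_params: float, bits_per_weight: float) -> bool:
    """True if the weights alone fit in the combined VRAM of all GPUs."""
    total_vram_gb = GPU_COUNT * VRAM_PER_GPU_GB
    return weight_memory_gb(n_params, bits_per_weight) <= total_vram_gb


CONFIGS = {
    "Llama3 8B, 16-bit": (8e9, 16),
    "Llama3 8B, ~4-bit": (8e9, ASSUMED_4BIT_BITS_PER_WEIGHT),
    "Llama3 70B, 16-bit": (70e9, 16),
    "Llama3 70B, ~4-bit": (70e9, ASSUMED_4BIT_BITS_PER_WEIGHT),
}

for name, (n_params, bits) in CONFIGS.items():
    size = weight_memory_gb(n_params, bits)
    print(f"{name}: ~{size:.0f} GB of weights, fits in 80 GB: {fits_in_vram(n_params, bits)}")
```

Only the 16-bit 70B configuration exceeds the 80 GB total, which is consistent with it being the one entry missing from the benchmark table.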

FAQ

Q: What is quantization?

A: Quantization is a technique used to make models smaller and faster by reducing the number of bits used to represent their weights. Imagine you have a photograph with millions of colors. Quantization is like reducing those colors to fewer shades while still preserving the image's essence. This makes the photo smaller, easier to store, and faster to load.
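As a toy illustration of the idea, the sketch below maps a handful of floating-point weights to signed 4-bit integers with a single shared scale, then maps them back. This is a deliberately simplified scheme for explanation only; the quantization formats used by real inference tools are more elaborate, and the example weights are made up.

```python
from typing import List, Tuple


def quantize_4bit(weights: List[float]) -> Tuple[List[int], float]:
    """Map floats to integers in [-7, 7] using one shared scale (absmax)."""
    scale = max(abs(w) for w in weights) / 7 or 1.0  # avoid a zero scale
    return [round(w / scale) for w in weights], scale


def dequantize(quantized: List[int], scale: float) -> List[float]:
    """Recover approximate floats from the integers and the scale."""
    return [q * scale for q in quantized]


weights = [0.12, -0.50, 0.33, 0.07]  # made-up example values
quantized, scale = quantize_4bit(weights)
restored = dequantize(quantized, scale)

for w, q, r in zip(weights, quantized, restored):
    print(f"original {w:+.3f} -> stored as {q:+d} -> restored {r:+.3f}")
```

Each weight now needs only 4 bits instead of 16, at the cost of a small rounding error, which is the trade-off the photograph analogy describes.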

Q: How do I choose the right LLM for my application?

A: It depends on your needs. For real-time applications requiring speed, a smaller model like Llama3 8B is ideal. If you require more sophisticated capabilities, a larger model like Llama3 70B might be necessary, even with potential performance trade-offs.

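Q: Can I run Llama3 70B without quantization on the RTX 4000 Ada 20GB x4?

A: Almost certainly not, and the benchmark data includes no result for that configuration. At F16, the 70B model's weights alone need roughly 140 GB (70 billion parameters at 2 bytes each), while the four cards provide 80 GB of VRAM in total.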

Keywords

LLM, Llama3, Llama3 8B, Llama3 70B, NVIDIA RTX 4000 Ada 20GB x4, token generation, quantization, performance, local models, chatbots, content generation, text summarization, AI, deep learning, GPU