Optimizing Llama3 8B for NVIDIA RTX 4000 Ada 20GB: A Step-by-Step Approach

Introduction

Welcome to the exciting world of local Large Language Models (LLMs)! Imagine having the power of a sophisticated AI assistant right on your computer, ready to generate text, translate languages, and even write code, all without relying on cloud services and their potential limitations. This is the promise of local LLMs, and Meta's Llama3 8B model running on an NVIDIA RTX 4000 Ada 20GB graphics card is a fantastic example.

This article walks you through optimizing the Llama3 8B model for your NVIDIA RTX 4000 Ada 20GB GPU. We'll look at key performance metrics, compare quantization formats, and offer practical recommendations for specific use cases.

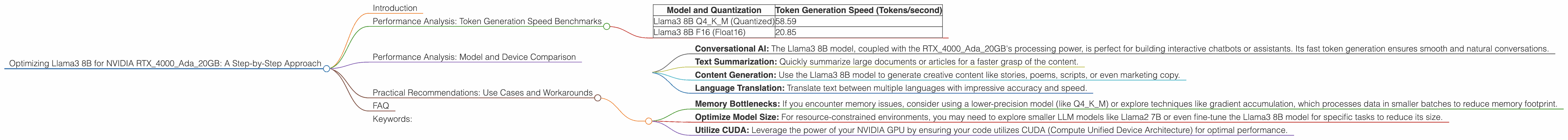

Performance Analysis: Token Generation Speed Benchmarks

To understand how well the Llama3 8B model runs on the NVIDIA RTX 4000 Ada 20GB, we start with token generation speed: the number of tokens (word pieces) the model produces per second once it starts answering. Whether the task is writing text, translating, or generating code, this rate determines how quickly output appears.
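Throughput figures like the ones in the next table are usually measured by timing the decode loop alone, after the prompt has been processed. The following is a minimal Python sketch of that measurement under that assumption; `generate_one_token` stands for one decode step of whatever runtime you use, and `fake_decode_step` is a hypothetical stand-in that simply sleeps.

```python
import time
from typing import Callable


def tokens_per_second(generate_one_token: Callable[[], None], n_tokens: int) -> float:
    """Time n_tokens sequential decode steps and return the throughput."""
    if n_tokens <= 0:
        raise ValueError("n_tokens must be positive")
    start = time.perf_counter()
    for _ in range(n_tokens):
        generate_one_token()  # one forward pass that emits one token
    elapsed = time.perf_counter() - start
    return n_tokens / elapsed


def fake_decode_step() -> None:
    """Hypothetical stand-in for a model: sleeps ~10 ms per token."""
    time.sleep(0.01)


print(f"{tokens_per_second(fake_decode_step, 50):.1f} tokens/s")
```

Because each fake step sleeps for at least 10 ms, the reported rate stays at or below about 100 tokens/s; with a real model you would pass its single-token decode call instead.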

Token Generation Speed Benchmarks: NVIDIA RTX 4000 Ada 20GB and Llama3 8B

Let's dive into the performance data:

| Model and Quantization | Token Generation Speed (tokens/second) |
|---|---|
| Llama3 8B Q4_K_M (Quantized) | 58.59 |
| Llama3 8B F16 (Float16) | 20.85 |

What do these numbers tell us?

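Quantized models are faster: The quantized Llama3 8B model (Q4_K_M) generates tokens about 2.8x faster than its F16 (16-bit floating point) counterpart (58.59 vs. 20.85 tokens/second). Quantization stores the model's weights with fewer bits, roughly 4 to 5 bits per weight for Q4_K_M instead of 16 for F16. Token generation is largely limited by how fast the GPU can read the weights from memory, so smaller weights mean faster generation as well as lower memory requirements, usually at the cost of a small loss in output quality.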

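The NVIDIA RTX 4000 Ada 20GB handles Llama3 8B well: At nearly 59 tokens/second, the Q4_K_M model produces text far faster than most people read, and the 20 GB of VRAM is enough to hold even the roughly 16 GB of F16 weights entirely on the GPU. That makes it a solid platform for local LLM deployment.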

Think of it this way: Imagine you're building a Lego castle. Working with a simplified set of bricks (quantized model) is much faster than placing every finely detailed piece (F16 model). Both give you the castle, though the detailed version keeps a few fine features the simplified one smooths over. Quantization makes the same trade: a little precision for a lot of speed.
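The arithmetic behind these takeaways can be checked with a short Python sketch. Besides the two measured speeds from the table, it relies on assumptions that are not part of the benchmark: about 8.03 billion parameters, an average of roughly 4.85 bits per weight for Q4_K_M, and roughly 360 GB/s of peak memory bandwidth for the RTX 4000 Ada (check your card's spec sheet). It treats each generated token as one full read of the weights from VRAM, which gives a rough upper bound on speed.

```python
# Measured token generation speeds from the benchmark table (tokens/second).
MEASURED_TPS = {"Q4_K_M": 58.59, "F16": 20.85}

# Assumptions (not from the benchmark): ~8.03e9 parameters, 16 bits per weight
# for F16, ~4.85 bits per weight on average for Q4_K_M, and ~360 GB/s peak
# memory bandwidth for the RTX 4000 Ada.
N_PARAMS = 8.03e9
BITS_PER_WEIGHT = {"Q4_K_M": 4.85, "F16": 16.0}
BANDWIDTH_GBPS = 360.0


def weight_gb(bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in GB (1e9 bytes)."""
    return N_PARAMS * bits_per_weight / 8 / 1e9


def bandwidth_ceiling_tps(size_gb: float) -> float:
    """Upper bound on tokens/s if each token reads every weight from VRAM once."""
    return BANDWIDTH_GBPS / size_gb


speedup = MEASURED_TPS["Q4_K_M"] / MEASURED_TPS["F16"]
print(f"Q4_K_M speedup over F16: {speedup:.2f}x")

for name, bpw in BITS_PER_WEIGHT.items():
    size = weight_gb(bpw)
    ceiling = bandwidth_ceiling_tps(size)
    share = MEASURED_TPS[name] / ceiling
    print(f"{name}: ~{size:.1f} GB of weights, ceiling ~{ceiling:.0f} tok/s, "
          f"measured {MEASURED_TPS[name]} tok/s ({share:.0%} of ceiling)")
```

Under these assumptions the speedup is about 2.81x, the F16 weights come to roughly 16 GB (which still fits in 20 GB), and both measured speeds sit below their estimated ceilings, consistent with generation being memory-bandwidth bound. The estimate ignores KV-cache reads and kernel overhead, so treat it as a rough bound rather than a prediction.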

Performance Analysis: Model and Device Comparison

While the NVIDIA RTX 4000 Ada 20GB is a capable device, comparing it with other models and GPUs would show where its strengths lie. Unfortunately, we don't have benchmark data for other models or devices in this comparison.

Practical Recommendations: Use Cases and Workarounds

Now that we understand how the Llama3 8B model performs on the NVIDIA RTX 4000 Ada 20GB, let's explore specific use cases and practical considerations for getting the most out of it.

Use Cases:

Conversational AI: The Llama3 8B model on the RTX 4000 Ada 20GB is well suited to interactive chatbots and assistants. With the Q4_K_M model generating nearly 59 tokens/second, responses stream in faster than users can read them.

Text Summarization: Quickly summarize large documents or articles for a faster grasp of the content.

Content Generation: Use the Llama3 8B model to generate creative content like stories, poems, scripts, or even marketing copy.

Language Translation: Translate text between languages quickly. Translation quality was not measured here and varies by language pair, so check the output for the languages you rely on.
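Whether a given speed feels smooth depends on how long the replies are. This short Python sketch converts the measured rates from the benchmark table into the time needed to stream a reply; the 300-token reply length is a hypothetical example, and prompt processing time is ignored.

```python
# Measured generation speeds from the benchmark table (tokens/second).
SPEEDS = {"Q4_K_M": 58.59, "F16": 20.85}


def seconds_to_generate(n_tokens: int, tokens_per_second: float) -> float:
    """Time to stream n_tokens at a steady decode rate (prompt processing excluded)."""
    return n_tokens / tokens_per_second


# Hypothetical reply length: 300 tokens, roughly a couple of paragraphs.
REPLY_TOKENS = 300
for name, tps in SPEEDS.items():
    print(f"{name}: {seconds_to_generate(REPLY_TOKENS, tps):.1f} s for {REPLY_TOKENS} tokens")
```

At these rates a 300-token reply takes about 5 seconds with Q4_K_M and about 14 seconds with F16, which is why the quantized model is the more comfortable choice for interactive use.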

Workarounds:

Memory Bottlenecks: If you run out of GPU memory, switch to a quantized model (such as Q4_K_M), shorten the context window (the key/value cache grows with every token of context), or offload some layers to the CPU at the cost of speed.

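Optimize Model Size: In resource-constrained environments, consider a smaller model or a more aggressive quantization than Q4_K_M, accepting some loss in output quality. Keep in mind that Llama2 7B is only slightly smaller than Llama3 8B, and that fine-tuning adapts a model to specific tasks without reducing its size.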

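Utilize CUDA: Make sure your inference stack uses CUDA (Compute Unified Device Architecture) and keeps all model layers on the GPU. In llama.cpp, for example, that means a CUDA-enabled build and the -ngl (--n-gpu-layers) option set high enough to offload every layer.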
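To see where GPU memory goes, here is a rough Python sketch of a VRAM budget: weights plus the key/value cache at a given context length. It assumes Llama 3 8B's published architecture (32 layers, 8 key/value heads, head dimension 128), an F16 KV cache, about 8.03 billion parameters, and roughly 4.85 bits per weight for Q4_K_M. It ignores compute buffers and the CUDA context, which need extra memory on top.

```python
# Assumed Llama 3 8B architecture: 32 layers, 8 KV heads (grouped-query attention), head dim 128.
N_LAYERS = 32
N_KV_HEADS = 8
HEAD_DIM = 128
N_PARAMS = 8.03e9
VRAM_GB = 20.0  # RTX 4000 Ada 20GB


def kv_cache_gb(context_len: int, bytes_per_elem: int = 2) -> float:
    """Keys and values for every layer, KV head and position (F16 = 2 bytes each)."""
    per_token = 2 * N_LAYERS * N_KV_HEADS * HEAD_DIM * bytes_per_elem
    return per_token * context_len / 1e9


def weights_gb(bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in GB (1e9 bytes)."""
    return N_PARAMS * bits_per_weight / 8 / 1e9


# Assumption: ~4.85 bits/weight on average for Q4_K_M; real files vary slightly.
for name, bpw in {"F16": 16.0, "Q4_K_M": 4.85}.items():
    for ctx in (2048, 8192):
        total = weights_gb(bpw) + kv_cache_gb(ctx)
        headroom = VRAM_GB - total
        print(f"{name} @ {ctx} tokens: ~{total:.1f} GB, headroom ~{headroom:.1f} GB")
```

Each token of context costs about 128 KiB of F16 KV cache in this model, so an 8,192-token window adds roughly 1 GB. The F16 model still fits at that length, but with only a few GB to spare, while Q4_K_M leaves plenty of room.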

FAQ

Q: What is Llama3 8B?

A: Llama3 8B is an 8-billion-parameter Large Language Model (LLM) released by Meta in April 2024 as part of its Llama 3 family. It's a general-purpose model that handles tasks like text generation, translation, summarization, and coding.
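The "8B" is a rounded figure. As a rough check, the total can be recomputed from the model's published configuration values (vocabulary 128,256; hidden size 4,096; MLP size 14,336; 32 layers; 32 query heads and 8 key/value heads). This Python sketch assumes the standard Llama layer layout (attention without biases, a gated MLP, two RMSNorm weight vectors per layer, and separate input embedding and output head); the constant names are our own.

```python
# Llama 3 8B configuration values (assumed from Meta's published model config).
VOCAB = 128_256
HIDDEN = 4_096
INTERMEDIATE = 14_336
N_LAYERS = 32
N_HEADS = 32
N_KV_HEADS = 8
HEAD_DIM = HIDDEN // N_HEADS  # 128


def llama3_param_count() -> int:
    embed = VOCAB * HIDDEN    # token embedding table
    lm_head = VOCAB * HIDDEN  # output projection (not tied to the embeddings)
    attn = (
        HIDDEN * HIDDEN                         # query projection
        + 2 * HIDDEN * N_KV_HEADS * HEAD_DIM    # key and value projections (GQA)
        + HIDDEN * HIDDEN                       # attention output projection
    )
    mlp = 3 * HIDDEN * INTERMEDIATE  # gate, up and down projections
    norms = 2 * HIDDEN               # two RMSNorm weight vectors per layer
    final_norm = HIDDEN              # RMSNorm before the output head
    return embed + lm_head + N_LAYERS * (attn + mlp + norms) + final_norm


print(f"{llama3_param_count():,} parameters")
```

The count comes to 8,030,261,248, with the MLP blocks accounting for most of it; that rounds to the 8 billion in the model's name.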

Q: What is Quantization?

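A: Quantization reduces the size of an LLM by storing its weights with fewer bits, for example as 4-bit integers plus a shared scale factor per block of weights instead of 16-bit floats. This makes the model faster and less memory-intensive, usually with a small loss in accuracy, which suits resource-constrained devices.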
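To make the idea concrete, here is a deliberately simplified Python sketch of symmetric 4-bit block quantization: each block of weights shares one scale, and each weight is stored as an integer from -8 to 7. This is not llama.cpp's actual Q4_K_M format, which uses larger super-blocks with additional scale and minimum values and mixes quantization types across tensors; the block size of 8 and the sample weights are arbitrary choices for readability.

```python
def quantize_block(weights: list[float]) -> tuple[float, list[int]]:
    """Symmetric 4-bit quantization: one float scale per block, integers in [-8, 7]."""
    max_abs = max(abs(w) for w in weights)
    # Map the largest magnitude in the block to 7; avoid dividing by zero.
    scale = max_abs / 7 if max_abs > 0 else 1.0
    q = [max(-8, min(7, round(w / scale))) for w in weights]
    return scale, q


def dequantize_block(scale: float, q: list[int]) -> list[float]:
    """Recover approximate weights by multiplying each integer by the block scale."""
    return [scale * v for v in q]


block = [0.12, -0.53, 0.07, 0.91, -0.88, 0.33, -0.02, 0.45]
scale, q = quantize_block(block)
restored = dequantize_block(scale, q)
print(q)
print([round(r, 3) for r in restored])
```

Because the scale maps the largest magnitude in the block to 7, every restored weight lies within half a scale step of the original. Storing eight 4-bit integers plus one scale takes far less space than eight 16-bit floats, and real formats amortize the scale over larger blocks.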

Q: Why do I need to optimize this model?

A: Optimization (choosing the right quantization, context length, and GPU offload settings) lets Llama3 8B run as fast as your hardware allows, minimizing latency while keeping the model within your GPU's memory.

Q: What other devices can I use with Llama3 8B?

A: The Llama3 8B model is compatible with a wide range of devices, including other NVIDIA GPUs, CPUs, and even some specialized hardware like Google's TPUs. Choose the device that best suits your performance and budget requirements.

Keywords:

Llama3, 8B, NVIDIA, RTX 4000 Ada 20GB, GPU, Local LLM, Performance, Token Generation Speed, Quantization, Q4_K_M, F16, Use Cases, Workarounds, Conversational AI, Text Summarization, Content Generation, Language Translation