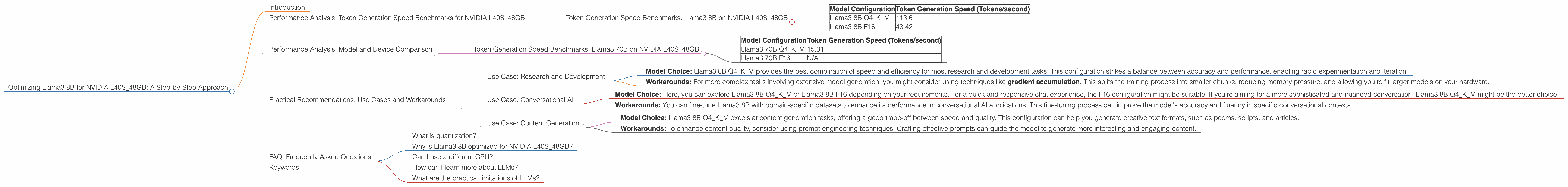

Optimizing Llama3 8B for NVIDIA L40S 48GB: A Step-by-Step Approach

Introduction

The world of large language models (LLMs) is growing rapidly, with new models and advancements emerging constantly. For developers and enthusiasts, running these models locally can be a rewarding experience, allowing for experimentation, customization, and even the creation of unique applications. But getting the most out of LLMs on your own hardware can be a challenge. This article delves into the optimization process for Llama3 8B on the powerful NVIDIA L40S_48GB, a popular choice for demanding AI workloads. Whether you're a seasoned AI developer or just starting your journey, this guide provides practical insights and recommendations to maximize your LLM performance.

Performance Analysis: Token Generation Speed Benchmarks for NVIDIA L40S_48GB

Understanding the speed at which your model generates text is crucial for creating seamless user experiences. This section dives into the token generation speed benchmarks for Llama3 8B on NVIDIA L40S_48GB, revealing how different model configurations impact performance.
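If you want to reproduce a tokens/second figure on your own setup, the measurement itself is simple: time a generation call and divide the number of generated tokens by the elapsed time. The following is a minimal sketch, not the method used to produce the numbers in this article. `fake_generate` is a hypothetical stand-in for whatever inference call you use; swap in your own function with the same shape.

```python
import time
from typing import Callable, List


def measure_tokens_per_second(
    generate: Callable[[str, int], List[str]],
    prompt: str,
    max_new_tokens: int,
) -> float:
    """Time one generation call and return generated tokens per second."""
    start = time.perf_counter()
    tokens = generate(prompt, max_new_tokens)
    elapsed = time.perf_counter() - start
    return len(tokens) / elapsed


def fake_generate(prompt: str, max_new_tokens: int) -> List[str]:
    """Hypothetical stand-in for a real model: emits one token about every 1 ms."""
    out = []
    for i in range(max_new_tokens):
        time.sleep(0.001)  # simulate per-token decode latency
        out.append(f"tok{i}")
    return out


if __name__ == "__main__":
    rate = measure_tokens_per_second(fake_generate, "Hello", 50)
    print(f"{rate:.1f} tokens/second")
```

For a real benchmark you would typically run a warm-up call first and average over several runs, since the first call often includes one-time setup cost.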

Token Generation Speed Benchmarks: Llama3 8B on NVIDIA L40S_48GB

| Model Configuration | Token Generation Speed (tokens/second) |
|---|---|
| Llama3 8B Q4_K_M | 113.6 |
| Llama3 8B F16 | 43.42 |

A quick breakdown:

- Q4_K_M: This is a 4-bit quantization format that stores most weights at roughly 4 bits each, reducing the model's size and memory footprint while maintaining a reasonable level of accuracy. Think of it like compressing an image file; you lose some detail, but the file is much smaller.

- F16: This configuration stores each weight as a 16-bit (half-precision) floating-point number. It serves as the unquantized baseline here: it preserves more precision than Q4_K_M but takes more memory and generates tokens more slowly.

Key Takeaways:

- Quantization is King: Llama3 8B in the Q4_K_M configuration generates tokens about 2.6 times faster than in the F16 configuration (113.6 vs. 43.42 tokens/second). This is largely thanks to the reduced memory footprint: each generated token requires reading far fewer bytes of weights from GPU memory.

- Bigger Isn't Always Better: Although the L40S_48GB has room for larger models, configuration matters as much as capacity. Even with a smaller model, the right configuration can provide impressive results, as the 113.6 tokens/second figure shows.
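The speedup and the memory-footprint argument can be sanity-checked with a few lines of arithmetic. This is a rough sketch under stated assumptions: the parameter count (about 8.03 billion), the average bits per weight for Q4_K_M (about 4.85), and the L40S memory bandwidth (864 GB/s, taken from the published spec) are not from the benchmark data above, so treat them as approximations and verify them for your setup. The bound assumes every generated token reads all weights once and ignores everything else.

```python
# Measured token generation speeds from the table above (tokens/second).
MEASURED_TPS = {"Q4_K_M": 113.6, "F16": 43.42}

# Assumptions (not from the benchmark table):
N_PARAMS = 8.03e9                                # approximate Llama3 8B parameter count
BITS_PER_WEIGHT = {"Q4_K_M": 4.85, "F16": 16.0}  # Q4_K_M value is an approximate average
MEMORY_BANDWIDTH_BYTES_PER_S = 864e9             # published L40S spec; verify for your card


def bandwidth_bound_tps(bits_per_weight: float) -> float:
    """Upper bound on tokens/second if each token reads all weights once."""
    weight_bytes = N_PARAMS * bits_per_weight / 8
    return MEMORY_BANDWIDTH_BYTES_PER_S / weight_bytes


speedup = MEASURED_TPS["Q4_K_M"] / MEASURED_TPS["F16"]

if __name__ == "__main__":
    print(f"Q4_K_M vs F16 speedup: {speedup:.2f}x")
    for name, measured in MEASURED_TPS.items():
        bound = bandwidth_bound_tps(BITS_PER_WEIGHT[name])
        print(f"{name}: measured {measured:.1f}, bandwidth bound {bound:.1f} tokens/second")
```

Under these assumptions, both measured speeds land below their bandwidth bound, which is consistent with token generation being limited mainly by how fast weights can be read from memory.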

Performance Analysis: Model and Device Comparison

It's natural to wonder how Llama3 8B compares to other LLMs and devices. While we're focused on optimizing Llama3 8B on NVIDIA L40S_48GB, it's always helpful to see how this combo stacks up against the competition.

Let's look at the performance of Llama3 70B on the same NVIDIA L40S_48GB.

Token Generation Speed Benchmarks: Llama3 70B on NVIDIA L40S_48GB

| Model Configuration | Token Generation Speed (tokens/second) |
|---|---|
| Llama3 70B Q4_K_M | 15.31 |
| Llama3 70B F16 | N/A |

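Whoa, Nelly! No F16 results for Llama3 70B on the L40S_48GB? Yep, this is what happens when you scale things up! The most likely explanation is memory: at 2 bytes per weight, 70 billion parameters need roughly 140 GB for the weights alone, far more than the card's 48 GB. Running that configuration would require offloading or splitting the model across devices.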
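The memory limit is easy to check with back-of-the-envelope arithmetic: weight memory is roughly the parameter count times the bits per weight, divided by 8. The sketch below counts weights only and ignores the KV cache and runtime overhead, so real usage is higher. The round parameter counts and the 4.85 bits-per-weight figure for Q4_K_M are approximations assumed for illustration.

```python
VRAM_GB = 48.0  # L40S_48GB memory capacity


def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate memory needed for the weights alone, in GB (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


# (parameter count, bits per weight); the Q4_K_M figure is an approximate average.
CONFIGS = {
    "Llama3 8B Q4_K_M": (8e9, 4.85),
    "Llama3 8B F16": (8e9, 16.0),
    "Llama3 70B Q4_K_M": (70e9, 4.85),
    "Llama3 70B F16": (70e9, 16.0),
}

if __name__ == "__main__":
    for name, (n_params, bits) in CONFIGS.items():
        need = weight_memory_gb(n_params, bits)
        verdict = "fits" if need < VRAM_GB else "does not fit"
        print(f"{name}: ~{need:.1f} GB of weights, {verdict} in {VRAM_GB:.0f} GB")
```

By this estimate, only the 70B F16 configuration exceeds the card's capacity, and the 70B Q4_K_M weights leave just a few GB of headroom.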

Key Takeaways:

- Hardware Limitations: While the L40S_48GB is a beast, it has its limits. In these benchmarks Llama3 70B runs on it only in quantized form, and even then generation drops to 15.31 tokens/second.

- Smaller Can Be Faster: In the same Q4_K_M configuration, Llama3 8B generates tokens roughly 7 times faster than Llama3 70B (113.6 vs. 15.31 tokens/second). If the smaller model is accurate enough for your use case, the gain in responsiveness is substantial.

Practical Recommendations: Use Cases and Workarounds

This is where the rubber meets the road! Let's translate the insights we've gained into practical recommendations for optimizing Llama3 8B on NVIDIA L40S_48GB.

Use Case: Research and Development

- Model Choice: Llama3 8B Q4_K_M provides the best combination of speed and efficiency for most research and development tasks. This configuration strikes a balance between accuracy and performance, enabling rapid experimentation and iteration.

- Workarounds: If your work extends to fine-tuning, you might consider using techniques like gradient accumulation. This splits each training batch into smaller micro-batches and sums their gradients before a single weight update, reducing peak memory pressure and allowing a larger effective batch size on your hardware. Note that it applies to training, not to text generation.
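To make gradient accumulation concrete, here is a toy sketch in plain Python. It uses a one-parameter linear model `y = w * x` with mean squared error, which is an illustrative assumption and has nothing to do with Llama3's actual training code. The point it shows is that summing properly scaled micro-batch gradients reproduces the full-batch gradient, so the weight update is the same while only one micro-batch is processed at a time.

```python
from typing import List, Tuple

Sample = Tuple[float, float]  # (x, y)


def mse_grad(w: float, batch: List[Sample]) -> float:
    """Gradient of mean squared error for the model y = w * x over one batch."""
    return sum(2.0 * (w * x - y) * x for x, y in batch) / len(batch)


def accumulated_grad(w: float, data: List[Sample], micro_batch_size: int) -> float:
    """Process the data in micro-batches, accumulating scaled gradients."""
    total = 0.0
    n = len(data)
    for start in range(0, n, micro_batch_size):
        micro = data[start:start + micro_batch_size]
        # Weight each micro-batch by its share of the full batch so the
        # accumulated sum equals the full-batch mean gradient.
        total += mse_grad(w, micro) * len(micro) / n
    return total


# Hypothetical toy data, roughly following y = 2x.
DATA = [(1.0, 2.0), (2.0, 4.1), (3.0, 5.9), (4.0, 8.2), (5.0, 9.8), (6.0, 12.1)]

if __name__ == "__main__":
    w, lr = 0.5, 0.01
    full = mse_grad(w, DATA)
    acc = accumulated_grad(w, DATA, micro_batch_size=2)
    print(f"full-batch gradient:  {full:.6f}")
    print(f"accumulated gradient: {acc:.6f}")
    w -= lr * acc  # one optimizer step after all micro-batches
    print(f"updated w: {w:.6f}")
```

In a deep learning framework the same idea usually appears as several backward passes followed by one optimizer step.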

Use Case: Conversational AI

- Model Choice: Here, you can explore Llama3 8B Q4_K_M or Llama3 8B F16 depending on your requirements. For a quick and responsive chat experience, the Q4_K_M configuration is the natural choice, since it generates tokens about 2.6 times faster. If you want to avoid any loss of precision from quantization and can accept slower responses, Llama3 8B F16 might be the better choice.

- Workarounds: You can fine-tune Llama3 8B with domain-specific datasets to enhance its performance in conversational AI applications. This fine-tuning process can improve the model's accuracy and fluency in specific conversational contexts.
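For chat, the benchmark numbers translate directly into how long a user waits for a complete reply. The sketch below assumes a hypothetical 200-token reply and ignores prompt processing time, so it is a lower bound on real latency rather than a prediction.

```python
# Generation speeds for Llama3 8B from the benchmark table (tokens/second).
SPEEDS = {"Q4_K_M": 113.6, "F16": 43.42}

REPLY_TOKENS = 200  # hypothetical reply length, chosen for illustration


def reply_seconds(n_tokens: int, tokens_per_second: float) -> float:
    """Time to generate n_tokens at a steady generation speed."""
    return n_tokens / tokens_per_second


if __name__ == "__main__":
    for name, tps in SPEEDS.items():
        print(f"{name}: ~{reply_seconds(REPLY_TOKENS, tps):.1f} s for {REPLY_TOKENS} tokens")
```

If you stream tokens to the user as they are generated, perceived latency depends more on the time to the first token than on the total.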

Use Case: Content Generation

- Model Choice: Llama3 8B Q4_K_M excels at content generation tasks, offering a good trade-off between speed and quality. This configuration can help you generate creative text formats, such as poems, scripts, and articles.

- Workarounds: To enhance content quality, consider using prompt engineering techniques. Crafting effective prompts can guide the model to generate more interesting and engaging content.
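One simple way to practice prompt engineering is to build prompts from explicit fields rather than writing them free-form, so you can vary one element at a time and compare outputs. The helper below is a hypothetical illustration; the field names and layout are assumptions, not a format that Llama3 requires.

```python
from typing import List


def build_prompt(task: str, audience: str, tone: str, constraints: List[str]) -> str:
    """Assemble a structured prompt from explicit, independently editable fields."""
    lines = [
        f"Task: {task}",
        f"Audience: {audience}",
        f"Tone: {tone}",
        "Constraints:",
    ]
    lines += [f"- {c}" for c in constraints]
    lines.append("Write the piece now.")
    return "\n".join(lines)


if __name__ == "__main__":
    prompt = build_prompt(
        task="Write a short poem about autumn rain",
        audience="general readers",
        tone="warm and reflective",
        constraints=["at most 8 lines", "no rhyming couplets"],
    )
    print(prompt)
```

The resulting string is what you would pass to the model as the user message or as part of a larger chat template.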

FAQ: Frequently Asked Questions

What is quantization?

Quantization is a process of reducing the precision of numbers used in a neural network model, effectively shrinking its size and lowering its memory footprint. Think of it like converting a high-resolution image to a lower-resolution one; you lose some detail but gain significant space savings.
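To see the idea in miniature, the sketch below quantizes a small block of weights to 4-bit signed integers with one shared scale, then restores them. This is a deliberately simplified scheme for illustration only; it is not the actual Q4_K_M algorithm, which uses a more elaborate block structure. The example weights are made up.

```python
from typing import List, Tuple


def quantize_block(values: List[float], bits: int = 4) -> Tuple[List[int], float]:
    """Symmetric quantization of one block to signed integers with a shared scale."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4 bits
    max_abs = max(abs(v) for v in values)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Round to the nearest integer step and clamp to the representable range.
    q = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return q, scale


def dequantize_block(q: List[int], scale: float) -> List[float]:
    """Restore approximate float values from integers and the shared scale."""
    return [qi * scale for qi in q]


# Made-up weights for illustration.
WEIGHTS = [0.12, -0.53, 0.98, -0.07, 0.33, -0.91, 0.45, 0.0]

if __name__ == "__main__":
    q, scale = quantize_block(WEIGHTS)
    restored = dequantize_block(q, scale)
    max_err = max(abs(a - b) for a, b in zip(WEIGHTS, restored))
    print("quantized:", q)
    print("restored: ", [round(v, 3) for v in restored])
    print(f"max absolute error: {max_err:.4f}")
```

Each weight now needs 4 bits plus a small share of the per-block scale, instead of 16 bits, at the cost of a rounding error of at most half a quantization step.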

Why is Llama3 8B optimized for NVIDIA L40S_48GB?

The L40S_48GB pairs 48 GB of GPU memory with high computational power, so Llama3 8B fits comfortably in both configurations benchmarked here, with memory left over for longer contexts.

Can I use a different GPU?

Yes, you can definitely use a different GPU for running Llama3 8B. Just be aware that your model's performance will depend on the GPU's capabilities.

How can I learn more about LLMs?

There are many resources available online to help you dive deeper into the world of LLMs. Check out the websites of leading research labs, such as OpenAI and Google AI, or explore online communities dedicated to LLMs.

What are the practical limitations of LLMs?

LLMs can be susceptible to biases present in the data they were trained on. Also, they might not always generate entirely coherent or truthful text, making careful evaluation and fact-checking essential.

Keywords

Llama3, 8B, NVIDIA, L40S_48GB, LLM, large language model, token generation speed, quantization, Q4_K_M, F16, performance benchmarks, content generation, conversational AI, research and development, practical recommendations, use cases, workarounds, FAQ, gradient accumulation, prompt engineering.