Optimizing Llama3 8B for NVIDIA 4090 24GB x2: A Step by Step Approach

Introduction

The world of Large Language Models (LLMs) is exploding, and the demand for faster and more efficient models is growing exponentially. While cloud-based solutions are convenient, running LLMs locally offers greater control, privacy, and potentially lower costs. But getting the best performance out of your hardware can be a challenge, especially when dealing with the sheer computational demands of these models.

This article dives into the specifics of optimizing Llama3 8B for the NVIDIA 4090 24GB x2 setup (two cards, 48 GB of VRAM in total). We'll look at token generation benchmarks, compare the 8B and 70B models, and offer practical recommendations for use cases and workarounds. Buckle up, it's going to be a geek-fest!

Performance Analysis: Token Generation Speed Benchmarks

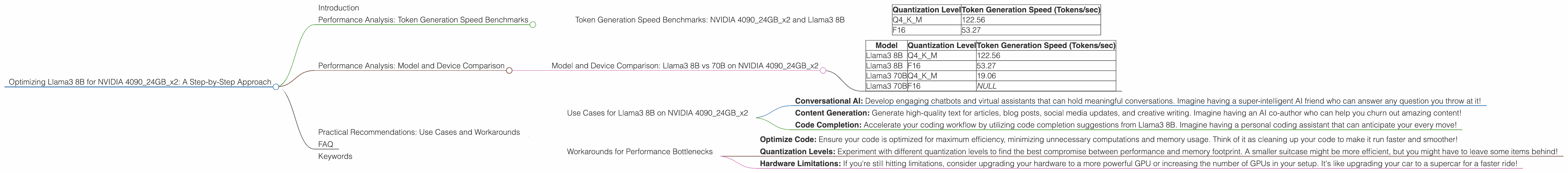

Token Generation Speed Benchmarks: NVIDIA 4090 24GB x2 and Llama3 8B

Let's kick things off by comparing the token generation speed of Llama3 8B at different quantization levels on the NVIDIA 4090 24GB x2.

| Quantization Level | Token Generation Speed (tokens/sec) |
|---|---|
| Q4_K_M | 122.56 |
| F16 | 53.27 |

Key Takeaways:

- Quantization Level Matters: Q4_K_M generates tokens roughly 2.3 times faster than F16 (122.56 vs 53.27 tokens/sec). Q4_K_M stores each weight in far fewer bits, so the model takes up less memory and the GPU has less data to read for every generated token. Think of it like packing a smaller suitcase: there is less to carry, so you move faster!

- The Power of Dual 4090s: More than 120 tokens/sec at Q4_K_M is far faster than anyone can read, which highlights what dedicated GPU hardware can do for local LLM tasks. Think of a race car next to a family sedan: purpose-built hardware wins!
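To make the gap concrete, here is a small sketch that turns the two benchmark figures into a speedup ratio and a wait time. The 500-token response length is a hypothetical value chosen only for illustration; the speeds are the ones from the table.

```python
# Benchmark figures from the table above (tokens/sec).
SPEEDS = {"Q4_K_M": 122.56, "F16": 53.27}


def speedup(fast: float, slow: float) -> float:
    """Return how many times faster `fast` is than `slow`."""
    return fast / slow


def seconds_for(tokens: int, tokens_per_sec: float) -> float:
    """Return the time needed to generate `tokens` at a steady rate."""
    return tokens / tokens_per_sec


if __name__ == "__main__":
    ratio = speedup(SPEEDS["Q4_K_M"], SPEEDS["F16"])
    print(f"Q4_K_M is about {ratio:.2f}x faster than F16")
    for name, tps in SPEEDS.items():
        # 500 tokens is an assumed response length, not a benchmark setting.
        print(f"{name}: {seconds_for(500, tps):.1f} s for 500 tokens")
```

Real generation speed varies with prompt length and context size, so treat the wait times as rough estimates.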

Performance Analysis: Model and Device Comparison

Model and Device Comparison: Llama3 8B vs 70B on NVIDIA 4090 24GB x2

Now, let's compare the token generation speeds of Llama3 8B and Llama3 70B on the same NVIDIA 4090 24GB x2 setup.

| Model | Quantization Level | Token Generation Speed (tokens/sec) |
|---|---|---|
| Llama3 8B | Q4_K_M | 122.56 |
| Llama3 8B | F16 | 53.27 |
| Llama3 70B | Q4_K_M | 19.06 |
| Llama3 70B | F16 | N/A |

Key Takeaways:

- Size Matters: As expected, the larger Llama3 70B model is much slower. At Q4_K_M it generates 19.06 tokens/sec versus 122.56 for Llama3 8B, roughly 6.4 times slower. With close to nine times as many parameters, every token requires far more computation and memory traffic. Think of it as carrying a backpack full of books versus a small backpack filled with snacks: more books take more effort!

- F16 Performance Missing: There is no F16 result for Llama3 70B. The most likely reason is memory: at 16 bits (2 bytes) per weight, 70 billion parameters need about 140 GB for the weights alone, far more than the 48 GB of VRAM that two 4090s provide. It's like trying to fit all your suitcases into a small car: sometimes it's just not possible!
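The memory argument can be checked with a rough weight-only estimate. This is a sketch under stated assumptions, not a measurement: the parameter counts are the nominal 8 and 70 billion, the 4.85 bits per weight for Q4_K_M is an approximate average, and the KV cache and activations are ignored.

```python
# All constants below are assumptions for illustration.
PARAMS = {"Llama3 8B": 8e9, "Llama3 70B": 70e9}      # nominal parameter counts
BITS_PER_WEIGHT = {"Q4_K_M": 4.85, "F16": 16.0}      # Q4_K_M value is approximate
TOTAL_VRAM_GB = 2 * 24                               # two 24 GB cards


def weight_gb(n_params: float, bits: float) -> float:
    """Approximate size of the weights in GB (10^9 bytes)."""
    return n_params * bits / 8 / 1e9


def fits(n_params: float, bits: float, vram_gb: float = TOTAL_VRAM_GB) -> bool:
    """True if the weights alone fit in the given amount of VRAM."""
    return weight_gb(n_params, bits) <= vram_gb


if __name__ == "__main__":
    for model, n in PARAMS.items():
        for quant, bits in BITS_PER_WEIGHT.items():
            verdict = "fits" if fits(n, bits) else "does not fit"
            print(f"{model} {quant}: ~{weight_gb(n, bits):.1f} GB, {verdict} in {TOTAL_VRAM_GB} GB")
```

Under these assumptions, only the 70B model at F16 fails to fit, which matches the one missing cell in the table. A real deployment also needs room for the KV cache, so a configuration that fits on paper may still be tight in practice.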

Practical Recommendations: Use Cases and Workarounds

Use Cases for Llama3 8B on NVIDIA 4090 24GB x2

Given the impressive results shown by Llama3 8B on this setup, it's well-suited for various use cases, including:

- Conversational AI: Develop engaging chatbots and virtual assistants that can hold meaningful conversations. Imagine having a super-intelligent AI friend who can answer any question you throw at it!

- Content Generation: Generate high-quality text for articles, blog posts, social media updates, and creative writing. Imagine having an AI co-author who can help you churn out amazing content!

- Code Completion: Accelerate your coding workflow by utilizing code completion suggestions from Llama3 8B. Imagine having a personal coding assistant that can anticipate your every move!

Workarounds for Performance Bottlenecks

While the NVIDIA 4090 24GB x2 setup is powerful, you might still encounter performance bottlenecks. Here are some workarounds:

- Optimize Code: Ensure your code is optimized for maximum efficiency, minimizing unnecessary computations and memory usage. Think of it as cleaning up your code to make it run faster and smoother!

- Quantization Levels: Experiment with different quantization levels to find the best compromise between speed, memory footprint, and output quality. A smaller suitcase might be more efficient, but you might have to leave some items behind!

- Hardware Limitations: If you're still hitting limitations, consider upgrading your hardware to a more powerful GPU or increasing the number of GPUs in your setup. It's like upgrading your car to a supercar for a faster ride!
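When experimenting with the workarounds above, it helps to measure tokens/sec the same way each time. The sketch below is a minimal timing harness. `fake_generate` is a hypothetical stand-in for whatever streaming call your inference library provides, so swap in your own generator to measure a real model.

```python
import time
from typing import Callable, Iterable


def tokens_per_second(generate: Callable[[], Iterable[str]]) -> float:
    """Run `generate`, count the tokens it yields, and return tokens/sec."""
    start = time.perf_counter()
    count = sum(1 for _ in generate())
    elapsed = time.perf_counter() - start
    return count / elapsed if elapsed > 0 else float("inf")


def fake_generate(n_tokens: int = 50, delay_s: float = 0.001):
    """Hypothetical stand-in for a model's streaming generation call."""
    for i in range(n_tokens):
        time.sleep(delay_s)  # simulates the per-token latency of a real model
        yield f"tok{i}"


if __name__ == "__main__":
    print(f"{tokens_per_second(fake_generate):.1f} tokens/sec")
```

Run the same prompt and output length for every configuration you compare, since both affect the measured rate.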

FAQ

Q: What is quantization and how does it impact performance?

A: Quantization is a technique that shrinks a model by storing its weights with fewer bits. Imagine painting a picture with a smaller palette of colors: the file gets smaller without losing too much detail. Quantization can significantly reduce model size and increase speed, but it can also cost some accuracy. Finding the right balance is key!
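As a toy illustration of the idea, the sketch below quantizes one small block of weights to 4-bit integers with a single shared scale and then restores them. This is a simplified symmetric scheme chosen for clarity, not the actual layout used by any real quantization format, and the weight values are made up.

```python
def quantize_4bit(block):
    """Map floats to integers in [-8, 7] using one shared scale per block."""
    max_abs = max(abs(x) for x in block)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    q = [max(-8, min(7, round(x / scale))) for x in block]
    return q, scale


def dequantize(q, scale):
    """Restore approximate float values from the 4-bit integers."""
    return [v * scale for v in q]


# Made-up example weights for illustration only.
weights = [0.12, -0.50, 0.33, 0.07, -0.21, 0.49, -0.02, 0.28]
q, scale = quantize_4bit(weights)
restored = dequantize(q, scale)
max_err = max(abs(a - b) for a, b in zip(weights, restored))

if __name__ == "__main__":
    print("quantized:", q)
    print("restored: ", [round(v, 3) for v in restored])
    print("max error:", round(max_err, 4))
```

Each weight now needs 4 bits plus a share of the scale, and the rounding error per weight is at most half the scale. That small loss of precision is the accuracy trade-off described above.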

Q: What are Q4_K_M and F16?

A: They are two formats for storing the model's weights. F16 is 16-bit floating point, the unquantized half-precision baseline. Q4_K_M is a 4-bit quantization format from the llama.cpp family that needs a little over 4 bits per weight on average, so the model is much smaller and, in the benchmarks above, generates tokens more than twice as fast, at some cost in accuracy. Think of them as different levels of detail: Q4_K_M is like a compressed image, and F16 is like the full-resolution original.

Q: Is Llama3 8B always faster than Llama3 70B?

A: In raw token generation speed on the same hardware and at the same quantization level, yes: in the benchmarks above, Llama3 8B at Q4_K_M is roughly 6.4 times faster than Llama3 70B. But speed is not the whole story. The larger model may produce better answers on demanding tasks, so which one serves you best depends on the task, the hardware, and the quantization level. It's like comparing apples and oranges: one might be better for a specific purpose, but you need to select the right one for your needs!

Keywords

Llama3 8B, NVIDIA 4090 24GB x2, LLM, Token Generation, Performance, Quantization, Q4_K_M, F16, Use Cases, Workarounds, Conversational AI, Content Generation, Code Completion, Local Inference, GPU, Optimization