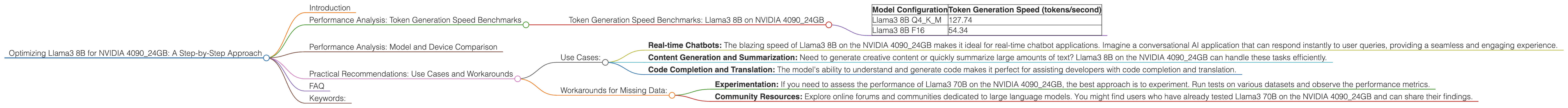

Optimizing Llama3 8B for NVIDIA 4090 24GB: A Step by Step Approach

Introduction

The world of large language models (LLMs) is buzzing with excitement, and for good reason. These AI marvels are capable of generating human-like text, translating languages, writing different kinds of creative content, and answering your questions in an informative way. But with great power comes the need for serious horsepower – especially when it comes to running these models locally.

This article dives deep into the performance of the Llama3 8B model on the mighty NVIDIA 4090_24GB GPU. We'll dissect token generation speeds, explore different quantization strategies, and provide practical recommendations for maximizing your Llama3 experience on this powerhouse of a card. Whether you're a seasoned developer or just starting out, this guide will equip you with the knowledge to unleash the full potential of Llama3 on your NVIDIA 4090_24GB.

Performance Analysis: Token Generation Speed Benchmarks

Token Generation Speed Benchmarks: Llama3 8B on NVIDIA 4090_24GB

Let's cut to the chase. How fast can Llama3 8B generate text on the NVIDIA 4090_24GB? We're talking about tokens per second, the measure of how quickly the model churns out words. And the results are impressive:

| Model Configuration | Token Generation Speed (tokens/second) |
|---|---|
| Llama3 8B Q4_K_M | 127.74 |
| Llama3 8B F16 | 54.34 |

Whoa, those are some serious speeds! Imagine a conversation with a super-powered chatbot.

Let's break down what's happening:

- Q4_K_M: This refers to quantization, a technique that reduces the size of the model (think of it as a diet for your LLM). Q4_K_M is a specific type of quantization that stores the weights of the model at roughly 4 bits each. This leads to a much smaller model size but potentially a slight decrease in accuracy.

- F16: This uses half-precision (16-bit) floating-point numbers, so the weights take several times more space than with Q4_K_M but only half as much as full 32-bit precision. This offers a balance between accuracy and model size.
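To make the size difference between these formats concrete, here is a minimal back-of-the-envelope sketch. It assumes "8B" means exactly 8 billion parameters and uses about 4.8 bits per weight for Q4_K_M, which is an assumed average rather than a figure from the benchmark data. It counts weights only and ignores the extra memory needed for the context.

```python
def weight_size_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in gigabytes (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


# Assumption: "8B" rounded to exactly 8 billion parameters.
N_PARAMS = 8e9

# Assumption: bits per weight for each format. The Q4_K_M value is an
# approximate average, since that format mixes several block types.
FORMATS = {
    "F32": 32.0,
    "F16": 16.0,
    "Q4_K_M (approx.)": 4.8,
}

for name, bits in FORMATS.items():
    print(f"{name:>18}: {weight_size_gb(N_PARAMS, bits):5.1f} GB of weights")
```

Under these assumptions, the F16 weights already take up most of a 24 GB card, while the Q4_K_M weights leave plenty of headroom.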

Key takeaway: Q4_K_M quantization on the NVIDIA 4090_24GB provides a significant speed boost compared to F16. You can generate text over twice as fast with Q4_K_M (127.74 vs. 54.34 tokens/second).
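The arithmetic behind that takeaway can be sketched in a few lines. The two throughput figures come from the table above; the 500-token reply length is just an illustrative assumption.

```python
# Tokens per second, taken from the benchmark table above.
Q4_K_M_TPS = 127.74
F16_TPS = 54.34


def speedup(fast_tps: float, slow_tps: float) -> float:
    """How many times faster the first configuration is than the second."""
    return fast_tps / slow_tps


def ms_per_token(tps: float) -> float:
    """Average time spent on one token, in milliseconds."""
    return 1000.0 / tps


def seconds_for(n_tokens: int, tps: float) -> float:
    """Time to generate n_tokens at a steady rate of tps tokens/second."""
    return n_tokens / tps


REPLY_TOKENS = 500  # assumption: an illustrative long-ish reply

print(f"Speedup: {speedup(Q4_K_M_TPS, F16_TPS):.2f}x")
for name, tps in [("Q4_K_M", Q4_K_M_TPS), ("F16", F16_TPS)]:
    print(f"{name}: {ms_per_token(tps):.1f} ms/token, "
          f"{seconds_for(REPLY_TOKENS, tps):.1f} s for {REPLY_TOKENS} tokens")
```

The ratio works out to roughly 2.35x, which is where "over twice as fast" comes from.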

Performance Analysis: Model and Device Comparison

It would be helpful to see how Llama3 8B on the NVIDIA 4090_24GB compares to other configurations, such as Llama3 70B on the same card. Unfortunately, this is where we hit a snag: we lack data on the performance of Llama3 70B on the NVIDIA 4090_24GB, and we have no figures from other devices to bring in here. This limits our ability to provide a comprehensive comparison.

Practical Recommendations: Use Cases and Workarounds

Use Cases:

- Real-time Chatbots: The blazing speed of Llama3 8B on the NVIDIA 4090_24GB makes it ideal for real-time chatbot applications. Imagine a conversational AI application that can respond instantly to user queries, providing a seamless and engaging experience.

- Content Generation and Summarization: Need to generate creative content or quickly summarize large amounts of text? Llama3 8B on the NVIDIA 4090_24GB can handle these tasks efficiently.

- Code Completion and Translation: The model's ability to understand and generate code makes it perfect for assisting developers with code completion and translation.

Workarounds for Missing Data:

- Experimentation: If you need to assess the performance of Llama3 70B on the NVIDIA 4090_24GB, the best approach is to experiment. Run tests on various datasets and observe the performance metrics.

- Community Resources: Explore online forums and communities dedicated to large language models. You might find users who have already tested Llama3 70B on the NVIDIA 4090_24GB and can share their findings.
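If you go the experimentation route, a small timing harness is enough to get your own tokens-per-second numbers. The sketch below is only an illustration: `measure_tokens_per_second` and `fake_generate` are hypothetical names, and `fake_generate` is a stand-in that sleeps instead of running a model. To measure a real setup, you would swap in a function that calls your inference backend and returns the generated tokens.

```python
import time
from typing import Callable, List


def measure_tokens_per_second(
    generate: Callable[[str, int], List[str]],
    prompt: str,
    max_tokens: int,
    runs: int = 3,
) -> float:
    """Average generation throughput over several timed runs."""
    generate(prompt, max_tokens)  # warm-up run, not timed
    total_tokens = 0
    total_seconds = 0.0
    for _ in range(runs):
        start = time.perf_counter()
        tokens = generate(prompt, max_tokens)
        total_seconds += time.perf_counter() - start
        total_tokens += len(tokens)  # count what was actually produced
    return total_tokens / total_seconds


def fake_generate(prompt: str, max_tokens: int) -> List[str]:
    """Hypothetical stand-in for a model: pretends each token takes 1 ms."""
    time.sleep(0.001 * max_tokens)
    return ["tok"] * max_tokens


tps = measure_tokens_per_second(fake_generate, "Hello", max_tokens=20)
print(f"Measured throughput: {tps:.1f} tokens/second")
```

Averaging over several runs and discarding a warm-up pass helps smooth out one-off costs such as model loading, which would otherwise drag the number down.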

FAQ

Q: What is quantization?

A: Quantization is a technique used to reduce the size of a large language model. It's like compressing a file – you're making it smaller but potentially sacrificing some accuracy, depending on the quantization method.
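As a rough illustration of the idea, the sketch below squeezes a small block of weights into 4-bit integers with one shared scale, then restores them to see how much precision was lost. This is a deliberately simplified toy scheme, not the actual Q4_K_M layout, and the weight values are made up for the example.

```python
from typing import List, Tuple


def quantize_block(weights: List[float]) -> Tuple[float, List[int]]:
    """Map floats to 4-bit signed integers in [-8, 7] plus one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    quantized = [max(-8, min(7, round(w / scale))) for w in weights]
    return scale, quantized


def dequantize_block(scale: float, quantized: List[int]) -> List[float]:
    """Recover approximate floats from the 4-bit integers."""
    return [scale * q for q in quantized]


# Made-up example weights, purely for illustration.
weights = [0.12, -0.53, 0.07, 0.91, -0.33, 0.48, -0.02, 0.25]

scale, quantized = quantize_block(weights)
restored = dequantize_block(scale, quantized)
max_error = max(abs(w, ) if False else abs(w - r) for w, r in zip(weights, restored))

print("4-bit values:", quantized)
print(f"Largest rounding error: {max_error:.4f}")
```

Each weight now needs only 4 bits instead of 16 or 32, and the price is the small rounding error printed at the end.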

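Q: Why is Q4_K_M faster than F16?

A: Q4_K_M uses fewer bits to represent each weight, so the model has a smaller memory footprint and the GPU has far less data to read from memory for every generated token. Because token generation tends to be limited by memory bandwidth rather than raw compute, moving less data translates into more tokens per second.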

Q: What other LLM models can I run on the NVIDIA 4090_24GB?

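A: The NVIDIA 4090_24GB is quite a monster, so you can run a variety of LLMs, as long as the model's weights (plus working memory for the context) fit in its 24 GB of VRAM. Quantization widens that range. However, the performance will vary from model to model. Experimentation is key!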

Q: How can I install and run Llama3 on my NVIDIA 4090_24GB?

A: You can find detailed instructions and resources online. Search for guides on running Llama3 using tools like llama.cpp.

Keywords:

Llama3, NVIDIA 4090_24GB, LLM, GPU, Token Generation Speed, Quantization, Q4_K_M, F16, Performance, Benchmark, Chatbot, Content Generation, Summarization, Code Completion, Workarounds