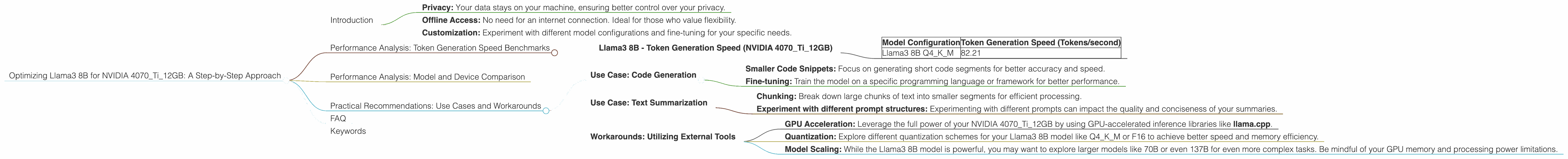

Optimizing Llama3 8B for NVIDIA 4070 Ti 12GB: A Step-by-Step Approach

Introduction

Welcome, fellow AI enthusiasts! Today we're looking at local Large Language Models (LLMs) and how they perform on the NVIDIA 4070 Ti 12GB. This post focuses on optimizing the Llama3 8B model for this specific graphics card, analyzing its token generation speed, and offering practical recommendations for getting the most out of it.

You might be thinking, "Why bother with local LLMs?" Well, let's break down some key benefits:

- Privacy: Your data stays on your machine, ensuring better control over your privacy.

- Offline Access: No need for an internet connection. Ideal for those who value flexibility.

- Customization: Experiment with different model configurations and fine-tuning for your specific needs.

So, if you're looking to run Llama3 8B on your NVIDIA 4070 Ti 12GB, buckle up! We'll walk through benchmarks, optimization techniques, and practical use cases.

Performance Analysis: Token Generation Speed Benchmarks

The first step in optimizing Llama3 8B for your NVIDIA 4070 Ti 12GB is understanding its performance. We'll use token generation speed as the key metric: how many tokens (words or sub-word pieces) the model can generate per second.

Llama3 8B - Token Generation Speed (NVIDIA 4070 Ti 12GB)

| Model Configuration | Token Generation Speed (tokens/second) |
|---|---|
| Llama3 8B Q4_K_M | 82.21 |

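Note: There's no data available for other configurations (such as F16) for this specific model and device combination. An F16 copy of an 8B model needs roughly 16 GB for the weights alone, which is more than this card's 12 GB of VRAM.

This data tells us that the Llama3 8B model with Q4_K_M quantization can generate 82.21 tokens per second on the NVIDIA 4070 Ti 12GB. That is a decent speed for an 8B model, and far faster than anyone can read the output as it streams.

Let's make an analogy: Imagine reading a book where each word is roughly one token (in practice, longer words are often split into several tokens). At this rate, the model writes about 82 words per second!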
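
To make the 82.21 tokens/second figure more tangible, here is a minimal sketch that turns it into a rough wait time for a response of a given length. The function name `generation_time_seconds` is made up for this illustration, and the estimate assumes a steady generation rate and ignores prompt processing time, so treat the numbers as ballpark figures only.

```python
def generation_time_seconds(num_tokens: int, tokens_per_second: float = 82.21) -> float:
    """Estimate how long generating num_tokens takes at a steady rate.

    The default rate is the Q4_K_M benchmark figure from the table above.
    Prompt processing time is NOT included (simplifying assumption).
    """
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return num_tokens / tokens_per_second


if __name__ == "__main__":
    # Rough wait times for a short answer, a long answer, and a long document.
    for n in (100, 500, 2000):
        print(f"{n} tokens: ~{generation_time_seconds(n):.1f} s")
```

By this estimate, a 500-token answer takes roughly six seconds of generation time.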

Performance Analysis: Model and Device Comparison

Ideally, we would now compare the performance of Llama3 8B on the NVIDIA 4070 Ti 12GB with other models and devices to get a better sense of its strengths and limitations.

Unfortunately, we don't have data for other models or devices to compare against, so that comparison has to come from external benchmark sources.

Practical Recommendations: Use Cases and Workarounds

So, how can you make the most of the Llama3 8B model and your NVIDIA 4070 Ti 12GB? Here are some practical recommendations:

Use Case: Code Generation

An 8B model at Q4_K_M will not match larger models on complex programming tasks, but it can still generate code snippets and help complete simple tasks.

Tips:

- Smaller Code Snippets: Focus on generating short code segments for better accuracy and speed.

- Fine-tuning: Train the model on a specific programming language or framework for better performance.

Use Case: Text Summarization

For tasks like summarizing articles or documents, the Llama3 8B Q4_K_M configuration is a decent choice.

Tips:

- Chunking: Break long documents into smaller segments that fit comfortably in the model's context window (8K tokens for the original Llama3 release), summarize each segment, then combine the partial summaries.

- Prompt structure: Try different prompt wordings, since the prompt affects both the quality and the conciseness of your summaries.
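
The chunking tip can be sketched roughly as follows. This is only an illustration: the helper `chunk_words` and its defaults are made up for this post, and it counts whitespace-separated words as a stand-in for tokens, which is an approximation (a real tokenizer usually produces more tokens than words). The small overlap between chunks is one common way to avoid cutting a thought in half at a boundary.

```python
def chunk_words(text: str, max_words: int = 300, overlap: int = 30) -> list[str]:
    """Split text into word-based chunks with a small overlap.

    Words are only a rough proxy for tokens (assumption for this sketch).
    """
    if max_words <= 0:
        raise ValueError("max_words must be positive")
    if not 0 <= overlap < max_words:
        raise ValueError("overlap must be >= 0 and smaller than max_words")

    words = text.split()
    step = max_words - overlap  # how far the window advances each time
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + max_words]))
        # Stop once the current window already reaches the end of the text.
        if start + max_words >= len(words):
            break
    return chunks


if __name__ == "__main__":
    sample = " ".join(f"word{i}" for i in range(10))
    for i, chunk in enumerate(chunk_words(sample, max_words=4, overlap=1)):
        print(i, chunk)
```

Each chunk can then be summarized on its own, and the partial summaries combined in a final pass.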

Workarounds: Utilizing External Tools

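- GPU Acceleration: Leverage the full power of your NVIDIA 4070 Ti 12GB by using a GPU-accelerated inference library such as llama.cpp built with CUDA support, and offload all model layers to the GPU (the `-ngl` / `--n-gpu-layers` option).

- Quantization: Explore different quantization levels for Llama3 8B. Q4_K_M (roughly 4 to 5 bits per weight) trades a small amount of accuracy for much lower memory use, while F16 is the unquantized half-precision baseline, whose 8B weights need roughly 16 GB and do not fit in 12 GB of VRAM.

- Model Scaling: Llama3 8B handles many tasks well, but more complex tasks may benefit from the larger Llama3 70B model. Be mindful of your GPU memory: even at 4-bit quantization, 70B weights need roughly 40 GB, far more than 12 GB of VRAM, so most layers would have to run from system RAM at a much lower speed.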
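
Before downloading a model, it can help to do the memory arithmetic. The sketch below is a back-of-the-envelope estimate, not a measurement: the bits-per-weight value for Q4_K_M (4.85) is an approximation, the parameter counts are nominal (8 and 70 billion), and the 2 GB of headroom for the KV cache and runtime buffers is an assumption chosen for illustration. The function names are hypothetical.

```python
# Approximate storage cost per weight for each format.
# F16 is exactly 16 bits; the Q4_K_M value is an approximation (assumption).
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.85}


def weight_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Memory needed for the weights alone, in GB (10^9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


def fits_in_vram(num_params: float, bits_per_weight: float,
                 vram_gb: float = 12.0, headroom_gb: float = 2.0) -> bool:
    """Rough check: weights plus an assumed headroom must fit in VRAM."""
    return weight_memory_gb(num_params, bits_per_weight) + headroom_gb <= vram_gb


if __name__ == "__main__":
    for name, params in (("8B", 8e9), ("70B", 70e9)):
        for fmt, bpw in BITS_PER_WEIGHT.items():
            gb = weight_memory_gb(params, bpw)
            verdict = "fits" if fits_in_vram(params, bpw) else "does not fit"
            print(f"Llama3 {name} {fmt}: ~{gb:.1f} GB of weights, {verdict} in 12 GB")
```

Under these assumptions, only the 8B Q4_K_M configuration fits entirely on the card, which matches the one configuration with benchmark data above.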

FAQ

Q: What is quantization?

A: Quantization is a technique that shrinks a model by converting values (like weights) from high-precision floating-point numbers to lower-precision integers. This reduces memory usage and usually speeds up inference, at the cost of a small loss in accuracy.
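
To see the idea in miniature, here is a toy sketch of symmetric 4-bit quantization on a handful of weights. It is deliberately simplified and is not how Q4_K_M works internally (real schemes quantize weights in small blocks, each with its own scale); the function names are made up for this example.

```python
def quantize_symmetric(weights: list[float], bits: int = 4) -> tuple[list[int], float]:
    """Map floats to signed integers in [-qmax, qmax] using one shared scale."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit
    max_abs = max((abs(w) for w in weights), default=0.0)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Round to the nearest integer step and clamp to the representable range.
    quantized = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized: list[int], scale: float) -> list[float]:
    """Recover approximate float values from the integers and the scale."""
    return [q * scale for q in quantized]


if __name__ == "__main__":
    original = [1.4, -0.6, 0.0, 0.2]
    q, scale = quantize_symmetric(original, bits=4)
    restored = dequantize(q, scale)
    print("integers:", q)
    print("restored:", [round(r, 3) for r in restored])
```

Each weight is now stored as a small integer plus one shared scale, and the restored values differ from the originals by at most half a quantization step.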

Q: What are the benefits of using local LLMs?

A: Local LLMs offer privacy, offline access, and customization, allowing you to control your data and experiment with different configurations.

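Q: What other models work well with the NVIDIA 4070 Ti 12GB?

A: The NVIDIA 4070 Ti 12GB is a capable card. As a rule of thumb, look for a model size and quantization scheme whose weights, plus some room for context, fit within the 12 GB of VRAM, and check published benchmarks for that specific combination.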

Keywords

Llama3, 8B, NVIDIA, 4070 Ti 12GB, GPU, performance, token generation speed, benchmarks, quantization, Q4_K_M, F16, code generation, text summarization, use cases, workarounds, inference, local LLMs, large language models, AI, optimization, deep dive.