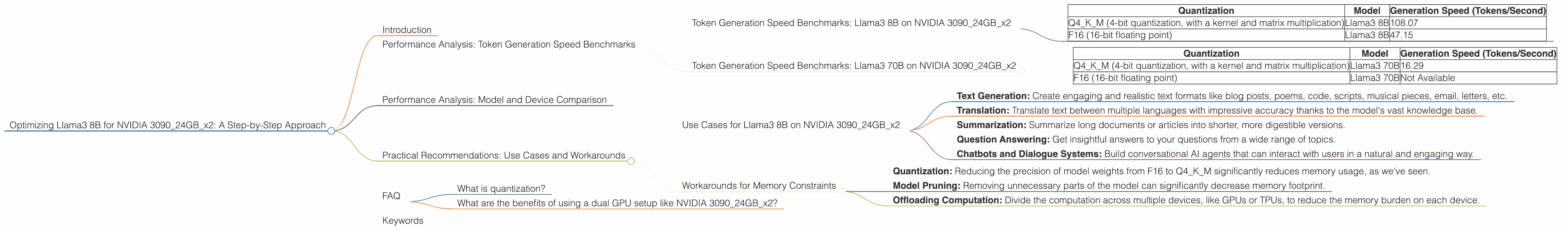

Optimizing Llama3 8B for NVIDIA 3090 24GB x2: A Step-by-Step Approach

Introduction

Large language models (LLMs) can generate text, translate between languages, and answer questions, but running them locally on your own hardware can be a challenge, especially for larger models. In this article, we'll look at running the Llama3 8B model on a dual NVIDIA 3090 24GB setup, a strong combination for local LLM work. We'll analyze benchmark results, compare 4-bit quantization with 16-bit floating point, and provide practical recommendations for getting the most out of your hardware.

Performance Analysis: Token Generation Speed Benchmarks

Token generation speed is a key metric for evaluating the performance of LLMs. It measures how quickly a model can generate new tokens, which are the building blocks of text. Let's dive into the numbers!

Token Generation Speed Benchmarks: Llama3 8B on NVIDIA 3090 24GB x2

| Quantization | Model | Generation Speed (Tokens/Second) |
|---|---|---|
| Q4_K_M (4-bit k-quant, medium variant) | Llama3 8B | 108.07 |
| F16 (16-bit floating point, unquantized) | Llama3 8B | 47.15 |

As you can see, the 4-bit configuration generates tokens about 2.3 times faster than F16 (108.07 vs. 47.15 tokens/second). Quantization shrinks the weights, so each generated token requires reading less data from GPU memory. That reduces the memory footprint and raises generation speed at the same time.
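The ratio between the two rows can be checked with a few lines of Python. This is only arithmetic on the figures from the table; the dictionary and function names are made up for illustration.

```python
# Tokens per second for Llama3 8B, copied from the table above.
BENCHMARKS_8B = {"Q4_K_M": 108.07, "F16": 47.15}


def speedup(fast: float, slow: float) -> float:
    """Return how many times faster `fast` is than `slow`."""
    return fast / slow


ratio = speedup(BENCHMARKS_8B["Q4_K_M"], BENCHMARKS_8B["F16"])
print(f"4-bit is {ratio:.2f}x faster than F16")  # prints 2.29x
```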

Token Generation Speed Benchmarks: Llama3 70B on NVIDIA 3090 24GB x2

| Quantization | Model | Generation Speed (Tokens/Second) |
|---|---|---|
| Q4_K_M (4-bit k-quant, medium variant) | Llama3 70B | 16.29 |
| F16 (16-bit floating point, unquantized) | Llama3 70B | Not Available |

Note: We don't have F16 data for the Llama3 70B model. At 16 bits (2 bytes) per weight, 70 billion parameters need roughly 140 GB for the weights alone, far more than the 48 GB of combined VRAM on two 3090s.
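A rough way to see which configurations can fit is to multiply the parameter count by the bits stored per weight. The sketch below does that under stated assumptions: the parameter counts are the nominal 8B and 70B figures, and 4.85 bits per weight for the 4-bit format is an approximate average, not a measured value. It counts weights only, so the KV cache and runtime buffers come on top.

```python
def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate memory for the weights alone, in GB (10^9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


TOTAL_VRAM_GB = 2 * 24  # two 24 GB cards

MODELS = [("Llama3 8B", 8e9), ("Llama3 70B", 70e9)]  # nominal sizes
FORMATS = [("F16", 16.0), ("Q4_K_M", 4.85)]          # 4.85 is an assumed average

for model, n_params in MODELS:
    for fmt, bits in FORMATS:
        need = weight_memory_gb(n_params, bits)
        verdict = "fits" if need < TOTAL_VRAM_GB else "does not fit"
        print(f"{model} {fmt}: ~{need:.1f} GB of weights, {verdict} in {TOTAL_VRAM_GB} GB")
```

Under these assumptions only the 70B F16 case exceeds the 48 GB budget, which matches the missing row in the table.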

The results show that, at the same 4-bit quantization, the Llama3 8B model generates tokens about 6.6 times faster than the Llama3 70B model (108.07 vs. 16.29 tokens/second). This is a good reminder that larger models (like the 70B) are more resource-intensive, and while they have the potential for greater linguistic capabilities, they might not always be the best choice for your specific setup and use case.
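To make the gap concrete, the benchmark figures can be turned into an estimated wait time for one response. This is a minimal sketch: the 500-token response length is a hypothetical value, and the estimate ignores prompt processing time, which the tables above do not cover.

```python
# Generation speeds in tokens per second, copied from the tables above.
TOKENS_PER_SECOND = {
    ("Llama3 8B", "4-bit"): 108.07,
    ("Llama3 8B", "F16"): 47.15,
    ("Llama3 70B", "4-bit"): 16.29,
}


def generation_seconds(n_tokens: int, tokens_per_second: float) -> float:
    """Estimate the time needed to generate `n_tokens` at a steady rate."""
    return n_tokens / tokens_per_second


RESPONSE_TOKENS = 500  # hypothetical response length

for (model, fmt), speed in TOKENS_PER_SECOND.items():
    seconds = generation_seconds(RESPONSE_TOKENS, speed)
    print(f"{model} {fmt}: about {seconds:.1f} s for {RESPONSE_TOKENS} tokens")
```

With these numbers, a 500-token answer takes roughly 4.6 seconds on the 4-bit 8B model, 10.6 seconds on the F16 8B model, and 30.7 seconds on the 4-bit 70B model.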

Performance Analysis: Model and Device Comparison

This article focuses on the Llama3 8B model on the dual NVIDIA 3090 24GB setup, so benchmarks for other devices are not included here.

The one cross-model reference point is the Llama3 70B result above: on the same hardware and at the same 4-bit quantization, the 8B model reaches 108.07 tokens/second against 16.29 tokens/second for the 70B model.

Practical Recommendations: Use Cases and Workarounds

Use Cases for Llama3 8B on NVIDIA 3090 24GB x2

The dual NVIDIA 3090 24GB setup is a powerhouse for running the Llama3 8B model. Let's explore some interesting use cases:

- Text Generation: Draft text in many formats, such as blog posts, poems, code, scripts, musical pieces, emails, and letters.

- Translation: Translate text between languages. Quality varies by language pair, so review the output for the languages you care about.

- Summarization: Summarize long documents or articles into shorter, more digestible versions.

- Question Answering: Get insightful answers to your questions from a wide range of topics.

- Chatbots and Dialogue Systems: Build conversational AI agents that can interact with users in a natural and engaging way.

Workarounds for Memory Constraints

While the dual NVIDIA 3090 24GB setup offers impressive performance, its 48 GB of combined VRAM can still be insufficient for larger LLMs. Here are some workarounds for tackling memory constraints:

- Quantization: Reducing the precision of model weights from 16 bits (F16) to roughly 4 to 5 bits per weight cuts the memory needed for the weights to about a third.

- Model Pruning: Removing parts of the model that contribute little to its output can decrease the memory footprint, usually at some cost in accuracy.

- Offloading Computation: Divide the model's layers across multiple devices, like the two GPUs in this setup, so that no single device has to hold the whole model.
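The last workaround can be pictured as a simple planning step: decide how many layers each device holds, in proportion to its memory. The sketch below is an illustration only. `split_layers` is a hypothetical helper, not part of any inference library, and real runtimes make this decision themselves. The layer counts used in the example (32 for Llama3 8B, 80 for Llama3 70B) are the published transformer block counts for those models.

```python
def split_layers(n_layers: int, vram_gb: list[float]) -> list[int]:
    """Assign a layer count to each device in proportion to its memory."""
    total = sum(vram_gb)
    # Round each share down first so the plan never exceeds n_layers.
    counts = [int(n_layers * v / total) for v in vram_gb]
    # Hand out the layers lost to rounding, one at a time, starting at device 0.
    for i in range(n_layers - sum(counts)):
        counts[i % len(counts)] += 1
    return counts


GPUS_GB = [24.0, 24.0]  # two 24 GB cards

print(split_layers(32, GPUS_GB))  # Llama3 8B:  [16, 16]
print(split_layers(80, GPUS_GB))  # Llama3 70B: [40, 40]
```

With two identical cards the split is even. Uneven memory sizes would shift more layers to the larger device.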

FAQ

What is quantization?

Quantization is a technique used to reduce the size of a model by converting its weights from high-precision floating point numbers to lower-precision integers. Imagine a model with a weight of 3.14159. Quantization might convert this weight to 3, reducing the amount of memory required to store it. Real schemes also store a shared scale factor for each block of weights, which keeps the rounding error much smaller than this toy example suggests. This trade-off between accuracy and storage is crucial for running large LLMs.
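The idea can be shown with a toy example. The sketch below uses plain symmetric round-to-nearest quantization with one scale per block; it is a simplified illustration, not the scheme that any particular 4-bit model file format uses. The function names and the sample weights are made up.

```python
def quantize_block(weights: list[float], bits: int = 4) -> tuple[list[int], float]:
    """Symmetric round-to-nearest quantization of one block of weights."""
    qmax = 2 ** (bits - 1) - 1  # 7 for signed 4-bit integers
    scale = max(abs(w) for w in weights) / qmax
    if scale == 0:
        scale = 1.0  # all-zero block: any scale works
    quantized = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize_block(quantized: list[int], scale: float) -> list[float]:
    """Recover approximate weights from the integers and the block scale."""
    return [q * scale for q in quantized]


block = [3.14159, -1.5, 0.25, 2.0]  # sample weights for illustration
q, scale = quantize_block(block)
restored = dequantize_block(q, scale)

print(q)  # [7, -3, 1, 4]
for original, approx in zip(block, restored):
    print(f"{original:+.5f} -> {approx:+.5f} (error {abs(original - approx):.5f})")
```

Each weight is stored as a small integer plus a share of one scale value, and the error per weight stays within half a quantization step.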

What are the benefits of using a dual GPU setup like NVIDIA 3090 24GB x2?

The main benefit is memory. Two NVIDIA 3090 24GB cards give you 48 GB of combined VRAM, which lets you load models that do not fit on a single card, such as a 4-bit quantized Llama3 70B. The model's layers are divided between the two GPUs, so the work is shared. It's like having two brains working on the same complex problem. Keep in mind that Llama3 8B already fits on one card, so for that model the second GPU mainly adds headroom for longer contexts, larger models, or more concurrent requests, rather than a guaranteed doubling of speed.

Keywords

Llama3 8B, Llama3 70B, NVIDIA 3090 24GB, dual GPU, token generation speed, quantization, Q4_K_M, F16, performance benchmarks, LLM, local LLM, model optimization, memory constraints, use cases, workarounds, chatbots, text generation, translation, summarization, question answering