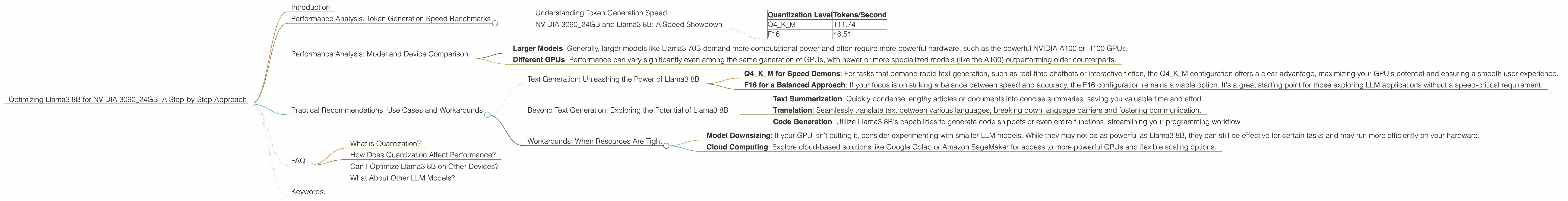

Optimizing Llama3 8B for NVIDIA 3090 24GB: A Step-by-Step Approach

Introduction

Welcome, fellow AI enthusiasts! Have you ever wondered how to squeeze the most performance out of an NVIDIA RTX 3090 (24 GB) when running Llama3 8B? Perhaps you're tired of waiting for text generations to finish and want a smoother experience. This guide shows how your choice of model precision affects Llama3 8B's speed on this GPU, so you can pick the configuration that gets the most out of your hardware.

We'll look at how large language models (LLMs) perform when run locally on specific hardware, focusing on the NVIDIA RTX 3090 (24 GB) and Llama3 8B. We'll unpack what quantization is, how it affects model size and speed, and what those trade-offs mean in practice. By the end, you'll know how to choose a configuration that makes the most of your GPU for creating, analyzing, and interacting with text.

So, grab your favorite caffeinated beverage, put on your coding hat, and let's get started!

Performance Analysis: Token Generation Speed Benchmarks

Understanding Token Generation Speed

Imagine text generation as a relay race in which each runner is a token, the basic unit of text a model reads and writes. Because each new token can only be generated after the previous one, the race is run one leg at a time. Token generation speed, measured in tokens per second, tells us how quickly the GPU completes each leg, and therefore how fast the model produces text.
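To make "tokens per second" concrete, here is a minimal sketch of how one might time a streaming generator. It assumes only that your runtime hands you generated tokens one at a time as an iterator; `measure_tokens_per_second` and the `fake_stream` stand-in are hypothetical names, not part of any particular library. The timer starts at the first token, so prompt processing is excluded from the rate.

```python
import time
from typing import Callable, Iterable, Iterator, Optional, Tuple


def measure_tokens_per_second(
    token_stream: Iterable[str],
    clock: Callable[[], float] = time.perf_counter,
) -> Tuple[int, float]:
    """Consume a token stream and return (token_count, tokens_per_second).

    The timer starts when the first token arrives, so prompt processing
    (time to first token) is excluded and only generation speed is measured.
    """
    count = 0
    start: Optional[float] = None
    end = 0.0
    for _ in token_stream:
        now = clock()
        if start is None:
            start = now  # first token: start the generation timer
        end = now
        count += 1
    if start is None or count < 2 or end <= start:
        raise ValueError("need at least two tokens spread over time to measure a rate")
    # count - 1 tokens were generated between the first and last timestamps
    return count, (count - 1) / (end - start)


def fake_stream(n: int, delay_s: float) -> Iterator[str]:
    """Hypothetical stand-in for a model's streaming output."""
    for i in range(n):
        time.sleep(delay_s)  # pretend each token takes delay_s to generate
        yield f"tok{i}"


if __name__ == "__main__":
    n, tps = measure_tokens_per_second(fake_stream(20, 0.01))
    print(f"{n} tokens at {tps:.1f} tokens/s")
```

The injectable `clock` parameter is a design choice that lets you test the function with fake timestamps instead of real waiting.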

NVIDIA RTX 3090 (24 GB) and Llama3 8B: A Speed Showdown

Let's dive into the numbers. Here is the token generation speed (tokens per second) for Llama3 8B on the NVIDIA RTX 3090 (24 GB) at two precision levels:

| Quantization Level | Token Generation Speed (tokens/s) |
|---|---|
| Q4_K_M | 111.74 |
| F16 | 46.51 |

Observations:

- Q4_K_M (4-bit quantization using llama.cpp's "k-quant" scheme, medium-size variant): This configuration generates about 2.4 times as many tokens per second as F16 (111.74 vs. 46.51), a substantial performance advantage.

- F16 (16-bit half-precision floating point): While not as fast as Q4_K_M, F16 still delivers a respectable 46.51 tokens per second while keeping the weights at 16-bit precision.

These numbers highlight the substantial speed improvement that quantization brings. Quantization shrinks a model by storing its weights in fewer bits, usually at a small cost in accuracy. During token generation, the GPU has to read essentially all of the model's weights from memory for every new token, so speed is largely limited by memory bandwidth; smaller weights mean less data to move per token, and therefore faster generation.

Think of it like this: imagine two race cars with the same engine, one hauling a heavy load (F16) and one hauling a much lighter load (Q4_K_M). Both finish the race, but the lighter car gets there faster!
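To put rough numbers on how much quantization shrinks Llama3 8B, the sketch below estimates the memory taken by the weights alone as parameters × bits per weight ÷ 8. The inputs are assumptions, not figures from this article: about 8.03 billion parameters, 16 bits per weight for F16, and roughly 4.9 bits per weight on average for Q4_K_M (which mixes block formats and stores per-block scales, so its effective size is only approximate).

```python
def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights alone, in GB (10**9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


# Assumed inputs: ~8.03e9 parameters for Llama3 8B, 16 bits per weight for
# F16, and ~4.9 bits per weight on average for Q4_K_M (an estimate).
LLAMA3_8B_PARAMS = 8.03e9
FORMATS = {"F16": 16.0, "Q4_K_M": 4.9}

if __name__ == "__main__":
    for name, bpw in FORMATS.items():
        size = weight_memory_gb(LLAMA3_8B_PARAMS, bpw)
        print(f"{name}: ~{size:.1f} GB of weights")
```

Under these assumptions the weights drop from about 16.1 GB to about 4.9 GB, roughly 3.3 times smaller, while the measured speedup is about 2.4 times. Presumably the parts of each generation step that do not shrink with the weights (such as dequantization work and other overheads) account for the gap.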

Performance Analysis: Model and Device Comparison

We've seen how Llama3 8B performs on the NVIDIA RTX 3090 (24 GB). But how does it stack up against other models and devices? Unfortunately, we don't have benchmark data for that comparison.

However, here are some general insights from the larger LLM landscape:

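- Larger Models: Larger models such as Llama3 70B demand far more memory and compute. At F16, 70 billion parameters need roughly 140 GB for the weights alone, and even at 4 bits per weight (about 35 GB) they exceed a single 24 GB card, so they typically call for data-center GPUs such as the NVIDIA A100 or H100, or for several GPUs working together.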

- Different GPUs: Performance can vary significantly between GPUs, even within a single generation. The data-center A100, for example, shares the RTX 3090's Ampere architecture but offers more memory and considerably higher memory bandwidth.

Ultimately, the best choice for your setup depends on your specific usage and desired performance. If you're working with large models or pushing the boundaries of text generation speed, investing in a high-end GPU might be necessary.

Practical Recommendations: Use Cases and Workarounds

Text Generation: Unleashing the Power of Llama3 8B

- Q4_K_M for Speed Demons: For tasks that demand rapid text generation, such as real-time chatbots or interactive fiction, Q4_K_M offers a clear advantage: about 2.4 times the generation speed of F16 on this GPU, which keeps responses feeling snappy.

- F16 for Accuracy-Sensitive Work: If output quality matters more to you than raw speed, F16 remains a viable option. Its roughly 16 GB of weights fit in the 3090's 24 GB, and at 46.51 tokens per second it is a great starting point for exploring LLM applications without a speed-critical requirement.
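If you prefer the higher-precision format whenever memory allows, a quick check is whether its weights fit in VRAM with some room to spare. The helper below is a hypothetical sketch, not a feature of any tool: it tries F16 first, then Q4_K_M, using assumed average bits per weight (the Q4_K_M value is an estimate) and an assumed 2 GB of headroom for the KV cache, activations, and the CUDA context.

```python
from typing import Dict, Optional

# Assumed average bits per weight; the Q4_K_M figure is an approximation.
FORMATS_BPW: Dict[str, float] = {"F16": 16.0, "Q4_K_M": 4.9}


def pick_format(n_params: float, vram_gb: float, headroom_gb: float = 2.0) -> Optional[str]:
    """Return the highest-precision format whose weights fit in VRAM, or None.

    headroom_gb reserves memory for the KV cache, activations and the CUDA
    context; the 2 GB default is an illustrative assumption, not a measurement.
    """
    budget_gb = vram_gb - headroom_gb
    # Try formats from most to fewest bits per weight.
    for name, bpw in sorted(FORMATS_BPW.items(), key=lambda item: item[1], reverse=True):
        weights_gb = n_params * bpw / 8 / 1e9
        if weights_gb <= budget_gb:
            return name
    return None


if __name__ == "__main__":
    print(pick_format(8.03e9, vram_gb=24))  # Llama3 8B on a 24 GB card -> F16
    print(pick_format(70e9, vram_gb=24))    # a ~70B model on the same card -> None
```

This only answers "what fits"; if speed is your priority, Q4_K_M is still the better pick even when F16 fits. A 70B model returns `None` here because even its 4-bit weights exceed the budget.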

Beyond Text Generation: Exploring the Potential of Llama3 8B

- Text Summarization: Quickly condense lengthy articles or documents into concise summaries, saving you valuable time and effort.

- Translation: Seamlessly translate text between various languages, breaking down language barriers and fostering communication.

- Code Generation: Utilize Llama3 8B's capabilities to generate code snippets or even entire functions, streamlining your programming workflow.

Workarounds: When Resources Are Tight

- Model Downsizing: If your GPU can't keep up, consider experimenting with smaller models. While they may not be as capable as Llama3 8B, they can still be effective for certain tasks and should run faster on the same hardware.

- Cloud Computing: Explore cloud-based solutions like Google Colab or Amazon SageMaker for access to more powerful GPUs and flexible scaling options.

FAQ

What is Quantization?

Quantization is a technique for reducing the size of LLMs by storing each weight with fewer bits, for example 4 bits instead of 16, usually with only a small drop in accuracy. Think of it as "compressing" the model: the result needs less memory and can be processed faster.
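Here is a deliberately simplified sketch of the core idea: symmetric "absmax" quantization of one small block of weights to signed 4-bit integers that share a single scale. This illustrates the general technique only; it is not llama.cpp's Q4_K_M format, which uses 256-weight super-blocks with their own quantized scales and minimums. The function names are hypothetical.

```python
from typing import List, Tuple


def quantize_4bit(block: List[float]) -> Tuple[float, List[int]]:
    """Symmetric absmax quantization of one block to integers in [-7, 7].

    Every weight is replaced by a small integer; one float scale is shared
    by the whole block.
    """
    max_abs = max(abs(w) for w in block)
    scale = max_abs / 7 if max_abs > 0 else 1.0  # avoid dividing by zero
    q = [max(-7, min(7, round(w / scale))) for w in block]
    return scale, q


def dequantize_4bit(scale: float, q: List[int]) -> List[float]:
    """Recover approximate weights from the integers and the block scale."""
    return [scale * v for v in q]


if __name__ == "__main__":
    weights = [0.12, -0.5, 0.33, 0.07, -0.21, 0.49, 0.0, -0.05]
    scale, q = quantize_4bit(weights)
    print(q)  # [2, -7, 5, 1, -3, 7, 0, -1]
    restored = dequantize_4bit(scale, q)
    worst = max(abs(a - b) for a, b in zip(weights, restored))
    print(f"scale={scale:.4f}, worst error={worst:.4f}")
```

Each restored weight is off by at most half a quantization step. In this toy 8-weight block the shared scale is a large overhead (6 bits per weight if the scale is stored in 16 bits); real formats spread the scale over more weights, which is why they land only a little above 4 bits per weight.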

How Does Quantization Affect Performance?

Quantization can improve both speed and memory efficiency. Smaller models load faster and, because token generation is usually limited by how quickly the weights can be read from GPU memory, they also generate tokens faster. The trade-off is a possible reduction in accuracy, which tends to grow as fewer bits are used.
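Because generating each token requires reading essentially all the weights from GPU memory, memory bandwidth divided by weight size gives a rough ceiling on tokens per second. The sketch below applies this to the benchmark figures in this article. The inputs are assumptions: about 936 GB/s peak memory bandwidth for the RTX 3090, and weight sizes for about 8.03 billion parameters at 16 bits (F16) and roughly 4.9 bits (Q4_K_M, approximate).

```python
def max_tokens_per_second(weight_gb: float, bandwidth_gb_s: float) -> float:
    """Rough ceiling on generation speed if every token must read all weights once.

    Ignores KV-cache reads, compute time and kernel launch overheads, so real
    throughput will be lower than this bound.
    """
    return bandwidth_gb_s / weight_gb


# Assumed inputs: ~936 GB/s peak memory bandwidth for the RTX 3090, and weight
# sizes for ~8.03e9 parameters at 16 bits (F16) and ~4.9 bits (Q4_K_M, approx.).
BANDWIDTH_3090_GB_S = 936.0
SIZES_GB = {"F16": 8.03e9 * 16 / 8 / 1e9, "Q4_K_M": 8.03e9 * 4.9 / 8 / 1e9}
MEASURED_TPS = {"F16": 46.51, "Q4_K_M": 111.74}  # benchmark figures from this article

if __name__ == "__main__":
    for name, size_gb in SIZES_GB.items():
        ceiling = max_tokens_per_second(size_gb, BANDWIDTH_3090_GB_S)
        print(f"{name}: ceiling ~{ceiling:.0f} tok/s, measured {MEASURED_TPS[name]} tok/s")
```

Under these assumptions the ceilings come out near 58 tokens/s for F16 and 190 tokens/s for Q4_K_M, so the measured speeds reach roughly 80% and 60% of them. That pattern is consistent with generation being mostly, but not entirely, limited by memory bandwidth.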

Can I Optimize Llama3 8B on Other Devices?

Yes, you can! However, the performance will depend on the device's specifications and the chosen quantization level. While the NVIDIA RTX 3090 (24 GB) is a powerful GPU, other options exist, like the NVIDIA RTX 4090, the AMD Radeon RX 7900 XTX, or even specialized AI accelerators.

What About Other LLM Models?

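The principles discussed here apply to other LLMs as well, but you can only quantize and run locally the models whose weights are publicly available; GPT-3 and Jurassic-1 Jumbo, for example, are accessible only through their providers' APIs. Specific performance numbers and optimal configurations will vary from model to model.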

Keywords:

Llama3 8B, NVIDIA RTX 3090 24GB, Token Generation Speed, GPU Performance, Quantization, Q4_K_M, F16, Text Generation, LLM Optimization, Large Language Models, AI, Machine Learning, Deep Learning, GPU Benchmarks, Performance Analysis