Optimizing Llama3 8B for the NVIDIA RTX 3080 Ti 12GB: A Step-by-Step Approach

Introduction

The world of Large Language Models (LLMs) is booming, offering exciting possibilities for text generation, translation, and even creative writing. But running these sophisticated models locally can be a challenge, especially when you want them to run fast. This article looks at how to get the best performance out of the Llama3 8B model on a popular workhorse GPU: the NVIDIA RTX 3080 Ti with 12 GB of VRAM.

We'll look at token generation speed, compare model configurations, and offer practical recommendations for getting the most out of your hardware. Whether you're a seasoned developer or just starting your LLM journey, this step-by-step guide will help you unleash Llama3's full potential on your RTX 3080 Ti.

Performance Analysis: Token Generation Speed Benchmarks

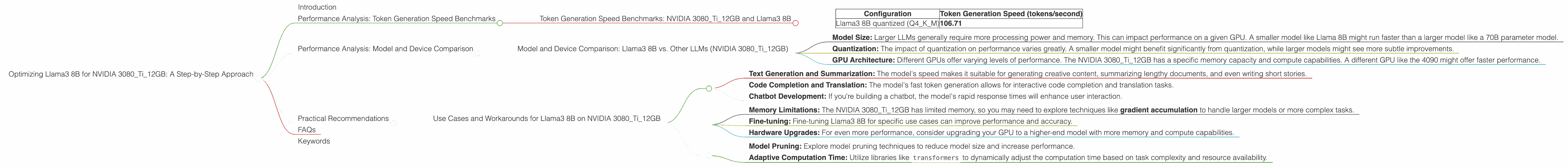

Token Generation Speed Benchmarks: NVIDIA RTX 3080 Ti 12GB and Llama3 8B

Tokens are the building blocks of text: whole words or pieces of words. An LLM generates its output one token at a time, so the faster it produces tokens, the more responsive and efficient it feels. Let's see how Llama3 8B performs on the RTX 3080 Ti in terms of token generation speed.

| Configuration | Generation speed (tokens/s) |
|---|---|
| Llama3 8B, Q4_K_M quantization | 106.71 |

Note: The performance data for Llama3 8B in F16 precision is currently unavailable.

In other words, with Q4_K_M quantization, Llama3 8B generates 106.71 tokens per second on the RTX 3080 Ti. That is a strong result for an 8-billion-parameter model running on a GPU with only 12 GB of memory.
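To make the throughput figure more concrete, the sketch below converts tokens per second into per-token latency and total response time, and shows one simple way to measure throughput yourself. The `stream` callable is a hypothetical stand-in for your own inference backend (anything that yields generated tokens one by one); it is not an API of any particular library.

```python
import time
from typing import Callable, Iterator


def ms_per_token(tokens_per_second: float) -> float:
    """Average time to generate one token, in milliseconds."""
    return 1000.0 / tokens_per_second


def seconds_for_response(num_tokens: int, tokens_per_second: float) -> float:
    """Time to generate num_tokens at a constant generation rate."""
    return num_tokens / tokens_per_second


def measure_tokens_per_second(stream: Callable[[], Iterator[str]]) -> float:
    """Consume a token stream and return the observed tokens per second.

    `stream` is a hypothetical callable that starts a generation and yields
    one token at a time (wrap your own inference backend to provide it).
    The timing includes prompt processing, so for short outputs it reads
    somewhat lower than a pure generation benchmark.
    """
    start = time.perf_counter()
    count = sum(1 for _ in stream())
    elapsed = time.perf_counter() - start
    return count / elapsed if elapsed > 0 else float("inf")


rate = 106.71  # Q4_K_M result from the benchmark table
print(f"{ms_per_token(rate):.2f} ms per token")                    # 9.37 ms per token
print(f"{seconds_for_response(500, rate):.1f} s for 500 tokens")   # 4.7 s for 500 tokens
```

At roughly 9.4 ms per token, a typical chat reply of a few hundred tokens finishes in a few seconds.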

Let's break down the significance:

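Q4_K_M Quantization: Q4_K_M is a 4-bit quantization format from llama.cpp (stored in GGUF files) that shrinks the model by storing each weight in roughly 4 to 5 bits instead of 16, at a small cost in accuracy. Think of it like compressing a picture: you get a smaller file, but may lose a bit of detail. Here the trade-off is worth it: generating each token requires reading the model's weights from GPU memory, so smaller weights mean faster generation.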

Tokens Per Second: The higher the number of tokens per second, the faster your LLM can generate text. Think of it like typing: A faster typist generates more words per minute!
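The core idea behind block-wise quantization, described in the Q4_K_M item above, can be sketched in a few lines: split the weights into small blocks, store one shared scale per block, and round each weight to a small integer. This is a simplified symmetric 4-bit scheme chosen for illustration; it is not the actual Q4_K_M format, which uses a more elaborate two-level block layout with per-block scales and minimums. The block size of 32 and the 16-bit scale in the storage estimate are assumptions.

```python
import random
from typing import List, Tuple

BLOCK_SIZE = 32  # weights sharing one scale (illustrative choice)


def quantize_block(block: List[float]) -> Tuple[float, List[int]]:
    """Map floats to signed 4-bit integers in [-7, 7] with one shared scale."""
    max_abs = max(abs(w) for w in block)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    return scale, [max(-7, min(7, round(w / scale))) for w in block]


def quantize(weights: List[float]) -> List[Tuple[float, List[int]]]:
    """Split the weights into blocks and quantize each block independently."""
    return [quantize_block(weights[i:i + BLOCK_SIZE])
            for i in range(0, len(weights), BLOCK_SIZE)]


def dequantize(blocks: List[Tuple[float, List[int]]]) -> List[float]:
    """Recover approximate floats: integer value times the block's scale."""
    return [scale * q for scale, qs in blocks for q in qs]


random.seed(0)
weights = [random.gauss(0.0, 0.02) for _ in range(4096)]  # toy "layer"
restored = dequantize(quantize(weights))
max_err = max(abs(a - b) for a, b in zip(weights, restored))
# If each scale were stored as a 16-bit float: 4 bits per weight + 16/32 bits of scale
bits_per_weight = 4 + 16 / BLOCK_SIZE
print(f"max abs error {max_err:.5f}; ~{bits_per_weight} bits/weight vs 16 for F16")
```

Each restored weight is off by at most half of its block's scale, which is why a per-block scale (rather than one scale for the whole tensor) keeps the error small.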

Performance Analysis: Model and Device Comparison

Model and Device Comparison: Llama3 8B vs. Other LLMs (NVIDIA RTX 3080 Ti 12GB)

Unfortunately, we don't yet have the data for a direct comparison of Llama3 8B with other LLMs on the RTX 3080 Ti. That would require benchmark results on the same GPU for other locally runnable models, such as Llama 2.

However, we can make some general observations:

Model Size: Larger LLMs need more memory and more computation per token, which lowers their speed on a given GPU. A smaller model like Llama3 8B will generally run much faster than a 70B-parameter model, which would not even fit in 12 GB of VRAM at 4-bit precision.

Quantization: The effect of quantization depends on the model and the quantization format. Fewer bits per weight reduce memory use and usually speed up generation, but the accuracy cost varies from model to model and grows as the bit width shrinks.

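GPU Architecture: Different GPUs offer different levels of performance. The RTX 3080 Ti's 12 GB of memory caps the size of model it can hold, while its memory bandwidth and compute determine how fast it runs. A higher-end GPU such as the RTX 4090, which has 24 GB of memory, will generally run the same model faster and can hold larger ones.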
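The model-size and GPU observations above can be turned into a back-of-the-envelope estimate: the weights must fit in VRAM, and since each generated token reads (roughly) every weight once, memory bandwidth divided by the weight size gives a rough ceiling on tokens per second. This is a simplified sketch. The parameter counts (about 8.03 billion for Llama3 8B, about 70.6 billion for Llama3 70B), the average of about 4.85 bits per weight for Q4_K_M, the 1 GB headroom, and the 912 GB/s bandwidth for the RTX 3080 Ti are assumed, commonly quoted figures; substitute your own.

```python
from dataclasses import dataclass

GB = 1e9  # decimal gigabytes, as VRAM and bandwidth are usually quoted


@dataclass
class ModelVariant:
    name: str
    params: float           # number of parameters (assumed)
    bits_per_weight: float  # average storage per weight (assumed)


def weight_bytes(m: ModelVariant) -> float:
    """Approximate size of the weights alone (no KV cache, no activations)."""
    return m.params * m.bits_per_weight / 8


def fits(m: ModelVariant, vram_gb: float, headroom_gb: float = 1.0) -> bool:
    """Rough check: weights plus assumed headroom for KV cache and buffers."""
    return weight_bytes(m) / GB + headroom_gb <= vram_gb


def decode_ceiling_tps(m: ModelVariant, bandwidth_gb_s: float) -> float:
    """Upper bound on tokens/s if every weight is read once per token."""
    return bandwidth_gb_s * GB / weight_bytes(m)


variants = [
    ModelVariant("Llama3 8B F16", 8.03e9, 16.0),
    ModelVariant("Llama3 8B Q4_K_M (approx.)", 8.03e9, 4.85),
    ModelVariant("Llama3 70B Q4_K_M (approx.)", 70.6e9, 4.85),
]
VRAM_GB = 12.0          # RTX 3080 Ti
BANDWIDTH_GB_S = 912.0  # RTX 3080 Ti memory bandwidth (assumed spec value)

for m in variants:
    size = weight_bytes(m) / GB
    if fits(m, VRAM_GB):
        ceiling = decode_ceiling_tps(m, BANDWIDTH_GB_S)
        print(f"{m.name}: {size:.1f} GB, ceiling ~{ceiling:.0f} tok/s")
    else:
        print(f"{m.name}: {size:.1f} GB, does not fit in {VRAM_GB:.0f} GB")
```

Under these assumptions the F16 weights alone come to about 16 GB, more than the card holds, and the 70B model at 4-bit needs over 40 GB. For the Q4_K_M 8B model the ceiling lands around 187 tokens/s, so the measured 106.71 tokens/s sits below the bound, as expected once compute, KV-cache reads, and kernel overheads are included.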

Stay tuned for future updates! We're actively working on gathering more benchmark data to enable more comprehensive comparisons.

Practical Recommendations

Use Cases and Workarounds for Llama3 8B on the NVIDIA RTX 3080 Ti 12GB

What's great about Llama3 8B on this GPU:

Text Generation and Summarization: The model's speed makes it suitable for generating creative content, summarizing lengthy documents, and even writing short stories.

Code Completion and Translation: The model's fast token generation allows for interactive code completion and translation tasks.

Chatbot Development: If you're building a chatbot, the model's rapid response times will enhance user interaction.

Things to keep in mind:

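Memory Limitations: The RTX 3080 Ti's 12 GB of VRAM limits model size, context length, and batch size. For inference, quantization is the main way to fit larger models; if you fine-tune, techniques like gradient accumulation let you train with a large effective batch size without holding the whole batch in memory at once.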

Fine-tuning: Fine-tuning Llama3 8B on data from your domain can improve the quality and accuracy of its outputs for specific use cases.

Hardware Upgrades: For even more performance, consider upgrading your GPU to a higher-end model with more memory and compute capabilities.

Workarounds:

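Model Pruning: Pruning removes less important weights to shrink the model. Speedups are not automatic, though: they depend on whether the pruning is structured (removing whole neurons, attention heads, or layers) and whether your inference runtime can take advantage of the resulting sparsity.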

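Adaptive Computation: Match the amount of generation to the task, for example by setting a small `max_new_tokens` limit in the Hugging Face `transformers` `generate()` call for simple requests and a larger one for complex requests, depending on task complexity and available resources.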
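Magnitude pruning, the simplest form of the pruning workaround above, zeroes out the weights with the smallest absolute values. The sketch below shows unstructured magnitude pruning on a plain list of numbers; it is an illustration of the idea, not a recipe for pruning Llama3 itself, and as noted above, unstructured zeros only speed things up if the runtime exploits sparsity.

```python
from typing import List


def magnitude_prune(weights: List[float], sparsity: float) -> List[float]:
    """Zero out the `sparsity` fraction of weights with the smallest magnitude."""
    if not 0.0 <= sparsity < 1.0:
        raise ValueError("sparsity must be in [0, 1)")
    k = int(len(weights) * sparsity)  # number of weights to remove
    if k == 0:
        return list(weights)
    # Indices of the k weights with the smallest absolute value
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    to_zero = set(order[:k])
    return [0.0 if i in to_zero else w for i, w in enumerate(weights)]


def sparsity_of(weights: List[float]) -> float:
    """Fraction of weights that are exactly zero."""
    return sum(1 for w in weights if w == 0.0) / len(weights)


layer = [0.8, -0.05, 0.3, 0.01, -0.6, 0.02, -0.15, 0.4]
pruned = magnitude_prune(layer, 0.5)
print(pruned)  # [0.8, 0.0, 0.3, 0.0, -0.6, 0.0, 0.0, 0.4]
```

The pruned list has the same length as the original; turning those zeros into real memory or speed savings requires a sparse storage format or structured pruning that removes whole rows or heads.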

Example: You could build a chatbot that uses Llama3 8B's speed for quick answers to simple questions, while allowing longer generations for complex queries that need more extensive responses.
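One way to let a chatbot spend less time on simple queries is to cap the number of generated tokens per request. The sketch below uses a hypothetical word-count heuristic (`pick_max_new_tokens`) and a stub decode loop (`generate`) in which `next_token` stands in for one model step; neither is a real library API. With Hugging Face `transformers`, the chosen budget would be passed as the `max_new_tokens` argument to `generate()`.

```python
from typing import Callable, List

EOS = "<eos>"  # hypothetical end-of-sequence token


def pick_max_new_tokens(prompt: str) -> int:
    """Hypothetical heuristic: short prompts that don't ask for an explanation
    get a small token budget; everything else gets a larger one."""
    if len(prompt.split()) <= 12 and "explain" not in prompt.lower():
        return 64
    return 512


def generate(next_token: Callable[[List[str]], str], max_new_tokens: int) -> List[str]:
    """Decode loop that stops at EOS or when the token budget is used up.

    `next_token` stands in for one model forward pass plus sampling: it takes
    the tokens generated so far and returns the next one.
    """
    out: List[str] = []
    while len(out) < max_new_tokens:
        tok = next_token(out)
        if tok == EOS:
            break
        out.append(tok)
    return out


RATE = 106.71  # tokens/s from the benchmark table
for prompt in ["What time zone is Tokyo in?",
               "Explain how the KV cache speeds up decoding."]:
    budget = pick_max_new_tokens(prompt)
    print(f"{budget:>3} tokens max, worst case ~{budget / RATE:.1f} s: {prompt}")
```

At the benchmarked rate, a 64-token cap bounds a simple reply at about 0.6 s, while a 512-token cap allows up to about 4.8 s for a detailed answer. A real deployment would likely use a better signal than word count, such as a classifier or user-selected mode.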

FAQs

1. What is the difference between Llama3 8B and Llama3 70B?

Llama3 8B and Llama3 70B are different versions of the same LLM with varying numbers of parameters (think of them as the model's "brain cells"). A larger model (like Llama3 70B) has more parameters and can potentially be more accurate, but it also requires more memory and processing power.

2. How can I fine-tune Llama3 8B for my specific use case?

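Fine-tuning means continuing to train a pre-trained LLM on a dataset related to your use case. You can use libraries like Hugging Face Transformers to do this. Keep in mind that fully fine-tuning all 8 billion parameters needs far more than 12 GB of GPU memory, so on this card you will need memory-saving approaches.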

3. What are the advantages and disadvantages of quantization?

Quantization reduces the model size and requires less memory, leading to faster performance. The downside is that it can slightly decrease accuracy.

4. What is gradient accumulation?

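Gradient accumulation is a training technique for when the batch size you want does not fit in GPU memory. Each batch is split into smaller micro-batches; their gradients are accumulated, and a single parameter update is made after all micro-batches have been processed. The result matches training with the full batch, at the cost of more sequential steps.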
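The sketch below shows gradient accumulation on a toy problem: fitting y = w*x + b with mean squared error in plain Python. Each micro-batch's gradient is weighted by its share of the full batch and summed, and only then is one update applied, so the result equals a full-batch step. In frameworks such as PyTorch the same pattern is commonly written by calling `backward()` on each micro-batch loss divided by the number of accumulation steps and calling `optimizer.step()` once at the end; the toy data, learning rate, and micro-batch size here are arbitrary choices for illustration.

```python
from typing import List, Tuple

Sample = Tuple[float, float]  # (x, y)


def grad_mse(w: float, b: float, batch: List[Sample]) -> Tuple[float, float]:
    """Gradient of mean squared error for y ≈ w*x + b over one batch."""
    n = len(batch)
    gw = sum(2 * (w * x + b - y) * x for x, y in batch) / n
    gb = sum(2 * (w * x + b - y) for x, y in batch) / n
    return gw, gb


def accumulated_step(w: float, b: float, batch: List[Sample],
                     micro_batch_size: int, lr: float) -> Tuple[float, float]:
    """One parameter update computed from several micro-batches.

    Only one micro-batch is processed at a time; its gradient is scaled by
    its share of the full batch and added to the running total.
    """
    gw_total, gb_total = 0.0, 0.0
    for i in range(0, len(batch), micro_batch_size):
        micro = batch[i:i + micro_batch_size]
        gw, gb = grad_mse(w, b, micro)
        share = len(micro) / len(batch)
        gw_total += share * gw
        gb_total += share * gb
    # A single update after all micro-batches have been accumulated
    return w - lr * gw_total, b - lr * gb_total


data = [(x / 10, 3 * (x / 10) + 1) for x in range(16)]  # points on y = 3x + 1
gw, gb = grad_mse(0.0, 0.0, data)
full = (0.0 - 0.1 * gw, 0.0 - 0.1 * gb)                  # one full-batch step
accum = accumulated_step(0.0, 0.0, data, micro_batch_size=4, lr=0.1)
print(full, accum)  # equal up to floating-point rounding
```

Repeating `accumulated_step` for a few thousand iterations drives `w` and `b` toward 3 and 1, exactly as full-batch gradient descent would, while only four samples are handled at a time.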

5. Where can I find more information about LLM performance optimization?

You can explore resources like Hugging Face's Transformers library documentation, the Hugging Face forum, and relevant research papers on arXiv.org.

Keywords

Llama3 8B, NVIDIA RTX 3080 Ti 12GB, GPU, LLM, Large Language Model, Token Generation Speed, Quantization, Performance Optimization, Text Generation, Summarization, Code Completion, Chatbot, Gradient Accumulation, Fine-tuning, Model Pruning, Transformers, Hugging Face, arXiv