Optimizing Llama3 8B for NVIDIA 3080 10GB: A Step by Step Approach

Introduction

The world of large language models (LLMs) is buzzing with excitement. The ability to generate human-like text, translate languages, write different kinds of creative content, and answer your questions in an informative way is truly remarkable. But let's face it, running these models on your own hardware can be a bit of a challenge, especially if you're working with a powerful model like Llama3 8B.

This article is a deep dive into optimizing the Llama3 8B model for the NVIDIA 3080_10GB GPU. We'll cover the performance aspects, compare it to other models, and provide practical recommendations for getting the most out of this setup. Buckle up, fellow AI enthusiasts, as we embark on this exciting journey!

Performance Analysis: Token Generation Speed Benchmarks

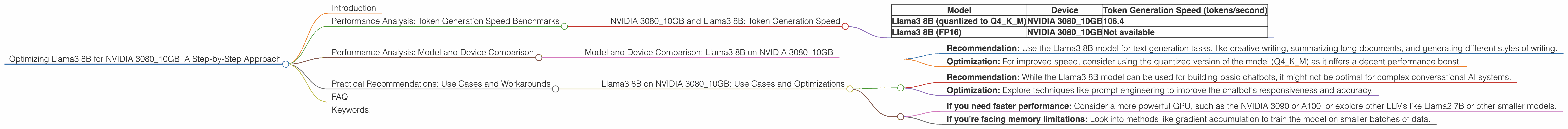

NVIDIA 3080_10GB and Llama3 8B: Token Generation Speed

Let's get right to the numbers! The table below shows the token generation speed for Llama3 8B on the NVIDIA 3080_10GB GPU. This measures how many tokens per second the model can produce, which is a crucial metric when evaluating its performance.

| Model | Device | Token Generation Speed (tokens/second) |
|---|---|---|
| Llama3 8B (quantized to Q4_K_M) | NVIDIA 3080_10GB | 106.4 |
| Llama3 8B (FP16) | NVIDIA 3080_10GB | Not available |

Note: The token generation speed for the FP16 version of Llama3 8B on the NVIDIA 3080_10GB was not available at the time of this article.
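One plausible reason for the missing FP16 figure, which the benchmark data does not state, is memory: the unquantized weights alone may not fit in 10 GB. The sketch below is a rough, weight-only estimate. The parameter count of 8 billion and the average of 4.5 bits per weight for a 4-bit format are assumptions made for illustration, and the estimate ignores the KV cache and runtime overhead.

```python
# Rough weight-only memory estimate. It ignores the KV cache,
# activations, and runtime overhead, so real usage will be higher.
PARAMS = 8.0e9  # assumed parameter count for an "8B" model


def weight_gib(n_params: float, bits_per_weight: float) -> float:
    """Return the approximate size of the weights in GiB."""
    return n_params * bits_per_weight / 8 / 2**30


fp16_gib = weight_gib(PARAMS, 16)  # FP16 stores 16 bits per weight
q4_gib = weight_gib(PARAMS, 4.5)   # assumed average for a 4-bit format

print(f"FP16 weights:  {fp16_gib:.1f} GiB")
print(f"4-bit weights: {q4_gib:.1f} GiB")
```

Under these assumptions the FP16 weights come to roughly 15 GiB, more than the card's 10 GB, while the 4-bit weights need a little over 4 GiB.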

What does this mean?

At 106.4 tokens per second, the quantized Llama3 8B model produces a new token roughly every 9 milliseconds. That is comfortably fast for interactive use on the 3080_10GB, though GPUs with more memory bandwidth will typically be faster.

Imagine this: A fast typist manages about 100 words per minute. Our Llama3 8B on the 3080_10GB produces about 100 tokens per second, so it churns out words (or rather, tokens) dozens of times faster than that typist, and well ahead of the pace at which most people read.
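To turn the benchmark figure into something more tangible, the short sketch below converts it into waiting time for responses of different lengths. It uses the 106.4 tokens per second from the table above and assumes that generation speed stays constant; it does not account for the time needed to process the prompt.

```python
TOKENS_PER_SECOND = 106.4  # Q4_K_M figure from the benchmark table


def generation_seconds(n_tokens: int,
                       tokens_per_second: float = TOKENS_PER_SECOND) -> float:
    """Estimate how long it takes to generate n_tokens at a constant speed."""
    return n_tokens / tokens_per_second


# Example response lengths (chosen for illustration only).
for n in (50, 500, 2000):
    print(f"{n:>5} tokens: {generation_seconds(n):.1f} s")
```

By this estimate a 500-token answer takes just under 5 seconds to generate.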

Performance Analysis: Model and Device Comparison

Model and Device Comparison: Llama3 8B on NVIDIA 3080_10GB

Comparing Llama3 8B on the NVIDIA 3080_10GB with other models and devices would put the number above in context. Unfortunately, no other data points were available for this article, so the 106.4 tokens per second figure has to stand on its own here.

Practical Recommendations: Use Cases and Workarounds

Llama3 8B on NVIDIA 3080_10GB: Use Cases and Optimizations

Here are some use cases and recommendations for getting the most out of your Llama3 8B model on the NVIDIA 3080_10GB:

Use Case 1: Text Generation

- Recommendation: Use the Llama3 8B model for text generation tasks, like creative writing, summarizing long documents, and generating different styles of writing.

- Optimization: For improved speed and a smaller memory footprint, use the quantized version of the model (Q4_K_M), the configuration that reached 106.4 tokens per second in the benchmark above.

Use Case 2: Chatbots and Conversational AI

- Recommendation: While the Llama3 8B model can be used for building basic chatbots, it might not be optimal for complex conversational AI systems.

- Optimization: Explore techniques like prompt engineering to improve the chatbot's responsiveness and accuracy.

Workarounds:

- If you need faster performance: Consider a more powerful GPU, such as the NVIDIA 3090 or A100, or explore other LLMs like Llama2 7B or other smaller models.

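- If you're facing memory limitations: For inference, try a smaller quantization, a shorter context window, or offloading only some of the model's layers to the GPU. Gradient accumulation helps only if you are fine-tuning the model, where it lets you train on smaller batches of data.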
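Besides the weights, the context window also costs memory, because the model keeps a key/value (KV) cache entry for every token in the context. The sketch below estimates that cost. The architecture values (32 layers, 8 key/value heads, head dimension 128) are assumptions taken from the published Llama 3 8B configuration, not from the benchmark data in this article, and the cache is assumed to be stored in FP16.

```python
# Assumed Llama 3 8B architecture values (from the published model
# configuration, not from this article's benchmark data).
N_LAYERS = 32
N_KV_HEADS = 8
HEAD_DIM = 128
BYTES_PER_VALUE = 2  # assumes an FP16 KV cache


def kv_cache_gib(n_ctx: int) -> float:
    """Approximate KV cache size in GiB for a context of n_ctx tokens."""
    # Factor 2: one key vector and one value vector per layer and KV head.
    bytes_per_token = 2 * N_LAYERS * N_KV_HEADS * HEAD_DIM * BYTES_PER_VALUE
    return n_ctx * bytes_per_token / 2**30


for n_ctx in (2048, 4096, 8192):
    print(f"context {n_ctx:>5}: {kv_cache_gib(n_ctx):.2f} GiB")
```

Under these assumptions, halving the context window halves the cache, which is why a shorter context is one of the cheaper ways to free up memory on a 10 GB card.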

FAQ

Q: What is Quantization?

A: Quantization is a technique used to reduce the size of a model (and its memory footprint) without sacrificing too much accuracy. It essentially "compresses" the model by using fewer bits to represent the weights.

Think of it this way: Imagine a grayscale image in which each pixel can be represented by 256 different shades of gray (8 bits per pixel). Quantization would be like reducing the number of shades to just 16 (4 bits per pixel). While you might lose some detail, the overall image is still recognizable.
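The same idea can be shown with numbers instead of pixels. The sketch below is a deliberately simple 4-bit scheme that maps a small block of weights onto 16 evenly spaced levels and back. It is an illustration of the principle only, not the Q4_K_M format, which is more elaborate; the weight values are made up.

```python
def quantize_4bit(weights):
    """Map floats to integer codes 0..15 using one scale and offset per block."""
    lo, hi = min(weights), max(weights)
    scale = (hi - lo) / 15 or 1.0  # fall back to 1.0 if all weights are equal
    codes = [round((w - lo) / scale) for w in weights]
    return codes, scale, lo


def dequantize_4bit(codes, scale, lo):
    """Recover approximate floats from the 4-bit codes."""
    return [lo + c * scale for c in codes]


weights = [-0.42, -0.10, 0.0, 0.07, 0.31, 0.58]  # made-up example values
codes, scale, lo = quantize_4bit(weights)
restored = dequantize_4bit(codes, scale, lo)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("codes:    ", codes)
print("restored: ", [round(r, 3) for r in restored])
print("max error:", round(max_error, 4))
```

Each weight now needs only 4 bits plus a shared scale and offset, and the rounding error per weight is at most half of one quantization step.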

Q: What are tokens?

A: Tokens are the building blocks of language models. They are like individual words or sub-words that the model uses to process and generate text.

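Think of it like this: The sentence "The quick brown fox jumps over the lazy dog" can be broken down into these tokens: "The", "quick", "brown", "fox", "jumps", "over", "the", "lazy", "dog". This is a simplified picture: in practice, a tokenizer may split longer or rarer words into several sub-word pieces.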

Q: Why is token generation speed important?

A: Token generation speed is crucial because it determines how quickly the model can generate responses. Imagine you're having a conversation with a chatbot and you want a quick answer. A faster token generation speed will make the conversation feel more natural and fluid.

Q: What is Llama.cpp?

A: Llama.cpp is a lightweight, open-source C/C++ library for running large language models (LLMs) on your own devices. It is particularly useful for running LLMs on CPUs and GPUs with limited memory, and quantization formats such as Q4_K_M come from this project.

Keywords:

Llama3 8B, NVIDIA 3080 10GB, token generation speed, quantization, Q4_K_M, LLMs, large language models, performance benchmarks, text generation, chatbots, conversational AI, GPU, device comparison, use cases, recommendations, optimization, workarounds.