Optimizing Llama3 8B for NVIDIA 3070 8GB: A Step-by-Step Approach

Let's dive into the world of local LLMs, specifically the Llama3 8B model, and see how to squeeze the most out of it on a popular graphics card, the NVIDIA 3070 8GB. This article is for developers and tech enthusiasts who want to understand the performance landscape and build their own local AI applications.

Introduction: Unleashing the Power of Local LLMs

Large Language Models (LLMs) are revolutionizing how we interact with computers. They can generate text, translate languages, write different kinds of creative content, and answer your questions in an informative way. However, running these powerful models often requires access to cloud services, which can be expensive and slow.

Here's where local LLMs come in. Running an LLM on your own hardware gives you more control, removes network round trips, and can lower costs. But optimizing these models for a specific device can be a challenge.

In this article, we'll explore the performance of the Llama3 8B model on the NVIDIA 3070 8GB GPU, focusing on token generation speed, and look at how to tune a local LLM setup for this card.

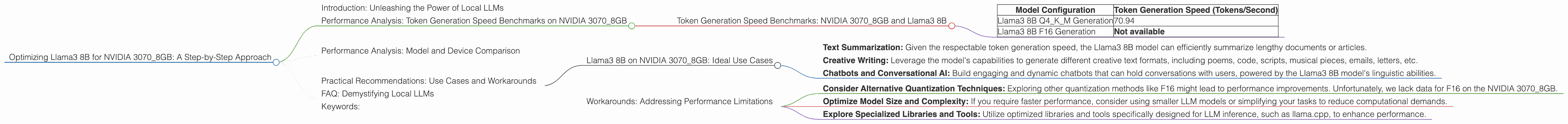

Performance Analysis: Token Generation Speed Benchmarks on NVIDIA 3070 8GB

Token generation speed is a critical metric for evaluating LLM performance. It indicates how fast the model can produce output text, which directly affects the user experience. We'll look at the performance of the Llama3 8B model on the NVIDIA 3070 8GB at different precision levels.
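
One way to measure this metric yourself is to time a token stream. The sketch below is illustrative only: `measure_tokens_per_second` and `fake_stream` are hypothetical names, and `fake_stream` merely stands in for whatever streaming interface your inference library exposes.

```python
import time
from typing import Callable, Iterable


def measure_tokens_per_second(generate: Callable[[], Iterable[str]]) -> float:
    """Consume a token stream and return generated tokens per second."""
    start = time.perf_counter()
    count = sum(1 for _ in generate())  # count tokens as they arrive
    elapsed = time.perf_counter() - start
    return count / elapsed if elapsed > 0 else float("inf")


def fake_stream(n_tokens: int = 50, delay_s: float = 0.001):
    """Hypothetical stand-in for a model: yields one placeholder token per step."""
    for i in range(n_tokens):
        time.sleep(delay_s)
        yield f"tok{i}"


if __name__ == "__main__":
    print(f"{measure_tokens_per_second(fake_stream):.1f} tokens/s")
```

To use it with a real model, pass a zero-argument callable that returns the model's token iterator in place of `fake_stream`.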

Token Generation Speed Benchmarks: NVIDIA 3070 8GB and Llama3 8B

| Model Configuration | Token Generation Speed (Tokens/Second) |
|---|---|
| Llama3 8B Q4_K_M Generation | 70.94 |
| Llama3 8B F16 Generation | Not available |

Note: No F16 result is available for Llama3 8B on the NVIDIA 3070 8GB, probably because F16 weights for an 8B-parameter model need roughly 16 GB, about twice this card's VRAM. We'll focus on the data point we do have.

As the table shows, the Llama3 8B model quantized with Q4_K_M achieves a token generation speed of 70.94 tokens per second on the NVIDIA 3070 8GB. That is comfortably fast for interactive use in a local setup, making it suitable for a range of text generation tasks.
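
To put 70.94 tokens per second in perspective, the arithmetic below converts it to wall-clock time for a few response lengths. It is a rough sketch: `generation_time_s` is a hypothetical helper, and it covers generation only, ignoring prompt processing and model load time.

```python
TOKENS_PER_SECOND = 70.94  # Q4_K_M generation speed from the table above


def generation_time_s(n_tokens: int, tokens_per_second: float = TOKENS_PER_SECOND) -> float:
    """Estimate seconds needed to generate n_tokens at a steady rate."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return n_tokens / tokens_per_second


for n in (100, 500, 2000):
    print(f"{n} tokens: ~{generation_time_s(n):.1f} s")
# 100 tokens: ~1.4 s
# 500 tokens: ~7.0 s
# 2000 tokens: ~28.2 s
```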

Performance Analysis: Model and Device Comparison

While this article focuses on the NVIDIA 3070 8GB, comparing Llama3 8B against other models and devices would give a broader view of the strengths and limitations of this setup.

Key Point: Only NVIDIA 3070 8GB results are available here, so we can't make that comparison with other models or devices.

Practical Recommendations: Use Cases and Workarounds

Now that we know how the Llama3 8B model performs on the NVIDIA 3070 8GB, let's look at practical applications and workarounds for getting the best results.

Llama3 8B on NVIDIA 3070 8GB: Ideal Use Cases

- Text Summarization: Given the respectable token generation speed, the Llama3 8B model can efficiently summarize lengthy documents or articles.

- Creative Writing: Leverage the model's capabilities to generate different creative text formats, including poems, code, scripts, musical pieces, emails, letters, etc.

- Chatbots and Conversational AI: Build engaging and dynamic chatbots that can hold conversations with users, powered by the Llama3 8B model's linguistic abilities.

Workarounds: Addressing Performance Limitations

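- Consider Alternative Quantization Techniques: Different quantization levels trade model size and speed against output quality, so it can be worth testing more than one. F16 (unquantized half precision) is unlikely to help on this card: at 2 bytes per parameter, an 8B-parameter model needs roughly 16 GB for its weights alone, which exceeds the 8 GB of VRAM, and no F16 measurement is available for the NVIDIA 3070 8GB.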

- Optimize Model Size and Complexity: If you require faster performance, consider using smaller LLM models or simplifying your tasks to reduce computational demands.

- Explore Specialized Libraries and Tools: Utilize optimized libraries and tools specifically designed for LLM inference, such as llama.cpp, to enhance performance.
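
To judge whether a given precision or model size is likely to fit in 8 GB of VRAM, a back-of-the-envelope weight-size estimate helps. The sketch below is only that: `weight_memory_gib` is a hypothetical helper, the 8-billion parameter count is rounded, and 4.5 bits per weight is an assumed figure for a 4-bit-class format (the real value depends on the exact quantization scheme). It also ignores the KV cache and other runtime buffers, which need additional memory.

```python
GIB = 1024 ** 3  # bytes per GiB


def weight_memory_gib(n_params: float, bits_per_weight: float) -> float:
    """Approximate memory needed for model weights alone, in GiB."""
    return n_params * bits_per_weight / 8 / GIB


N_PARAMS = 8.0e9  # assumption: roughly 8 billion parameters
VRAM_GIB = 8.0    # nominal VRAM of the card

# Bits per weight: 16 for F16; 4.5 is an assumed value for a 4-bit-class format.
for name, bits in (("F16", 16.0), ("4-bit class (assumed 4.5 bpw)", 4.5)):
    size = weight_memory_gib(N_PARAMS, bits)
    verdict = "fits" if size < VRAM_GIB else "does not fit"
    print(f"{name}: ~{size:.1f} GiB of weights, {verdict} in {VRAM_GIB:.0f} GiB")
```

The same function can be reused for smaller models by changing `N_PARAMS`.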

Remember, choosing the right model and configuration depends on your specific needs and the demands of your applications.

FAQ: Demystifying Local LLMs

Q: What is an LLM?

A: An LLM, or Large Language Model, is a type of artificial intelligence (AI) model trained on massive datasets of text. It learns the patterns and structures of human language, allowing it to generate human-like text, translate languages, write different creative text formats, and answer your questions in an informative way.

Q: What is quantization?

A: Quantization is a technique for reducing the size of a model with limited impact on output quality. It reduces the number of bits used to represent the model's weights (and sometimes its activations). This results in smaller model files, lower memory use, and potentially faster inference.
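
As a rough illustration of the idea, the sketch below quantizes a small block of weights to 4-bit signed integers with a single shared scale, then restores them. This is a deliberately simplified scheme with hypothetical function names, not the actual Q4_K_M format, which is more elaborate.

```python
from typing import List, Tuple


def quantize_block(weights: List[float], bits: int = 4) -> Tuple[float, List[int]]:
    """Symmetric quantization: map floats to signed integers sharing one scale."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4 bits
    max_abs = max((abs(w) for w in weights), default=0.0)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    q = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return scale, q


def dequantize_block(scale: float, q: List[int]) -> List[float]:
    """Recover approximate float weights from the integers and the scale."""
    return [scale * v for v in q]


weights = [0.12, -0.50, 0.33, 0.07, -0.21, 0.44, -0.02, 0.29]
scale, q = quantize_block(weights)
restored = dequantize_block(scale, q)
max_err = max(abs(a - b) for a, b in zip(weights, restored))

print("quantized integers:", q)
print(f"largest rounding error: {max_err:.4f} (bounded by scale/2 = {scale / 2:.4f})")
```

Each weight now needs 4 bits plus a share of the block's scale, at the cost of a rounding error of at most half the scale.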

Q: What is the difference between Q4_K_M quantization and F16?

A: Q4_K_M stores the model's weights at roughly 4 bits each, while F16 keeps them as 16-bit floating-point values, so F16 is a precision format rather than quantization in the strict sense. Q4_K_M is much more aggressive, resulting in a far smaller model but potentially lower accuracy. F16 preserves more accuracy, but the model is several times larger.

Q: Why is token generation speed important?

A: Token generation speed is crucial for the responsiveness and user experience of your LLM application. A faster token generation speed means the model can generate text more quickly, resulting in faster responses and a more fluid interaction.

Q: Is running an LLM locally always better than using cloud services?

A: Running an LLM locally can offer advantages like cost savings, faster response times, and greater control. However, cloud services often provide access to more powerful hardware and infrastructure, making them suitable for large-scale deployments and demanding applications.

Q: Where can I learn more about local LLMs?

A: There are great resources available online! Check out repositories like llama.cpp on GitHub, explore forums dedicated to LLMs and AI, and follow industry blogs and news articles for the latest advancements.

Keywords:

Llama3 8B, NVIDIA 3070 8GB, Token Generation Speed, Quantization, Q4_K_M, F16, Local LLM, Performance Optimization, Generative AI, Text Summarization, Creative Writing, Chatbots, Conversational AI, Model Size, Computational Demands, Model Complexity, Inference Time, User Experience