Optimizing Llama3 8B for Apple M3 Max: A Step-by-Step Approach

Introduction

The world of large language models (LLMs) is buzzing with excitement! These powerful AI systems are transforming industries from content creation to scientific research. Running these models locally on your own machine unlocks new possibilities – faster response times, increased privacy, and the ability to fine-tune them for specific tasks. But choosing the right hardware and optimizing your setup is crucial.

This guide dives deep into the performance of Llama3 8B on the Apple M3 Max, taking you from benchmark numbers to quantization choices and practical recommendations. It's a step-by-step journey for developers and geeks who want to get the most out of running these models locally.

Performance Analysis: Token Generation Speed Benchmarks

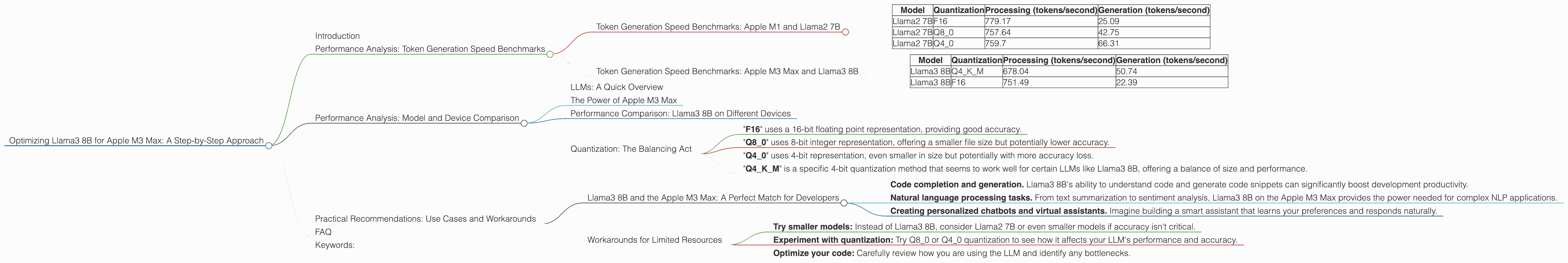

Token Generation Speed Benchmarks: Apple M3 Max and Llama2 7B

Let's start with a benchmark that matters: token generation speed, the number of tokens processed per second. For this analysis, we'll use a popular LLM, Llama2 7B, as a baseline. Here's how the Apple M3 Max performs with different quantization levels:

| Model | Quantization | Processing (tokens/second) | Generation (tokens/second) |
|---|---|---|---|
| Llama2 7B | F16 | 779.17 | 25.09 |
| Llama2 7B | Q8_0 | 757.64 | 42.75 |
| Llama2 7B | Q4_0 | 759.7 | 66.31 |

Key Takeaways:

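- The Apple M3 Max processes Llama2 7B prompts at roughly 760 to 780 tokens/second at every quantization level tested.

- Q4_0 processes prompts about 2.5% slower than F16 (759.7 vs. 779.17 tokens/second) but generates about 2.6x faster (66.31 vs. 25.09 tokens/second). A likely reason is that generation is limited by memory bandwidth, and smaller weights mean less data to read for each token.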
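
To put that trade-off in relative terms, here is a small sketch that divides each row of the Llama2 7B table by its F16 baseline. The helper name `relative_to_f16` is only an illustrative choice; the speeds are copied from the table above.

```python
# Speeds in tokens/second, copied from the Llama2 7B table above.
processing_tps = {"F16": 779.17, "Q8_0": 757.64, "Q4_0": 759.7}
generation_tps = {"F16": 25.09, "Q8_0": 42.75, "Q4_0": 66.31}


def relative_to_f16(speeds):
    """Return each speed divided by the F16 speed (F16 itself becomes 1.0)."""
    baseline = speeds["F16"]
    return {name: tps / baseline for name, tps in speeds.items()}


proc_ratio = relative_to_f16(processing_tps)
gen_ratio = relative_to_f16(generation_tps)

for name in generation_tps:
    print(f"{name}: processing x{proc_ratio[name]:.2f}, generation x{gen_ratio[name]:.2f}")
```

The processing ratios stay close to 1.0, while the generation ratios grow as the bit width shrinks.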

Token Generation Speed Benchmarks: Apple M3 Max and Llama3 8B

Now, let's see how the Apple M3 Max handles the more complex Llama3 8B model:

| Model | Quantization | Processing (tokens/second) | Generation (tokens/second) |
|---|---|---|---|
| Llama3 8B | Q4_K_M | 678.04 | 50.74 |
| Llama3 8B | F16 | 751.49 | 22.39 |

Key Takeaways:

- At F16, Llama3 8B processes prompts about 3.6% slower than Llama2 7B (751.49 vs. 779.17 tokens/second), likely due to the larger model size.

- Q4_K_M quantization generates about 2.3x faster than F16 (50.74 vs. 22.39 tokens/second), at the cost of roughly 10% slower prompt processing (678.04 vs. 751.49 tokens/second). For interactive use on Llama3 8B, that makes it the more attractive of the two.
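
If you want to collect comparable numbers on your own machine, one rough approach is to time how long a token stream takes to finish. The sketch below is not tied to any particular runtime: `measure_tokens_per_second` and `fake_stream` are hypothetical names, and `fake_stream` merely stands in for whatever streaming call your inference library provides.

```python
import time
from typing import Callable, Iterable, Tuple


def measure_tokens_per_second(stream_tokens: Callable[[], Iterable[str]]) -> Tuple[int, float]:
    """Consume a token stream and return (token_count, tokens_per_second)."""
    start = time.perf_counter()
    count = sum(1 for _ in stream_tokens())
    elapsed = time.perf_counter() - start
    rate = count / elapsed if elapsed > 0 else float("inf")
    return count, rate


def fake_stream():
    """Hypothetical stand-in for a real model's streaming output."""
    for word in "the quick brown fox jumps over the lazy dog".split():
        time.sleep(0.001)  # pretend each token takes about 1 ms to produce
        yield word


count, tps = measure_tokens_per_second(fake_stream)
print(f"{count} tokens at {tps:.1f} tokens/second")
```

A single short run like this is noisy, so it is worth repeating the measurement and averaging before comparing it with published figures.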

Performance Analysis: Model and Device Comparison

LLMs: A Quick Overview

Large language models (LLMs) are a type of artificial intelligence (AI) trained on massive datasets of text and code. They can generate text, translate languages, write different kinds of creative content, and answer your questions in an informative way – think of them as highly sophisticated language processing machines.

The Power of Apple M3 Max

The Apple M3 Max, with up to 40 GPU cores and unified memory shared between the CPU and GPU, is a beast for computational workloads. It's designed to handle demanding tasks like machine learning and video editing, and that power translates to fast local inference for LLMs.

Performance Comparison: Llama3 8B on Different Devices

We don't have benchmark data for Llama3 8B on other devices, so we can't compare them with the Apple M3 Max here.

Quantization: The Balancing Act

Quantization is a technique that shrinks an LLM by storing its weights at lower numeric precision, ideally without significantly harming accuracy. Think of it like compressing a large file: you lose some quality but gain space and speed.

- "F16" uses a 16-bit floating point representation. It is the unquantized baseline in these benchmarks, with good accuracy and the largest size.

- "Q8_0" uses 8-bit integer representation, offering a smaller file size but potentially lower accuracy.

- "Q4_0" uses 4-bit representation, even smaller in size but potentially with more accuracy loss.

- "Q4_K_M" is a specific 4-bit quantization method that offers a balance of size and performance. In the benchmarks above, it more than doubles Llama3 8B's generation speed relative to F16.
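
To make the idea concrete, here is a deliberately simplified sketch of block quantization: a group of weights shares one floating-point scale, and each weight is stored as a small integer. This is an illustration of the general principle only, not the actual on-disk layout of Q8_0, Q4_0, or Q4_K_M; the function names and sample weights are made up for the example.

```python
def quantize_block(values, bits=8):
    """Symmetric quantization of one block: one float scale plus small integers."""
    qmax = 2 ** (bits - 1) - 1  # 127 for 8-bit, 7 for 4-bit
    largest = max(abs(v) for v in values)
    scale = largest / qmax if largest > 0 else 1.0
    quantized = [round(v / scale) for v in values]
    return scale, quantized


def dequantize_block(scale, quantized):
    """Recover approximate floats from the stored integers."""
    return [scale * q for q in quantized]


# Made-up sample weights, purely for illustration.
weights = [0.12, -0.53, 0.98, -0.07, 0.31, -0.88, 0.45, 0.02]

for bits in (8, 4):
    scale, q = quantize_block(weights, bits)
    restored = dequantize_block(scale, q)
    worst = max(abs(a - b) for a, b in zip(weights, restored))
    print(f"{bits}-bit: max round-trip error = {worst:.4f}")
```

With fewer bits there are fewer integer levels to choose from, so the round-trip error grows. That is the accuracy cost traded for the smaller size.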

Practical Recommendations: Use Cases and Workarounds

Llama3 8B and the Apple M3 Max: A Perfect Match for Developers

This combination is ideal for developers who need fast local inference for:

- Code completion and generation. Llama3 8B's ability to understand code and generate code snippets can significantly boost development productivity.

- Natural language processing tasks. From text summarization to sentiment analysis, Llama3 8B on the Apple M3 Max provides the power needed for complex NLP applications.

- Creating personalized chatbots and virtual assistants. Imagine building a smart assistant that learns your preferences and responds naturally.

Workarounds for Limited Resources

If you're working with a less powerful device or have limited memory, here are some tips:

- Try smaller models: Instead of Llama3 8B, consider Llama2 7B or even smaller models if accuracy isn't critical.

- Experiment with quantization: Try Q8_0 or Q4_0 quantization to see how it affects your LLM's performance and accuracy.

- Optimize your code: Carefully review how you are using the LLM and identify any bottlenecks.
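
Before downloading anything, a back-of-the-envelope estimate can tell you whether a model's weights are likely to fit in memory. The sketch below assumes roughly 8 billion parameters for Llama3 8B and uses nominal bit widths; real model files are somewhat larger because they also store per-block scales and metadata, and inference needs extra memory for the context. The name `weight_size_gib` is illustrative.

```python
def weight_size_gib(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in GiB."""
    return n_params * bits_per_weight / 8 / 2**30


N_PARAMS = 8.0e9  # assumption: roughly 8 billion parameters for Llama3 8B

# Nominal bits per weight; actual quantized files use slightly more.
for name, bits in {"F16": 16, "8-bit": 8, "4-bit": 4}.items():
    print(f"{name}: ~{weight_size_gib(N_PARAMS, bits):.1f} GiB")
```

If the estimate is already close to your available memory, a lower bit width or a smaller model is the safer choice.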

FAQ

Q: What is the difference between processing and generation speed?

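A: Processing speed (often called prompt processing) refers to how fast the model can read the input text. All prompt tokens are known up front, so they can be handled in large batches, which is why these numbers reach hundreds of tokens per second. Generation speed refers to how fast the model can produce output tokens, one at a time, each depending on the tokens before it.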
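
As a rough illustration of how the two speeds combine, the sketch below estimates the time for a single request as prompt time plus generation time, using the Llama3 8B figures from the benchmark table. The prompt and output lengths are hypothetical, and the estimate ignores model loading and any variation in speed with context length.

```python
def estimated_seconds(prompt_tokens, output_tokens, processing_tps, generation_tps):
    """Estimate request time as prompt processing time plus generation time."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


# (processing, generation) in tokens/second, from the Llama3 8B table.
speeds = {"Q4_K_M": (678.04, 50.74), "F16": (751.49, 22.39)}

PROMPT_TOKENS = 1000  # hypothetical prompt length
OUTPUT_TOKENS = 200   # hypothetical response length

estimates = {
    name: estimated_seconds(PROMPT_TOKENS, OUTPUT_TOKENS, pp, tg)
    for name, (pp, tg) in speeds.items()
}
for name, seconds in estimates.items():
    print(f"{name}: ~{seconds:.1f} s")
```

Even with a prompt five times longer than the response, generation accounts for most of the estimated time, which is why the generation column matters most for interactive use.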

Q: How does quantization affect the accuracy of an LLM?

A: Quantization can slightly decrease the accuracy of an LLM, but the impact varies depending on the model and the quantization method. In general, lower bit quantization (e.g., Q4_0) can lead to greater accuracy loss.

Q: Why is the Apple M3 Max so good for LLMs?

A: The Apple M3 Max combines many GPU cores with high-bandwidth unified memory that the CPU and GPU share. Generation speed depends heavily on how quickly model weights can be read from memory, so this design is well-suited for running LLMs.

Q: Is it cheaper to run LLMs locally or in the cloud?

A: Running LLMs locally can be cheaper than using cloud services in the long run, especially if you have powerful hardware and don't need constant access to the latest models. However, cloud services offer flexibility, scalability, and access to a wider range of models.

Keywords:

Apple M3 Max, Llama3 8B, Llama2 7B, LLM, Large Language Model, Token Generation Speed, Processing Speed, Generation Speed, Quantization, F16, Q8_0, Q4_0, Q4_K_M, Performance Optimization, Local Inference, NLP, Natural Language Processing, Code Completion, Chatbots, Virtual Assistants, GPU, Development, Machine Learning, AI, Artificial Intelligence.