Optimizing Llama3 8B for Apple M2 Ultra: A Step-by-Step Approach

Introduction

The world of large language models (LLMs) is abuzz with excitement, and for good reason! These powerful AI systems can generate human-like text, translate languages, write different kinds of creative content, and answer your questions in an informative way. But harnessing the potential of LLMs requires some technical know-how and careful optimization.

This guide dives deep into the performance of Llama3 8B, a popular open-source LLM, on the mighty Apple M2 Ultra chip. We'll uncover insights into token generation speed, compare different model variants (with Llama2 7B as a baseline), and provide practical recommendations for maximizing your LLM experience on this powerhouse of a chip. Whether you're a seasoned developer or just starting out with LLMs, this guide will equip you with the knowledge and tools to make Llama3 8B sing on your M2 Ultra.

Performance Analysis: Token Generation Speed Benchmarks

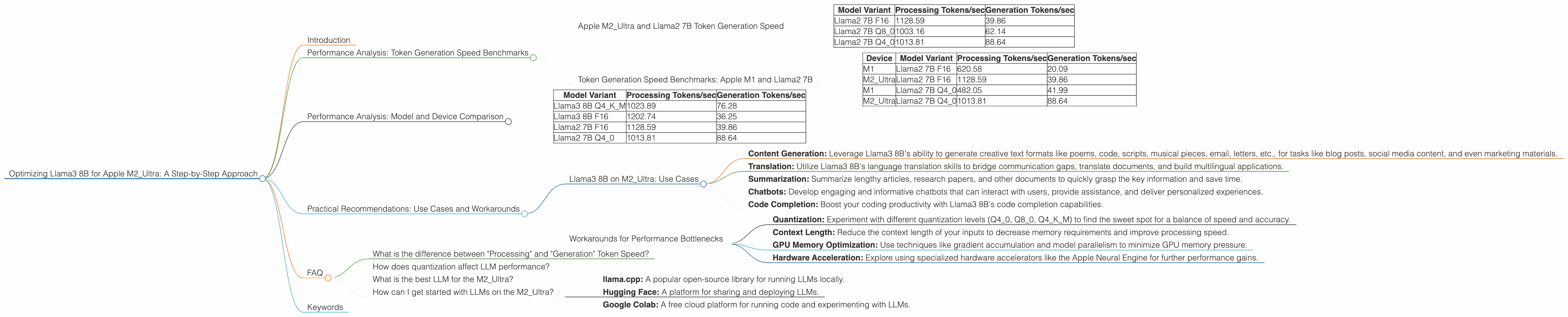

Apple M2 Ultra and Llama2 7B Token Generation Speed

The Apple M2 Ultra is a beast of a chip, packing 60 GPU cores (or 76 in some configurations) and 800 GB/s of memory bandwidth. Let's see how this translates to token generation speed with Llama2 7B, a popular LLM choice for its balance of performance and efficiency.

| Model Variant | Processing Tokens/sec | Generation Tokens/sec |
|---|---|---|
| Llama2 7B F16 | 1128.59 | 39.86 |
| Llama2 7B Q8_0 | 1003.16 | 62.14 |
| Llama2 7B Q4_0 | 1013.81 | 88.64 |

Key Takeaways:

- F16 (Half Precision): The F16 variant has the fastest prompt processing, at over 1,100 tokens/second, but the slowest generation, at about 40 tokens/second. Prompt processing handles many tokens in parallel and is largely compute-bound, while generation reads the model weights for every new token and so tends to be limited by memory bandwidth.

- Q8_0 (Quantized): Quantization is a technique that reduces the model's size and memory footprint by lowering the precision of its weights. The Q8_0 variant processes prompts about 11% slower than F16, but it generates roughly 1.6x faster, reaching over 62 tokens/second.

- Q4_0 (Quantized): The Q4_0 variant increases generation speed further, to over 88 tokens/second (about 2.2x F16). Its processing speed is close to Q8_0 and about 10% below F16.
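One way to see why the quantized variants generate faster: if generation is limited by how quickly the weights can be read from memory, then tokens/second can be at most the memory bandwidth divided by the model size. The sketch below works through that arithmetic. The parameter count (about 6.74 billion for Llama2 7B) and the bits-per-weight figures (16 for F16, 8.5 for Q8_0, 4.5 for Q4_0, counting per-block scale factors) are assumptions that do not come from the benchmark data, and the bound ignores KV cache reads and other overhead, so treat it as a rough ceiling rather than a prediction.

```python
# Rough ceiling on generation speed, assuming each generated token
# requires reading every model weight from memory once.

BANDWIDTH_GB_S = 800.0  # M2 Ultra memory bandwidth, as stated in the text
N_PARAMS = 6.74e9       # approximate Llama2 7B parameter count (assumption)

# Bits per weight, including per-block scales for quantized formats (assumption).
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}

# Measured generation speeds from the table above (tokens/second).
MEASURED_TOK_S = {"F16": 39.86, "Q8_0": 62.14, "Q4_0": 88.64}


def model_size_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the weights in gigabytes."""
    return n_params * bits_per_weight / 8 / 1e9


def bandwidth_ceiling_tok_s(size_gb: float, bandwidth_gb_s: float) -> float:
    """Upper bound on tokens/second if weight reads are the only cost."""
    return bandwidth_gb_s / size_gb


for name, bits in BITS_PER_WEIGHT.items():
    size = model_size_gb(N_PARAMS, bits)
    ceiling = bandwidth_ceiling_tok_s(size, BANDWIDTH_GB_S)
    measured = MEASURED_TOK_S[name]
    print(f"{name:5s} ~{size:5.1f} GB  ceiling ~{ceiling:6.1f} tok/s  "
          f"measured {measured:6.2f} tok/s ({measured / ceiling:.0%} of ceiling)")
```

Under these assumptions every measured speed sits below its ceiling, and the gap widens as the model shrinks, which suggests bandwidth is not the only limit for the smaller variants.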

Conclusion:

The M2 Ultra delivers strong performance with Llama2 7B. F16 is the fastest variant for prompt processing, while the Q8_0 and Q4_0 variants offer a significant bump in generation speed, making them suitable for applications where text output speed is paramount.

Token Generation Speed Benchmarks: Apple M1 and Llama2 7B

Let's compare the performance of the Apple M1 and M2 Ultra with Llama2 7B.

| Device | Model Variant | Processing Tokens/sec | Generation Tokens/sec |
|---|---|---|---|
| M1 | Llama2 7B F16 | 620.58 | 20.09 |
| M2 Ultra | Llama2 7B F16 | 1128.59 | 39.86 |
| M1 | Llama2 7B Q4_0 | 482.05 | 41.99 |
| M2 Ultra | Llama2 7B Q4_0 | 1013.81 | 88.64 |

Key Takeaways:

- The M2 Ultra outperforms the M1 in both processing and generation speed for both variants tested (F16 and Q4_0).

- The M2 Ultra processing speed is approximately 1.8x faster than the M1 for the F16 variant and 2.1x faster for the Q4_0 variant.

- The M2 Ultra generation speed is nearly 2x faster than the M1 for F16 and about 2.1x faster for Q4_0.
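The ratios quoted above can be reproduced directly from the table. The following is a minimal sketch; the dictionary layout and function name are illustrative choices, not part of any benchmark tool.

```python
# Tokens/second from the table above: (processing, generation).
M1 = {"F16": (620.58, 20.09), "Q4_0": (482.05, 41.99)}
M2_ULTRA = {"F16": (1128.59, 39.86), "Q4_0": (1013.81, 88.64)}


def speedups(baseline, candidate):
    """Return {variant: (processing_ratio, generation_ratio)} of candidate over baseline."""
    return {
        variant: (
            candidate[variant][0] / baseline[variant][0],
            candidate[variant][1] / baseline[variant][1],
        )
        for variant in baseline
    }


for variant, (processing, generation) in speedups(M1, M2_ULTRA).items():
    print(f"{variant}: processing {processing:.2f}x, generation {generation:.2f}x")
# F16: processing 1.82x, generation 1.98x
# Q4_0: processing 2.10x, generation 2.11x
```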

Conclusion:

The M2 Ultra delivers a significant performance boost compared to the M1 for running Llama2 7B, making it a more powerful choice for applications requiring faster token processing and generation.

Performance Analysis: Model and Device Comparison

Now, let's delve into the performance of Llama3 8B on the M2 Ultra. We'll compare it to the previously discussed Llama2 7B and explore different quantization levels.

| Model Variant | Processing Tokens/sec | Generation Tokens/sec |
|---|---|---|
| Llama3 8B Q4_K_M | 1023.89 | 76.28 |
| Llama3 8B F16 | 1202.74 | 36.25 |
| Llama2 7B F16 | 1128.59 | 39.86 |
| Llama2 7B Q4_0 | 1013.81 | 88.64 |

Key Takeaways:

- In processing speed, Llama3 8B is modestly ahead of Llama2 7B: about 7% faster at F16 (1202.74 vs. 1128.59 tokens/second) and about 1% faster at 4-bit (Q4_K_M vs. Q4_0). The benchmark data does not show the cause.

- In generation speed, Llama3 8B is slower than Llama2 7B: about 9% slower at F16 (36.25 vs. 39.86 tokens/second) and about 14% slower at 4-bit (76.28 vs. 88.64 tokens/second). This is consistent with Llama3 8B being the larger model. Note that Q4_K_M and Q4_0 are different quantization schemes, so the 4-bit comparison is not exact.
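The percentage differences between the two models follow from the table. This short sketch computes them; the "4-bit" label groups Q4_0 (Llama2) with Q4_K_M (Llama3) purely for comparison, which is a simplification since the two formats differ.

```python
# Tokens/second on the M2 Ultra from the table above: (processing, generation).
LLAMA2_7B = {"F16": (1128.59, 39.86), "4-bit": (1013.81, 88.64)}  # 4-bit = Q4_0
LLAMA3_8B = {"F16": (1202.74, 36.25), "4-bit": (1023.89, 76.28)}  # 4-bit = Q4_K_M


def percent_change(old: float, new: float) -> float:
    """Relative change from old to new, in percent."""
    return (new - old) / old * 100.0


for precision in LLAMA2_7B:
    processing = percent_change(LLAMA2_7B[precision][0], LLAMA3_8B[precision][0])
    generation = percent_change(LLAMA2_7B[precision][1], LLAMA3_8B[precision][1])
    print(f"{precision}: processing {processing:+.1f}%, generation {generation:+.1f}%")
# F16: processing +6.6%, generation -9.1%
# 4-bit: processing +1.0%, generation -13.9%
```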

Conclusion:

On the M2 Ultra, Llama3 8B processes prompts slightly faster than Llama2 7B but generates text somewhat slower. The Q4_0 variant of Llama2 7B has the highest generation speed in this comparison, while the Q4_K_M variant of Llama3 8B still reaches over 76 tokens/second.

Please note that there is no data on the performance of Llama3 70B on the M2 Ultra. We recommend checking the relevant repositories or benchmarks to stay updated on the latest performance figures.

Practical Recommendations: Use Cases and Workarounds

Llama3 8B on M2 Ultra: Use Cases

The M2 Ultra’s capabilities combined with Llama3 8B’s strengths open up a world of potential for various use cases. Here are a few examples:

- Content Generation: Use Llama3 8B to draft text in many formats, such as poems, scripts, emails, and letters, for tasks like blog posts, social media content, and marketing materials.

- Translation: Utilize Llama3 8B's language translation skills to bridge communication gaps, translate documents, and build multilingual applications.

- Summarization: Summarize lengthy articles, research papers, and other documents to quickly grasp the key information and save time.

- Chatbots: Develop engaging and informative chatbots that can interact with users, provide assistance, and deliver personalized experiences.

- Code Completion: Boost your coding productivity with Llama3 8B's code completion capabilities.

Workarounds for Performance Bottlenecks

While the M2 Ultra is a powerful chip, there are ways to optimize your setup for even better performance:

- Quantization: Experiment with different quantization levels (Q4_0, Q8_0, Q4_K_M) to find the sweet spot for a balance of speed and accuracy.

- Context Length: Reduce the context length of your inputs to decrease memory requirements and improve processing speed.

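- GPU Memory Optimization: For inference, memory pressure comes mainly from the model weights and the KV cache, so a lower-precision quantization and a shorter context are the main levers. Techniques such as gradient accumulation apply to training rather than inference, and splitting a model across devices is unlikely to be needed for an 8B model on this chip.

- Hardware Acceleration: llama.cpp runs on the Apple GPU through Metal. Using the Apple Neural Engine would require a different runtime, such as Core ML, and any gains would need to be measured.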
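To make the context-length point concrete, the sketch below estimates how the KV cache grows with context size. The architecture values (32 layers, 8 key/value heads, head dimension 128) are assumptions based on the published Llama3 8B configuration, and a 16-bit cache is assumed; none of these come from the benchmark data in this guide.

```python
def kv_cache_bytes(n_ctx: int, n_layers: int = 32, n_kv_heads: int = 8,
                   head_dim: int = 128, bytes_per_value: int = 2) -> int:
    """Estimate KV cache size for a given context length.

    Defaults are assumed Llama3 8B values with a 16-bit cache.
    The factor of 2 accounts for storing both keys and values.
    """
    return 2 * n_layers * n_kv_heads * head_dim * bytes_per_value * n_ctx


for n_ctx in (2048, 4096, 8192):
    mib = kv_cache_bytes(n_ctx) / 2**20
    print(f"context {n_ctx:5d}: ~{mib:.0f} MiB")
# context  2048: ~256 MiB
# context  4096: ~512 MiB
# context  8192: ~1024 MiB
```

Under these assumptions the cache costs 128 KiB per token, so halving the context halves the cache, though for an 8B model this is small next to the weights themselves.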

FAQ

What is the difference between "Processing" and "Generation" Token Speed?

Processing speed refers to the rate at which the LLM processes the input tokens. It's essentially how fast the model can read and understand the text you provide. Generation speed refers to the rate at which the model generates new output tokens. This is how quickly the model can produce text based on its understanding of the input.
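The two speeds combine into a rough end-to-end response time: the prompt is read at the processing rate, then the answer is written at the generation rate. The sketch below applies this to the Llama3 8B figures from this guide. The workload (a 1,000-token prompt and a 200-token answer) is a hypothetical example, and the model assumes both rates stay constant regardless of context length, which is a simplification.

```python
def response_time_s(prompt_tokens: int, output_tokens: int,
                    processing_tok_s: float, generation_tok_s: float) -> float:
    """Estimated seconds to read the prompt and then generate the output."""
    return prompt_tokens / processing_tok_s + output_tokens / generation_tok_s


# (processing, generation) tokens/second on the M2 Ultra, from the benchmark table.
VARIANTS = {
    "Llama3 8B F16": (1202.74, 36.25),
    "Llama3 8B Q4_K_M": (1023.89, 76.28),
}

for name, (processing, generation) in VARIANTS.items():
    seconds = response_time_s(1000, 200, processing, generation)
    print(f"{name}: ~{seconds:.1f} s")
# Llama3 8B F16: ~6.3 s
# Llama3 8B Q4_K_M: ~3.6 s
```

Even though F16 reads the prompt faster, generation dominates the total for this workload, so the quantized variant finishes sooner.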

How does quantization affect LLM performance?

Quantization is a technique that reduces the precision of the model's weights, making it smaller and more efficient. This can lead to slightly lower accuracy but significantly improves performance, particularly in generation speed.
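As an illustration of the idea, here is a minimal sketch of block-wise 8-bit quantization: a block of weights is stored as small integers plus one shared scale factor. This is not llama.cpp's actual implementation; formats such as Q8_0 and Q4_0 are similar in spirit but use fixed block sizes and packed storage. The sample weights are made up.

```python
def quantize_block(weights):
    """Map floats to integers in [-127, 127] plus a single float scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quants = [round(w / scale) for w in weights]
    return scale, quants


def dequantize_block(scale, quants):
    """Recover approximate floats from the integers and the scale."""
    return [q * scale for q in quants]


weights = [0.12, -0.50, 0.33, 0.07, -0.21, 0.48, -0.02, 0.29]  # made-up values
scale, quants = quantize_block(weights)
restored = dequantize_block(scale, quants)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("quantized:", quants)
print(f"max round-trip error: {max_error:.4f}")
```

The round-trip error is bounded by half the scale, which is the accuracy cost paid for storing each weight in fewer bits.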

What is the best LLM for the M2 Ultra?

The optimal LLM for the M2 Ultra depends on your specific use case and requirements. For applications where high processing speed is vital, the F16 variant of Llama3 8B has the highest processing speed in these benchmarks (about 1,200 tokens/second). For applications where generation speed is paramount, the Q4_0 variant of Llama2 7B (about 89 tokens/second) and the Q4_K_M variant of Llama3 8B (about 76 tokens/second) are the fastest.

How can I get started with LLMs on the M2 Ultra?

There are several resources available to help you get started with LLMs on the M2 Ultra:

- llama.cpp: A popular open-source library for running LLMs locally.

- Hugging Face: A platform for sharing and deploying LLMs.

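- Google Colab: A free cloud platform for running code and experimenting with LLMs. It runs in the cloud rather than on your Mac, so it is useful for experimenting but does not exercise the M2 Ultra.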
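For measuring processing and generation speed on your own machine, llama.cpp includes a benchmarking tool called llama-bench. This guide does not state how its numbers were produced, so the sketch below is only one plausible way to gather comparable figures. It builds a llama-bench command using the `-m` (model), `-p` (prompt tokens), `-n` (generated tokens), and `-ngl` (GPU layers) options. The model path is hypothetical, and the token counts are illustrative defaults.

```python
import shutil
import subprocess


def build_bench_command(model_path, prompt_tokens=512, gen_tokens=128,
                        gpu_layers=99, binary="llama-bench"):
    """Build an argument list for llama.cpp's llama-bench tool."""
    return [
        binary,
        "-m", model_path,          # path to a GGUF model file
        "-p", str(prompt_tokens),  # prompt length for the processing test
        "-n", str(gen_tokens),     # tokens to generate for the generation test
        "-ngl", str(gpu_layers),   # layers to offload to the GPU
    ]


if __name__ == "__main__":
    # Hypothetical model path; replace with the location of your own file.
    cmd = build_bench_command("models/llama3-8b-q4_k_m.gguf")
    if shutil.which(cmd[0]) is None:
        print("llama-bench not found on PATH; would run:", " ".join(cmd))
    else:
        subprocess.run(cmd, check=False)
```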

Keywords

Llama3 8B, Apple M2 Ultra, LLM, Large Language Model, Token Generation Speed, Bandwidth, GPU Cores, Quantization, F16, Q8_0, Q4_0, Q4_K_M, Processing Speed, Generation Speed, Use Cases, Content Generation, Translation, Summarization, Chatbots, Code Completion, Workarounds, Performance Bottlenecks, Context Length, GPU Memory Optimization, Hardware Acceleration, Apple Neural Engine, llama.cpp, Hugging Face, Google Colab