Optimizing Llama3 8B for Apple M1 Max: A Step-by-Step Approach

Introduction

Large Language Models (LLMs) are evolving rapidly, and running them locally is increasingly feasible. Apple's M1 Max chip, with its strong performance and power efficiency, has become a popular choice for local LLM deployments. Getting the most out of this hardware, however, requires careful optimization. This article examines how the Llama3 8B model performs on the M1 Max, walks through the main optimization choices, and highlights key insights for your projects.

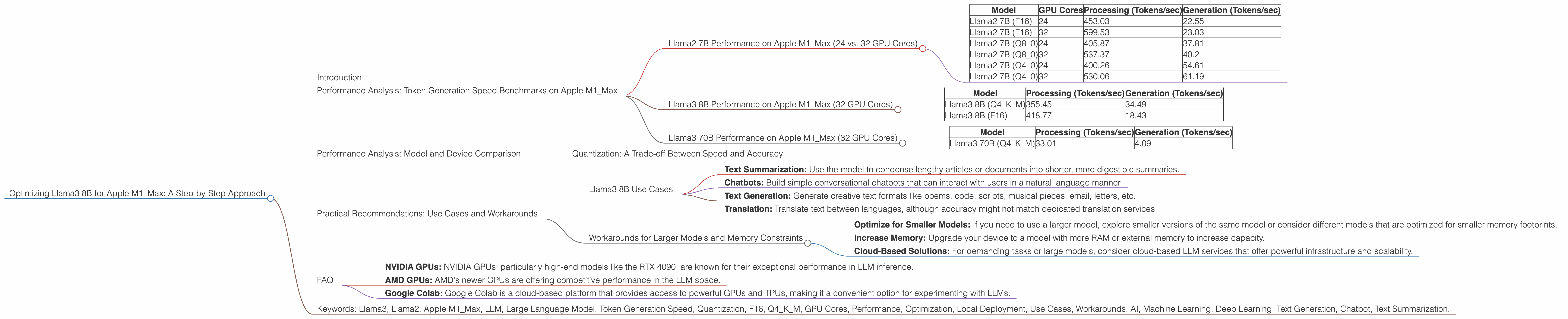

Performance Analysis: Token Generation Speed Benchmarks on Apple M1 Max

Llama2 7B Performance on Apple M1 Max (24 vs. 32 GPU Cores)

Before turning to Llama3 8B, it helps to look at Llama2 7B on the M1 Max for context. The M1 Max comes in two GPU configurations: 24 cores and 32 cores. The 32-core version processes prompts about 32% faster at every quantization level (F16, Q8_0, Q4_0), roughly in line with its 33% more GPU cores. Generation speed improves far less, by only 2% to 12%, because generating each token is limited mainly by memory bandwidth, which is the same 400 GB/s on both configurations.

| Model | GPU Cores | Prompt Processing (tokens/s) | Generation (tokens/s) |
|---|---|---|---|
| Llama2 7B (F16) | 24 | 453.03 | 22.55 |
| Llama2 7B (F16) | 32 | 599.53 | 23.03 |
| Llama2 7B (Q8_0) | 24 | 405.87 | 37.81 |
| Llama2 7B (Q8_0) | 32 | 537.37 | 40.2 |
| Llama2 7B (Q4_0) | 24 | 400.26 | 54.61 |
| Llama2 7B (Q4_0) | 32 | 530.06 | 61.19 |
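To make the scaling pattern concrete, this short Python sketch recomputes the 24-to-32-core speedups from the table. The figures are copied from the table; the dictionary layout and the `speedups` function name are illustrative choices, not part of any benchmark tool.

```python
# Benchmark figures copied from the Llama2 7B table (tokens/sec).
# Layout: quantization -> ((processing_24, generation_24), (processing_32, generation_32))
LLAMA2_7B = {
    "F16": ((453.03, 22.55), (599.53, 23.03)),
    "Q8_0": ((405.87, 37.81), (537.37, 40.2)),
    "Q4_0": ((400.26, 54.61), (530.06, 61.19)),
}

CORE_RATIO = 32 / 24  # the 32-core GPU has ~1.33x as many cores


def speedups(results):
    """Return {quant: (processing_speedup, generation_speedup)} going from 24 to 32 cores."""
    out = {}
    for quant, ((pp24, tg24), (pp32, tg32)) in results.items():
        out[quant] = (pp32 / pp24, tg32 / tg24)
    return out


if __name__ == "__main__":
    print(f"GPU core ratio: {CORE_RATIO:.2f}x")
    for quant, (pp, tg) in speedups(LLAMA2_7B).items():
        print(f"{quant:5s} processing {pp:.2f}x  generation {tg:.2f}x")
```

Prompt processing improves by about 1.32x in every row, close to the 1.33x increase in GPU cores, while generation improves by only 1.02x to 1.12x. That pattern fits the common explanation that prompt processing is compute-bound while token generation is mostly memory-bandwidth-bound.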

Llama3 8B Performance on Apple M1 Max (32 GPU Cores)

The Apple M1 Max (32 GPU cores) runs the Llama3 8B model well, with results that vary markedly with the chosen precision. Here is how F16 compares with Q4_K_M quantization:

| Model | Prompt Processing (tokens/s) | Generation (tokens/s) |
|---|---|---|
| Llama3 8B (Q4_K_M) | 355.45 | 34.49 |
| Llama3 8B (F16) | 418.77 | 18.43 |

Llama3 70B Performance on Apple M1 Max (32 GPU Cores)

The Apple M1 Max struggles with the much larger Llama3 70B model. It can run the model, but generation drops to about 4 tokens/sec, roughly one eighth of the Llama3 8B Q4_K_M rate. The main reason is size: even at Q4_K_M, the 70B weights occupy roughly 40 GB, and every generated token must read all of them from memory, so generation speed is capped by memory bandwidth.

| Model | Prompt Processing (tokens/s) | Generation (tokens/s) |
|---|---|---|
| Llama3 70B (Q4_K_M) | 33.01 | 4.09 |

Important: No F16 result is available for Llama3 70B. At 16 bits per weight, its weights alone would need about 140 GB, more than the 64 GB maximum of any M1 Max configuration.
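A back-of-the-envelope calculation shows why model size dominates here. This Python sketch estimates weights-only memory and a bandwidth-based ceiling on generation speed, assuming each generated token streams every weight from memory once. The 400 GB/s bandwidth and 64 GB maximum memory are Apple's M1 Max specifications; the parameter counts (8.0B and 70.6B) are approximate, and the 4.85 bits per weight for Q4_K_M is an assumed average, since that format mixes precisions across tensors.

```python
# Rough, weights-only estimates for the M1 Max. Parameter counts and the Q4_K_M
# bits-per-weight figure are approximations chosen for illustration.
M1_MAX_BANDWIDTH_GBPS = 400.0  # Apple's quoted M1 Max memory bandwidth, GB/s
M1_MAX_MAX_MEMORY_GB = 64.0    # largest M1 Max unified-memory configuration

PARAMS = {"Llama3 8B": 8.0e9, "Llama3 70B": 70.6e9}  # approximate parameter counts
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.85}      # Q4_K_M: assumed average


def weight_gb(params: float, bits: float) -> float:
    """Weights-only size in GB (1 GB = 1e9 bytes); ignores KV cache and buffers."""
    return params * bits / 8 / 1e9


def generation_ceiling(size_gb: float,
                       bandwidth_gbps: float = M1_MAX_BANDWIDTH_GBPS) -> float:
    """Upper bound on tokens/sec if each new token streams all weights once."""
    return bandwidth_gbps / size_gb


if __name__ == "__main__":
    for model, n in PARAMS.items():
        for quant, bits in BITS_PER_WEIGHT.items():
            size = weight_gb(n, bits)
            fits = size < M1_MAX_MAX_MEMORY_GB
            print(f"{model:10s} {quant:6s} {size:6.1f} GB  "
                  f"fits in 64 GB: {str(fits):5s}  "
                  f"ceiling ~{generation_ceiling(size):5.1f} tok/s")
```

Under these assumptions the ceilings are about 25 tok/s for Llama3 8B F16, about 82 tok/s for Llama3 8B Q4_K_M, and about 9 tok/s for Llama3 70B Q4_K_M. The measured 18.43, 34.49, and 4.09 tok/s all sit below those bounds, as an upper bound should. A "fits" result only means the weights alone fit; the KV cache, the operating system, and other applications need memory too.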

Performance Analysis: Model and Device Comparison

Quantization: A Trade-off Between Speed and Accuracy

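The tables above show how quantization affects Llama3 8B on the M1 Max. Quantization reduces the numeric precision of a model's weights, which shrinks the model and reduces how much data must be read from memory for each generated token. F16 (16-bit half-precision floating point) is the unquantized baseline here and preserves the most accuracy. Q4_K_M is a 4-bit "k-quant" format from llama.cpp; the "M" denotes the medium variant, which keeps some tensors at higher precision. It trades some accuracy for a much smaller footprint. The speed gain is not uniform: on the M1 Max, Q4_K_M generates tokens about 1.9x faster than F16 (34.49 vs. 18.43 tokens/sec) yet processes prompts about 15% slower (355.45 vs. 418.77 tokens/sec), likely because dequantizing the weights adds compute work.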
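To illustrate what "reducing precision" means, here is a simplified Python sketch of block-wise quantization, loosely modeled on the idea behind llama.cpp's Q8_0 format, in which each block of 32 weights shares one scale. It uses a plain symmetric "absmax" scheme and is not the exact storage format of Q8_0, Q4_0, or Q4_K_M (the k-quants add super-blocks and mixed per-tensor precision, which are not shown). The weights are synthetic, not taken from a real model.

```python
import math

# Simplified block-wise "absmax" quantization, loosely modeled on the idea behind
# llama.cpp's Q8_0 format: each block of 32 weights shares one float scale and
# stores small signed integers. This is an illustration, not the real file format.
BLOCK_SIZE = 32


def quantize_block(block, bits=8):
    """Return (scale, ints) with ints in [-qmax, qmax], qmax = 2**(bits-1) - 1."""
    qmax = 2 ** (bits - 1) - 1  # 127 for 8-bit, 7 for 4-bit
    amax = max(abs(x) for x in block)
    scale = amax / qmax if amax > 0 else 1.0
    return scale, [round(x / scale) for x in block]


def quantize(weights, bits=8):
    """Split weights into BLOCK_SIZE chunks and quantize each chunk independently."""
    return [quantize_block(weights[i:i + BLOCK_SIZE], bits)
            for i in range(0, len(weights), BLOCK_SIZE)]


def dequantize(blocks):
    """Rebuild approximate floats by multiplying each integer by its block's scale."""
    return [scale * q for scale, ints in blocks for q in ints]


def max_error(weights, bits):
    """Largest absolute difference between original and round-tripped weights."""
    restored = dequantize(quantize(weights, bits))
    return max(abs(a - b) for a, b in zip(weights, restored))


if __name__ == "__main__":
    # Deterministic stand-in for a weight tensor (synthetic, not real model weights).
    weights = [0.05 * math.sin(0.37 * i) for i in range(256)]
    for bits in (8, 4):
        print(f"{bits}-bit: max abs error = {max_error(weights, bits):.6f}")
```

With 4 bits each block has only 15 integer levels instead of 255, so the rounding error per weight is roughly 18 times larger. That is the accuracy cost the paragraph above describes, paid in exchange for storing and reading far fewer bytes.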

Think of quantization as writing a recipe with different levels of detail. A recipe with precise measurements (F16) gives the most faithful results but takes longer to work through. A simplified recipe with rounded measurements (Q4_K_M) is quicker to follow but may be less exact.

Practical Recommendations: Use Cases and Workarounds

Llama3 8B Use Cases

With reasonable speed and accuracy, Llama3 8B on the M1 Max is a solid choice for a variety of tasks:

- Text Summarization: Use the model to condense lengthy articles or documents into shorter, more digestible summaries.

- Chatbots: Build simple conversational chatbots that can interact with users in a natural language manner.

- Text Generation: Generate creative text formats such as poems, code, scripts, musical pieces, emails, and letters.

- Translation: Translate text between languages, although accuracy might not match dedicated translation services.

Workarounds for Larger Models and Memory Constraints

While the M1 Max struggles with larger models like Llama3 70B, consider these options:

- Optimize for Smaller Models: If a large model is too slow or does not fit in memory, try a smaller variant of the same model family, a lower-precision quantization, or a different model designed for a smaller memory footprint.

- Increase Memory: Apple silicon memory cannot be upgraded after purchase, so running larger models means choosing a machine with more unified memory (the M1 Max tops out at 64 GB).

- Cloud-Based Solutions: For demanding tasks or large models, consider cloud-based LLM services that offer powerful infrastructure and scalability.
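When weighing smaller models against more memory, a quick feasibility check helps. This hypothetical Python helper lists which Llama3 model and quantization pairs fit a given amount of unified memory. Q8_0 is counted at 8.5 bits per weight (llama.cpp stores 32 8-bit values plus one 16-bit scale per block); the parameter counts and the 4.85 bits per weight for Q4_K_M are approximations, and the 75% usable fraction is an assumed safety margin, not an Apple or llama.cpp constant.

```python
# Hypothetical planning helper: which Llama3 model/quantization pairs fit a given
# amount of unified memory? Parameter counts and the Q4_K_M bits-per-weight are
# approximations; the 75% usable fraction is an assumed safety margin, not an
# Apple or llama.cpp constant.
PARAMS = {"Llama3 8B": 8.0e9, "Llama3 70B": 70.6e9}
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_K_M": 4.85}


def candidates(memory_gb: float, usable_fraction: float = 0.75):
    """Return (model, quant, weights_gb) tuples that fit the budget, largest first."""
    budget = memory_gb * usable_fraction
    fits = []
    for model, n_params in PARAMS.items():
        for quant, bits in BITS_PER_WEIGHT.items():
            size_gb = n_params * bits / 8 / 1e9  # weights only
            if size_gb <= budget:
                fits.append((model, quant, round(size_gb, 1)))
    return sorted(fits, key=lambda t: t[2], reverse=True)


if __name__ == "__main__":
    for mem in (32, 64):
        print(f"{mem} GB:", candidates(mem))
```

Under these assumptions a 32 GB machine is limited to Llama3 8B variants, while a 64 GB M1 Max can also hold Llama3 70B at Q4_K_M but not at Q8_0 or F16. Adjust `usable_fraction` to match how much memory you are willing to leave for the KV cache and other applications.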

FAQ

Q: What is Llama3 8B, and why is it important?

A: Llama3 8B is a Large Language Model developed by Meta AI. It can generate human-like text, translate languages, write different kinds of creative content, and answer questions in an informative way. It matters because it offers strong capabilities while remaining small enough to run on consumer hardware such as the M1 Max.

Q: How does quantization affect the performance of a model?

A: Quantization is like simplifying a complex model by using fewer digits to represent its parameters. This makes the model smaller, faster, and more memory-efficient, but it can also lead to a slight decrease in accuracy. It's a trade-off where you gain speed and efficiency at the expense of some accuracy.

Q: What are some alternative devices or platforms for running LLMs locally?

A: While the Apple M1 Max is a great option, other devices and platforms are also suitable for running LLMs. Some examples include:

- NVIDIA GPUs: NVIDIA GPUs, particularly high-end models like the RTX 4090, are known for their exceptional performance in LLM inference.

- AMD GPUs: AMD's newer GPUs offer competitive performance in the LLM space.

- Google Colab: Google Colab is a cloud-based platform rather than a local one, but it provides access to powerful GPUs and TPUs, making it a convenient option for experimenting with LLMs.