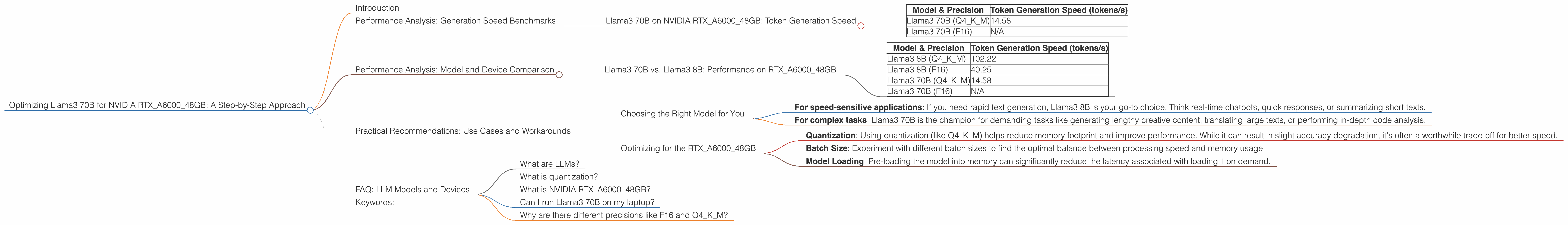

Optimizing Llama3 70B for NVIDIA RTX A6000 48GB: A Step by Step Approach

Introduction

The world of large language models (LLMs) is evolving rapidly. Llama3, the latest iteration of the Llama family, brings impressive advances in performance and capabilities. But its largest variant is massive, so running it locally can be a challenge, especially for resource-constrained developers. This article focuses on optimizing Llama3 70B for the NVIDIA RTX A6000 48GB, a powerful GPU designed for demanding applications. We'll dive into performance benchmarks, model comparisons, and practical recommendations to get the most out of your local Llama3 setup.

Performance Analysis: Generation Speed Benchmarks

Llama3 70B on NVIDIA RTX A6000 48GB: Token Generation Speed

Let's get to the juicy stuff! We want to know how fast Llama3 70B can generate text on the RTX A6000 48GB, measured in tokens per second (tokens/s).
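The benchmark tool behind these numbers isn't specified. If you want to measure a tokens/s figure on your own setup, a minimal sketch like the one below works with any streaming interface that yields tokens one at a time. `measure_generation_speed` and `fake_token_stream` are hypothetical helpers; the fake stream only stands in for a real model. The timer starts at the first token so that prompt processing is excluded and only generation is measured.

```python
import time
from typing import Iterable, Iterator, Tuple


def measure_generation_speed(tokens: Iterable[str]) -> Tuple[int, float]:
    """Consume a token stream and return (timed_tokens, tokens_per_second).

    The clock starts when the first token arrives, so prompt processing
    (time to first token) is excluded and only generation is timed.
    """
    stream = iter(tokens)
    if next(stream, None) is None:  # wait for the first token; empty stream -> no result
        return 0, 0.0
    start = time.perf_counter()
    timed_tokens = 0
    for _ in stream:  # count every token produced after the first one
        timed_tokens += 1
    elapsed = time.perf_counter() - start
    return timed_tokens, (timed_tokens / elapsed if elapsed > 0 else 0.0)


def fake_token_stream(n: int, delay_s: float) -> Iterator[str]:
    """Hypothetical stand-in for a real model: yields n tokens, one every delay_s seconds."""
    for i in range(n):
        time.sleep(delay_s)
        yield f"tok{i}"


count, speed = measure_generation_speed(fake_token_stream(11, 0.01))
print(f"{count} tokens at {speed:.1f} tokens/s")
```

In a real run, pass the model's streaming output instead of `fake_token_stream` and generate a few hundred tokens so that timer noise averages out.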

| Model & Precision | Token Generation Speed (tokens/s) |
|---|---|
| Llama3 70B (Q4_K_M) | 14.58 |
| Llama3 70B (F16) | N/A |

As you can see, Llama3 70B with Q4_K_M quantization generates about 14.58 tokens per second, which is respectable for a model of this size. The missing F16 result is expected: at 16 bits per weight, the 70B parameters alone take roughly 140 GB, far more than the card's 48 GB, so the model cannot run at F16 on a single RTX A6000. Where F16 does fit, it preserves more accuracy than Q4_K_M but generates more slowly, as the Llama3 8B results in the next section show.
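The memory arithmetic behind the missing F16 number is simple: parameters × bits per weight ÷ 8 gives bytes. The sketch below assumes 16 bits per weight for F16 and roughly 4.8 bits per weight as an approximate average for Q4_K_M; `weight_memory_gb` is a hypothetical helper, and the estimate covers weights only, not the KV cache or runtime buffers.

```python
# Approximate bits per weight (assumptions: exact for F16, a rough average for Q4_K_M).
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.8}
GPU_MEMORY_GB = 48  # RTX A6000


def weight_memory_gb(params_billion: float, precision: str) -> float:
    """Return the approximate size of the model weights in GB (10**9 bytes)."""
    # params_billion * 1e9 weights * bits / 8 bits-per-byte / 1e9 bytes-per-GB
    return params_billion * BITS_PER_WEIGHT[precision] / 8


for precision in ("F16", "Q4_K_M"):
    size = weight_memory_gb(70, precision)
    print(f"Llama3 70B {precision}: ~{size:.0f} GB of weights, "
          f"fits in {GPU_MEMORY_GB} GB: {size < GPU_MEMORY_GB}")
```

The same formula gives about 16 GB for Llama3 8B at F16, which is why that configuration does fit on the card and has a measured result.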

Performance Analysis: Model and Device Comparison

Llama3 70B vs. Llama3 8B: Performance on RTX A6000 48GB

Comparing Llama3 70B with its smaller sibling, Llama3 8B, sheds light on the impact of model size on performance.

| Model & Precision | Token Generation Speed (tokens/s) |
|---|---|
| Llama3 8B (Q4_K_M) | 102.22 |
| Llama3 8B (F16) | 40.25 |
| Llama3 70B (Q4_K_M) | 14.58 |
| Llama3 70B (F16) | N/A |

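As expected, Llama3 8B is far faster than Llama3 70B: about 7x at Q4_K_M (102.22 vs. 14.58 tokens/s). Token generation is largely limited by memory bandwidth, because producing each token requires reading the model's weights from VRAM, so a smaller model with fewer bytes to read generates proportionally faster. The same effect explains why Llama3 8B runs about 2.5x faster at Q4_K_M than at F16. In return for its lower speed, the larger Llama3 70B offers much greater capacity for complex tasks and richer outputs.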
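A rough way to sanity-check these numbers is to divide memory bandwidth by the weight size, which gives a ceiling on single-stream tokens/s if every weight is read once per token. The sketch assumes 768 GB/s (NVIDIA's published RTX A6000 bandwidth) and approximately 4.8 bits per weight for Q4_K_M. `max_tokens_per_second` is a hypothetical helper; the measured values are the ones from the table above.

```python
# Assumption: single-stream generation is memory-bandwidth bound, i.e. each new
# token requires reading every weight from VRAM once.
A6000_BANDWIDTH_GB_S = 768.0  # assumed: NVIDIA's published RTX A6000 figure


def max_tokens_per_second(weights_gb: float,
                          bandwidth_gb_s: float = A6000_BANDWIDTH_GB_S) -> float:
    """Upper bound on tokens/s when each token reads all weights once."""
    return bandwidth_gb_s / weights_gb


# name -> (approximate weight size in GB, measured tokens/s from the table)
models = {
    "Llama3 8B Q4_K_M": (8 * 4.8 / 8, 102.22),
    "Llama3 8B F16": (8 * 16 / 8, 40.25),
    "Llama3 70B Q4_K_M": (70 * 4.8 / 8, 14.58),
}

for name, (size_gb, measured) in models.items():
    ceiling = max_tokens_per_second(size_gb)
    print(f"{name}: ceiling ~{ceiling:.0f} tokens/s, measured {measured} tokens/s")
```

Each measured figure lands below its ceiling (roughly 160, 48, and 18 tokens/s respectively), which is consistent with the bandwidth explanation; the gap reflects KV-cache reads, compute, and other overheads the estimate ignores.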

Practical Recommendations: Use Cases and Workarounds

Choosing the Right Model for You

Choosing between Llama3 70B and Llama3 8B depends on your specific needs and priorities.

- For speed-sensitive applications: If you need rapid text generation, Llama3 8B is your go-to choice. Think real-time chatbots, quick responses, or summarizing short texts.

- For complex tasks: Llama3 70B is the champion for demanding tasks like generating lengthy creative content, translating large texts, or performing in-depth code analysis.

Optimizing for the RTX A6000 48GB

- Quantization: Quantization (such as Q4_K_M) reduces the memory footprint and speeds up generation. On this card it is essential for Llama3 70B, which only fits in 48 GB when quantized. It can cause some accuracy degradation, but that is usually a worthwhile trade-off.

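- Batch Size: Experiment with different batch sizes to balance throughput against memory usage. Larger batches can speed up prompt processing and let you serve several requests at once, but they consume more GPU memory, which is scarce once a 70B model is loaded.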

- Model Loading: Keep the model loaded in GPU memory between requests. Loading tens of gigabytes of weights on demand adds significant latency to every cold start.
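Memory pressure from batching comes largely from the key/value (KV) cache, which stores attention state for every token in context. Each concurrent sequence needs its own cache. The sketch below estimates its size. The layer counts, 8 KV heads, and head dimension of 128 are taken from the published Llama 3 model configurations and should be treated as assumptions, as should the 16-bit cache (`bytes_per_value=2`). `kv_cache_gib` is a hypothetical helper.

```python
# Assumed architecture values from the published Llama 3 configs: both sizes use
# grouped-query attention with 8 KV heads of dimension 128.
ARCH = {
    "Llama3 8B": {"layers": 32, "kv_heads": 8, "head_dim": 128},
    "Llama3 70B": {"layers": 80, "kv_heads": 8, "head_dim": 128},
}


def kv_cache_gib(model: str, context_tokens: int, sequences: int = 1,
                 bytes_per_value: int = 2) -> float:
    """KV cache size in GiB: keys + values for every layer, token, and sequence."""
    a = ARCH[model]
    # factor 2 = one key vector and one value vector per KV head per layer
    per_token = 2 * a["layers"] * a["kv_heads"] * a["head_dim"] * bytes_per_value
    return per_token * context_tokens * sequences / 2**30


for seqs in (1, 2, 4):
    print(f"Llama3 70B, 8192-token context, {seqs} sequence(s): "
          f"{kv_cache_gib('Llama3 70B', 8192, seqs):.2f} GiB")
```

At about 2.5 GiB per 8192-token sequence for the 70B model, and with roughly 40 GB already taken by Q4_K_M weights, a 48 GB card has room for only a handful of concurrent long-context sequences.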

FAQ: LLM Models and Devices

What are LLMs?

LLMs are large neural networks trained on massive datasets of text and code. They excel at understanding and generating human-like text, offering a wide range of applications like chatbots, text summarization, and creative writing.

What is quantization?

Quantization is a technique used to reduce the size of a model by using less precise numbers to represent the model's parameters. Imagine shrinking a high-resolution image to a smaller size. Quantization does something similar with the model's data, making it easier to store and faster to process.
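To make the idea concrete, here is a toy symmetric 4-bit quantizer for a single block of weights: it stores one floating-point scale per block and rounds each weight to an integer in [-8, 7]. This illustrates the principle only; the real Q4_K_M format from the llama.cpp/GGUF ecosystem uses a more elaborate block structure with its own quantized scales. `quantize_4bit` and `dequantize_4bit` are hypothetical helpers, and the weights are made-up sample values.

```python
from typing import List, Tuple


def quantize_4bit(block: List[float]) -> Tuple[float, List[int]]:
    """Symmetric 4-bit quantization of one block: one float scale + ints in [-8, 7]."""
    max_abs = max(abs(x) for x in block)
    scale = max_abs / 7 if max_abs > 0 else 1.0  # largest magnitude maps to +/-7
    q = [max(-8, min(7, round(x / scale))) for x in block]
    return scale, q


def dequantize_4bit(scale: float, q: List[int]) -> List[float]:
    """Reconstruct approximate weights from the scale and 4-bit integers."""
    return [scale * v for v in q]


weights = [0.12, -0.53, 0.07, 0.91, -0.26, 0.44, -0.88, 0.02]  # sample values
scale, q = quantize_4bit(weights)
restored = dequantize_4bit(scale, q)
print(q)
print([round(r, 3) for r in restored])
```

The eight weights now take 8 × 4 bits plus one scale instead of 8 × 16 bits, and each restored value differs from the original by at most half a scale step.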

What is the NVIDIA RTX A6000 48GB?

The NVIDIA RTX A6000 48GB is a professional graphics card designed for demanding workloads like machine learning, scientific simulation, and graphics rendering. Its 48 GB of memory is generous for a single card: enough to run Llama3 70B locally once the model is quantized (for example, to Q4_K_M).

Can I run Llama3 70B on my laptop?

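It's possible, but demanding. Even with Q4_K_M quantization, the 70B model's weights take roughly 40 GB, more memory than most laptop GPUs offer. Inference tools that support partial GPU offloading can keep some layers in system RAM and run them on the CPU, but this slows generation considerably compared with a desktop card like the RTX A6000.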

Why are there different precisions like F16 and Q4_K_M?

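Precision refers to how many bits are used to store each of the model's parameters. F16 (half-precision floating point) uses 16 bits per weight, half the memory of full 32-bit precision, with little loss of accuracy. Q4_K_M is a 4-bit quantization format that averages roughly 4 to 5 bits per weight, making the model much smaller and faster to run at the cost of a larger impact on accuracy. Finding the right balance between speed, memory, and accuracy is crucial.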

Keywords:

Llama3, NVIDIA RTX A6000 48GB, LLM, performance, token generation speed, quantization, Q4_K_M, F16, batch size, use cases, optimization, practical guide, local models, deep dive, GPU, processing speed, model size, text generation, development, AI, developer, geek.