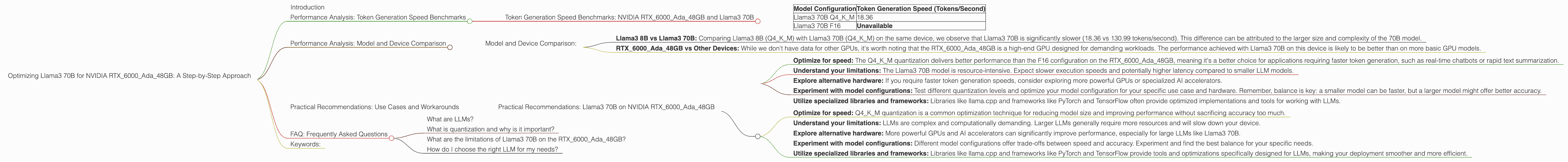

Optimizing Llama3 70B for NVIDIA RTX 6000 Ada 48GB: A Step by Step Approach

Introduction

The world of large language models (LLMs) is expanding rapidly, with new models like Llama3 70B pushing the boundaries of what's possible. But running these models locally can be a challenge, especially on hardware with limited memory. In this article, we'll take a close look at running Llama3 70B on the NVIDIA RTX 6000 Ada 48GB, a workstation GPU commonly used for AI development. We'll explore performance benchmarks, compare different model configurations, and provide practical recommendations to get the most out of your hardware.

Performance Analysis: Token Generation Speed Benchmarks

Token Generation Speed Benchmarks: NVIDIA RTX 6000 Ada 48GB and Llama3 70B

Token generation speed is a critical factor in determining the performance of LLMs. It measures how many new tokens (words or sub-words) the model produces per second. We'll analyze the token generation speed of Llama3 70B on the NVIDIA RTX 6000 Ada 48GB, focusing on two commonly used weight formats: Q4_K_M and F16.

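Quantization is a technique that reduces the size of a model by representing its weights with fewer bits. Q4_K_M stores weights at roughly 4 bits each, trading a small amount of accuracy for a much smaller memory footprint. F16 keeps the weights in 16-bit floating point, which preserves accuracy but needs several times as much memory.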
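To make the size difference concrete, here is a minimal sketch that estimates the memory taken by the weights alone. The 70-billion parameter count is taken from the model name, and the figure of about 4.8 bits per weight for Q4_K_M is an assumed average rather than a number from the benchmark data. The estimate ignores the KV cache and activations, so real usage will be higher.

```python
def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in gigabytes (10^9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


N_PARAMS = 70e9  # nominal parameter count, taken from the "70B" name
VRAM_GB = 48     # memory capacity of the RTX 6000 Ada

# Bits per weight: 16 for F16. The 4.8 for Q4_K_M is an assumed average.
FORMATS = {"F16": 16.0, "Q4_K_M": 4.8}

sizes = {name: weight_memory_gb(N_PARAMS, bpw) for name, bpw in FORMATS.items()}

for name, size in sizes.items():
    verdict = "fits" if size < VRAM_GB else "does not fit"
    print(f"{name}: ~{size:.0f} GB of weights, {verdict} in {VRAM_GB} GB")
```

Under these assumptions the F16 weights come to about 140 GB, while the Q4_K_M weights come to about 42 GB and leave a few gigabytes of headroom on a 48 GB card.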

| Model Configuration | Token Generation Speed (Tokens/Second) |
|---|---|
| Llama3 70B Q4_K_M | 18.36 |
| Llama3 70B F16 | Unavailable |

Key Observation: The Q4_K_M configuration of Llama3 70B achieves a token generation speed of 18.36 tokens per second on the RTX 6000 Ada 48GB. Unfortunately, data for the F16 configuration is unavailable.

Why this matters: With Q4_K_M, the model generates roughly 18 tokens every second on this GPU, which is fast enough for interactive use. The missing F16 result most likely reflects a memory limit rather than only a gap in testing: at 16 bits (2 bytes) per weight, the weights of a 70-billion-parameter model occupy about 140 GB, far more than the card's 48 GB of VRAM.
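To translate the benchmark figure into something more tangible, the sketch below converts tokens per second into the time needed to generate a response. The response lengths are hypothetical examples, and the calculation covers generation only, not the time spent processing the prompt.

```python
def generation_time_s(n_tokens: int, tokens_per_second: float) -> float:
    """Seconds needed to generate n_tokens at a steady generation speed."""
    return n_tokens / tokens_per_second


Q4_K_M_SPEED = 18.36  # tokens/second, from the benchmark table

# Hypothetical response lengths, chosen only for illustration.
for n_tokens in (100, 500, 1000):
    seconds = generation_time_s(n_tokens, Q4_K_M_SPEED)
    print(f"{n_tokens:>5} tokens: about {seconds:.1f} s")
```

At this speed a 500-token answer takes a little under half a minute, which is worth keeping in mind when judging whether the setup suits a latency-sensitive application.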

Performance Analysis: Model and Device Comparison

Model and Device Comparison:

Comparing this data to other LLMs and devices helps us understand where Llama3 70B on the RTX 6000 Ada 48GB stands in the performance landscape.

Remember, this comparison is focused on the specific configurations we're analyzing and the limitations of available data.

Llama3 8B vs Llama3 70B: Comparing Llama3 8B (Q4_K_M) with Llama3 70B (Q4_K_M) on the same device, we observe that Llama3 70B is significantly slower (18.36 vs 130.99 tokens/second), a slowdown of roughly 7x. This difference can be attributed to the larger size of the 70B model: every generated token requires reading and computing over many more weights.
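The sketch below puts the two measurements side by side with the nominal model sizes. The parameter counts (8 and 70 billion) are read off the model names and are therefore approximations, not exact figures.

```python
SPEED_8B = 130.99   # tokens/second, Llama3 8B Q4_K_M (from the comparison)
SPEED_70B = 18.36   # tokens/second, Llama3 70B Q4_K_M (from the comparison)

# Nominal sizes in billions of parameters, taken from the model names.
PARAMS_8B = 8
PARAMS_70B = 70

slowdown = SPEED_8B / SPEED_70B        # how many times slower the 70B model is
param_ratio = PARAMS_70B / PARAMS_8B   # how many times larger the 70B model is

print(f"Speed ratio (8B / 70B):     {slowdown:.2f}x")
print(f"Parameter ratio (70B / 8B): {param_ratio:.2f}x")
```

The two ratios are of the same order, which supports the reading that generation speed on this GPU falls roughly in proportion to model size.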

RTX 6000 Ada 48GB vs Other Devices: While we don't have data for other GPUs, it's worth noting that the RTX 6000 Ada 48GB is a high-end GPU designed for demanding workloads. The performance achieved with Llama3 70B on this device is likely to be better than on lower-end GPUs, many of which lack the memory to hold the model at all.

Practical Recommendations: Use Cases and Workarounds

Practical Recommendations: Llama3 70B on NVIDIA RTX 6000 Ada 48GB

Based on the performance analysis, here are some practical recommendations for using Llama3 70B on the RTX 6000 Ada 48GB:

Optimize for speed: Q4_K_M is the only configuration with a measured result on the RTX 6000 Ada 48GB (18.36 tokens per second); no F16 figure is available for comparison. That makes Q4_K_M the practical choice for applications that need responsive token generation, such as real-time chatbots or rapid text summarization.

Understand your limitations: The Llama3 70B model is resource-intensive. Expect slower execution speeds and potentially higher latency compared to smaller LLMs.

Explore alternative hardware: If you require faster token generation speeds, consider exploring more powerful GPUs or specialized AI accelerators.

Experiment with model configurations: Test different quantization levels and optimize your model configuration for your specific use case and hardware. Remember, balance is key: a smaller model can be faster, but a larger model might offer better accuracy.

Utilize specialized libraries and frameworks: Libraries like llama.cpp and frameworks like PyTorch and TensorFlow often provide optimized implementations and tools for working with LLMs.

Let's break down why these recommendations are important:

Optimize for speed: Q4_K_M quantization is a common optimization technique for reducing model size and improving performance at a modest cost in accuracy.

Understand your limitations: LLMs are complex and computationally demanding. Larger LLMs generally require more memory and generate tokens more slowly on the same hardware.

Explore alternative hardware: More powerful GPUs and AI accelerators can significantly improve performance, especially for large LLMs like Llama3 70B.

Experiment with model configurations: Different model configurations offer trade-offs between speed and accuracy. Experiment and find the best balance for your specific needs.

Utilize specialized libraries and frameworks: Libraries like llama.cpp and frameworks like PyTorch and TensorFlow provide tools and optimizations specifically designed for LLMs, making your deployment smoother and more efficient.
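As one way to act on the advice about experimenting with configurations, the sketch below builds command lines for `llama-bench`, the benchmarking tool that ships with llama.cpp. The model file paths are hypothetical, and the flags shown (`-m`, `-ngl`, `-p`, `-n`) should be checked against `llama-bench --help` for your build, since options can change between versions. The script only prints the commands and does not run them.

```python
import shlex


def llama_bench_command(model_path: str, n_gpu_layers: int = 99,
                        n_prompt: int = 512, n_gen: int = 128) -> list[str]:
    """Build an argument list for llama.cpp's llama-bench tool.

    Assumes the binary is named `llama-bench` and is on PATH.
    """
    return [
        "llama-bench",
        "-m", model_path,           # GGUF model file to benchmark
        "-ngl", str(n_gpu_layers),  # number of layers to offload to the GPU
        "-p", str(n_prompt),        # prompt tokens for the prompt-processing test
        "-n", str(n_gen),           # tokens to generate for the generation test
    ]


# Hypothetical file names; substitute the GGUF files you actually have.
MODELS = [
    "models/llama3-70b.Q4_K_M.gguf",
    "models/llama3-8b.Q4_K_M.gguf",
]

commands = [llama_bench_command(path) for path in MODELS]
for cmd in commands:
    print(shlex.join(cmd))
```

Running each printed command on the same machine gives numbers that can be compared directly, because only the model file changes between runs.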

FAQ: Frequently Asked Questions

What are LLMs?

LLMs are powerful AI models trained on massive datasets of text and code. They can generate human-like text, translate languages, write different kinds of creative content, and answer your questions in an informative way.

What is quantization and why is it important?

Quantization is a technique used to reduce the size of models by representing their weights with fewer bits. It allows you to run larger models on devices with limited memory and processing power.
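The idea can be illustrated with a deliberately simplified sketch: a block of weights is stored as small integers plus one shared scale factor, then expanded again when needed. This is not the actual Q4_K_M layout, which is more elaborate; the weight values are made up for the example.

```python
def quantize_block(weights: list[float], bits: int = 4) -> tuple[float, list[int]]:
    """Symmetric quantization: one float scale plus one small integer per weight."""
    q_max = 2 ** (bits - 1) - 1                      # 7 when bits == 4
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / q_max if max_abs > 0 else 1.0  # avoid dividing by zero
    quants = [max(-q_max, min(q_max, round(w / scale))) for w in weights]
    return scale, quants


def dequantize_block(scale: float, quants: list[int]) -> list[float]:
    """Recover approximate weights from the scale and the stored integers."""
    return [scale * q for q in quants]


# Made-up weights, used only to show the round trip.
block = [0.12, -0.53, 0.98, -0.07, 0.31, -0.88, 0.44, 0.05]

scale, quants = quantize_block(block)
restored = dequantize_block(scale, quants)
max_error = max(abs(a - b) for a, b in zip(block, restored))

print("stored integers:", quants)
print(f"shared scale:    {scale:.4f}")
print(f"largest error:   {max_error:.4f}")
```

Each weight now needs only 4 bits plus a share of the scale factor, and the rounding error per weight is bounded by half the scale. That bounded error is the accuracy cost paid for the smaller footprint.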

What are the limitations of Llama3 70B on the RTX 6000 Ada 48GB?

The RTX 6000 Ada 48GB is a powerful GPU, but its 48 GB of memory still constrains a model as large as Llama3 70B. In practice this means running a quantized build such as Q4_K_M and accepting slower token generation and higher latency than smaller models deliver.

How do I choose the right LLM for my needs?

The choice depends on your specific use case. Smaller models are ideal for speed and resource efficiency, while larger models offer better accuracy and capabilities. Consider the balance between model size, performance, and the quality of the results.

Keywords:

LLM, Llama3, Llama3 70B, NVIDIA, RTX 6000 Ada 48GB, GPU, optimization, token generation speed, performance, Q4_K_M, F16, quantization, use cases, recommendations, practical, AI, deep learning, natural language processing, NLP, development, technology, machine learning